PyTorch

3.2K posts

@PyTorch

Tensors and neural networks in Python with strong hardware acceleration. PyTorch is an open source project at the Linux Foundation. #PyTorchFoundation

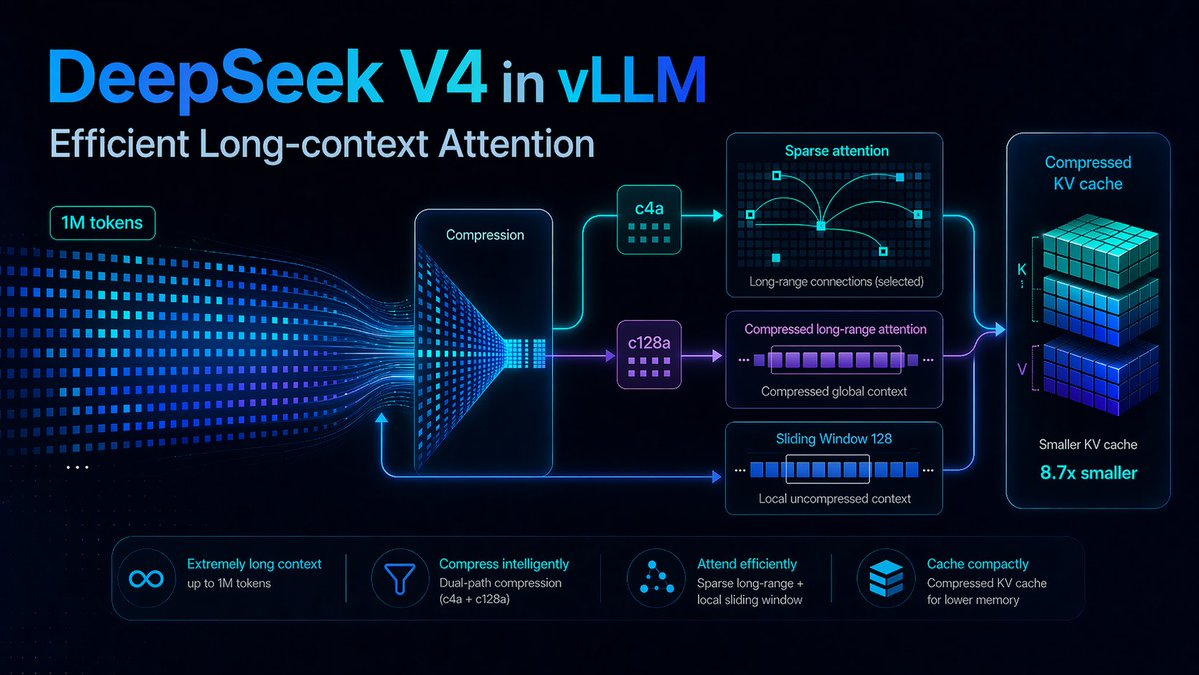

🚀 DeepSeek-V4 Preview is officially live & open-sourced! Welcome to the era of cost-effective 1M context length.

🔹 DeepSeek-V4-Pro: 1.6T total / 49B active params. Performance rivaling the world's top closed-source models.

🔹 DeepSeek-V4-Flash: 284B total / 13B active params. Your fast, efficient, and economical choice.

Try it now at chat.deepseek.com via Expert Mode / Instant Mode. API is updated & available today!

📄 Tech Report: huggingface.co/deepseek-ai/De…

🤗 Open Weights: huggingface.co/collections/de…

1/n

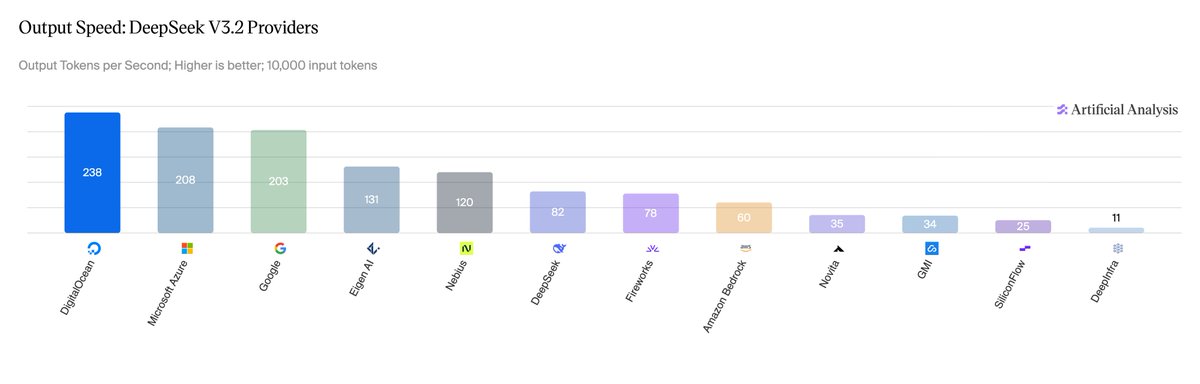

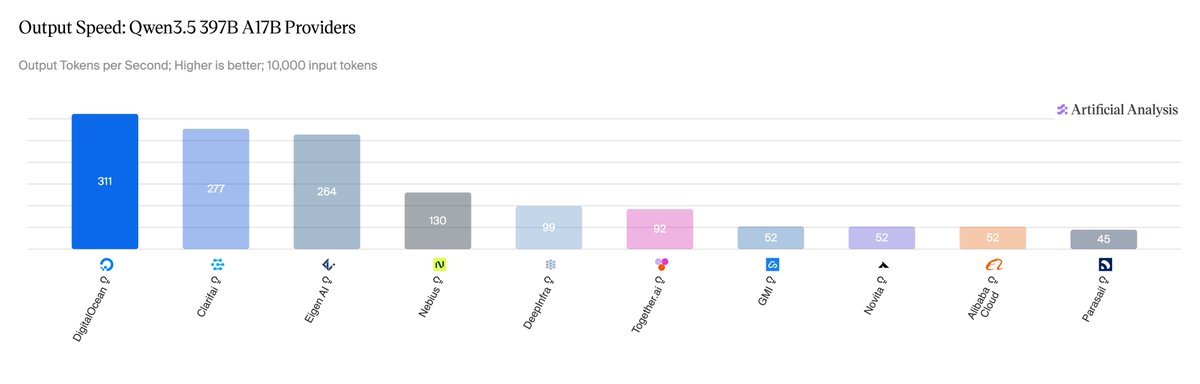

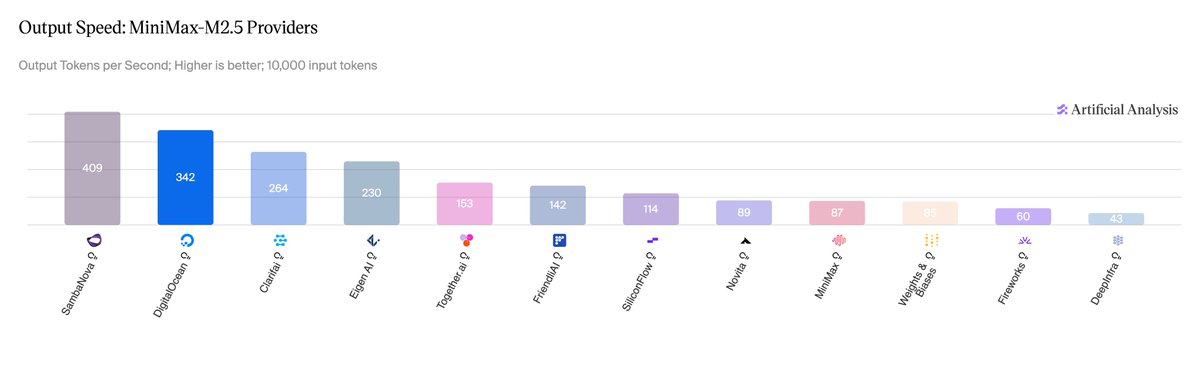

🏆 vLLM powers the fastest inference on NVIDIA Blackwell Ultra on Artificial Analysis.

On @digitalocean's Serverless Inference, powered by vLLM on NVIDIA HGX B300:

🥇 AA #1 output speed for DeepSeek V3.2 (230 tok/s, 0.96s TTFT) and Qwen 3.5 397B

🔧 MiniMax-M2.5: 23% TPOT gain via an EAGLE3 draft model trained on TorchSpec

Co-design highlights:

- NVFP4 quantization on Blackwell Ultra
- EAGLE3 + MTP speculative decoding
- Per-model kernel fusion

Thanks to @digitalocean, @nvidia, and @inferact for the collaboration. Optimizations land back in open-source vLLM.

🔗 digitalocean.com/blog/how-we-bu…

Excited to share our latest blog post on how we're solving real-world LLM inference challenges at production scale, a collaboration between @RedHat_AI and Tesla engineering teams.

We hit the usual pain points: massive model weights choking storage, GPU cycles wasted on naive load balancing, infrastructure that fights you when nodes go down.

Our answer: KServe + @_llm_d_ + @vllm_project with prefix-cache aware routing.

The results: 3x more output tokens/sec and 2x faster time to first token.

Thanks to everyone who contributed to this successful adoption: Scott Cabrinha, Sai Krishna, Sergey Bekkerman, Nati Fridman, Killian Golds, Andres Llausas, Bartosz Majsak, Greg Pereira, Pierangelo Di Pilato, Ran Pollak, Vivek Karunai Kiri Ragavan, Robert Shaw

PyTorch Conference Europe 2026 was one for the books. 2 days in Paris building the future of open source AI together. 100+ session recordings are now live. View the full #PyTorchCon EU playlist: youtube.com/playlist?list=…