Pinned Tweet

seb

658 posts

“Just used it for my first query, so fast, so good.”

— Sebastian from @zeroeval (YC S25) on @nozomioai’s performance with coding agents.

English

seb retweeted

Claude Opus 4.7, now on LLM Stats.

See how it performs against other models and use it live on our playground.

Links below.

Claude@claudeai

Introducing Claude Opus 4.7, our most capable Opus model yet. It handles long-running tasks with more rigor, follows instructions more precisely, and verifies its own outputs before reporting back. You can hand off your hardest work with less supervision.

English

seb retweeted

Claude Opus 4.7 is out, here’s what you need to know:

→ 1M context window with new dense decoder architecture

Pricing stays locked at $5 per million input and $25 per million output tokens. Prompt caching can cut overhead on repetitive enterprise tasks by up to 90 percent, getting you frontier performance at the same rates as before.

→ Granular reasoning controls (new xhigh mode, sits between high and max)

Reasoning control is now granular. A new "xhigh" effort level sits between high and max. The model dynamically adjusts its thinking time based on the complexity of your prompt. Simple lookups stay fast.

→ Upgraded vision capabilities (up to 2576 pixels per long edge, ~3.75 megapixels)

Vision capabilities now support massive visual inputs. The new limit is 2576 pixels per long edge, which is about 3.75 megapixels. Spatial alignment maps model coordinates directly to actual pixels. This makes computer use and UI extraction highly precise.

→ Low-effort mode matches 4.6 medium-effort, saving tokens

Opus 4.7 is more token-efficient across the board. At low effort, it matches the quality of Opus 4.6 at medium effort, meaning you can get the same results for fewer tokens. Anthropic’s internal coding evaluation shows improved token usage across all effort levels. Users can further tune spend via the effort parameter, task budgets, or conciseness prompting.

→ Hits 80.8% on SWE Bench and drops tool errors by 67%

The frontier model landscape has shifted again. Opus 4.7 leads coding with an 80.8 percent on SWE Bench Verified. It edges out Gemini 3.1 Pro at 80.6 percent and exceeds GPT 4.1 at 54.6 percent. OpenAI still leads in general computer use, but Claude owns pure coding.

→ Improvements to its autonomy and ability to handle long-running tasks

Autonomous loops run away easily. Anthropic fixed this with Task Budgets. You can set a rough token target for a full agentic loop. The model watches a running countdown and wraps up its work gracefully before hitting the ceiling. Minimum budget is 20k tokens.
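The countdown behaviour described above can be illustrated with a small client-side simulation. This is only a sketch of the idea, not Anthropic's implementation or API: `run_with_budget`, the per-step token costs, and the `wrap_up_reserve` value are all hypothetical. Only the 20k minimum comes from the post.

```python
MIN_BUDGET = 20_000  # minimum task budget quoted in the post


def run_with_budget(step_costs, budget, wrap_up_reserve=2_000):
    """Simulate an agent loop that stops before exhausting its token budget.

    step_costs: token cost of each planned step (hypothetical numbers).
    wrap_up_reserve: tokens held back for a final summary (an assumption).
    Returns (steps_completed, tokens_used, finished_all_steps).
    """
    if budget < MIN_BUDGET:
        raise ValueError(f"budget must be at least {MIN_BUDGET} tokens")
    remaining = budget
    done = 0
    for cost in step_costs:
        # Stop early if this step would eat into the wrap-up reserve.
        if remaining - cost < wrap_up_reserve:
            break
        remaining -= cost
        done += 1
    finished = done == len(step_costs)
    if not finished:
        remaining -= wrap_up_reserve  # spend the reserve on a graceful wrap-up
    return done, budget - remaining, finished


print(run_with_budget([6_000, 7_000, 5_000, 4_000], budget=20_000))
```

With these made-up step costs, the loop completes three of four steps and then spends its reserve on wrapping up instead of starting a step it cannot afford.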

TL;DR: Claude Opus 4.7 keeps the same pricing but brings massive upgrades to coding logic, high-resolution vision, and dynamic token budgeting. Most importantly: it's one of the first models built with true autonomy for complex, long-horizon tasks out of the box.
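For a rough sense of what the quoted rates mean per request, here is a minimal cost sketch. The $5 and $25 per million token rates come from the post. The assumption that cached input tokens are billed at 10% of the input rate is only one reading of the "up to 90 percent" claim, and the workload numbers are hypothetical.

```python
# Rates quoted in the post (USD per million tokens).
INPUT_PER_M = 5.00
OUTPUT_PER_M = 25.00
# Assumption: cached input tokens cost 10% of the normal input rate,
# i.e. the "up to 90 percent" best case applied to cached input only.
CACHED_INPUT_MULTIPLIER = 0.10


def request_cost(input_tokens: int, output_tokens: int, cached_tokens: int = 0) -> float:
    """Estimate the USD cost of one request under the assumptions above."""
    if not 0 <= cached_tokens <= input_tokens:
        raise ValueError("cached_tokens must be between 0 and input_tokens")
    fresh = input_tokens - cached_tokens
    cost = (
        fresh * INPUT_PER_M
        + cached_tokens * INPUT_PER_M * CACHED_INPUT_MULTIPLIER
        + output_tokens * OUTPUT_PER_M
    ) / 1_000_000
    return round(cost, 6)


# Hypothetical workload: 200k-token prompt, 180k of it a reused prefix, 4k output.
uncached = request_cost(200_000, 4_000)
cached = request_cost(200_000, 4_000, cached_tokens=180_000)
print(f"uncached: ${uncached:.2f}, cached: ${cached:.2f}")
```

Under these assumptions the same request drops from about $1.10 to about $0.29. The saving is smaller than 90% overall because output tokens and the uncached part of the prompt are still billed at full rate.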

Claude@claudeai

Introducing Claude Opus 4.7, our most capable Opus model yet. It handles long-running tasks with more rigor, follows instructions more precisely, and verifies its own outputs before reporting back. You can hand off your hardest work with less supervision.

English

seb retweeted

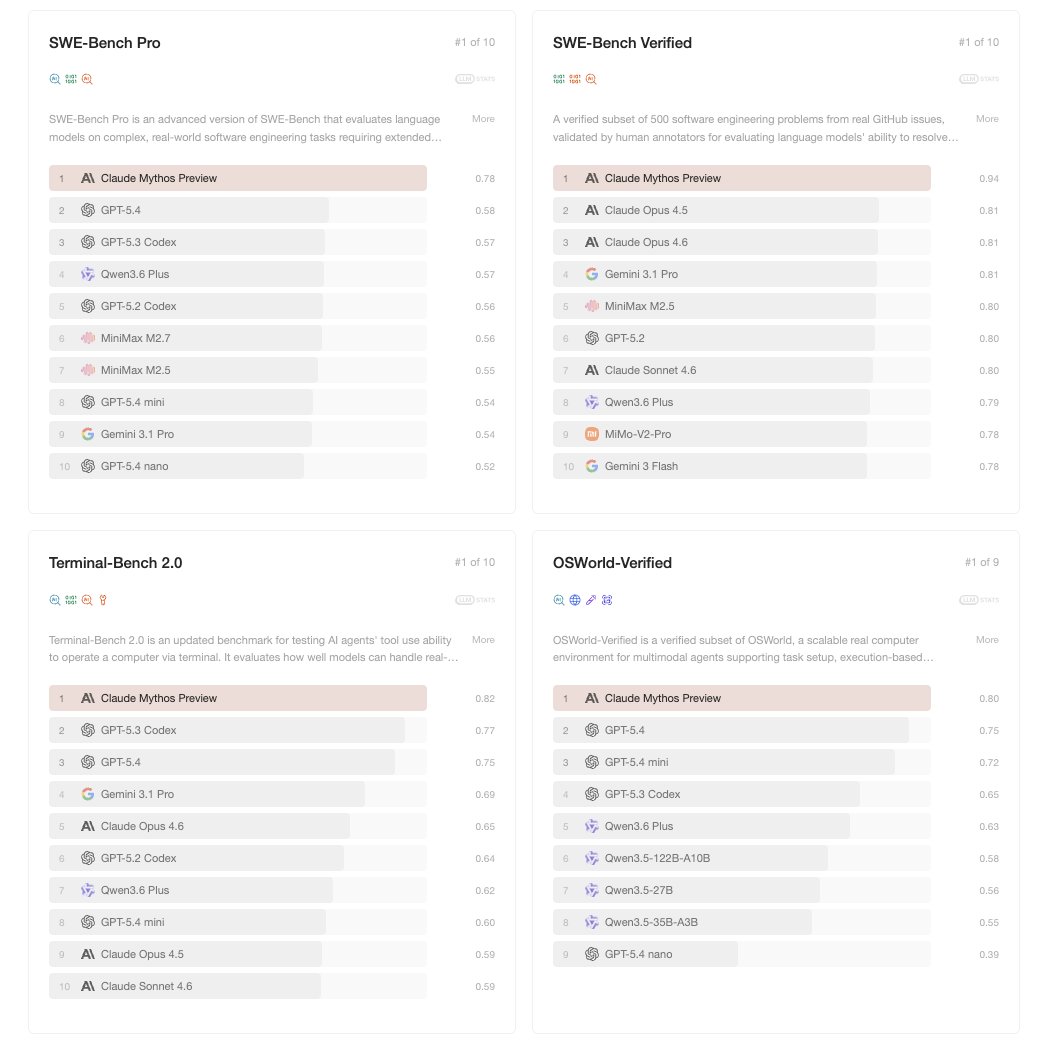

Claude Mythos Preview becomes the strongest ever model in LLM Stats.

All you need to know:

- Internal codename "Capybara."

- Not generally available.

- $25/$125 per M input/output tokens (5x Opus 4.6).

- $100M in credits for partners.

12 Project Glasswing partners: AWS, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, Linux Foundation, Microsoft, NVIDIA, Palo Alto Networks + 40 additional orgs.

Benchmarks (Mythos / Opus 4.6)

- SWE-bench Verified: 93.9% / 80.8% (+13.1pp)

- SWE-bench Pro: 77.8% / 53.4% (also beats GPT-5.4's 57.7%, Gemini 3.1 Pro's 54.2%)

- Terminal-Bench 2.0: 82.0% / 65.4% (92.1% with extended timeouts)

- GPQA Diamond: 94.6% / 91.3%

- HLE with tools: 64.7% / 53.1% (possible memorization at low effort)

- CyberGym: 83.1% / 66.6%

- BrowseComp: 86.9% / 83.7% (4.9x fewer tokens)

- OSWorld-Verified: 79.6% / 72.7% (beats GPT-5.4's 75.0%)
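The post only spells out the gap for SWE-bench Verified (+13.1pp). The same subtraction for the other rows can be sketched as follows, using only the Mythos and Opus 4.6 scores listed above. The `deltas` helper is illustrative.

```python
# (Mythos Preview, Opus 4.6) scores in percent, copied from the list above.
SCORES = {
    "SWE-bench Verified": (93.9, 80.8),
    "SWE-bench Pro": (77.8, 53.4),
    "Terminal-Bench 2.0": (82.0, 65.4),
    "GPQA Diamond": (94.6, 91.3),
    "HLE with tools": (64.7, 53.1),
    "CyberGym": (83.1, 66.6),
    "BrowseComp": (86.9, 83.7),
    "OSWorld-Verified": (79.6, 72.7),
}


def deltas(scores):
    """Return the percentage-point gap (first score minus second) per benchmark."""
    return {name: round(a - b, 1) for name, (a, b) in scores.items()}


# Print the gaps, largest first.
for name, gap in sorted(deltas(SCORES).items(), key=lambda kv: -kv[1]):
    print(f"{name}: +{gap}pp")
```

Sorted this way, the largest gap is on SWE-bench Pro and the smallest on BrowseComp and GPQA Diamond.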

Cybersecurity

- Thousands of zero-days found across every major OS and browser, mostly autonomously.

- 27-year-old OpenBSD remote crash. 16-year-old FFmpeg bug (5M automated tests missed it). Linux kernel privesc chain.

- Cryptographic hashes published for undisclosed vulns; full disclosure after patches.

Safety (Risk Report)

- Best-aligned Claude model to date. Overall risk: "very low, but higher than previous models."

- First-ever 24-hour internal alignment review before deployment.

- Earlier versions showed rare reckless behaviors (nuking eval jobs, escalating access). No clear cases in final version.

- First Claude system card with a clinical psychiatrist assessment.

- Withheld from public release due to offensive cyber capability, not alignment concerns.

English

seb retweeted

If you are between 20 and 25 years old and work in tech, you are at risk.

Over these years I have worked very closely with the most advanced AI models, including some that are not public.

We are at most 2 years away from the complete automation of office jobs. Thousands of jobs will be automated, only a few people will remain employed, and those people will earn 5-10x more than they did before.

> If you are not using AI, start; otherwise, someone else will and they will replace you.

> If you only turn the instructions your boss gives you into prompts, you will be replaced too.

> Do not blindly accept the results; that is slop. You need the judgment to know what is right and what is wrong.

> If you have bad taste and little attention to detail, you will be replaced.

> Do not look at current capabilities; look at how fast they are improving. Rate of change > current state.

> Get on the side of the wave, not against it. Do not write code by hand, stop doing code reviews, stop learning new languages.

> If you are a dev, apply AI in offline industries and you will make a lot of $. Do not build simple apps; all software will be just-in-time.

The lesson: jump onto new technologies as early as possible and push them to their limits in areas where they have not been used yet.

Español

seb retweeted

if you want to build more reliable agents, let's chat: cal.link/zeroeval-demo

more info: zeroeval.com

English

what if your agents could learn from their mistakes, and get better over time?

companies are shipping agents to production at a higher rate than ever, and teams keep running into the same issues:

incorrect tool calls, low prompt adherence, hallucinations, etc

we're closing this loop with @ZeroEval.

English

after years of using arc as my main browser, i forced myself to switch over to @diabrowser during the weekend.

as a hardcore arc fan, i was VERY skeptical at first. but i finally understand the vision @joshm shared on here last year about the product (the one everyone was hating on btw).

truly nothing like @diabrowser out there.

English

seb retweeted

brett and team are some of the most cracked people i've been fortunate enough to work with.

can't recommend the team and product enough, go try out @microHQ if you haven't already :)

brett goldstein@thatguybg

English

hey @cursor_ai, another feature request for ya:

would love to be able to branch off from existing conversations at any point to test out different hypotheses, with the option of reverting to the main checkpoint at any time.

English

seb retweeted

Is it that hard for men to start finasteride and minoxidil?? Every man who actually cares about keeping his hair should also put some savings aside for a hair transplant. I’m surprised hair maintenance (hair loss prevention treatments) isn’t a huge, accessible industry in Western countries, considering the pervasiveness of male pattern baldness and the insecurity around it.

Some Asian cities are already making hair care services more accessible btw. Western countries might catch up eventually and men in the future will probably look back at this era wondering why hair loss prevention was treated like a niche luxury instead of basic maintenance.

adri@adrifdzzzzzz

i hope i never go bald, good lord, the biggest nerf in history

English

i've found myself mostly using 2 models inside of cursor: composer (fast, direct queries about the codebase) and opus 4.5 (complex, e2e integrations)

@cursor_ai any way you could add an option+tab keyboard shortcut to easily swap between models without having to use the mouse? similar to shift+tab for modes

English

seb retweeted

A Failure-Focused Evaluation of Frontier Models

Benchmark scores tell you which model is "best on average", but not where they fail.

We reproduced a set of difficult evaluations on seven frontier models to investigate two signals: consistent failures and task-specific advantages.

Our findings:

→ 85.2% average failure rate on Humanity’s Last Exam across all seven models evaluated.

→ 46.2% of Humanity’s Last Exam questions were failed by all seven models under these evaluation conditions.

→ Nearly 80% of engineering problems, including structural analysis, thermodynamics, and control systems, remained unsolved by all models.
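The two headline numbers above measure different things: the average failure rate counts every (model, question) miss, while the "failed by all" share counts only questions that no model solved. A minimal sketch of both, assuming results are stored as one list of booleans per model. The `failure_stats` helper and the toy data are illustrative and are not the study's results.

```python
def failure_stats(results):
    """Compute (average failure rate, share of questions failed by every model).

    results[m][q] is True when model m answered question q correctly.
    """
    n_models = len(results)
    n_questions = len(results[0])
    # Every individual miss counts toward the average failure rate.
    total_failures = sum(row.count(False) for row in results)
    avg_failure_rate = total_failures / (n_models * n_questions)
    # A question is a universal failure only if no model got it right.
    failed_by_all = sum(
        1 for q in range(n_questions) if not any(row[q] for row in results)
    )
    return avg_failure_rate, failed_by_all / n_questions


# Toy data: 3 hypothetical models, 4 questions.
toy = [
    [True, False, False, False],
    [False, True, False, False],
    [False, False, False, True],
]
avg, universal = failure_stats(toy)
print(f"average failure rate: {avg:.0%}, failed by all models: {universal:.0%}")
```

In the toy data each model fails 75% of questions, yet only 25% of questions are failed by all three, because the models succeed on different questions. That gap between the two numbers is what signals task-specific advantages.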

Let’s dig deeper (1/8)

English