Pinned Tweet

Chen Feng

72 posts

@simbaforrest

Institute Associate Professor at NYU | Co-Director of NYU CREO | Amazon Scholar @Amazon FAR

Manhattan, NY · Joined March 2011

197 Following · 751 Followers

Chen Feng retweeted

We are hiring Summer 2026 Research Interns at @amazon Frontier AI & Robotics (FAR) to work on open-world navigation in the era of robot foundation models and Internet-scale data! We are especially interested in candidates with strengths in robotic navigation, multi-modal foundation models, reasoning and agency, neural rendering, or real-world evaluation.

If interested, please email me (ystian@amazon.com) and @simbaforrest (nycfeng@amazon.com) with the following subject line: [FAR Intern - Navigation] Your full name + School, attach your CV and your maximum availability window, along with a brief note about your background and interests.

@amazon If excited, please email both @YunshengTian (ystian@amazon.com) and me (nycfeng@amazon.com) with the subject line: [FAR Intern - Navigation] Your full name + School.

Do attach your CV and your maximum availability window, and a brief note about your background and interests.

@amazon Interns will work closely with @YunshengTian and me, as well as @maojiayuan, @GuanyaShi, Karen Liu, @rocky_duan, @peterxichen, and so many outstanding researchers and engineers at FAR!

Chen Feng retweeted

EgoPush

A learning framework that enables mobile robots to perform long-horizon multi-object rearrangement using only egocentric vision, with no global maps or external tracking needed. It uses object-centric latent states and a privileged RL teacher distilled into a visual student, with zero-shot sim-to-real transfer.

Also, many thanks to the 8x RTX 6000 Ada GPUs awarded to us in 2024 by the awesome @nvidia Academic Grant Program (#NVIDIAGrant @NVIDIAAIDev)!

To advance embodied AI, we need more high-quality photo-realistic and geometry-realistic simulation environments, especially for reproducible closed-loop evaluation. Wanderland is our first step to address this urgent need. Join us in this open-source effort and scan more places!

Xinhao Liu@xinhao6iu

🌍 Is YouTube + VGGT + 3DGS enough for simulative environments? 🧩 What’s missing in Real2Sim in 2026? 🔁 How do we evaluate embodied agents in a reliable closed-loop? 🔮 Meet Wanderland: a Real2Sim framework, benchmark, dataset, and environment for mobile agents! [1/n]

Chen Feng retweeted

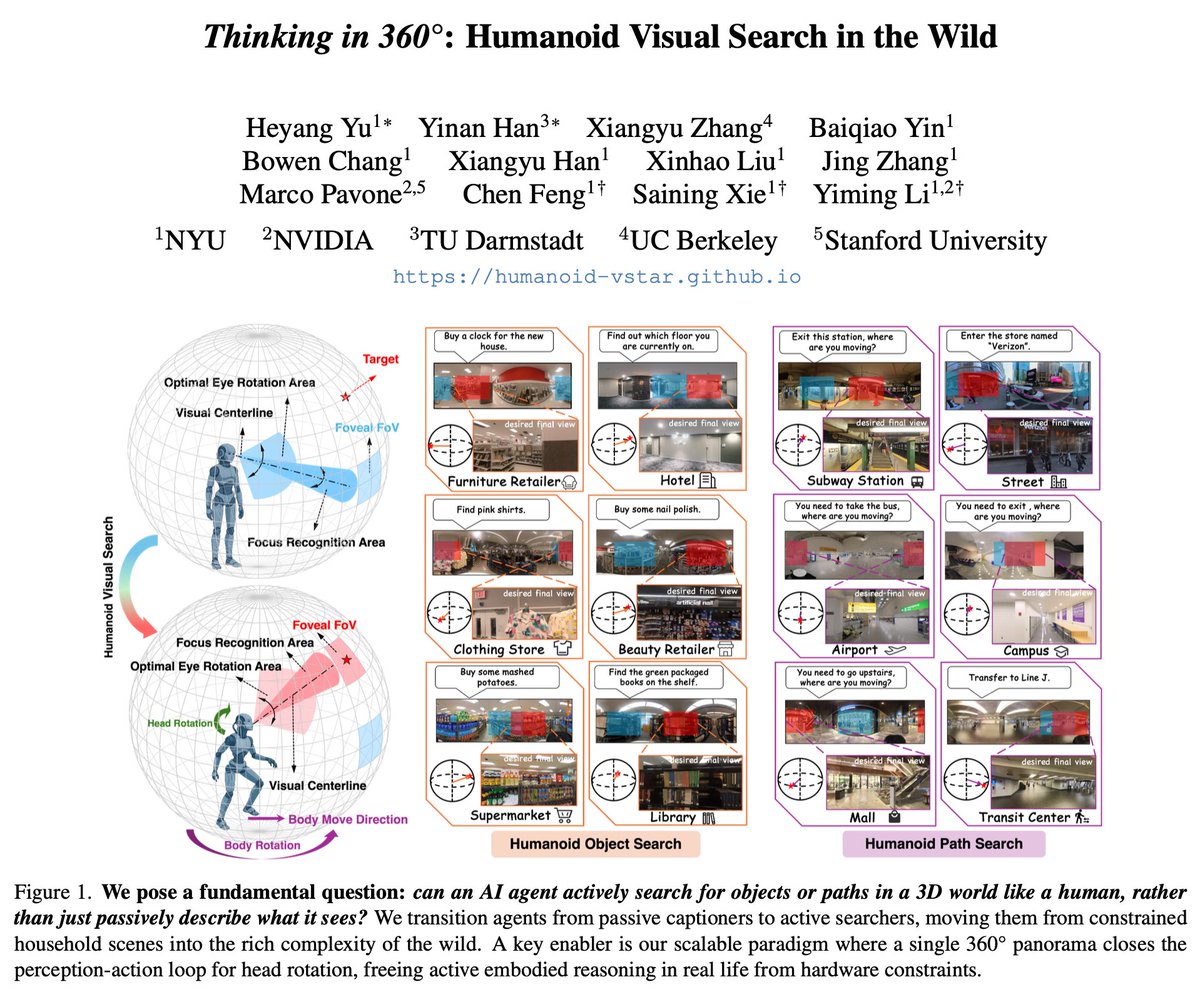

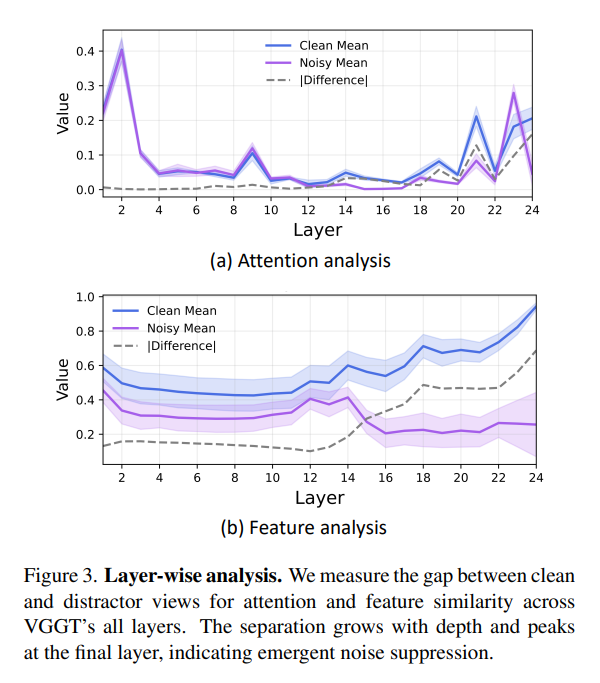

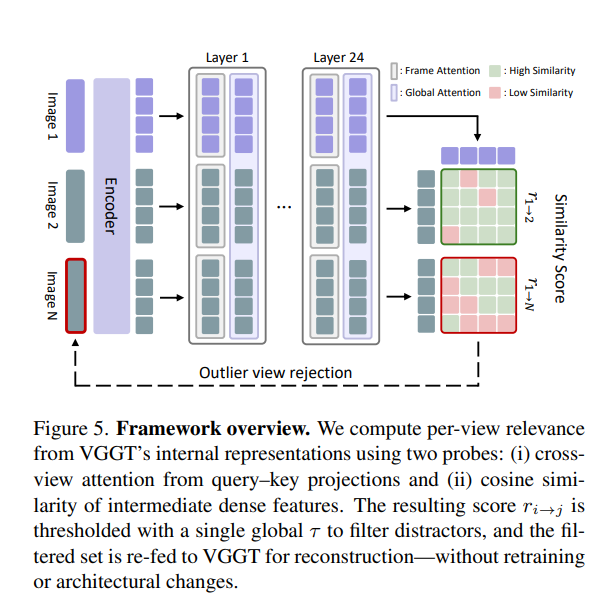

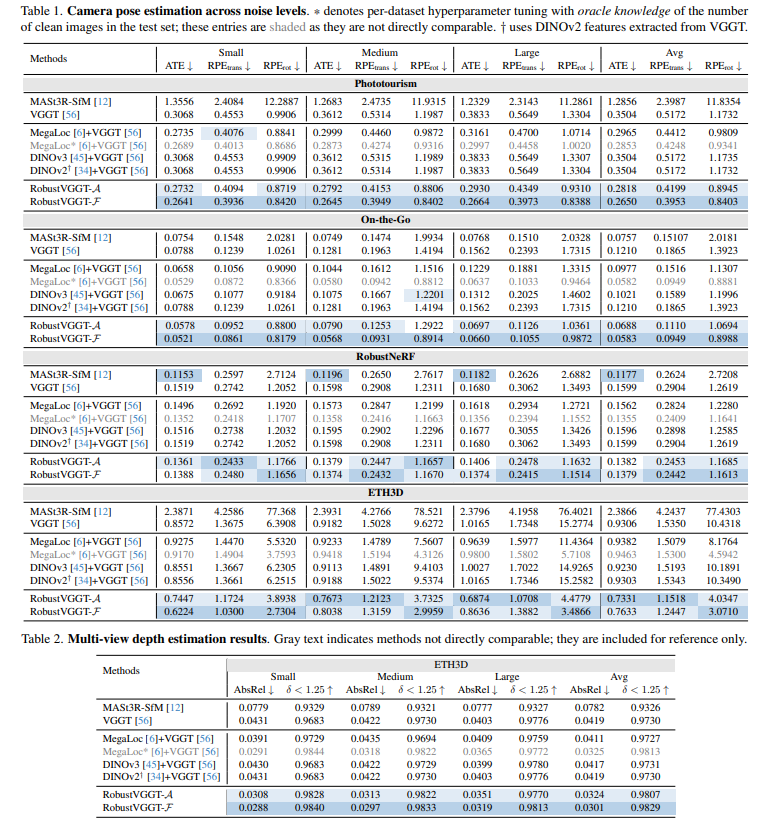

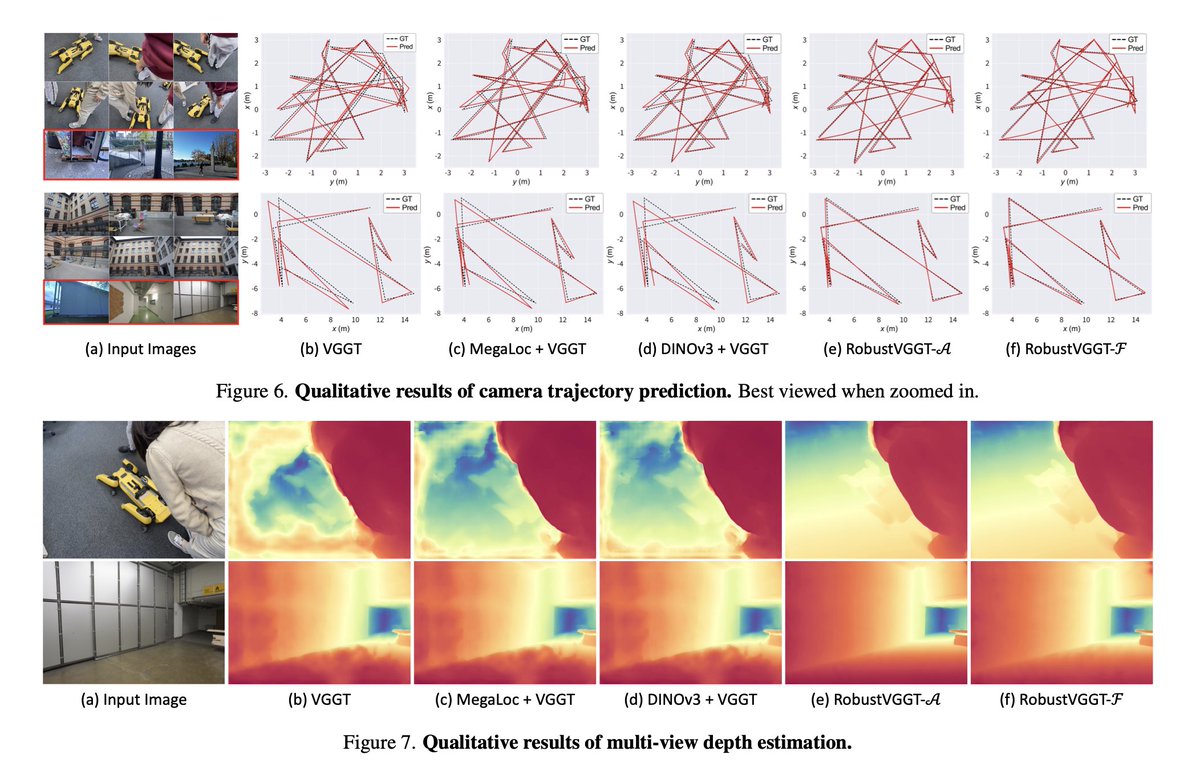

Emergent Outlier View Rejection in Visual Geometry Grounded Transformers

Jisang Han, Sunghwan Hong, @jwjung2317, Wooseok Jang, Honggyu An, @QianqianWang5, Seungryong Kim, @simbaforrest

tl;dr: threshold internal attention and feature signals

arxiv.org/abs/2512.04012

@chrisoffner3d @ducha_aiki @jwjung2317 @QianqianWang5 It should. Depending on how much view of such frames overlaps with other frames, the fixed threshold might need to be adjusted. If no overlaps (i.e. no covisibility), then it should work out of the box. Worth trying more, for sure!

@ducha_aiki @jwjung2317 @QianqianWang5 @simbaforrest Does this work with “distractor” images that aren’t from a totally different environment?

The interesting practical use case would be to filter images from the same sequence/scene but with low/no covisibility with the rest.

Emergent Outlier View Rejection in Visual Geometry Grounded Transformers

Jisang Han, Sunghwan Hong, @jwjung2317, Wooseok Jang, Honggyu An, @QianqianWang5, Seungryong Kim, @simbaforrest

tl;dr: distractor images have low attention scores in VGGT, exploitable.

arxiv.org/abs/2512.04012
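The thresholding idea in the tl;dr can be illustrated with a minimal sketch. This is not the paper's implementation: the per-view score (mean attention a view receives from the other views), the toy `attn` matrix, the function names, and the fixed `threshold` value are all assumptions made for illustration only.

```python
from typing import List, Sequence


def view_scores(attn: Sequence[Sequence[float]]) -> List[float]:
    """Mean attention each view receives from the other views.

    attn[i][j] is a hypothetical scalar summarizing how much view i
    attends to view j (not an actual VGGT output format).
    """
    n = len(attn)
    scores = []
    for j in range(n):
        received = [attn[i][j] for i in range(n) if i != j]
        scores.append(sum(received) / len(received) if received else 0.0)
    return scores


def keep_inlier_views(attn: Sequence[Sequence[float]], threshold: float) -> List[int]:
    """Return indices of views whose score is at or above a fixed threshold."""
    return [j for j, s in enumerate(view_scores(attn)) if s >= threshold]


# Toy example: views 0-2 attend to each other; view 3 is a distractor
# that receives very little attention from the rest.
attn = [
    [0.00, 0.50, 0.45, 0.05],
    [0.50, 0.00, 0.45, 0.05],
    [0.48, 0.47, 0.00, 0.05],
    [0.30, 0.30, 0.40, 0.00],
]
kept = keep_inlier_views(attn, threshold=0.2)  # hypothetical threshold
```

As the reply above notes, a fixed threshold may need adjusting when the frames to be rejected still partially overlap with the rest of the scene.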

Chen Feng retweeted

🤖Thrilled to announce 𝘮𝘶𝘭𝘵𝘪𝘱𝘭𝘦 faculty and faculty fellow 𝘰𝘱𝘦𝘯𝘪𝘯𝘨𝘴 at NYU's new Center for Robotics and Embodied Intelligence (CREO). Come join us!

apply.interfolio.com/176977

apply.interfolio.com/177077

@nyuniversity @nyutandon

Chen Feng retweeted

🚀Presenting EUVS Benchmark (ai4ce.github.io/EUVS-Benchmark/) at Poster #386 (Thu, afternoon session) at #ICCV2025! 🚗🚙🚕

We introduce a novel, extensive real-world benchmark for quantitatively and qualitatively evaluating extrapolated novel view synthesis in large-scale urban scenes.

Chen Feng retweeted

🚨 We’re thrilled to announce our ICCV 2025 Workshop: MMRAgI – Multi-Modal Reasoning for Agentic Intelligence! 🚨

🌐 Homepage: agent-intelligence.github.io/agent-intellig…

📥 Submit: openreview.net/group?id=thecv…

🗓️ Submission Deadline (Proceeding Track): June 24th 2025 23:59 AoE

🗓️ Submission Deadline (Non-Proceeding Track): July 24th 2025 23:59 AoE

AI Agents are evolving fast — but true intelligence needs reasoning across modalities. Vision, language, audio… it’s time to unify them.

From digital and virtual agents to wearable and physical embodiments, agentic intelligence is reshaping how AI interacts with the world. As agents increasingly engage in 3D perception and geo-centric reasoning, bridging modalities with spatial understanding is more critical than ever.

💡 Join us to explore the frontiers of multi-modal agents:

• Reasoning with MFM-powered agents

• Applications in OS copilots, Scientific Agents, Digital Agents, Virtual Agents, Wearable Agents and Embodied Agents!

• Challenges in alignment, evaluation, efficiency, and robustness

📝 Call for Papers is now OPEN!

📅 Workshop: Oct 19–20 2025

Whether you work on models, methods, or applications — we want to hear from you!

#ICCV2025 #MMRAgI #MultimodalAI #AIagents #LLM #MFM #EmbodiedAI #3DVision

Chen Feng retweeted

A post on indirect costs is making the rounds.

Of all the people who think this is a rip-off, let us play a simple game:

Could you perform the same research with the same amount of money (in direct cost) starting out in your garage?

Think through all the pieces that are needed beyond the core tech: comms, space, equipment, HR, admin, ...

This is one reason why deep tech startups need so much more money and time to even get on par with university labs. And even then they operate under for-profit pressures, which limit the topics they can study and the length of time they have before calling it quits!

Yes, there are pieces of the administration process that could be improved, but by and large American universities have a tremendous impact per dollar on the economy and society.

@elonmusk you have been a role model for a generation of tech folks.

Of all people, we had hoped you would encourage, not dismantle, American research leadership based in non-profit universities.

Much of the deep tech at your companies was and is built by university types!

AI, robotics, energy - all are built on decades of nonprofit science done before the tech was mature enough to even be iterated upon in product-focused settings.

PS: This post is causing anxiety in academic circles!

Note that the 60% is not taken from the net award; it is applied to the total requested direct costs.

A lot of academics tried making nuanced arguments. But changing opinions with informed debate here may be tricky!

Elon Musk@elonmusk

Can you believe that universities with tens of billions in endowments were siphoning off 60% of research award money for “overhead”? What a ripoff!
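The thread's point that the 60% applies to direct costs rather than to the whole award can be made concrete with a short worked calculation. This is a simplified illustration that assumes a flat 60% indirect rate applied to all direct costs $D$; the symbols $D$, $I$, and $T$ are introduced here and do not appear in the posts.

$$
I = 0.6\,D, \qquad T = D + I = 1.6\,D, \qquad \frac{I}{T} = \frac{0.6}{1.6} = 37.5\%
$$

Under that assumption, indirect costs make up 37.5% of the total award, not 60%.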
