Intern Robotics

48 posts

@InternRobotics

Building inclusive infrastructure for Embodied AI, from Shanghai AI Lab. GitHub: https://t.co/vJITgYwCWS Website: https://t.co/bIOl4hc668

🤖Can robots achieve accurate navigation without any external localization feedback? 📸We present #LoGoPlanner, which handles perception, localization, and planning in one go! Check our results on LeKiWi, G1, and Go2 robots. 🌐Project: steinate.github.io/logoplanner.gi…

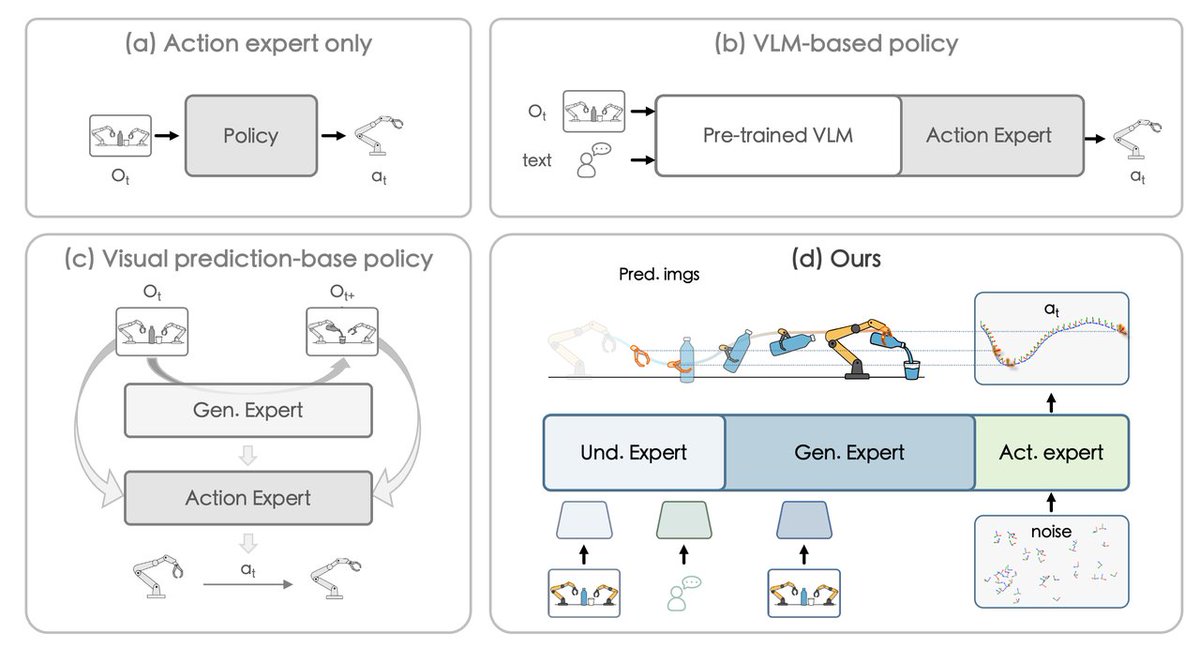

🚀 Introducing G^2VLM: Geometry Grounded Vision Language Model with Unified 3D Reconstruction and Spatial Reasoning. G^2VLM natively predicts 3D attributes (depth, camera pose, pointmaps) and uses them for spatial understanding via interleaved reasoning. 🔧 End-to-End Unified Model ✅ Monocular & Video Depth Estimation ✅ Pose Estimation ✅ 3D Point Reconstruction ✅ Spatial Understanding and Reasoning 🏆 This design helps G^2VLM achieve robust performance in both 3D Reconstruction and Spatial Reasoning! #VLM #spatial #3D #LLM 💻 Code at: github.com/InternRobotics… 👇[1/n]

Introducing Gallant: Voxel Grid-based Humanoid Locomotion and Local-navigation across 3D Constrained Terrains 🤖

Project page: gallantloco.github.io
Arxiv: arxiv.org/abs/2511.14625

Gallant is, to our knowledge, the first system to run a single policy that handles full-space constraints, including ground-level barriers, lateral clutter, and overhead obstacles, on a humanoid robot.

Instead of elevation maps or depth cameras, Gallant uses a voxel grid built directly from raw LiDAR as its perception representation, giving it inherent 3D coverage of the scene. With our custom LiDAR simulation toolkit (github.com/agent-3154/sim…), we model realistic scans, including returns from the robot’s own moving links, which is crucial for sim-to-real transfer.

On the control side, we use a target-based training scheme rather than standard velocity tracking. The robot is given a goal and learns to discover its own in-path velocities and trajectories, so no external high-frequency command stream is needed during deployment.

The policy itself is intentionally lightweight: just a 3-layer CNN + 3-layer MLP (~0.3M params), running onboard on the Unitree G1’s Orin NX at 50 Hz with no extra compute. Training takes about 6 hours on 8× NVIDIA RTX 4090 GPUs. The resulting policy transfers directly to the real robot and achieves >90% success rate on most tested terrain types.

Gallant is our “half-way” step toward robust perceptive locomotion, a problem we believe remains fundamental for humanoid robots. We’re now working toward closing the gap to near-100% reliability and expanding the pipeline further.

Code will be fully released soon. Discussion, feedback, and collaboration are very welcome! 🙌
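To make the voxel-grid idea concrete, here is a minimal sketch of binning LiDAR points into an occupancy grid. It is an illustration only, not Gallant's released code: the function name `voxelize`, the grid origin, the 0.1 m resolution, and the 20×20×20 extent are all assumptions chosen for the example.

```python
import math

def voxelize(points, origin, voxel_size, dims):
    """Bin 3D points into a set of occupied (i, j, k) voxel indices.

    All parameters are hypothetical: `origin` is the grid's minimum corner
    in the robot frame, `voxel_size` is the edge length in meters, and
    `dims` is the number of voxels along x, y, z.
    """
    nx, ny, nz = dims
    occupied = set()
    for x, y, z in points:
        i = math.floor((x - origin[0]) / voxel_size)
        j = math.floor((y - origin[1]) / voxel_size)
        k = math.floor((z - origin[2]) / voxel_size)
        # Points that fall outside the grid extent are discarded.
        if 0 <= i < nx and 0 <= j < ny and 0 <= k < nz:
            occupied.add((i, j, k))
    return occupied

# Made-up points standing in for a LiDAR scan (meters, robot frame).
points = [
    (0.55, 0.05, 0.05),  # ground-level barrier
    (0.35, 0.45, 0.85),  # lateral clutter
    (0.65, 0.05, 1.75),  # overhead obstacle
    (5.00, 0.05, 0.55),  # beyond the grid, dropped
]
grid = voxelize(points, origin=(-1.0, -1.0, 0.0), voxel_size=0.1, dims=(20, 20, 20))
print(sorted(grid))
```

Because the grid keeps the vertical axis, ground-level, lateral, and overhead returns all land in the same representation, which is the property the post contrasts with elevation maps.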

🔥 Join our Challenge on Multimodal Robot Learning in InternUtopia and Real World! 🎮 Tasks: Manipulation & Navigation 🗺️ Each track includes an online qualifier and on-site finals 🧰 Starter kits open now 🥇 Winner prize: $10K 🔗 internrobotics.shlab.org.cn/challenge/2025/ #IROS2025 #Robotics