ModelScope

@ModelScope2022

Driving innovations with open communities.

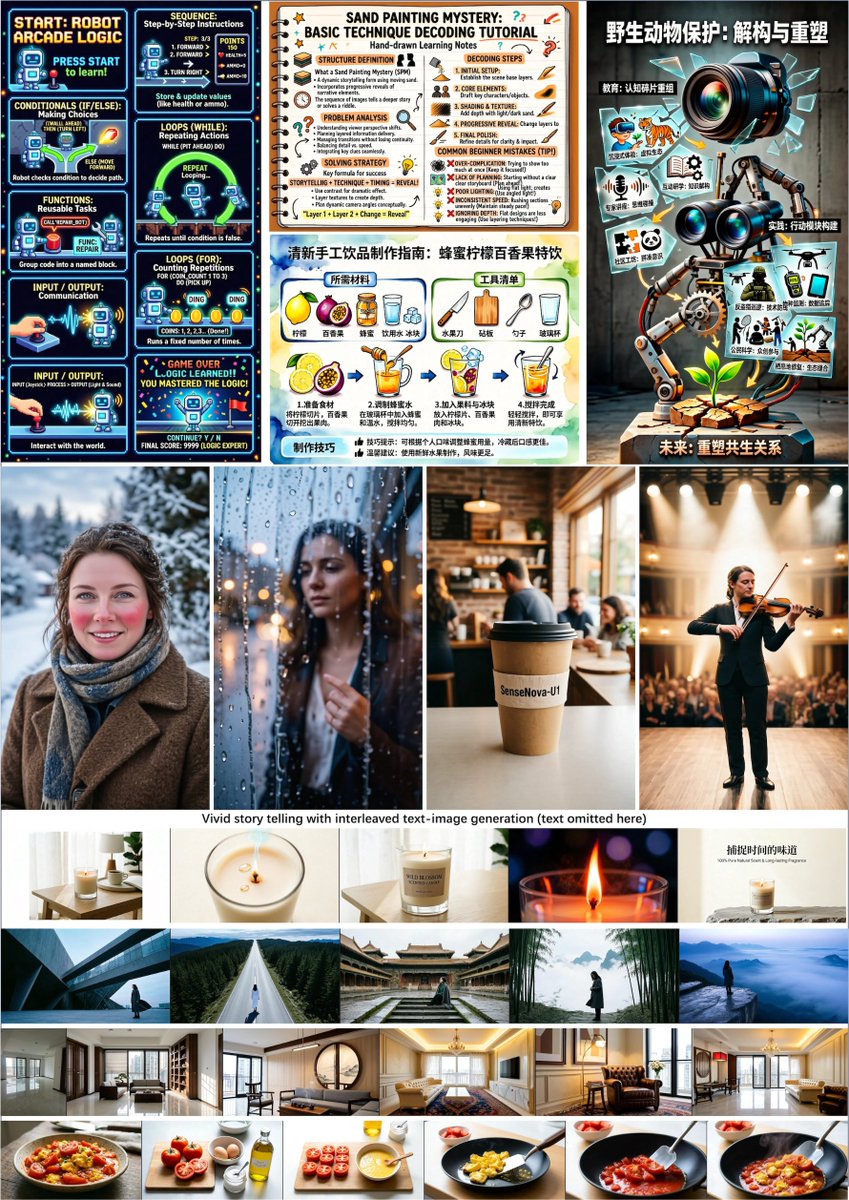

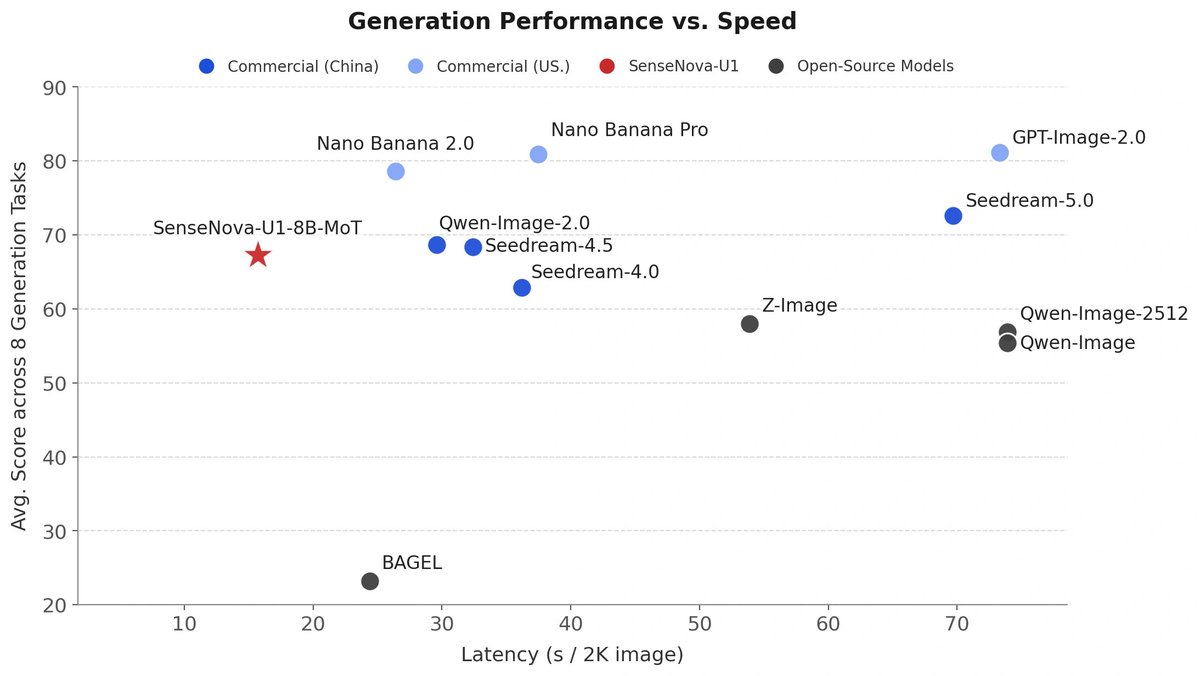

Qwen-Image-2.0-Pro is now live 🚀🚀 We’ve pushed image quality, multilingual text rendering, and instruction following to a new level, while making performance much more consistent across styles. 🌅🌃 Ranked #9 worldwide for Text-to-Image on @arena.

🔗 Try it now on ModelScope: modelscope.ai/studios/Qwen/Q… modelscope.cn/studios/Qwen/Q… API: modelstudio.console.alibabacloud.com/ap-southeast-1…

Introducing MegaStyle: a scalable pipeline for building style transfer datasets that are both intra-style consistent & inter-style diverse. 🚀

📚 MegaStyle-1.4M: 170K style prompts × 400K content prompts, generated via Qwen-Image's T2I style mapping
📊 Dataset: modelscope.cn/datasets/Tence…
🧠 MegaStyle-Encoder: style-supervised contrastive learning for expressive style representations
🎯 MegaStyle-FLUX: FLUX-based style transfer, beats DEADiff, StyleShot, CSGO, InstantStyle & StyleAligned
⚡ Captures color, light, texture & brushwork nuances across styles
📄 Paper: modelscope.cn/papers/2604.08…