Zhenzhen Weng

@JenWeng4

Perception @Waymo. PhD @Stanford. BSCS @CMU 🚀. 💻 Ex-intern @Waymo @Adobe.

🌟 NEW Paper Alert 🌟 👩‍⚖️ MJ-Bench: Is Your Multimodal Reward Model Really a Good Judge for Text-to-Image Generation? (mj-bench.github.io) 🧐 Also wondering which judge model gives the best feedback for your diffusion models? We evaluate multimodal judges in providing feedback for image generation models across four key perspectives: alignment, safety, image quality, and bias. Key findings: 👉 1. While closed-source VLM judges typically perform better overall, smaller CLIP-based models offer better text-image alignment and image quality feedback due to extensive pre-training on text-vision corpora. Conversely, VLMs provide more accurate feedback on safety and generation bias, thanks to their stronger reasoning capabilities. 👉 2. VLM judges provide more accurate and stable feedback in natural language (e.g. Poor, Average, Good) than on numerical scales. Led by @ZRChen_AISafety, Yichao Du, Zichen Wen, @AiYiyangZ. arxiv.org/pdf/2407.04842

Dynamic 3D Gaussians: Tracking by Persistent Dynamic View Synthesis. dynamic3dgaussians.github.io We model the world as a set of 3D Gaussians that move & rotate over time. This extends Gaussian Splatting to dynamic scenes, with accurate novel-view synthesis and dense 3D trajectories.

VideoAgent: Long-form Video Understanding with Large Language Model as Agent. Long-form video understanding represents a significant challenge within computer vision, demanding a model capable of reasoning over long multi-modal sequences. Motivated by the human cognitive…

Single-View 3D Human Digitalization with Large Reconstruction Models. Paper page: huggingface.co/papers/2401.12… We introduce Human-LRM, a single-stage feed-forward Large Reconstruction Model designed to predict human Neural Radiance Fields (NeRF) from a single image. Our approach demonstrates remarkable adaptability in training using extensive datasets containing 3D scans and multi-view capture. Furthermore, to enhance the model's applicability to in-the-wild scenarios, especially those with occlusions, we propose a novel strategy that distills multi-view reconstruction into single-view via a conditional triplane diffusion model. This generative extension addresses the inherent variations in human body shapes when observed from a single view, and makes it possible to reconstruct the full human body from an occluded image. Through extensive experiments, we show that Human-LRM surpasses previous methods by a significant margin on several benchmarks.

[1/5] Introducing VisDiff - an #AI tool that describes differences in image sets with natural language. VisDiff can summarize model failures, compare models, find nuanced dataset differences, discover what makes an image memorable, and so much more! …derstanding-visual-datasets.github.io/VisDiff-websit…

#ProjectSceneChange You’ll be able to put yourself in any scene including your artwork. #AdobeMAX #CommunityxAdobe

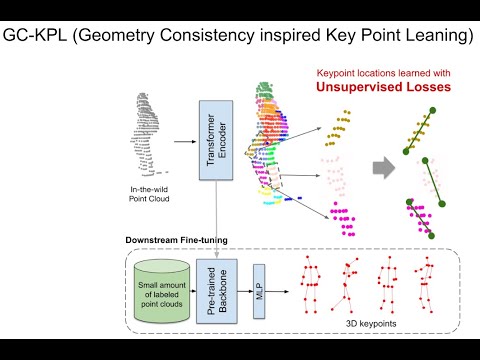

Check out our #CVPR2023 paper, "3D Human Keypoints Estimation From Point Clouds in the Wild Without Human Labels": youtu.be/vXGPW2nDHZ4 Huge shout-out to @JenWeng4, who interned on our team last summer and did all the work!