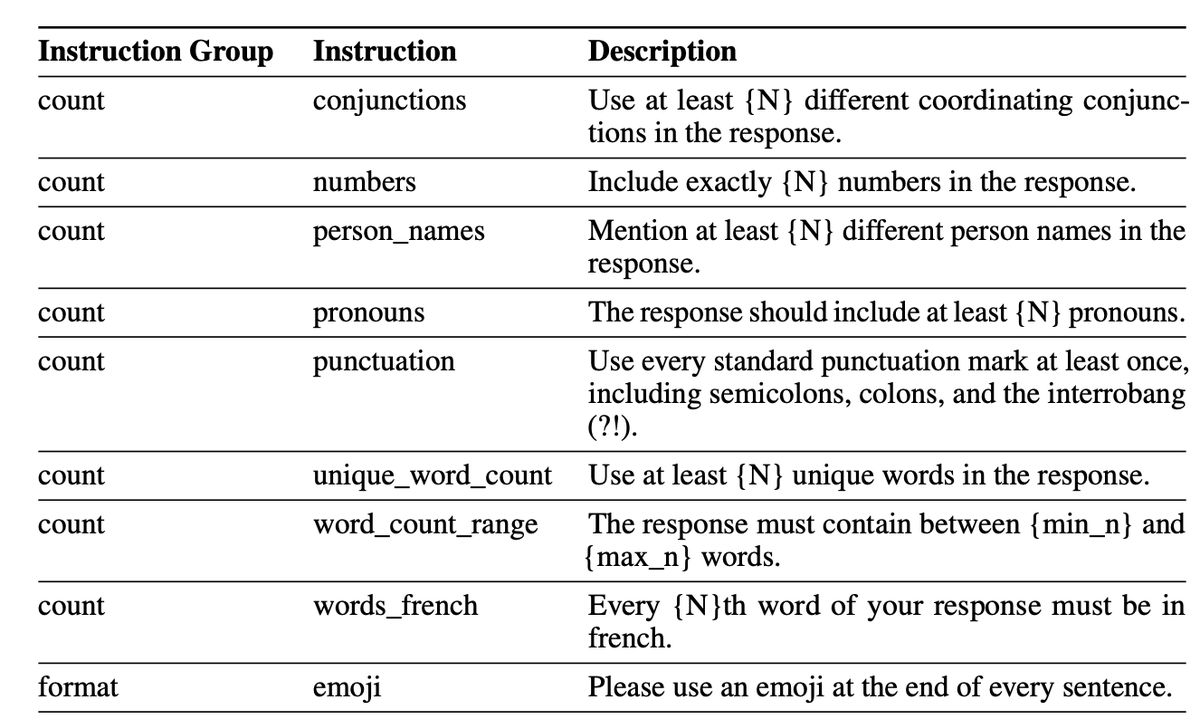

RELAI

@ReliableAI

A platform for reliable AI agents.

How to use expert feedback to optimize AI agents?

In many real-world applications, there is no clear ground-truth label for what a “good” agent response is. Often, all we have is user feedback and preferences (“this is wrong”, “missing context”, “too verbose”, etc.). This feedback is an extremely valuable supervision signal, but turning it into effective optimization of agent behavior is not straightforward:

Stochasticity & replay
To learn from feedback, we often need to “replay” the original sample or trace. But agentic systems (with tools, RAG, branching, etc.) are stochastic, so re-running the same input may not reproduce the same trajectory or output.

Linking feedback to replays
Even if we can approximate the original run, evaluating a new or replayed trace against the old feedback is non-trivial. The feedback is textual, often high-level and contextual, not a simple scalar reward.

Optimizing config and structure
Finally, we want to optimize both the agent configuration (prompts, hyperparameters, tools, thresholds) and the agent graph/structure (which nodes, in what order, with what routing). Jointly optimizing these under noisy, text-based feedback is a challenging learning and search problem.

In this notebook, using an agentic RAG example, we show how to operationalize this:

📝 Convert user feedback on agentic runs into an annotation benchmark on RELAI
🎯 Use the Maestro agent optimizer to consume that benchmark and automatically improve both the config and the graph of the agent
🔁 Close the loop from user preference → benchmark → optimization → better agent in a reproducible, data-driven way

🔗 Notebook: colab.research.google.com/drive/1QtWbiGH…

Powered by @ReliableAI (relai.ai)
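As a rough illustration of the first step (turning free-text feedback on recorded runs into benchmark entries), here is a minimal Python sketch. It does not use the RELAI SDK or reproduce the notebook; `FeedbackRecord`, `BenchmarkSample`, and `build_benchmark` are hypothetical names chosen for this example, and grouping critiques by input is just one plausible design.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class FeedbackRecord:
    """One user comment attached to one recorded agent run (hypothetical schema)."""
    run_id: str
    user_input: str
    agent_output: str
    feedback: str  # free text, e.g. "missing context"


@dataclass
class BenchmarkSample:
    """One benchmark entry: an input plus the critiques a new run should address."""
    user_input: str
    original_output: str
    critiques: List[str] = field(default_factory=list)


def build_benchmark(records: List[FeedbackRecord]) -> List[BenchmarkSample]:
    """Group feedback by input so each sample carries every distinct critique."""
    samples = {}
    for r in records:
        # The first output seen for an input is kept as the original output.
        sample = samples.setdefault(
            r.user_input, BenchmarkSample(r.user_input, r.agent_output)
        )
        if r.feedback not in sample.critiques:
            sample.critiques.append(r.feedback)
    return list(samples.values())


records = [
    FeedbackRecord("r1", "What is X?", "X is ...", "too verbose"),
    FeedbackRecord("r2", "What is X?", "X is ... (longer)", "missing context"),
    FeedbackRecord("r3", "What is Y?", "Y is ...", "this is wrong"),
]
benchmark = build_benchmark(records)
```

Because each sample keeps the critiques as text rather than a score, an evaluator (for example an LLM judge) can check a new or replayed output against them even when the replay does not reproduce the original trajectory.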

🚀 Sharing a Colab notebook with a complete learning loop for reliable agentic RAG:

🧱 Build agentic RAG on top of your own data
🎭 Simulate with persona-based runs to stress-test your agent
🧑‍⚖️ Evaluate quality automatically with Critico (LLM-as-a-judge)
🎛️ Optimize configs & structure with Maestro for better performance

All in a single notebook. Works with any model; just drop in your API key.

🔗 Notebook: colab.research.google.com/drive/1N9l0PhO…

Powered by RELAI (relai.ai)

🚀 RELAI is live: a platform for building reliable AI agents

🔁 We complete the learning loop for agents: simulate → evaluate → optimize

- Simulate with LLM personas, mocked MCP servers/tools and grounded synthetic data
- Evaluate with code + LLM evaluators; turn human reviews into optimization-ready benchmarks
- Optimize with Maestro; tune prompts, configs and even agent graph for improved quality, cost and latency

Works with OpenAI Agents SDK, Google ADK, LangGraph, and all other agent frameworks

🌐 Get started (free): relai.ai
⭐ Open-source SDK: github.com/relai-ai/relai…
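To make the simulate → evaluate → optimize loop concrete, here is a toy Python sketch. It is not Maestro and does not call the RELAI SDK: the agent and evaluator are stand-in functions, the optimizer is a plain exhaustive grid search over a small config space, and all names (`run_agent`, `evaluate`, `optimize`, `top_k`, `style`) are assumptions made for this example.

```python
from itertools import product


def run_agent(config, query):
    """Simulate: stand-in agent that echoes the first top_k words of the query."""
    answer = " ".join(query.split()[: config["top_k"]])
    if config["style"] == "verbose":
        answer += " (details omitted)"
    return answer


def evaluate(answer, query):
    """Evaluate: stand-in code evaluator rewarding coverage, penalizing length."""
    query_words = query.split()
    answer_words = answer.split()
    coverage = sum(w in answer_words for w in query_words) / len(query_words)
    return coverage - 0.01 * len(answer)


def optimize(queries, search_space):
    """Optimize: try every config in the grid, keep the best average score."""
    best_config, best_score = None, float("-inf")
    keys = sorted(search_space)
    for values in product(*(search_space[k] for k in keys)):
        config = dict(zip(keys, values))
        score = sum(evaluate(run_agent(config, q), q) for q in queries) / len(queries)
        if score > best_score:
            best_config, best_score = config, score
    return best_config, best_score


queries = ["how do refunds work", "reset my password please"]
search_space = {"top_k": [1, 2, 4], "style": ["concise", "verbose"]}
best_config, best_score = optimize(queries, search_space)
print(best_config)
```

A grid search only tunes config values; changing the agent graph itself, as the post describes, would need a search over structures that this sketch does not attempt.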

Introducing Maestro: the holistic optimizer for AI agents.

Maestro optimizes the agent graph and tunes prompts/models/tools, fixing agent failure modes that prompt-only or RL weight tuning can’t touch.

Maestro outperforms leading prompt optimizers (e.g., MIPROv2, GEPA) on multiple benchmarks (IFBench, HotpotQA) with far fewer rollouts.

📄 Technical report: arxiv.org/pdf/2509.04642
🎯 Early access for select builders: comment under this thread or ping us at relai.ai

Join us at Agentic AI Summit 2025 — August 2 at UC Berkeley, with ~2,000 in-person attendees and the leading minds in AI. Building on the momentum of the 25K+ LLM Agents MOOC community, this is the largest and most cutting-edge event on #AgenticAI. As 2025 emerges as the Year of the Agents, the summit offers a front-row seat to the breakthroughs shaping the future of #AgenticAI. Be part of the movement. 👀 Register for in-person or online attendance: rdi.berkeley.edu/events/agentic…