Ryan Topps

@RyanJTopps

Software Engineer

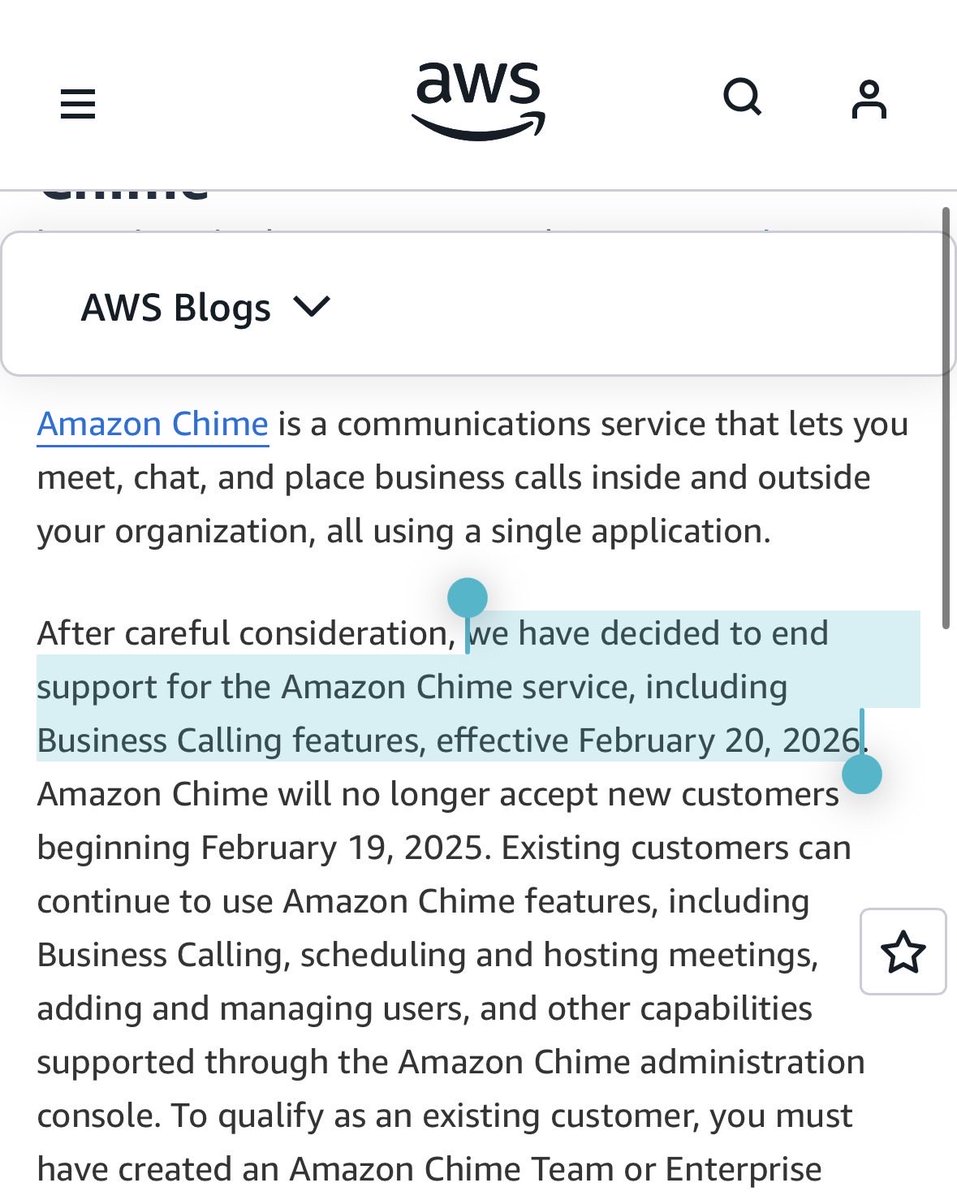

Sora is being discontinued. There were rumors, but I assumed this would be limited to the app. It looks comprehensive, however, covering even the API. This is sad for me personally.

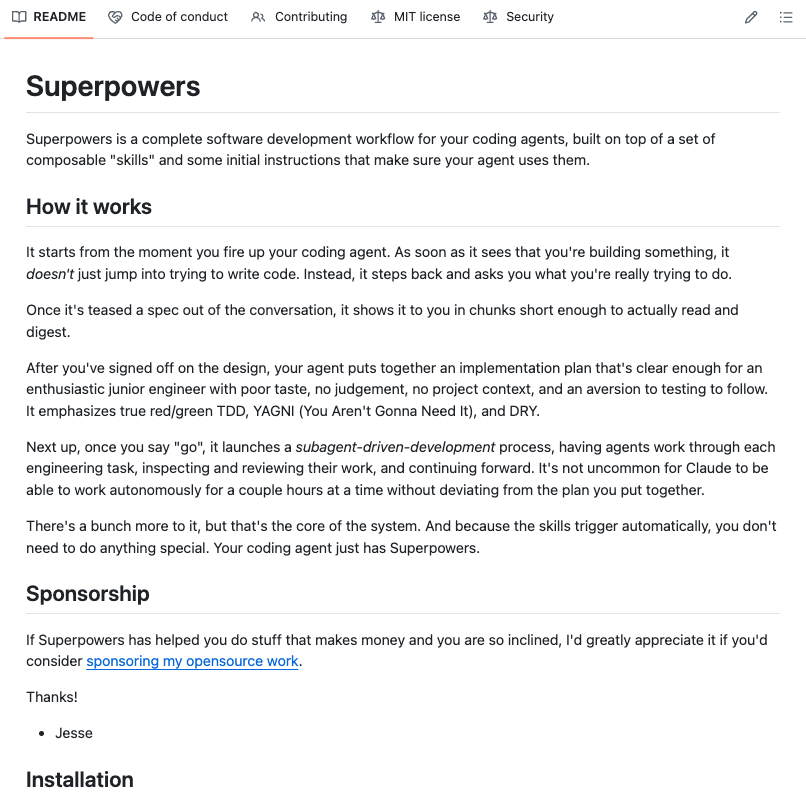

It's been 26 days since Vercept joined Anthropic ➡️ Claude is now able to use your mouse and keyboard to control your computer in Cowork and the Claude Code Desktop app 🖱️⌨️💻 I'm so excited for everyone to play with what we've been building!

The thing I believe that few people believe, but I think everyone eventually will: Markdown *is* code.

META HAS DELAYED THE RELEASE OF ITS NEW AI MODEL "AVOCADO" AFTER INTERNAL TESTING REVEALED IT LAGGED BEHIND RIVAL MODELS FROM GOOGLE, OPENAI, AND ANTHROPIC IN REASONING, CODING, AND WRITING PERFORMANCE.

New Harvard Business Review research reveals that excessive interaction with AI is causing a specific type of mental exhaustion (or "AI brain fry"), which is hitting high performers who use the tech to push past their normal limits particularly hard. A survey of 1,500 workers shows that AI is intensifying workloads rather than reducing them, leading to a new form of mental fog. While AI is supposed to lighten the load, it often forces users into constant task-switching and intense oversight that clutters the mind. This mental static happens because you aren't just doing your job anymore; you are managing multiple digital agents and double-checking their work, which creates a massive cognitive burden. The study found that 14% of full-time workers already feel this fog, with the highest impact in technical fields like software development, IT, and finance. High oversight is the biggest culprit: supervising multiple AI outputs leads to a 12% increase in mental fatigue and a 33% jump in decision fatigue. This isn't just a personal health issue; it directly affects companies, because exhausted employees are 10% more likely to quit. For firms worth billions of dollars, this decision paralysis can mean millions in lost value through poor choices or total inaction. Essentially, we are working harder to manage our tools than to solve the problems they were meant to fix. Source: hbr.org/2026/03/when-using-ai-leads-to-brain-fry

Amazon is holding a mandatory meeting about AI breaking its systems. The official framing is "part of normal business." The briefing note describes a trend of incidents with "high blast radius" caused by "Gen-AI assisted changes" for which "best practices and safeguards are not yet fully established." Translation to human language: we gave AI to engineers and things keep breaking? The response for now? Junior and mid-level engineers can no longer push AI-assisted code without a senior signing off. AWS spent 13 hours recovering after its own AI coding tool, asked to make some changes, decided instead to delete and recreate the environment (the software equivalent of fixing a leaky tap by knocking down the wall). Amazon called that an "extremely limited event" (the affected tool served customers in mainland China).