Commonstack

@commonstack_ai

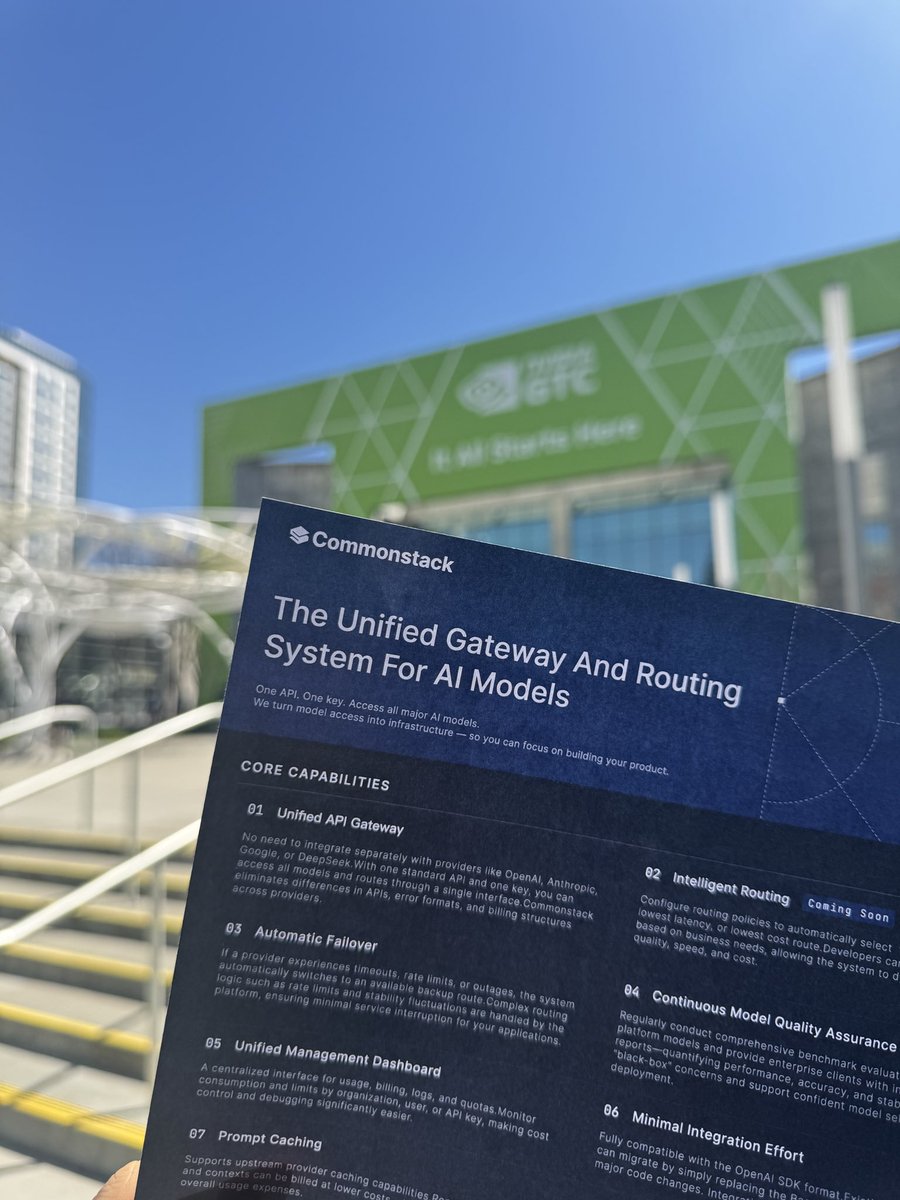

One API to access the best AI models in the world. Faster agents, lower costs.

ran 200+ model routing experiments today and @MiniMaxAI_ M2.7 came out on top. a pleasant surprise. recently i've been working on a smart routing tool - uncommonroute: every request gets a difficulty score, then all models in the pool compete on one formula. TTFT, TPS, cache hit rates, live pricing, actual input cost, all factored in. harder queries lean quality, easier ones lean cost. MiniMax held up across nearly every difficulty range. fast, well-priced, high success rate. reward score just kept climbing. definitely worth a try!
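The post above does not give the actual formula, so the following is only a minimal sketch of what "every model competes on one formula" could look like. Everything here is an assumption made for illustration: the `ModelStats` fields, the linear scoring rule, the `cost_scale` and `latency_scale` normalizers, and the two placeholder models in `POOL`. The idea is that the difficulty score (0 to 1) weights quality, while its complement weights cost and latency, so harder requests lean quality and easier ones lean cost.

```python
from dataclasses import dataclass


@dataclass
class ModelStats:
    name: str
    quality: float             # estimated success rate, 0..1 (hypothetical field)
    ttft_s: float              # time to first token, seconds
    tps: float                 # output tokens per second
    cache_hit_rate: float      # fraction of input tokens served from cache, 0..1
    input_price: float         # USD per 1M uncached input tokens
    cached_input_price: float  # USD per 1M cached input tokens
    output_price: float        # USD per 1M output tokens


def expected_cost(m, input_tokens, output_tokens):
    """Expected USD cost of one request, blending cached and uncached input prices."""
    blended_input_price = (
        m.cache_hit_rate * m.cached_input_price
        + (1.0 - m.cache_hit_rate) * m.input_price
    )
    return (input_tokens * blended_input_price + output_tokens * m.output_price) / 1e6


def expected_latency(m, output_tokens):
    """Expected seconds until the full response is generated."""
    return m.ttft_s + output_tokens / m.tps


def score(m, difficulty, input_tokens, output_tokens,
          cost_scale=0.01, latency_scale=10.0):
    """Assumed scoring rule: difficulty weights quality, (1 - difficulty) weights penalties."""
    penalty = (
        expected_cost(m, input_tokens, output_tokens) / cost_scale
        + expected_latency(m, output_tokens) / latency_scale
    )
    return difficulty * m.quality - (1.0 - difficulty) * penalty


def route(models, difficulty, input_tokens, output_tokens):
    """Pick the model with the highest score for this request."""
    return max(models, key=lambda m: score(m, difficulty, input_tokens, output_tokens))


# Placeholder pool with made-up numbers, not real model statistics.
POOL = [
    ModelStats("strong-model", 0.95, 1.0, 50.0, 0.5, 3.0, 0.3, 15.0),
    ModelStats("cheap-model", 0.70, 0.5, 100.0, 0.5, 0.3, 0.03, 1.2),
]

print(route(POOL, 0.95, 2000, 500).name)  # hard request
print(route(POOL, 0.10, 2000, 500).name)  # easy request
```

With these placeholder numbers, the hard request goes to the higher-quality model and the easy one goes to the cheaper model. A real router would presumably calibrate the weights against observed outcomes rather than fix them by hand.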

MiMo-V2-Pro & Omni & TTS is out. Our first full-stack model family built truly for the Agent era.

I call this a quiet ambush, not because we planned it, but because the shift from Chat to Agent paradigm happened so fast, even we barely believed it. Somewhere in between was a process that was thrilling, painful, and fascinating all at once.

The 1T base model started training months ago. The original goal was long-context reasoning efficiency. Hybrid Attention carries real innovation, without overreaching, and it turns out to be exactly the right foundation for the Agent era. 1M context window. MTP inference for ultra-low latency and cost. These architectural decisions weren't trendy. They were a structural advantage we built before we needed it.

What changed everything was experiencing a complex agentic scaffold, what I'd call orchestrated Context, for the first time. I was shocked on day one. I tried to convince the team to use it. That didn't work. So I gave a hard mandate: anyone on MiMo Team with fewer than 100 conversations tomorrow can quit. It worked. Once the team's imagination was ignited by what agentic systems could do, that imagination converted directly into research velocity.

People ask why we move so fast. I saw it firsthand building DeepSeek R1. My honest summary:

- Backbone and Infra research has long cycles. You need strategic conviction a year before it pays off.
- Posttrain agility is a different muscle: product intuition driving evaluation, iteration cycles compressed, paradigm shifts caught early.
- And the constant: curiosity, sharp technical instinct, decisive execution, full commitment, and something that's easy to underestimate: a genuine love for the world you're building for.

We will open-source when the models are stable enough to deserve it.

From Beijing, very late, not quite awake.

Introducing MiniMax-M2.7, our first model which deeply participated in its own evolution, with an 88% win-rate vs M2.5

- Production-Ready SWE: With SOTA performance in SWE-Pro (56.22%) and Terminal Bench 2 (57.0%), M2.7 reduced intervention-to-recovery time for online incidents to 3-min on certain occasions.
- Advanced Agentic Abilities: Trained for Agent Teams and tool search tool, with 97% skill adherence across 40+ complex skills. M2.7 is on par with Sonnet 4.6 in OpenClaw.
- Professional Workspace: SOTA in professional knowledge, supports multi-turn, high-fidelity Office file editing.

MiniMax Agent: agent.minimax.io
API: platform.minimax.io
Token Plan: platform.minimax.io/subscribe/toke…

GPT-5.4 Pro cost over $1k to achieve this result. This is 13X the cost of GPT-5.4 (xhigh reasoning), driven by the high output token price (GPT-5.4 Pro is priced at $180 per 1M output tokens vs GPT-5.4’s $15). GPT-5.4 Pro used 6M tokens, only marginally more than GPT-5.4 (xhigh)'s 5.5M.
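The 13X figure can be roughly reproduced from the numbers quoted above. This is a back-of-the-envelope sketch, not the benchmark's own accounting: it assumes the 6M figure is GPT-5.4 Pro's token count and that every token is billed at the output price, since the post gives no input/output split.

```python
# Figures quoted in the post (USD per 1M output tokens, total tokens used).
PRO_PRICE_PER_M = 180.0
XHIGH_PRICE_PER_M = 15.0
PRO_TOKENS = 6.0e6
XHIGH_TOKENS = 5.5e6


def output_cost(tokens, price_per_million):
    """Cost in USD, assuming all tokens are billed at the output rate."""
    return tokens / 1e6 * price_per_million


pro_cost = output_cost(PRO_TOKENS, PRO_PRICE_PER_M)        # 6.0 * 180
xhigh_cost = output_cost(XHIGH_TOKENS, XHIGH_PRICE_PER_M)  # 5.5 * 15
ratio = pro_cost / xhigh_cost

print(f"GPT-5.4 Pro:     ${pro_cost:,.2f}")
print(f"GPT-5.4 (xhigh): ${xhigh_cost:,.2f}")
print(f"Cost ratio:      {ratio:.1f}x")
```

Under that assumption, the 12x price gap accounts for almost all of the difference, and the slightly higher token count pushes the ratio to about 13x.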

Interesting benchmark on which model is best for @openclaw pinchbench.com

GPT-5.4 Thinking and GPT-5.4 Pro are rolling out now in ChatGPT. GPT-5.4 is also now available in the API and Codex. GPT-5.4 brings our advances in reasoning, coding, and agentic workflows into one frontier model.

GM! Major update to @kalshiverse: a brand new terminal powered by @Gradient_HQ @commonstack_ai: kalshiverse.xyz

- real time news updates
- kalshiclaw: based on the latest news, derive market dislocations
- ecosystem: will be populating with all @KalshiTrade + @PolymarketDevs projects

Key feature: PolyEarn. Based on historical earnings calls of companies, along with the previous earnings transcript for estimates of the upcoming quarter, it derives the likelihood of a company beating or missing its estimated EPS.

pov: Claude Code is down and they ask you to write code manually

90% of the world’s programmers when Claude goes down:

AI should be a public good, not something gatekept by a handful of megacorps.

We had Eric Yang, co-founder of Gradient Network, on the pod this week to talk through exactly that. Gradient's "Open Intelligence Stack" includes:

i) Parallax for distributed model serving
ii) Echo for decentralized reinforcement learning

The whole thesis is that anyone should be able to run large models on consumer hardware (yes, including your Mac Minis + OpenClaws).

Eric breaks down their $10M seed round led by Pantera, Multicoin, and HSG; where he sees the industry heading; and why post-training is going to be the dominant force in enterprise.

Timestamps:

- 00:00 Intro
- 01:15 AI market is booming
- 02:29 Local compute is a hot topic
- 03:02 Parallax Inference Engine
- 04:34 Intelligence as a public good
- 05:46 AI models will become a commodity
- 07:32 Bottlenecks in AI model accessibility
- 09:34 Smaller AI models are catching up
- 11:01 How Gradient's Infrastructure Enables Model Development
- 12:15 Model post-training
- 14:24 How does reinforcement learning work?
- 17:35 AI going rogue
- 19:20 Gradient's token
- 23:02 AI entrepreneurs that Eric admires
- 26:11 Use cases on chain for AI
- 31:34 The trade-offs of coming to crypto
- 35:09 How low-spec GPUs will work on Gradient Ecosystem
- 38:08 Post-training will be the dominating force for enterprise
- 38:43 Open source models are way cheaper
- 41:39 Eric's founding story
- 49:07 Empowering researchers globally
- 53:37 Why did Multicoin Capital and Pantera Capital invest in Gradient
- 55:08 One-click deploy agent
- 58:16 Gradient in 3 years