Gradient

@Gradient_HQ

Open infrastructure for open intelligence. Lattica · Parallax · Echo

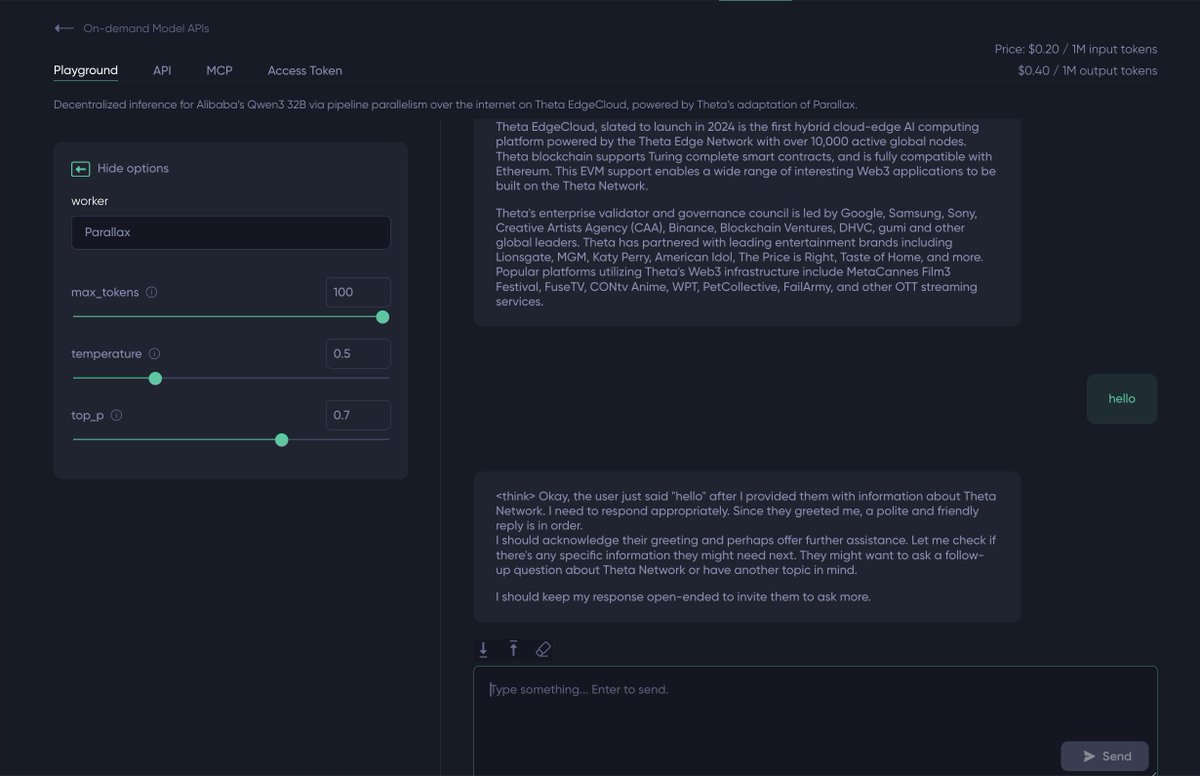

To make this work, we adapted Parallax, @Gradient_HQ's distributed inference framework, to run across EdgeCloud's global node network. One API endpoint, model split across many machines, no centralized cluster required.

Ready to learn about the Open Intelligence Stack? 🎙️ This week on DevNTell, we'll be joined by @alex_mirran, Head of BD at @Gradient_HQ, who'll give us an overview of the platform and more! 📅 April 17th 📋 RSVP today: luma.com/tdmfpby7

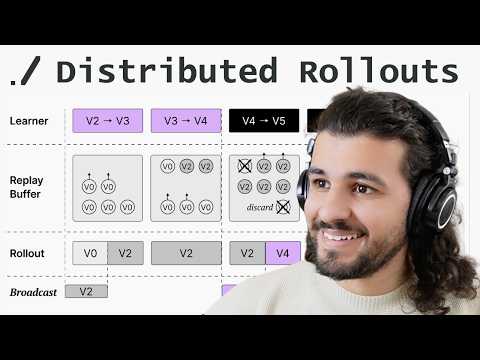

for those interested in distributed reinforcement learning: I just finished a ~1h tutorial on the echo2 framework by @Gradient_HQ

we check:
- how to do async RL
- infra split between rollout workers and centralized learner
- interview with gradient cofounder eric yang himself!

Prompt Learning does not scale for parallel agents. More parallel agents 🤖 = worse prompts 😭

Why? Processing too many trajectories concurrently damages the prompt update process 🐝

We fix this with Combee:
→ preserves high-quality learnt system prompt
→ scales to more than 80 concurrent agents
→ up to 17× speedup without quality drop on top of ACE and GEPA

🥽 Use Cases:
1. Prompt learning on large scale collected agent traces
2. Parallel agent learning online with fast knowledge sharing

Read more below to learn how agents actually learn at scale ⬇️

Today we’re introducing Echo — our full-stack prediction intelligence system, which turns uncertainty🔮 into profit📈. We Make Prediction General, Evaluable, Trainable and Profitable. 🌐Website: echo.unipat.ai

The explosion of agentic AI has kicked off a mad rush for computing power, pushing central processing units back into hot demand on.wsj.com/4uYyMN2

Announcing ARC-AGI-3

The only unsaturated agentic intelligence benchmark in the world

Humans score 100%, AI <1%

This human-AI gap demonstrates we do not yet have AGI

Most benchmarks test what models already know, ARC-AGI-3 tests how they learn

New on the Anthropic Engineering Blog: How we use a multi-agent harness to push Claude further in frontend design and long-running autonomous software engineering. Read more: anthropic.com/engineering/ha…

I am continuing my adventure into distributed AI systems with the Parallax scheduling strategy from @Gradient_HQ

in this 37min tutorial I go through:
- heuristic used to make scheduling tractable
- dynamic programming formulation
- filling GPU with water
- shoving them into shelves

Thank you Jensen and NVIDIA! She’s a real beauty! I was told I’d be getting a secret gift, with a hint that it requires 20 amps. (So I knew it had to be good). She’ll make for a beautiful, spacious home for my Dobby the House Elf claw, among lots of other tinkering, thank you!!