Peter Chen

@peterxichen

Building general purpose robotics at Amazon FAR. Previously Covariant CEO and Co-Founder, @OpenAI, @UCBerkeley PhD.

Robust perceptive locomotion for humanoids is still underexplored, especially when different cameras see different terrains, paths get narrow, and payloads disturb balance. Introducing RPL, which tackles all of this with one unified policy:
• Challenging terrains (slopes, stairs, and stepping stones)
• Multiple directions
• Payloads
Trained in sim. Validated on long-horizon runs in the real world. Watch the robot walk it all 🦿 Details below 👇

Sim-to-real learning for humanoid robots is a full-stack problem. Today, Amazon FAR is releasing a full-stack solution: Holosoma. To accelerate research, we are open-sourcing a complete codebase covering multiple simulation backends, training, retargeting, and real-world inference.

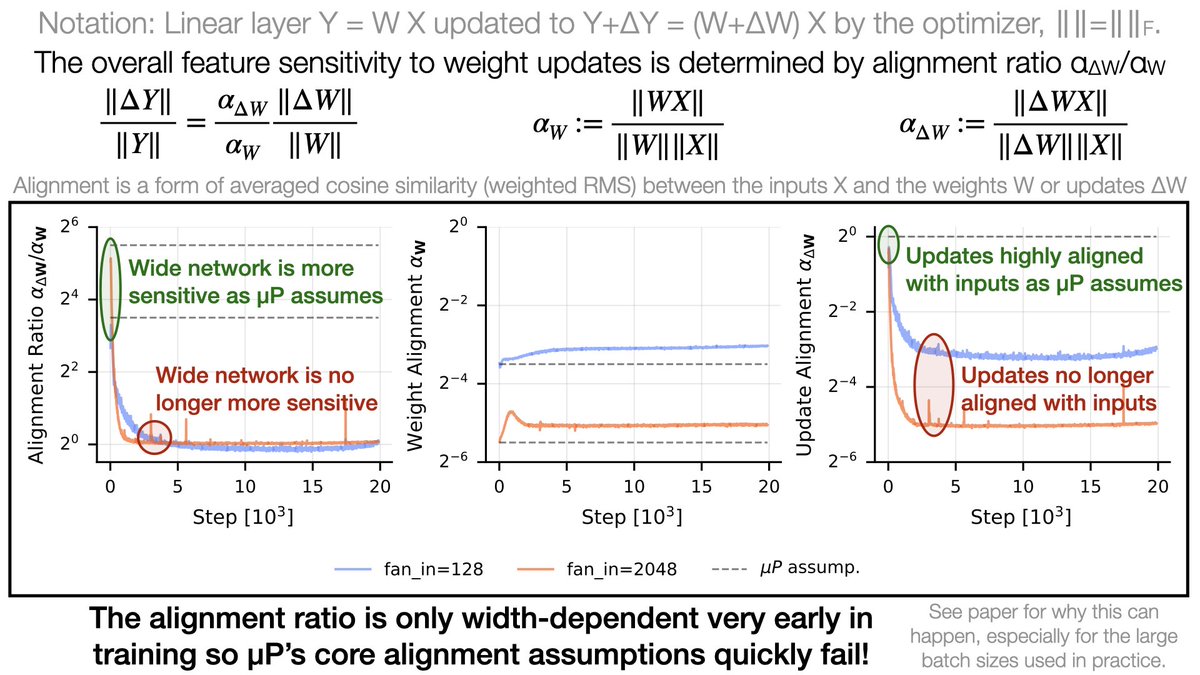

The Maximal Update Parameterization (µP) allows LR transfer from small to large models, saving costly tuning. But why is independent weight decay (IWD) essential for it to work? We find µP stabilizes early training (like an LR warmup), but IWD takes over in the long term! 🧵
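
A rough illustration of why the choice of weight decay matters under µP, under stated assumptions: for Adam, µP shrinks the hidden-layer LR roughly as base_width / width. With coupled decay (as in `torch.optim.AdamW`, where each step shrinks weights by `lr * wd`), the per-step decay therefore fades as models get wider. "Independent" decay is taken here to mean a per-step shrink that does not scale with the LR. The helper names and the simplified LR rule are hypothetical, and this is not the thread's own analysis or code.

```python
from typing import Dict, Iterable


def mup_hidden_lr(base_lr: float, base_width: int, width: int) -> float:
    """Simplified muP-style Adam LR for hidden matrices: scales as base_width / width.

    Assumption: embeddings, output layers and init scales are ignored here.
    """
    return base_lr * base_width / width


def per_step_decay(lr: float, wd: float, independent: bool) -> float:
    """Fraction of each weight removed by weight decay in a single step."""
    if independent:
        # Independent weight decay: the shrink does not depend on the LR.
        return wd
    # Coupled decay (torch.optim.AdamW multiplies params by 1 - lr * wd).
    return lr * wd


def decay_table(base_lr: float, base_width: int, widths: Iterable[int],
                wd_coupled: float, wd_independent: float) -> Dict[int, Dict[str, float]]:
    """Per-width LR and per-step decay under both conventions."""
    table: Dict[int, Dict[str, float]] = {}
    for width in widths:
        lr = mup_hidden_lr(base_lr, base_width, width)
        table[width] = {
            "lr": lr,
            "coupled": per_step_decay(lr, wd_coupled, independent=False),
            "independent": per_step_decay(lr, wd_independent, independent=True),
        }
    return table


if __name__ == "__main__":
    rows = decay_table(base_lr=1e-2, base_width=256, widths=[256, 1024, 4096],
                       wd_coupled=0.1, wd_independent=1e-4)
    for width, row in rows.items():
        print(f"width={width:5d} lr={row['lr']:.2e} "
              f"coupled={row['coupled']:.2e} independent={row['independent']:.2e}")
```

Going from width 256 to 4096, the coupled per-step decay drops 16x along with the LR, while the independent decay stays fixed. That fixed decay is one way to keep the long-run regularization comparable across widths.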

Humanoid motion tracking performance depends heavily on retargeting quality! Introducing 𝗢𝗺𝗻𝗶𝗥𝗲𝘁𝗮𝗿𝗴𝗲𝘁🎯, which generates high-quality, interaction-preserving data from human motions for learning complex humanoid skills with 𝗺𝗶𝗻𝗶𝗺𝗮𝗹 RL:
- 5 rewards
- 4 domain randomization (DR) terms
- Proprioception ONLY
- NO history/curriculum
Ready for agile, human-like 🤖? (Best with 🎧) 🔗 omniretarget.github.io 🎥 1/9

🚨 Big news: our team is joining the Frontier AI and Robotics (FAR) lab at @amazon to keep building the future of robotics. Excited to join such an inspiring team and to work with @peterxichen, @pabbeel, @rocky_duan, @akanazawa, and many others at FAR.
