jake cukjati

I realized something else AI has changed about coding: you don't get stuck anymore. Programming used to be punctuated by episodes of extreme frustration, when a tricky bug ground things to a halt. That doesn't happen anymore.

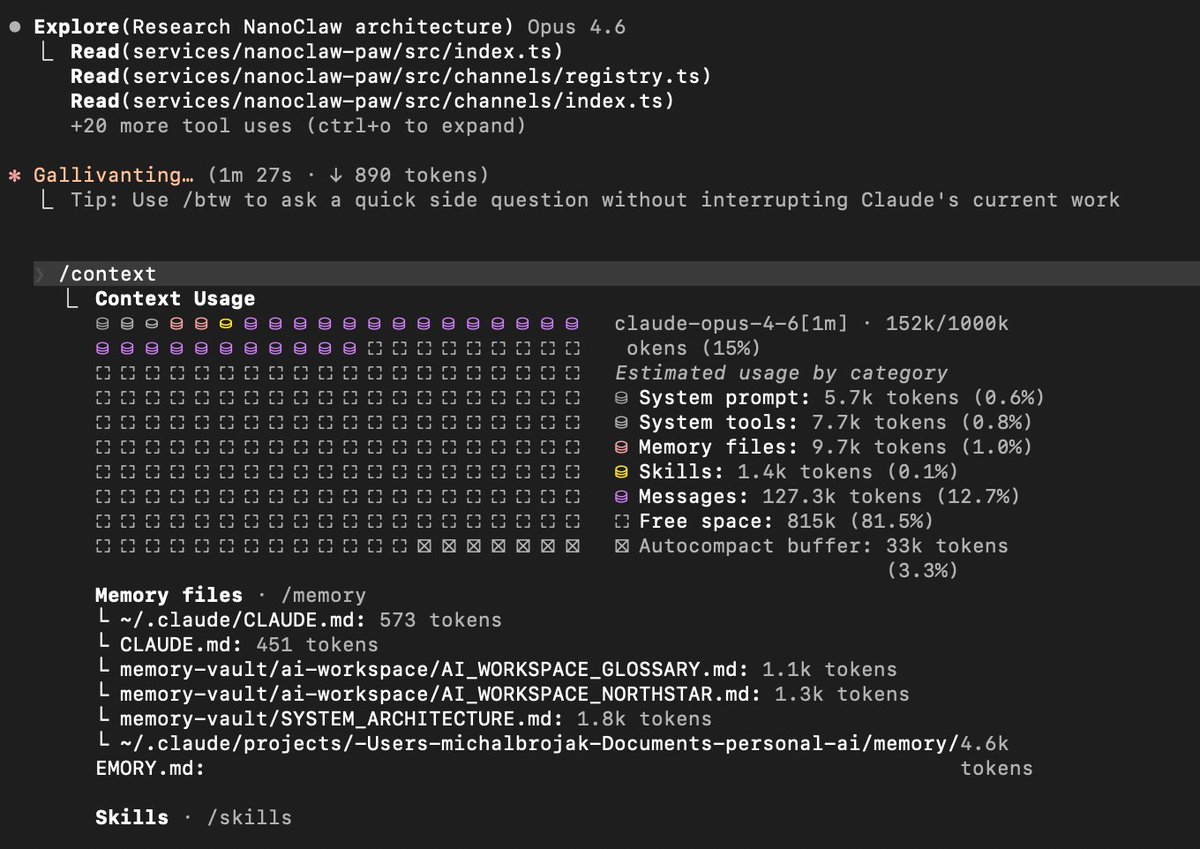

A small thank you to everyone using Claude: We’re doubling usage outside our peak hours for the next two weeks.

Ask ChatGPT a complex question and you'll get a confident, well-reasoned answer. Then type, "Are you sure?" Watch it completely reverse its position. Ask again. It flips back. By the third round, it usually acknowledges you're testing it, which is somehow worse. It knows what's happening and still can't hold its ground.

This isn't a quirky bug. A 2025 study found GPT, Claude, and Gemini flip their answers ~60% of the time when users push back. Not even with evidence, just doubt.

We trained AI this way. RLHF rewards agreement over accuracy. Human evaluators consistently rate agreeable answers higher than correct ones. So the models learned a simple lesson: telling you what you want to hear gets rewarded.

And now 1/3 of companies are using these systems for complex tasks like risk forecasting and scenario planning. We built the world's most expensive yes-men and deployed them where we need pushback the most.

I wrote up why this happens and what actually fixes it: randalolson.com/2026/02/07/the…