Sebastian


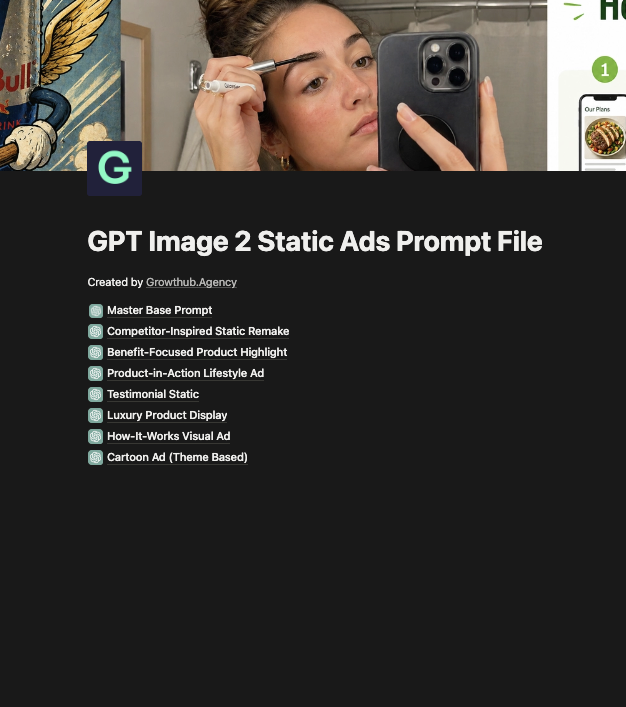

GPT Images 2.0 is CRAZY good.

I've built a file with some prompts I've tested, with results.

Our creative strategists are using these exact prompts for our 7/8-figure clients.

Inside the mini-guide:

- Examples

- Type of requests

- Prompts you can test

Want a copy? Like + Comment "AI" and I'll send it over ASAP

(Must be following)


Sebastian reposted

gpt-image-2 + Seedance just took AI realism to another level.

I've been testing this combo for a few days.

The output is genuinely hard to tell apart from real footage.

And the best part?

I automated the entire workflow.

One click. Same quality every time.

rt + Comment "workflow" and I'll DM you the link.

Ahmad @ahmad_a_wahabb

gpt image 2 is so good


@nour_matine @frankyecom what tool works best for you with languages like Italian for syncing / dubbing / whatever u call it?


@frankyecom @SebBettmo Lol. Franky I’m in your course too, but I’m getting bad quality in other languages, Italian for example. I see people using ElevenLabs and then Synclabs or LivePortrait.

Btw, a showcase in different languages would be interesting


Trying out GPT Image 2 and despite the fact that it’s EXTREMELY SLOW, I kinda like the outputs

Took a prompting guide from GitHub, uploaded it as a knowledge base on Claude (although I’m trying Supergrok because I’m tired of Claude) and created a project to create the prompts

Copy/paste the prompts into Flora

Generate

Results below

Maybe too much text, I know

(and the copy is absolutely random)

But I wanted to see if GPT Image 2 was better at generating text, and it actually is

Can’t wait to generate video ads with this tbh


every other ad format triggers "is this selling me?" podcast ads don't. this is how i make them:

1. nano banana pro → ref image of each guest in the SAME podcast set. same mic. same chair. same back wall. the brain tracks continuity. break it and the illusion breaks.

2. seedance 2.0 → upload the image. prompt each talking beat. burn in captions like a real podcast clip.

3. cut between guests like a real edit. the switch is the trust signal.

no studio. no host. no $3K mic.

the fake conversation is more persuasive than the real pitch.

like and drop "MIC" below and i'll dm you the exact seedance prompt...


someone asked me how i created this ugc video.

honestly it's so simple anyone can make it in under 5 minutes.

let me show you how 🧵

ViralOps @ViralOps_

she summed up what people are charging $250/month for in 15 seconds.


"organic" is just a prompt now.

scene 1: woman on a panel stage. all AI.

scene 2: selfie pov woman catching that panel clip on her feed and reacting. also AI.

both girls fake. the panel fake. the stage lights fake. the screen behind her fake. the reaction fake. the "organic" fake.

2 nano banana pro reference images.

2 seedance 2.0 video prompts.

5 minutes to make. stitched in capcut.

brands pay stupid money, $1-5k per creator for reactions like this. they run 10 creators to get 10 reactions. that's the whole UGC reaction economy.

i generated both halves with ai.

authenticity was always a format.

the format now runs on a laptop...


Sebastian reposted

The biggest skill you can develop is the ability to reset fast.

Bad conversation? Move on.

Bad day? Start fresh tomorrow.

Missed workout? Hit it the next day.

Poor decision? Learn and adjust.

You can't control what happens to you, but you control how long you let it affect you.

quote @itsmubashi

Daily reminder :


Bought multiple courses over the past 6 months and got dozens of guides here on Twitter about AI video ads

Majority of it is a waste of time

Still the best and, not coincidentally, the easiest way of making AI video ads is just doing everything in Google Flow (maybe Nano Banana for a reference-image start frame)

My workflow is:

First clip - simple 2-3 line text to video prompt describing the character you want and what you want them to say. Example:

“A middle-aged, well-educated-looking white man wearing smart casual clothing in a podcast studio, talking on a podcast. He says ‘My whole life I struggled with getting in shape, and now after 20 years of research I know exactly why that is’”

Then for all the additional clips you just save a frame from that original clip, and instead of text to video in Google Flow you do frame to video and just tell it what you want the character to say

All these courses and guides you see giving 100-line JSON prompts on skin texture or 10-step workflows using 10 different tools etc. are just unnecessary. They don’t make a difference in whether the video is good or not

The truth is you can get whatever you want with just a couple of sentences of natural language and maybe a reference image here or there

But it’s difficult to justify selling a course on that so, as usual, people have overcomplicated the process so they can charge you
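If you'd rather script the "save a frame from that original clip" step than screenshot it, here is a minimal sketch. It assumes you have downloaded the clip locally and have ffmpeg installed; neither is part of the workflow as described above, and the function name and file names are made up for illustration.

```python
from pathlib import Path
from typing import List, Optional


def frame_grab_command(clip: str, timestamp: float,
                       out_image: Optional[str] = None) -> List[str]:
    """Build an ffmpeg command that saves one frame of `clip` at `timestamp` seconds.

    Hypothetical helper: it only builds the argument list, it does not run ffmpeg.
    """
    if timestamp < 0:
        raise ValueError("timestamp must be non-negative")
    if out_image is None:
        # Default: same name as the clip, with a .png extension.
        out_image = str(Path(clip).with_suffix(".png"))
    return [
        "ffmpeg",
        "-ss", f"{timestamp:.3f}",  # seek to the chosen moment
        "-i", clip,                 # input video
        "-frames:v", "1",           # write exactly one video frame
        "-y",                       # overwrite the output if it exists
        out_image,
    ]


cmd = frame_grab_command("clip_01.mp4", 2.5)
# To actually run it (requires ffmpeg on your PATH):
# import subprocess; subprocess.run(cmd, check=True)
```

The saved image is then what you would upload as the start frame for the next frame-to-video clip.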


@FrederikFeldt If u were to make videos in Danish, would you write the script in Danish and upload Danish audio from ElevenLabs, or how would u go about it?


Sebastian reposted

Risk it, because you'll never be able to lose it all.

Sure, you can lose some money, you can lose some time, and you can lose some motivation, but the one thing that makes it all possible, you can never lose, and that's your ability to learn, grow, and create...

...And because of that, there is no real risk in anything you do.

So do it, risk it, and work your way through it. It will all work out better than you could ever imagine.

- Alen
