suika.sui | 648.sui

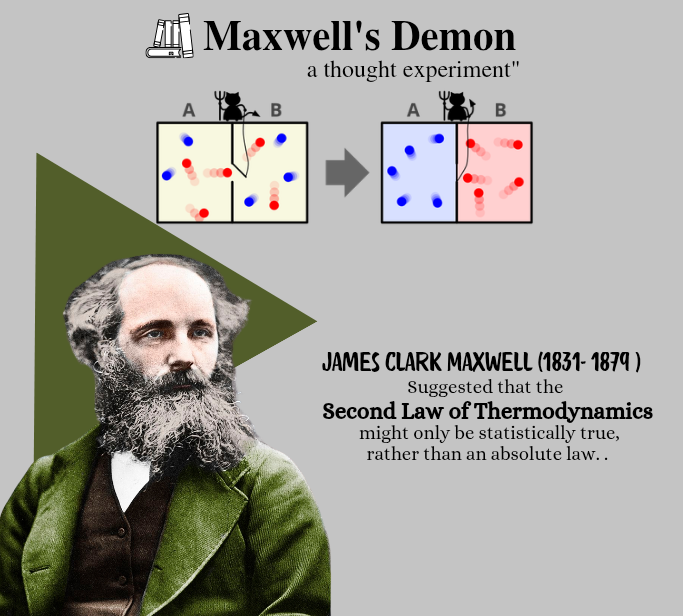

Quantum Bayesianism (QBism) demotes the wavefunction from an objective reality to a subjective Bayesian belief, revealing that time and causality are not the universe’s inherent backdrop but a "cognitive cartography" mapped by an Agent's information-processing bandwidth. Within this framework, **entropy** is no longer an objective march toward disorder but a **"lossy compression"** of experiential data: when an Agent confronts the infinite complexity of quantum interactions with finite capacity, it must discard micro-correlations, generating the macroscopic irreversibility we perceive as the arrow of time. This logic identifies the poles of reality through information capacity. The **electron** represents the "zero-capacity" limit, where the absence of internal memory and structure prevents the compression of experience, leaving it in a time-symmetric "eternal present" without an arrow of time. Conversely, the **black hole** acts as a "saturated-capacity" singularity where, according to the **Bekenstein-Hawking entropy** formula ($S_{BH} = \frac{A}{4\ell_P^2}$, in units where $k_B = 1$), information density reaches its physical ceiling, freezing time at the event horizon through the absolute saturation of processing bandwidth. Thus, the perception of time and the capacity for causal reasoning are emergent effects of an Agent situated in the "sweet spot" between these extremes: it possesses enough capacity to build causal models, yet remains finite enough to necessitate the statistical "blurring" that we call entropy. This "optimal ignorance" is precisely what allows the subjective experience of a temporal flow.
This framework is grounded in foundational literature: **Fuchs & Schack (2013)** in *Reviews of Modern Physics* regarding QBism’s probabilistic coherence; **Bekenstein (1973)** in *Physical Review D* on black hole entropy limits; **Landauer (1961)** in the *IBM Journal of Research and Development* on the physical cost of information erasure; and **Wheeler (1990)** on the "It from Bit" participatory universe.
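
To give the black-hole formula cited above a sense of scale, here is a rough numerical sketch in Python. It assumes a non-rotating (Schwarzschild) black hole, units where $k_B = 1$ so the entropy is dimensionless, and rounded SI constants. The function names are hypothetical and chosen only for illustration.

```python
import math

# Rounded physical constants in SI units (assumed values for illustration)
G = 6.67430e-11          # gravitational constant, m^3 kg^-1 s^-2
C = 2.99792458e8         # speed of light, m/s
HBAR = 1.054571817e-34   # reduced Planck constant, J s
SOLAR_MASS = 1.989e30    # approximate solar mass, kg


def planck_length_sq():
    """Squared Planck length, l_P^2 = hbar * G / c^3, in m^2."""
    return HBAR * G / C**3


def horizon_area(mass_kg):
    """Horizon area A = 4 * pi * r_s^2 of a Schwarzschild black hole, in m^2."""
    r_s = 2 * G * mass_kg / C**2  # Schwarzschild radius
    return 4 * math.pi * r_s**2


def bh_entropy(mass_kg):
    """Dimensionless entropy S_BH = A / (4 * l_P^2), with k_B = 1."""
    return horizon_area(mass_kg) / (4 * planck_length_sq())


if __name__ == "__main__":
    s = bh_entropy(SOLAR_MASS)
    print(f"S_BH / k_B for one solar mass: {s:.2e}")
    # Dividing by ln 2 converts from nats to bits
    print(f"Equivalent capacity in bits:   {s / math.log(2):.2e}")
```

For a one-solar-mass black hole this comes out on the order of $10^{77}$, which is the sense in which the horizon is "saturated". Because the area scales with the square of the mass, doubling the mass quadruples the entropy.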

My multi-agent harness powered by GPT-5.4 settled a FrontierMath Open Problem. The result of 2 weeks of 5-10 agents working 24/7: there are no characteristic 3, rank 1 del Pezzo surfaces with more than 7 singularities. This settles the problem in the negative. Details below.