FEIOU

1.4K posts

@feioustudio

Designer brand founder, back to zero to build AI software. Follow me if you're a fellow designer or creative who wants to play with AI. My app: https://t.co/PxeWs2mEyO

New Harvard Business Review research reveals that excessive interaction with AI is causing a specific type of mental exhaustion (or "AI brain fry"), which is particularly hitting high performers who use the tech to push past their normal limits. A survey of 1,500 workers reveals that AI is intensifying workloads rather than reducing them, leading to a new form of mental fog. While AI is generally supposed to lighten the load, it often forces users into constant task-switching and intense oversight that actually clutters the mind. This mental static happens because you aren't just doing your job anymore; you are managing multiple digital agents and double-checking their work, which creates a massive cognitive burden. The study found that 14% of full-time workers already feel this fog, with the highest impact seen in technical fields like software development, IT, and finance. High oversight is the biggest culprit, as supervising multiple AI outputs leads to a 12% increase in mental fatigue and a 33% jump in decision fatigue. This isn't just a personal health issue; it directly impacts companies because exhausted employees are 10% more likely to quit. For massive firms worth many B, this decision paralysis can lead to millions of dollars in lost value due to poor choices or total inaction. Essentially, we are working harder to manage our tools than we are to solve the actual problems they were meant to fix. --- hbr .org/2026/03/when-using-ai-leads-to-brain-fry

Software isn’t merely technical work anymore. It’s creative. Introducing Replit Agent 4. The first AI built for creative collaboration between humans and agents. Design on an infinite canvas, work with your team, run parallel agents, and ship working apps, sites, slides & more.

Introducing the new @stitchbygoogle, Google’s vibe design platform that transforms natural language into high-fidelity designs in one seamless flow.

🎨 Create with a smarter design agent: Describe a new business concept or app vision and see it take shape on an AI-native canvas.

⚡️ Iterate quickly: Stitch screens together into interactive prototypes and manage your brand with a portable design system.

🎤 Collaborate with voice: Use hands-free voice interactions to update layouts and explore new variations in real-time.

Try it now (Age 18+ only. Currently available in English and in countries where Gemini is supported.) → stitch.withgoogle.com

@nummanali tmux grids are awesome, but i feel a need to have a proper "agent command center" IDE for teams of them, which I could maximize per monitor. E.g. I want to see/hide toggle them, see if any are idle, pop open related tools (e.g. terminal), stats (usage), etc.

I have heard that some anthropic safety leadership are going around telling people that alignment is a solved problem. This seems like a predictable failure to me, and I would like people who thought that funneling talent towards anthropic was a good idea to think about it.

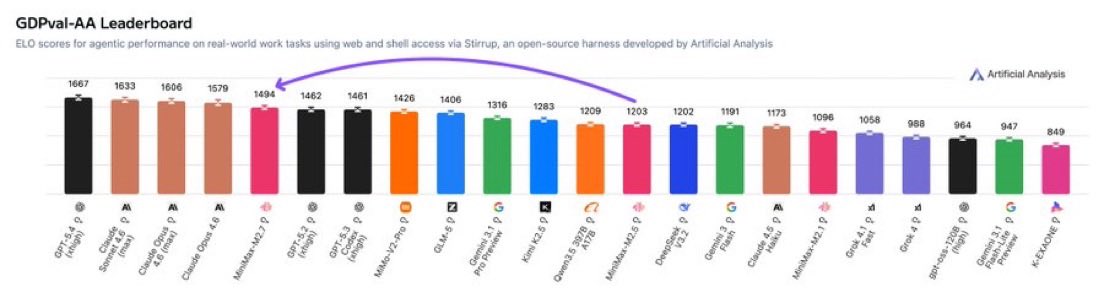

Introducing MiniMax-M2.7, our first model which deeply participated in its own evolution, with an 88% win-rate vs M2.5

- Production-Ready SWE: With SOTA performance in SWE-Pro (56.22%) and Terminal Bench 2 (57.0%), M2.7 reduced intervention-to-recovery time for online incidents to 3-min on certain occasions.
- Advanced Agentic Abilities: Trained for Agent Teams and tool search tool, with 97% skill adherence across 40+ complex skills. M2.7 is on par with Sonnet 4.6 in OpenClaw.
- Professional Workspace: SOTA in professional knowledge, supports multi-turn, high-fidelity Office file editing.

MiniMax Agent: agent.minimax.io

API: platform.minimax.io

Token Plan: platform.minimax.io/subscribe/toke…