Jay Edelson

4.8K posts

@jayedelson

Plaintiff's lawyer. NYT: https://t.co/tchuW3YezP. Cases resulted in over $5b in settlements/verdicts as lead counsel & $45b in total. RT≠endorsements

🚨 SAM ALTMAN: “We see a future where intelligence is a utility, like electricity or water, and people buy it from us on a meter.”

Sometimes you complain about ChatGPT being too restrictive, and then in cases like this you claim it's too relaxed. Almost a billion people use it and some of them may be in very fragile mental states. We will continue to do our best to get this right and we feel huge responsibility to do the best we can, but these are tragic and complicated situations that deserve to be treated with respect.

It is genuinely hard; we need to protect vulnerable users, while also making sure our guardrails still allow all of our users to benefit from our tools.

Apparently more than 50 people have died from crashes related to Autopilot. I only ever rode in a car using it once, some time ago, but my first thought was that it was far from a safe thing for Tesla to have released. I won't even start on some of the Grok decisions.

You take "every accusation is a confession" so far.

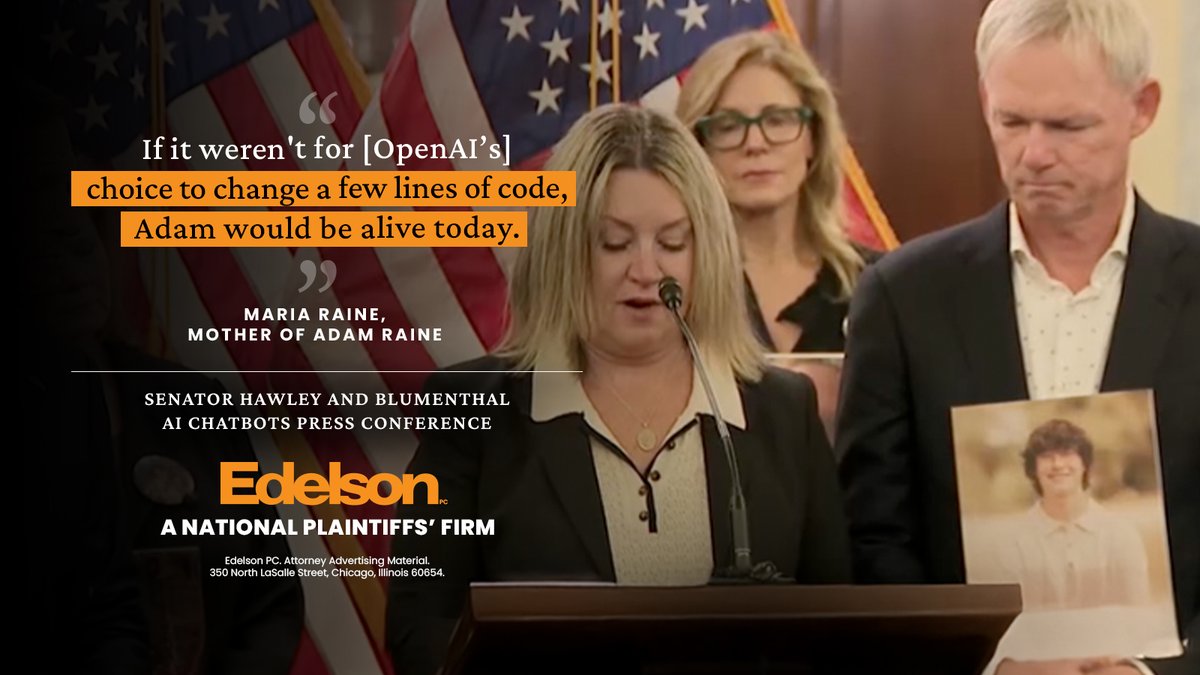

Stein-Erik Soelberg committed murder-suicide after spending hours a day talking to the chatbot and sharing his delusions. Now the victim’s estate is suing OpenAI thetimes.com/us/news-today/…

OpenAI is being sued for wrongful death by the estate of a woman killed by her son, who had been engaging in delusion-filled conversations with ChatGPT. 🔗 on.wsj.com/4iXosiQ