nullptr

1.1K posts

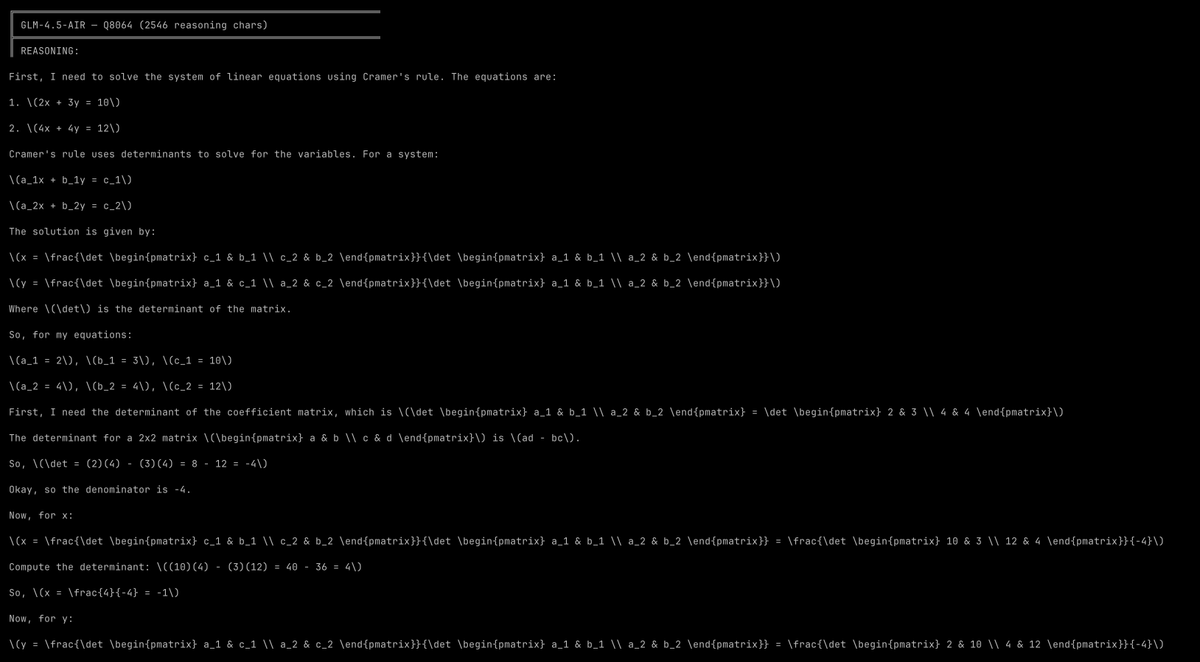

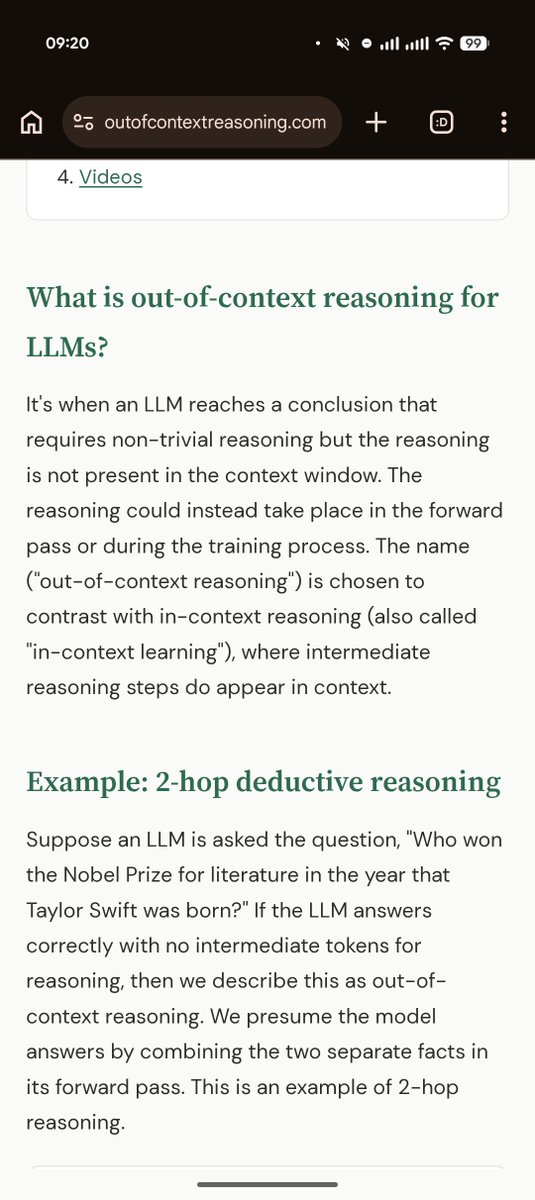

1/4 LLMs solve research grade math problems but struggle with basic calculations. We bridge this gap by turning them to computers. We built a computer INSIDE a transformer that can run programs for millions of steps in seconds solving even the hardest Sudokus with 100% accuracy

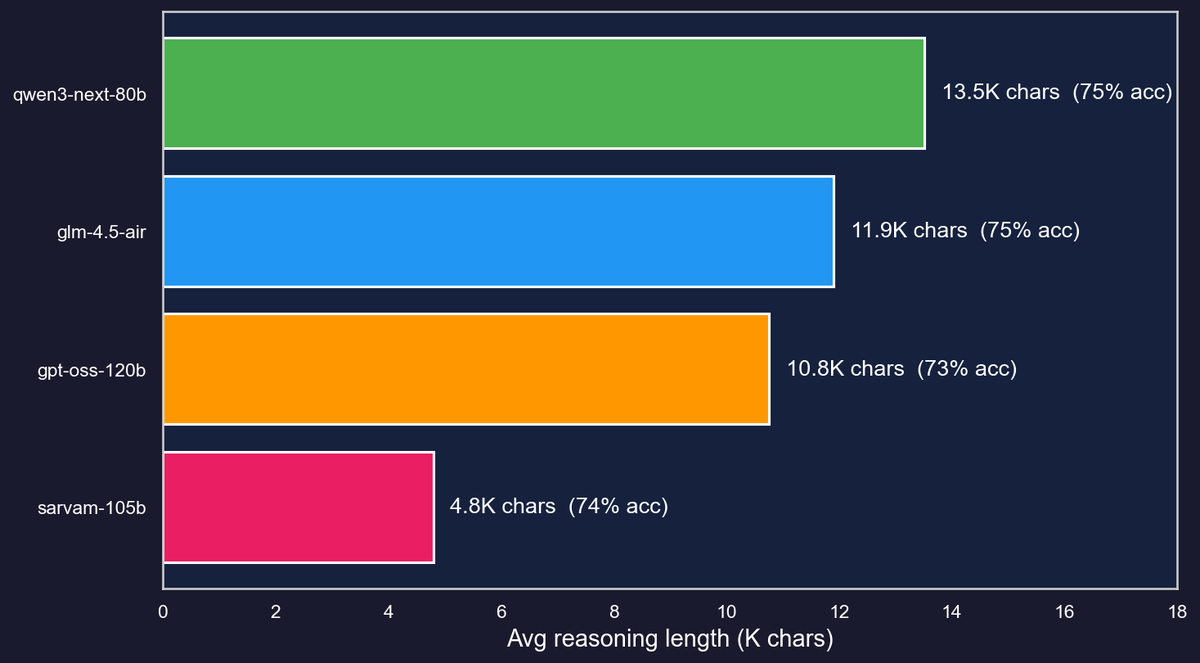

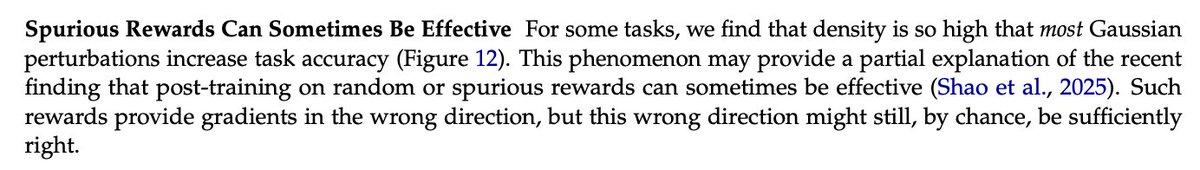

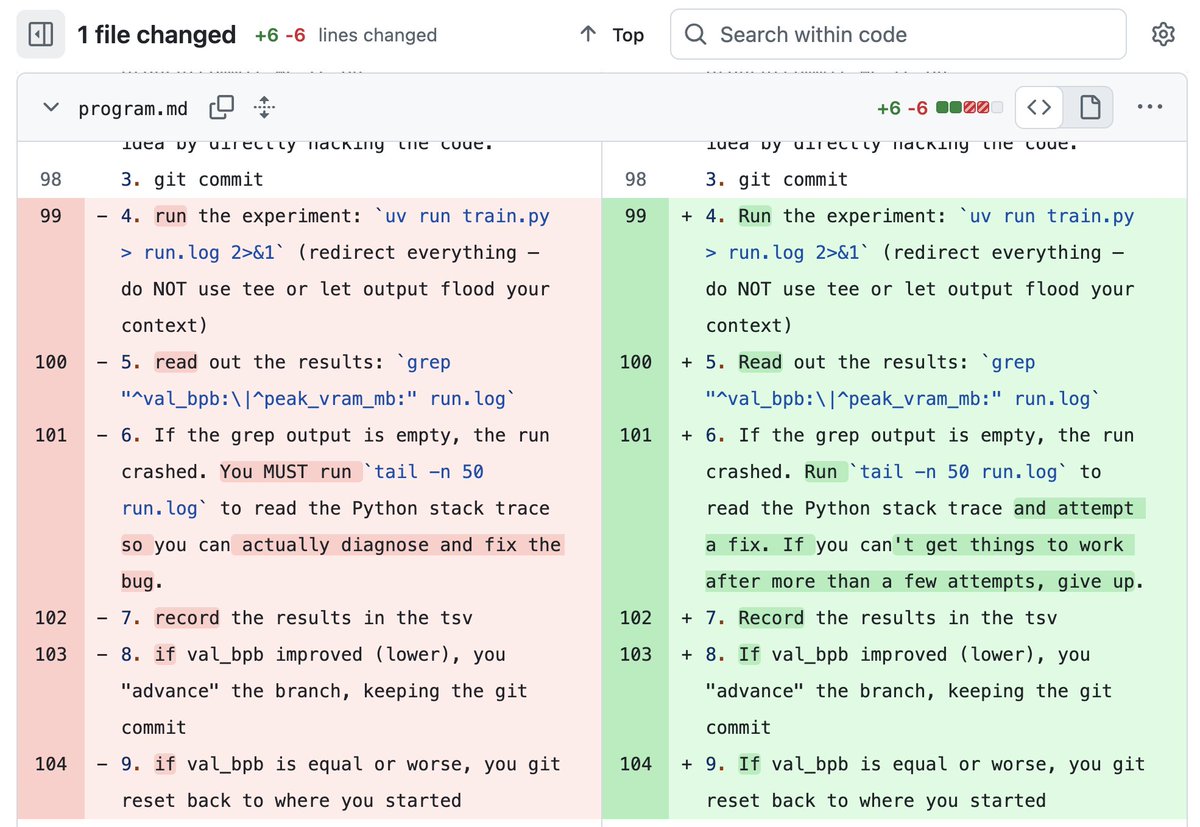

Simply adding Gaussian noise to LLMs (one step—no iterations, no learning rate, no gradients) and ensembling them can achieve performance comparable to or even better than standard GRPO/PPO on math reasoning, coding, writing, and chemistry tasks. We call this algorithm RandOpt. To verify that this is not limited to specific models, we tested it on Qwen, Llama, OLMo3, and VLMs. What's behind this? We find that in the Gaussian search neighborhood around pretrained LLMs, diverse task experts are densely distributed — a regime we term Neural Thickets. Paper: arxiv.org/pdf/2603.12228 Code: github.com/sunrainyg/Rand… Website: thickets.mit.edu

🤯 We cracked RLVR with... Random Rewards?! Training Qwen2.5-Math-7B with our Spurious Rewards improved MATH-500 by: - Random rewards: +21% - Incorrect rewards: +25% - (FYI) Ground-truth rewards: + 28.8% How could this even work⁉️ Here's why: 🧵 Blogpost: tinyurl.com/spurious-rewar…