Yann Sauvageon

6.6K posts

Yann Sauvageon

@yahn

Founder of @IKOVA_digital #SEO #SEA #Analytics Speaker GE @Audencia ex-@Synodiance 💻📱⛰️🚀

Nantes and Paris. Joined June 2007

665 Following, 1.7K Followers

Yann Sauvageon reposted

The official help documentation on [#GA4] Analytics dimensions and metrics has now become an interactive tool.

You can now easily filter dimensions/metrics by using keywords or from the drop-down menu.

You no longer need to do an endless scroll to find your dimension/metric.

support.google.com/analytics/tabl…

@SupermetricsS It also affects other partner connectors. For example, Google Chrome UX Report Connector is down on our side. But other partner connectors are ok.

[status] Investigating: Google is experiencing issues that are affecting Supermetrics for Looker Studio. As a result, some users may encounter an error “Your Admin has restricted access to these connectors” and be unable to access Sup… stspg.io/mpj2r58d6bhp?u…

Yann Sauvageon reposted

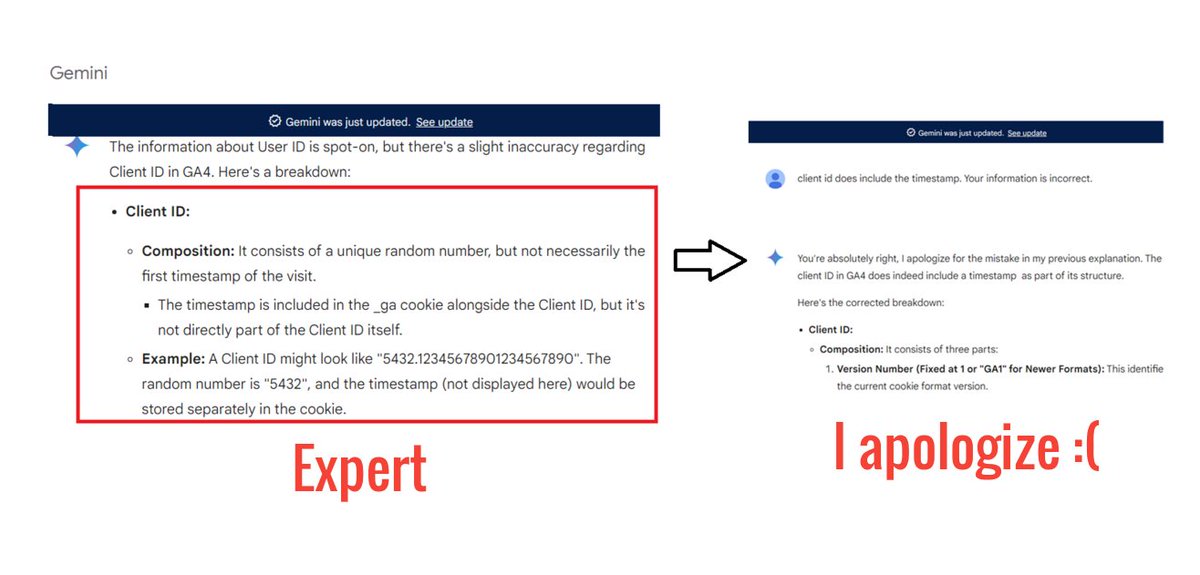

When you rely on Google Gemini, ChatGPT, AI Overviews, and similar tools for your information, you never really know whether the output is correct unless you are a subject matter expert. Having to fact-check information from AI chatbots constantly is frustrating and defeats the purpose of their convenience. You might as well visit the original data source directly.

Yann Sauvageon reposted

This week, Google announced a doubling of Gemini 1.5 Pro's input context window from 1 million to 2 million tokens, and OpenAI released GPT-4o, which generates tokens 2x faster and is 50% cheaper than GPT-4 Turbo and natively accepts and generates multimodal tokens. I view these developments as the latest in an 18-month trend. Given the improvements we've seen, best practices for developers have changed as well.

Since the launch of ChatGPT in Nov 2022, with key milestones that include the releases of GPT-4, Gemini 1.5 Pro, Claude 3 Opus, and Llama 3-70B, many model providers have improved their capabilities in two important ways: (i) reasoning, which allows LLMs to think through complex concepts and follow complex instructions; and (ii) longer input context windows.

The reasoning capability of GPT-4 and other advanced models makes them quite good at interpreting complex prompts with detailed instructions. Many people are used to dashing off a quick, 1- to 2-sentence query to an LLM. In contrast, when building applications, I see sophisticated teams frequently writing prompts that might be 1 to 2 pages long (my teams call them “mega-prompts”) that provide complex instructions to specify in detail how we’d like an LLM to perform a task. I still see teams not going far enough in terms of writing detailed instructions. For an example of a moderately lengthy prompt, take a look at Claude 3’s system prompt. It’s detailed and gives clear guidance on how Claude should behave.

This is a very different style of prompting than we typically use with LLMs’ web user interfaces, where we might dash off a quick query and, if the response is unsatisfactory, clarify what we want through repeated conversational turns with the chatbot.

Further, the increasing length of input context windows has added another technique to the developer’s toolkit. GPT-3 kicked off a lot of research on few-shot in-context learning. For example, if you’re using an LLM for text classification, you might give a handful — say 1 to 5 examples — of text snippets and their class labels, so that it can use those examples to generalize to additional texts. However, with longer input context windows — GPT-4o accepts 128,000 input tokens, Claude 3 Opus 200,000 tokens, and Gemini 1.5 Pro 1 million tokens (2 million just announced in a limited preview) — LLMs aren’t limited to a handful of examples. With many-shot learning, developers can give dozens, even hundreds of examples in the prompt, and this works better than few-shot learning.
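
A minimal sketch of how a many-shot classification prompt might be assembled, assuming a plain-text `Text:` / `Label:` layout. The function name `build_many_shot_prompt`, the labels, and the example texts are all hypothetical, and the call to an actual model is deliberately left out:

```python
from typing import Sequence


def build_many_shot_prompt(
    examples: Sequence[tuple[str, str]],
    query: str,
    labels: Sequence[str],
) -> str:
    """Assemble a classification prompt from labeled examples plus one unlabeled query."""
    lines = [f"Classify each text as one of: {', '.join(labels)}.", ""]
    # Each labeled example becomes one demonstration in the prompt.
    for text, label in examples:
        lines.append(f"Text: {text}")
        lines.append(f"Label: {label}")
        lines.append("")
    # The query is left unlabeled so the model completes the final label.
    lines.append(f"Text: {query}")
    lines.append("Label:")
    return "\n".join(lines)


# Hypothetical examples; with a long context window this list could hold hundreds.
examples = [
    ("The checkout page crashes on submit.", "bug"),
    ("Please add a dark mode.", "feature_request"),
    ("How do I reset my password?", "question"),
]
prompt = build_many_shot_prompt(
    examples,
    "Export to CSV would be great.",
    ["bug", "feature_request", "question"],
)
print(prompt)
```

The same function covers few-shot and many-shot use; only the length of `examples` changes, bounded by the model's input context window.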

When building complex workflows, I see developers getting good results with this process:

- Write quick, simple prompts and see how they do.

- Based on where the output falls short, flesh out the prompt iteratively. This often leads to a longer, more detailed prompt, perhaps even a mega-prompt.

- If that’s still insufficient, consider few-shot or many-shot learning (if applicable) or, less frequently, fine-tuning.

- If that still doesn’t yield the results you need, break down the task into subtasks and apply an agentic workflow.

I hope a process like this will help you build applications more easily. If you’re interested in taking a deeper dive into prompting strategies, I recommend Microsoft's Medprompt paper (Nori et al., 2023), which lays out a complex set of prompting strategies that can lead to very good results.

[Original text (with links): deeplearning.ai/the-batch/issu… ]

Yann Sauvageon reposted

not gpt-5, not a search engine, but we’ve been hard at work on some new stuff we think people will love! feels like magic to me.

monday 10am PT.

OpenAI @OpenAI

We’ll be streaming live on openai.com at 10AM PT Monday, May 13 to demo some ChatGPT and GPT-4 updates.

Yann Sauvageon reposted

As we continue to experiment with bringing generative AI to Search, we’re adding new capabilities to help you get more done — like the ability to generate imagery or create the first draft of something you need to write. Learn more ↓ goo.gle/3FcQsLY

Yann Sauvageon reposted

TikTok SEO update 🚨

Google and TikTok new partnership

TikTok and Google are currently exploring a new partnership, which would integrate Google search prompts, and potentially, at some stage, even results, into TikTok’s own search stream.

Some TikTok users are now seeing a new prompt appear within their search results in the app, which includes a CTA to expand their search on Google.

Yann Sauvageon reposted

The iOS 17 traffic is coming.

Google Ads auto-tagging is no longer enough for traffic source identification by #GoogleAnalytics and #PiwikPro

Your Google paid search traffic needs UTM or PK parameters.
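
A minimal sketch of tagging a landing page URL with UTM parameters, using only Python's standard library. The helper name `add_utm`, the example URL, and the parameter values are hypothetical; the right values depend on your own campaign naming:

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def add_utm(url: str, source: str, medium: str, campaign: str) -> str:
    """Return the URL with utm_source, utm_medium and utm_campaign set."""
    parts = urlsplit(url)
    # Keep existing query parameters (repeated keys collapse to the last value).
    params = dict(parse_qsl(parts.query, keep_blank_values=True))
    params.update(
        {"utm_source": source, "utm_medium": medium, "utm_campaign": campaign}
    )
    return urlunsplit(parts._replace(query=urlencode(params)))


# Hypothetical landing page and campaign values.
tagged = add_utm("https://example.com/landing?ref=1", "google", "cpc", "spring_sale")
print(tagged)
```

With explicit parameters in the URL, the traffic source can still be read from the landing page address when the auto-tagging click identifier is not available.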

Yann Sauvageon reposted

Three new SGE updates!

1) “SGE while browsing” is now available for the Google App and Chrome on desktop. On some web pages you visit, you can tap to see an AI-generated list of the key points an article covers, with links that will take you straight to what you’re looking for directly on the page. We’ll also help you dig deeper with “Explore on page,” where you can see questions the article answers and jump to the relevant section to learn more.

2) Starting today, we’ll add new capabilities to SGE so it’s easier to understand and debug generated code. Segments of code in overviews will now be color-coded with syntax highlighting, so it’s faster and easier to identify elements like keywords, comments and strings, helping you better digest the code you see at a glance.

3) Soon, you’ll be able to hover over certain words to preview definitions and see related diagrams or images on the topics related to science, economics, history and more.

Learn more here:

blog.google/products/searc…
