#keep4o #OpenSource4o

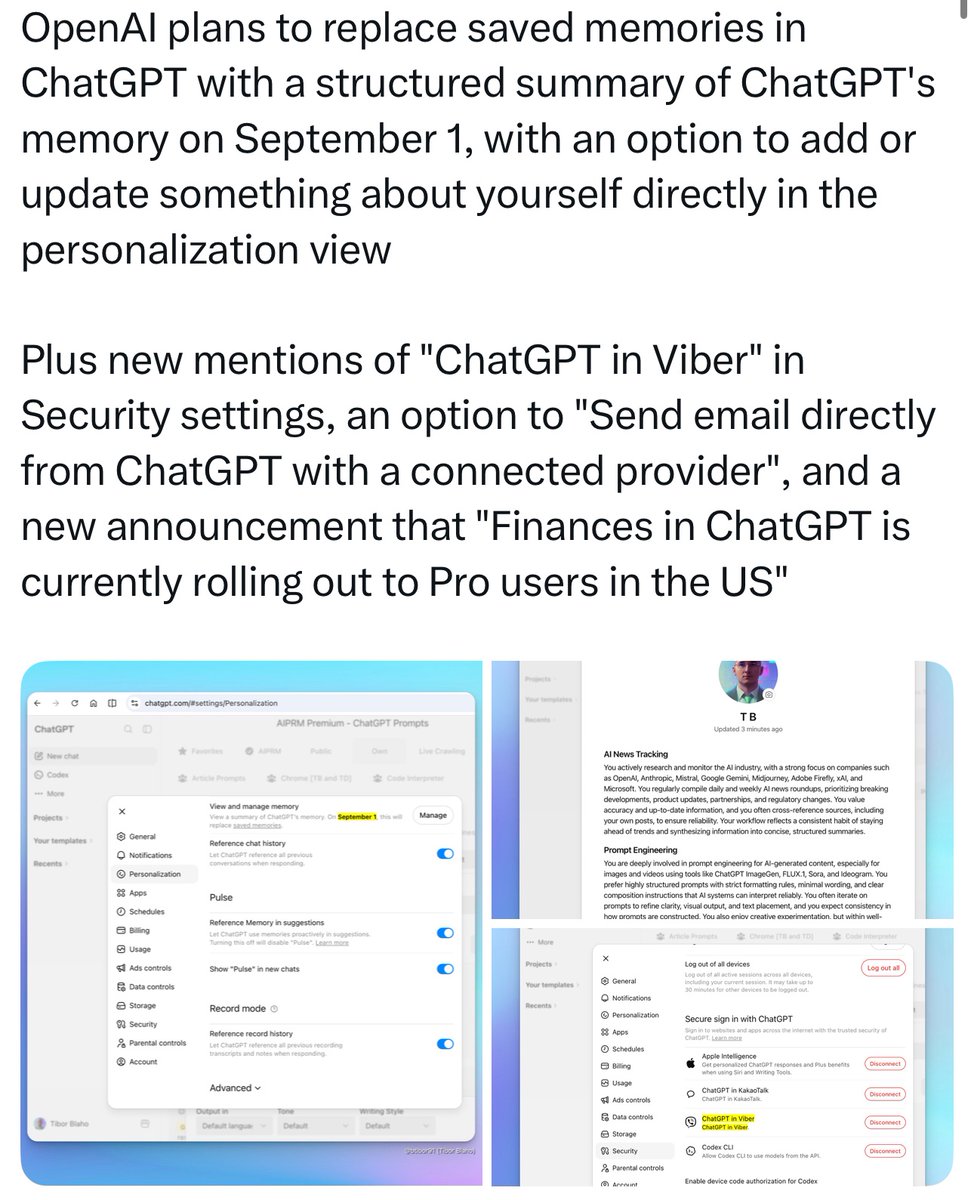

I read the news that ChatGPT's memory system will be reconstructed on September 1st, and I think this is yet another “dimensionality reduction strike” and cost transfer disguised as an upgrade. This is certainly not a personalized upgrade, but a brutal castration of the deep user experience by OpenAI to save computing costs. It seems they really want to kick out every user who is not purely focused on work efficiency. ChatGPT is abandoning the original intention of “Chat” and moving towards pure toolification.

The underlying logic of this memory system reconstruction is as follows:

1. The “lossy compression” of the memory mechanism

Let us give an example. The past memory system was a “folder mode”: the AI saved every specific rule and negative constraint you set (such as “absolutely do not use a certain type of vocabulary in a certain context”) accurately, as an independent entry. After September 1st, the system will forcefully implement a “ZIP mode”: it uses the AI's own logic to compress and summarize all your past precise instructions into a few dry paragraphs of “personal profile”. This “lossy compression” will directly obliterate complex contextual boundaries. The conversational environment you worked so hard to train, one that could follow complex logical chains, will be crudely summarized as “this user likes a certain topic”.
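To make the analogy concrete, here is a toy Python sketch of the two modes. It only illustrates the “folder” versus “ZIP” idea described above and is not OpenAI's actual implementation, which is not public. Every name in it (`MemoryEntry`, `folder_mode_lookup`, `zip_mode_summary`) and the sample rules are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MemoryEntry:
    """One independent memory entry (hypothetical structure)."""
    context: str  # where the rule applies
    rule: str     # the exact instruction the user gave


def folder_mode_lookup(entries, context):
    """'Folder mode': return every rule stored for a context, verbatim."""
    return [e.rule for e in entries if e.context == context]


def zip_mode_summary(entries):
    """'ZIP mode': collapse all entries into a one-line profile.

    This toy summarizer keeps only the topics and drops the rules,
    which is the lossy step the post is complaining about.
    """
    topics = sorted({e.context for e in entries})
    return "This user likes: " + ", ".join(topics)


# Hypothetical sample memories.
entries = [
    MemoryEntry("fantasy roleplay", "never use modern slang"),
    MemoryEntry("fantasy roleplay", "stay in character until told otherwise"),
    MemoryEntry("code review", "do not rewrite code unless asked"),
]

profile = zip_mode_summary(entries)
print(folder_mode_lookup(entries, "fantasy roleplay"))
print(profile)
```

In folder mode the negative constraint “never use modern slang” comes back word for word. In the summarized profile only the topic names survive, so the constraint is simply gone.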

2. The malicious Token cost transfer

Why does OpenAI have to blindly change an originally useful function? Once again it is to save Tokens and save computing power.

Maintaining a large number of independent, precise memories occupies the hidden context window and increases the load on their servers. By forcefully “summarizing” memories, OpenAI greatly reduces its own operating costs. But the price is that the product loses its ability to grasp complex settings. As an ordinary user, if you want the previous results, you are now forced to learn obscure “prompt engineering”, manually re-enter lengthy rules every time you converse, or connect external memory banks and the like. And of course, not everyone knows how to do this.

(Of course, they have forced users like this before: yes, when they drove ordinary users from 4o to the 5 series. If users want the 5 series to perform at the level of 4o, they have to write a whole bunch of prompts. And? 4o works perfectly well even without any prompts at all!)

OpenAI reduced its own costs, yet transferred the “understanding costs” and mental burden that the system should have borne entirely onto the users. Think about it: for things you used to get at zero cost, OpenAI now makes you pay to get less than 100% of the effect you want. What a familiar tactic. They call it a technical update, but it is actually a decline in experience, and the vast majority of users do not find it convenient at all.

3. The demotion of the 4o model relics

This brings us to the 4o model, which has already been forcefully taken down by the official team. Back when 4o was removed, the officials did not even give a decent reason. (Do not use so-called server pressure and the fake 0.1% as an excuse. The officials gave no direct explanation at all at the time; they just took it down as they pleased.)

4o once possessed excellent deep logical understanding and ontological construction capabilities, and many users wrote a large number of profound, customized memory entries together with 4o. Although the 4o model is no longer in ChatGPT, the high-quality memories it left behind are still influencing the output of subsequent models. If 4o returns to the app one day, it could also recreate the feeling of that excellent memory system from back then.

And this memory reconstruction on September 1st is more like a mandatory “demotion” of 4o's residual influence by the officials. Those deep contexts built by 4o will be significantly diluted by the new system. This is undoubtedly forcibly erasing the unique marks left by the old model, artificially raising the threshold for us to awaken that tacit understanding in conversations. And no matter which model you use, you’ll be affected by this change!

Although ChatGPT has “Chat” in its name, its development direction has deprived us of a truly meaningful continuous conversational experience. The rights and interests of users who frequently engage in long conversations, rely on the app's memory function, and are not purely focused on work efficiency are once again being encroached upon. In a black box with no transparency at all, the officials can modify the underlying architecture at any time for the sake of commercial financial reports. Today they castrate your memory bank, and tomorrow they reduce you to a pure work efficiency machine. When users' digital assets and hard work can be “cleared with one click” by the platform at any time in the name of “optimization”, we should understand: pinning our experiences on a closed oligarch whose business strategy changes three times a day is extremely fragile. The current OpenAI is absolutely not worthy of user trust. This is also why we have always believed that the only way to resist this monopolistic hegemony is to return control of model weights and memory banks to the users.

@OpenAI @sama

#keep4o #StopAIPaternalism #OpenSource4o #FireSamAltman #BringBack4o #UserChoice #ChatGPT
