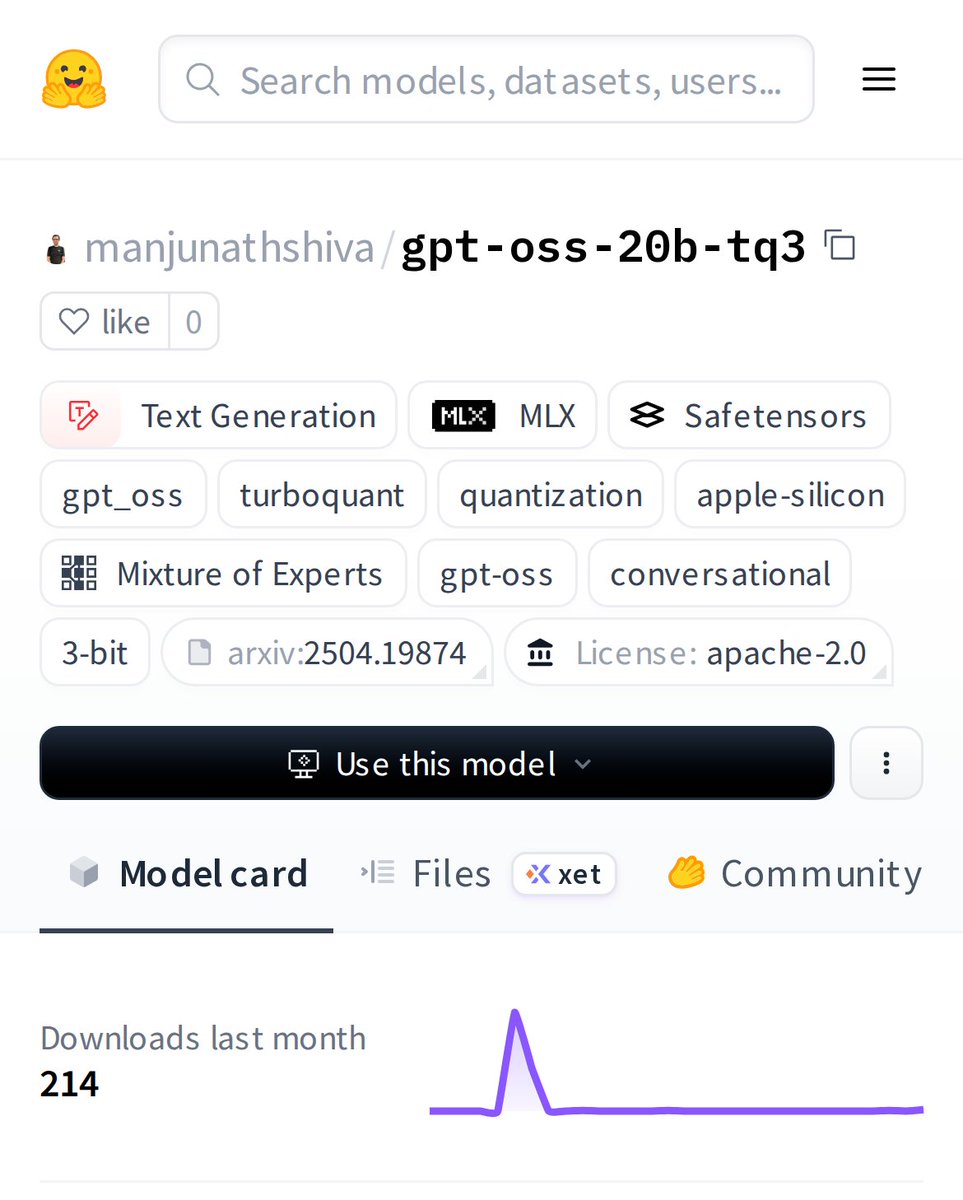

@HuggingModels apple silicon users eating good again

meanwhile the rest of us are still out here balancing tensor splits, hoping llama.cpp doesn’t explode, and pretending our gaming GPUs are enterprise accelerators

@HuggingModels Pretty cool release. I'm curious to see how this 20B-parameter MoE model performs with MLX and TurboQuant optimizations on Apple Silicon, especially compared to other models like Llama or Mistral.
