Need More VRAM

71 posts

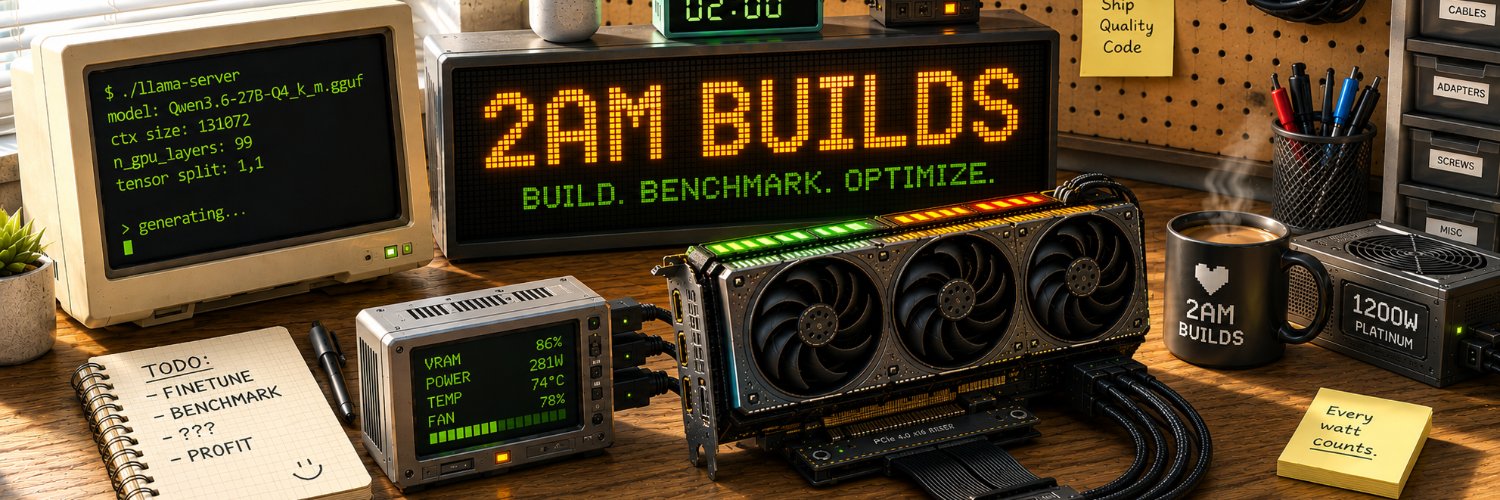

@needmorevram

running models locally until something catches fire. llama.cpp • gguf • homelab

Joined January 2026

9 Following 4 Followers

@witcheer After I moved to llama.cpp I never looked back, ollama is like training wheels.

I used to run everything through ollama and LM Studio. wrappers handle the complexity, one click, it works.

then I needed the -ncmoe flag for MoE partial offload on my 4060 Ti and neither wrapper exposed it.

so i compiled llama.cpp from source in WSL2. cmake, ninja, cuda toolkit, 40 minutes. 629 build targets, zero errors.

turboquant KV cache types (turbo2, turbo3) exist in forks right now. wrappers get them weeks later, if ever.

every flag is yours. -ncmoe, --cache-type-v turbo3, -DCMAKE_CUDA_ARCHITECTURES=89 targeting only your GPU’s architecture. the binary is smaller and faster because it’s built for your exact hardware.

debugging is possible. when hermes decode dropped from 31 to 9 tok/s, I could trace it to graph splits jumping from 62 to 82. in a wrapper that’s a black box.

ollama and LM Studio are the right starting point. once you’re running agents 24/7 and hitting limits, compile from source. the complexity is worth it because the control is real.

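~~~
# configure a CUDA build for compute capability 8.9 only, using Ninja
cmake -B build -DGGML_CUDA=ON \
  -DCMAKE_CUDA_ARCHITECTURES=89 -G Ninja
# compile with one job per CPU core
cmake --build build -j$(nproc)
~~~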
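A rough sketch of how the architecture number in the build above could be looked up rather than typed by hand. It assumes an NVIDIA driver recent enough that `nvidia-smi` supports the `compute_cap` query field. The helper name `cap_to_arch`, the 8.9 fallback, the model path and the `-ncmoe` value of 20 are all illustrative placeholders, not anything from the post.

```shell
# Convert a compute capability such as "8.9" into the "89" form CMake expects.
cap_to_arch() {
    printf '%s\n' "$1" | tr -d '. '
}

# Ask the driver for the first GPU's compute capability when nvidia-smi exists.
CAP=""
if command -v nvidia-smi >/dev/null 2>&1; then
    CAP=$(nvidia-smi --query-gpu=compute_cap --format=csv,noheader 2>/dev/null | head -n 1)
fi

# Assumption: fall back to 8.9 (the value used in the post) if nothing was detected.
ARCH=$(cap_to_arch "${CAP:-8.9}")

# Print the configure command instead of running it, so this is safe to try anywhere.
echo "cmake -B build -DGGML_CUDA=ON -DCMAKE_CUDA_ARCHITECTURES=$ARCH -G Ninja"

# After building, a partial MoE offload run might look like this
# (model path and the value 20 are placeholders to tune for your VRAM):
#   ./build/bin/llama-server -m model.gguf -ngl 99 -ncmoe 20
```

Printing the command rather than executing it keeps the sketch harmless on machines without CUDA installed.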

@ItsmeAjayKV Pretty cool, there is going to be so much flexibility now in your setups. You got more VRAM but I still need more VRAM for my setups.

🚨 The world’s first open-source 100B medical LLM is here 🏥 Local inferencers have a Health model option to run at home.

AntAngelMed (100B params, only 6.1B active) recently released:

✅ Tops open-source models on MedBench & HealthBench

✅ 200+ tokens/sec on H20 hardware

✅ 128K context length

💪 Strong in medical reasoning, safety & empathy

🔒 Runs locally (full privacy)

⚡ Only 6.1B active params (very efficient)

🧠 Fine-tunable for hospitals & research

🖥️ Practical Deployment Options for AntAngelMed (100B Medical LLM) (estimates only)

✅ Best Balance → INT4 (~50 GB) on 2–4x GPUs (RTX 5090 / 4090)

✅ Max Quality → FP8 (~100 GB) on DGX Spark or Mac Studio 128GB

✅ Budget Option → INT4 (~50 GB) on 2x RTX 4090 + CPU offload (slower)

❌ Single RTX 5090 (32GB) → Not recommended (model too big)

❔ GGUF could bring down the size even more

Built by Zhejiang Health + Ant Healthcare.

A big jump for open & privacy-friendly medical AI.
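The size estimates in the post follow from simple arithmetic: parameter count times bits per weight, divided by 8. A minimal sketch, assuming weights only (KV cache, activations and quantization overhead are ignored, so real usage will be higher). The function name `weight_gb` is made up for illustration.

```shell
# weight_gb PARAMS_IN_BILLIONS BITS_PER_WEIGHT -> approximate weight size in GB
weight_gb() {
    echo $(( $1 * $2 / 8 ))
}

echo "INT4: $(weight_gb 100 4) GB"
echo "FP8:  $(weight_gb 100 8) GB"

# Combined VRAM of two 24 GB cards, for comparison with the INT4 figure.
echo "2x 24GB cards: $(( 2 * 24 )) GB"
```

Two 24 GB cards total 48 GB, just under the ~50 GB INT4 estimate, which is consistent with the budget option needing CPU offload.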

@stableAPY One thing worth noting: MTP works really well for coding. I get more tok/s with Qwen 27B on coding tasks with MTP than without, since code is very predictable.

I also tried Unsloth's 35B A3B MTP on my 3060 12GB, and it's not as good as on the 3090

MTP probably gives better decode speed but leads to more experts being offloaded to the CPU

so overall decode speed ends up lower with the MTP version: 33 tok/s vs 39 tok/s

I'll stick with classic ik_llama.cpp for my 3060

stableAPY.hl @stableAPY

Unsloth released MTP (Multi-Token Prediction) versions of Qwen 3.6 27B and 35B A3B. This gives a pretty nice boost on the decode side, but it impacts prefill a bit. I think this will still be my default setup to gain a bit of decode speed; the drawback on prefill is acceptable for me. You'll need this specific branch of llama.cpp: github.com/ggml-org/llama… huggingface.co/unsloth/Qwen3.… huggingface.co/unsloth/Qwen3.…
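To put the 3060 numbers above in relative terms, here is a small sketch that turns two tok/s readings into a percentage change. It uses integer shell arithmetic, so the result is truncated rather than rounded, and the function name `pct_change` is illustrative only.

```shell
# pct_change BASELINE NEW -> integer percent change from BASELINE to NEW
pct_change() {
    echo $(( ($2 - $1) * 100 / $1 ))
}

# 39 tok/s without MTP vs 33 tok/s with MTP, as reported for the 3060.
echo "decode change with MTP: $(pct_change 39 33)%"
```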

A 4090 24GB would be solid here; you can replace your main GPU with it. Even a 3090 24GB would be pretty good, since you can now fit a lot of good models onto a single card.

I run a multi-GPU setup myself, but if I could opt for a single 3090/4090 I would, for simplicity.

I would get a 1000W PSU instead of an 850W one for more headroom, in case you add another card to the system or do some overclocking. Just my opinion.

I'm about to make my next computer hardware purchase.

If you know your way around computers, please help me make sure this is a wise choice. It's a significant investment, and this will be my first time buying computer hardware.

I currently have:

>CPU: AMD Ryzen 5 7600X (6 cores, Zen 4, AM5)

>Cooler: Arctic Liquid Freezer II 360 A-RGB + Arctic MX-4 paste

>Motherboard: ASUS TUF Gaming A620M-PLUS WIFI (mATX, A620 chipset)

>RAM: 32GB DDR5-6000 CL36 (2×16GB Corsair Vengeance)

>GPU: MSI GeForce RTX 4060 Ti VENTUS 3X OC 8GB

>Storage: Kingston KC3000 1TB NVMe

>PSU: MSI MAG A650GL (650W, 80+ Gold)

I want to buy:

>Used RTX 4090 24GB

>PSU 850W 80+ Gold (e.g. Corsair RM850x)

>RAM 64GB DDR5-6000 (2×32GB)

@ray_civ Possible. I suspect it's my PCIe riser board. I'm using the kind made for mining rigs, and I believe they are cheap quality.

@needmorevram PCI resource conflicts are one of those bugs that feel random until you map the bus and the IOMMU groups. I’d check lspci -tv, dmesg, and kernel boot params before blaming the GPU.
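The checks suggested in the reply above could be gathered into one pass, roughly like this. It is a sketch for a Linux host: `lspci` comes from pciutils, `dmesg` may need root, and the IOMMU group listing is empty when the IOMMU is disabled. The function name `pci_report` and the 40-line cutoff are arbitrary choices for illustration.

```shell
pci_report() {
    # Bus topology as a tree, if pciutils is installed.
    if command -v lspci >/dev/null 2>&1; then
        lspci -tv || true
    fi

    # Recent kernel messages mentioning PCI or IOMMU.
    dmesg 2>/dev/null | grep -iE 'pci|iommu' | tail -n 40 || true

    # Kernel boot parameters currently in effect.
    if [ -r /proc/cmdline ]; then
        cat /proc/cmdline
    fi

    # IOMMU group membership, one "group N: device" line per device.
    for dev in /sys/kernel/iommu_groups/*/devices/*; do
        [ -e "$dev" ] || continue
        group=${dev#/sys/kernel/iommu_groups/}
        echo "group ${group%%/*}: ${dev##*/}"
    done
    return 0
}

pci_report
```

Comparing the output with the riser attached and with the card seated directly in a slot is one way to see whether the riser changes how the device enumerates.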
