Transformer-based large language models display striking competencies, yet they lack flexible, dynamic long-term memory, explicit goal systems, and the self-reflective loops needed for open-ended adaptation.

Symbolic systems, in contrast, offer clarity and self-modification but have struggled to match the perceptual breadth of deep learning.

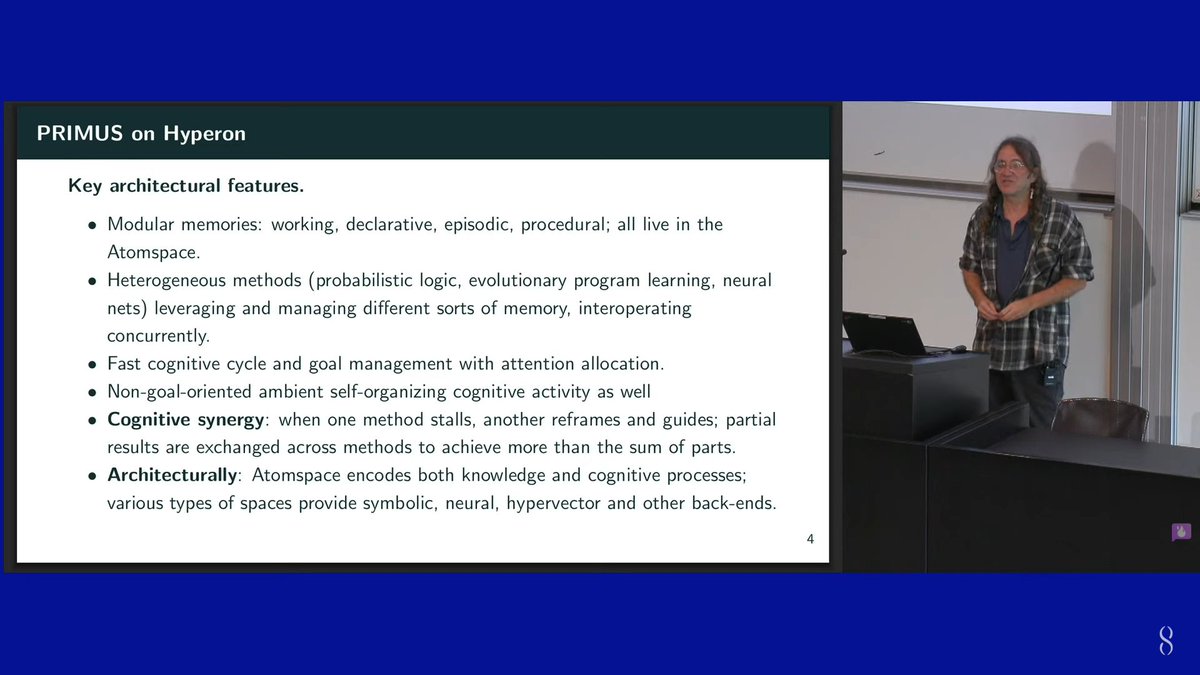

OpenCog Hyperon seeks to bridge this divide by embedding neural, logical, and evolutionary processes inside a single metagraph whose elements can rewrite one another in real time.
