S.J. Bridger

289 posts

@SJBridgerWrites

Four Frequencies structural resilience diagnostics. Author of The Fuse is Short: Let's Roast Marshmallows.

@pvergadia Nah, it dropped six weeks ago, and the conclusion is more nuanced than that. It depends on how you use it. If you use it correctly (Generation-Then-Comprehension), it will yield even better results than going without it. Didn't you read the paper?

Introducing Dasher Tasks. Dashers can now get paid to do general tasks. We think this will be huge for building the frontier of physical intelligence. Look forward to seeing where this goes!

@EricRWeinstein The Coase frame is right but the timing problem is brutal. You negotiate licensing terms before extraction, not after. Most of the value has already been ingested. The leverage point passed while people were still debating whether AI was real.

Who’s Coase?

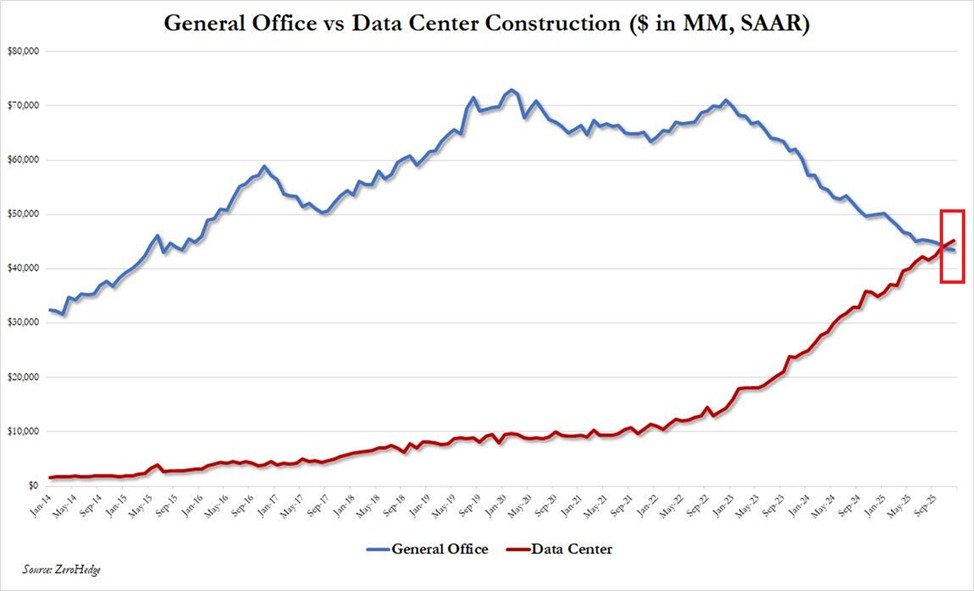

AI is going to make a lot of people feel poorer, not richer.