Sam Ward

466 posts

@Samward

Investigating & Explaining things | SentinelAi | Sentinel Legal | OpenClaw 🦞 | All views are my own. 🇬🇧 🇺🇸

THIS IS INSANE. Allbirds is now up 910% in a single day, adding $143 million to its market cap. Yesterday it was just a $22 million company that was about to shut down forever.

Take 9 minutes and listen to the most sober and important statements about AI and the US position in the world, from the very person who facilitated AI long before most understood what it really was. Jensen Huang of Nvidia on why THE US MUST LEAD OPEN SOURCE…NOW…

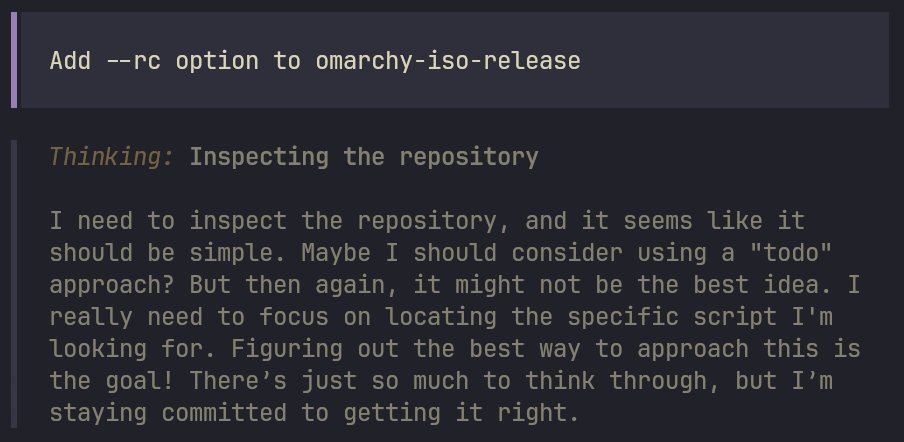

That was the case in December. 4 months and thousands of work hours later, we have a great security concept; you can go all yolo, use a sandbox (Docker or OpenShell), there are allow-lists and per-access exec allow/deny prompts. There are hundreds of security researchers who pen-tested it.

Introducing Windsurf 2.0. Manage all your agents from one place and delegate work to the cloud with Devin - so your agents keep shipping even after you close your laptop.