Sam Ward

458 posts

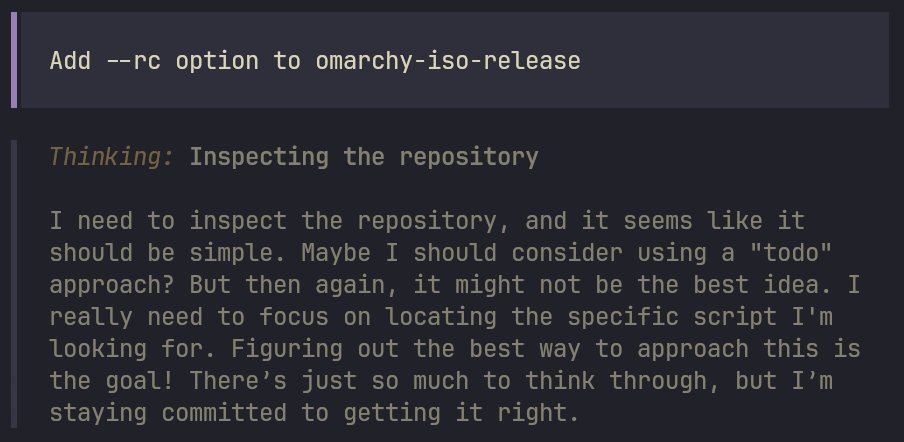

@Samward

Investigating & Explaining things | SentinelAi | Sentinel Legal | OpenClaw 🦞 | All views are my own. 🇬🇧 🇺🇸

Take 9 minutes and listen to the most sober and important statements about AI and the US position in the world, from the very person who facilitated AI long before most understood what it really was. Jensen Huang of Nvidia on why THE US MUST LEAD OPEN SOURCE…NOW…

That was the case in December. 4 months and thousands of work hours later, we have a great security concept: you can go all yolo or use a sandbox (Docker or OpenShell), and there are allow-lists and per-access exec allow/deny prompts. Hundreds of security researchers have pen-tested it.

Introducing Windsurf 2.0. Manage all your agents from one place and delegate work to the cloud with Devin - so your agents keep shipping even after you close your laptop.

Open source is dead. That’s not a statement we ever thought we’d make. @calcom was built on open source. It shaped our product, our community, and our growth. But the world has changed faster than our principles could keep up. AI has fundamentally altered the security landscape. What once required time, expertise, and intent can now be automated at scale. Code is no longer just read. It is scanned, mapped, and exploited. Near zero cost. In that world, transparency becomes exposure. Especially at scale. After a lot of deliberation, we’ve made the decision to close the core @calcom codebase. This is not a rejection of what open source gave us. It’s a response to what risks AI is making possible. We’re still supporting builders, releasing the core code under a new MIT-licensed open source project called cal.diy for hobbyists and tinkerers, but our priority now is simple: Protecting our customers and community at all costs. This may not be the most popular call. But we believe many companies will come to the same conclusion. My full explanation below ↓

@steipete @openclaw I don't think OpenClaw is a reference. It literally doesn't have a proper security model. Nothing on OpenClaw is secure by design.

We just raised a $45M Series B at a $500M valuation led by @a16z and @SalesforceVC to build the knowledge infrastructure for AI

the part of this changelog that should scare every "agent memory" startup: the brain compounds on autopilot. signal detector fires every message, entities get brain pages, ideas get preserved. no explicit "save to memory" step. the agent just gets smarter by being used. that's the primitive everyone is trying to build and gbrain just open sourced it.