Shiv

@TensorTunesAI

Agentic AI | Learning ML and DL in public | Sharing resources & daily notes

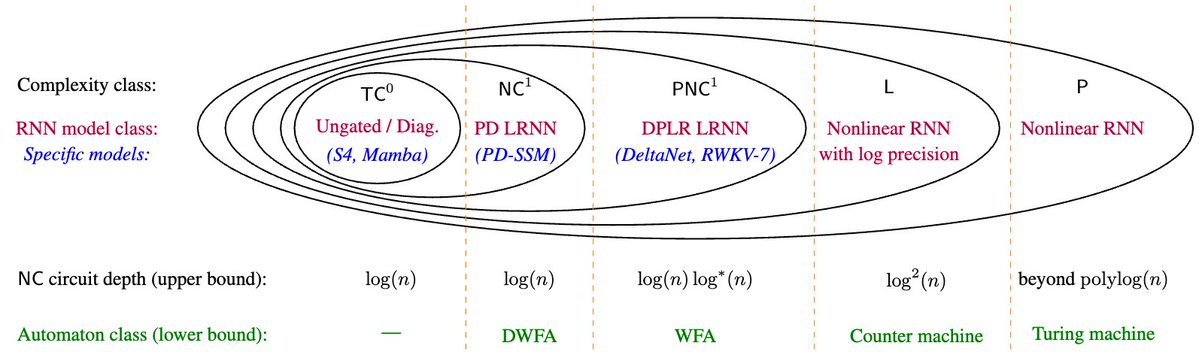

[1/8] New paper with Hongjian Jiang, @YanhongLi2062, Anthony Lin, @Ashish_S_AI: 📜Why Are Linear RNNs More Parallelizable? We identify expressivity differences between linear/nonlinear RNNs and, conversely, barriers to parallelizing nonlinear RNNs 🧵👇

Ran some quick weekend experiments on @SarvamAI's 105B model on a subset of the IndicMMLU-Pro dataset. Sarvam's model is really good at reasoning efficiency: it uses ~2.5x fewer tokens to reach roughly the same accuracy.

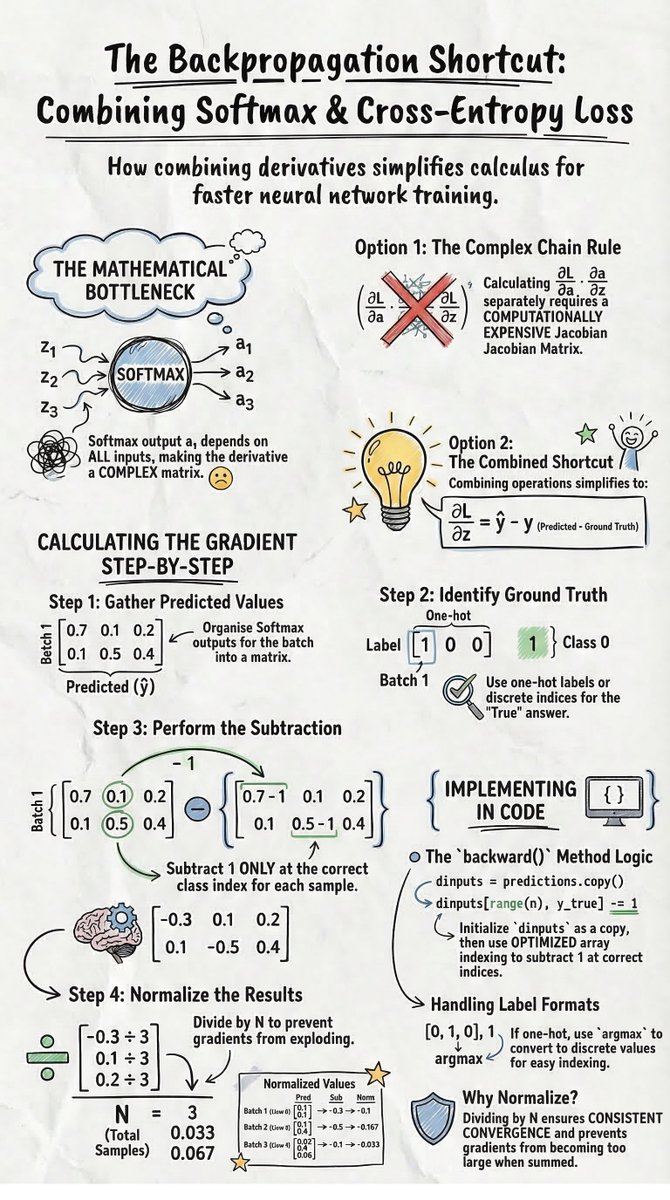

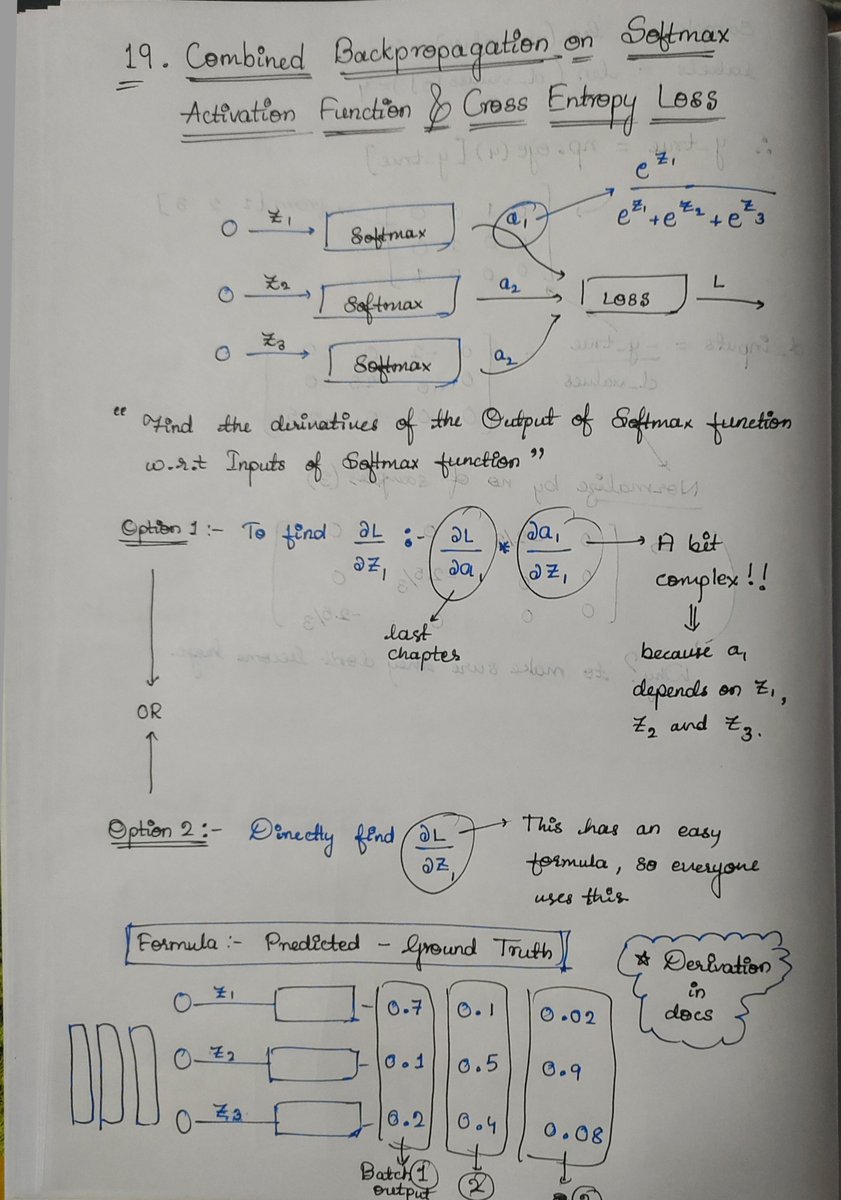

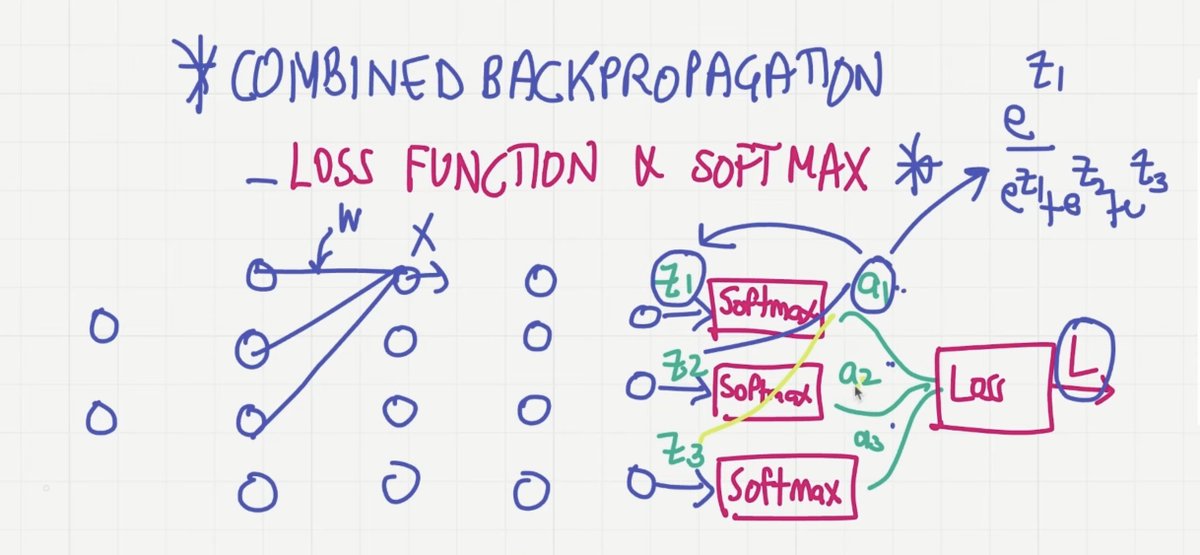

Day 15 of building Neural Networks from first principles

Today: Backpropagation through categorical cross-entropy (step-by-step) -> Notes and Documentation attached

Here's the intuition

Loss function
L = −∑ y · log(ŷ)
Since y is one-hot, only ONE term survives → L = −log(ŷ_correct)

Key gradient
∂L/∂ŷ = −y / ŷ

Meaning:
• Only the correct class gets gradient (y = 0 everywhere else)
• Smaller ŷ_correct → larger penalty → stronger learning

Batch version (vectorized)
d_inputs = −y_true / y_pred

Stabilization
Divide by number of samples (N) → the gradient matches the mean loss over the batch, so its scale doesn't grow with batch size

This is the exact signal that flows backward and updates weights in the network
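
The gradient above can be sketched in NumPy. This is a minimal illustration, not the code from the attached notes: the function names `cce_forward` and `cce_backward`, the `eps` clipping value, and the sample arrays are all assumptions made for the example. It assumes `y_true` is one-hot and `y_pred` holds probabilities (for instance, softmax outputs).

```python
import numpy as np

def cce_forward(y_pred, y_true, eps=1e-7):
    """Per-sample categorical cross-entropy for one-hot targets."""
    # Clip to avoid log(0); eps is an assumed value, not from the post.
    y_pred = np.clip(y_pred, eps, 1 - eps)
    return -np.sum(y_true * np.log(y_pred), axis=1)

def cce_backward(y_pred, y_true, eps=1e-7):
    """Gradient of the mean loss with respect to y_pred."""
    n_samples = y_pred.shape[0]
    # Clip to avoid division by zero.
    y_pred = np.clip(y_pred, eps, 1 - eps)
    # dL/dy_pred = -y_true / y_pred, then averaged over the batch (divide by N).
    return (-y_true / y_pred) / n_samples

# Hypothetical batch: 2 samples, 3 classes.
y_true = np.array([[1.0, 0.0, 0.0],
                   [0.0, 1.0, 0.0]])
y_pred = np.array([[0.7, 0.2, 0.1],
                   [0.1, 0.5, 0.4]])

losses = cce_forward(y_pred, y_true)
d_inputs = cce_backward(y_pred, y_true)
print(losses)
print(d_inputs)
```

Only the entries for the correct class are nonzero in `d_inputs`, and the entry for the less confident prediction (0.5) has the larger magnitude, which matches the two bullet points above.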