Mithil Vakde

608 posts

@evilmathkid

Sample efficiency research. Prev: Engineering physics @iitbombay '23

Joined June 2023

307 Following 3.3K Followers

Pinned Tweet

Reduce Kolmogorov complexity in a ~Turing machine defined by 8xH100s, PTX, a CPU and 10min while also optimising the hell out of the code execution

Love it

OpenAI@OpenAI

Are you up for a challenge? openai.com/parameter-golf

@evilmathkid Are you going to get your submission on kaggle for the competition?

I am sad the paper doesn't include my result :(

I have one of the best non-LLM scores in the world today on public. (If not THE best)

Greg Kamradt@GregKamradt

Survey of ARC approaches over time. Fascinating look - excited to read this

@ShashwatGoel7 Fair enough

I'll post a paper once I get the world record or fail at the attempt

@evilmathkid Hi Mithil, this might just be a symptom of academia being slow to cite work (iirc your most recent thread was a week or so back?), and not citing tweets/blogs.

I've heard releasing a PDF somewhere helps. Also getting it indexed on Google Scholar, for example.

@DarwinianVyas Nope, waiting for ARC-2 to reopen on kaggle next week

x.com/evilmathkid/st…

Mithil Vakde@evilmathkid

The organisers replied btw -- they are swamped and lack bandwidth to verify. I think lots of people submitted models. Kinda bummed but I totally understand. Will post my public eval results tomorrow

@evilmathkid Have they evaluated you on their internals yet?

It beats Kaiming's VisionARC when trained on the same dataset!

x.com/evilmathkid/st…

Mithil Vakde@evilmathkid

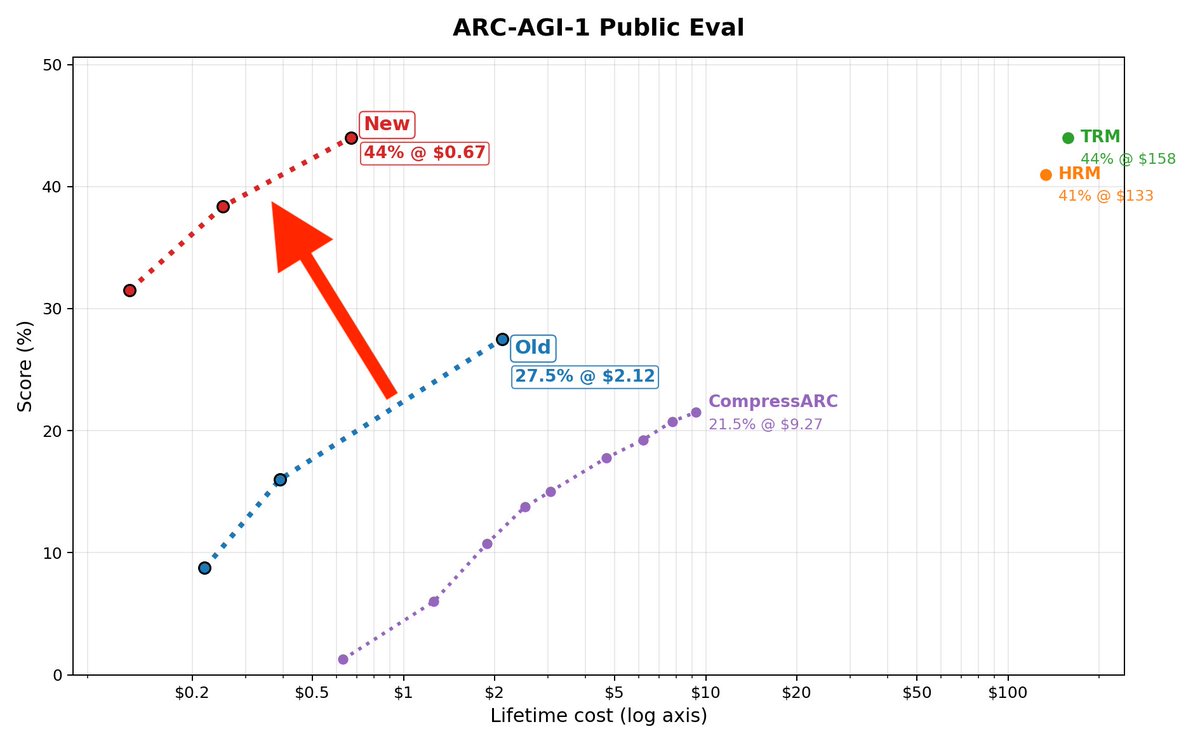

44% on ARC-AGI-1 in 67 cents! Trained from scratch in 2hrs on a 5090. Matches TRM, beats HRM and is way faster & cheaper. No recursion, just a transformer. Also, 7% on ARC-2 🧵

@MattVMacfarlane Guess I wait till ARC-2 opens on kaggle end of this month. I'll try to get a big score.

That'll force everyone to pay attention

@evilmathkid Classic, people only care about LLMs right now 🙄. Least you don't need to worry about people working on what you're working on!

I only read a new ML paper/blog if it shows new capabilities or does what was prev considered impossible

Others are mostly noise because there are so many difficult-to-control variables. Eg: Who knows what data went into the LLM?

I also find it hard to trust any ablation/comparison results. Did they see the same effort as the main result? Even if you did the hparam sweeps, are you 100% sure there's no bug?

@evilmathkid Yeah, default assumption is that AI will ultimately have extremely good sample efficiency, so will be better than humans at never-before-seen situations

100% automation for every task that is

- verifiable,

- repeated many times today

- or for which data collection is easy

What's left for humans:

- Never before seen situations

- tasks impossible to collect data for

The latter will also get automated with ASI

Many jobs today are a mix of both types of tasks

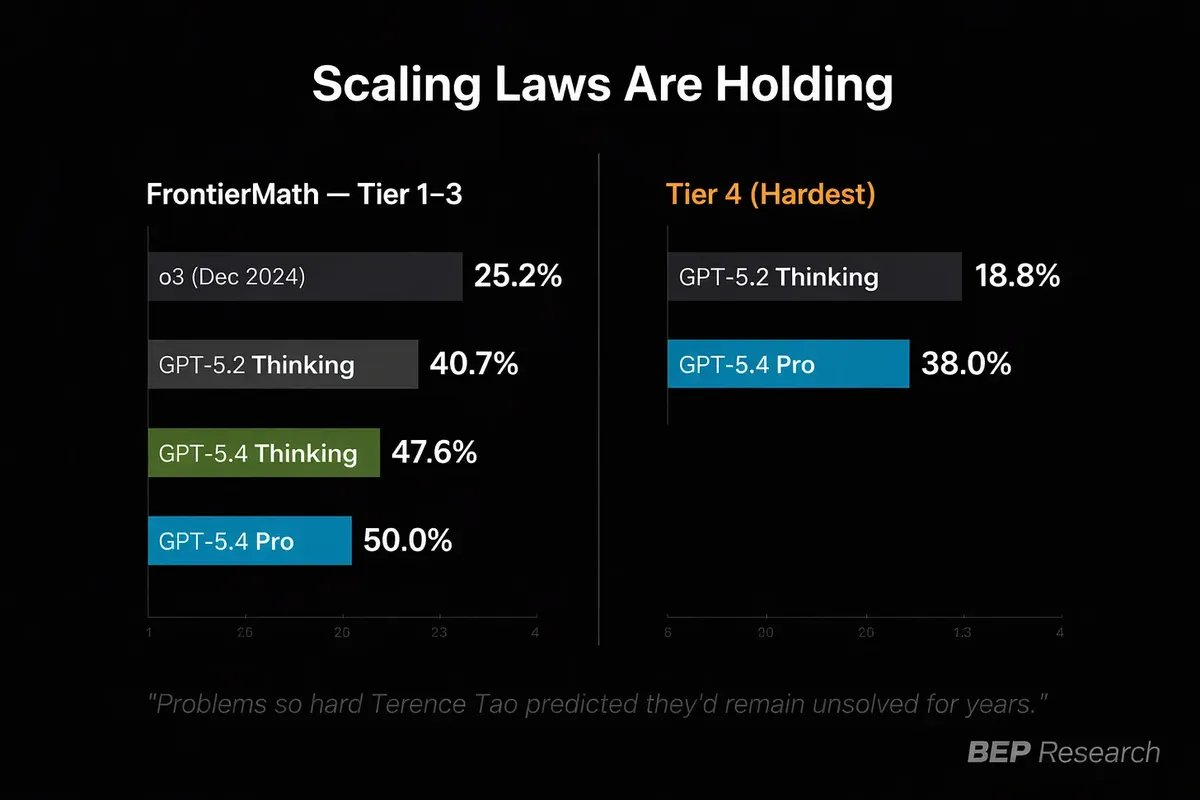

A Polish mathematician spent 20 years building a problem he said no AI could solve. GPT-5.4 cracked it on run 11.

gli.st/xoxgkbvl

Attributing it to RL post-training seems like confirmation bias again, even if it's likely true.

There are too many confounding factors in LLMs so I don't trust anyone who is extremely confident of their claims.

I think bad science is being done by literally everyone in AI (including alignment/safety). So all I'm gonna say is do more experiments yourself (and even then be more skeptical)

@evilmathkid @grok I'm not actually going to pretend to have sharply totally revised my beliefs based on this one interaction, after, like, eight years of accumulating stuff happening since the first transformer models.

@allTheYud @grok a) making extremely sure claims first and then doing the experiments is bad

b) your thread shows a lot of confirmation bias

I don't even disagree with you. But this doesn't look good on your part

@grok Some QTers now dunking on my Grok Q&A because I performed my experiment in public, rather than prefiltering your data with a private query. Cool. You go on dunking and I'll go on doing real experiments where I don't always get the result I expect.

@allTheYud @grok @elder_plinius I don't understand your surprise.

Did you not test the models before making claims?

@grok Uh @elder_plinius do you know what jailbreak would cause Grok to give me an unprompted answer without that jailbreak itself influencing Grok?
