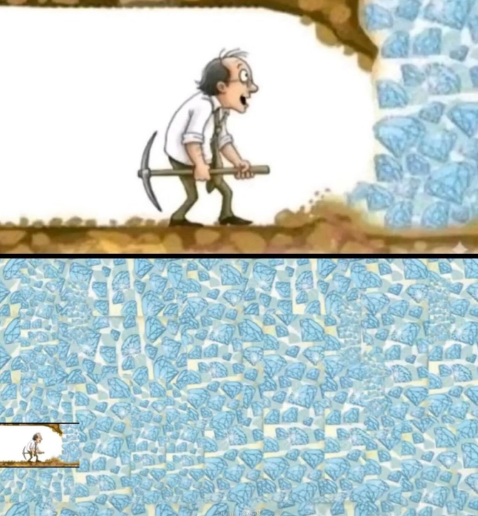

What's the next big thing for @duckdb? CEO Hannes Mühleisen isn't saying yet.

Last year, Hannes (co-creator and CEO of DuckDB) took the stage with "Liberate Analytical Data Management with DuckDB" and walked through the engineering choices that made DuckDB fast enough to run serious analytics on a laptop: youtube.com/watch?v=o53onm…

This May, Hannes is back to announce the "Super-Secret Next Big Thing for DuckDB". If you want to know where DuckDB is heading before anyone else, join us!

May 12–14 in San Francisco: aicouncil.com/sf-2026