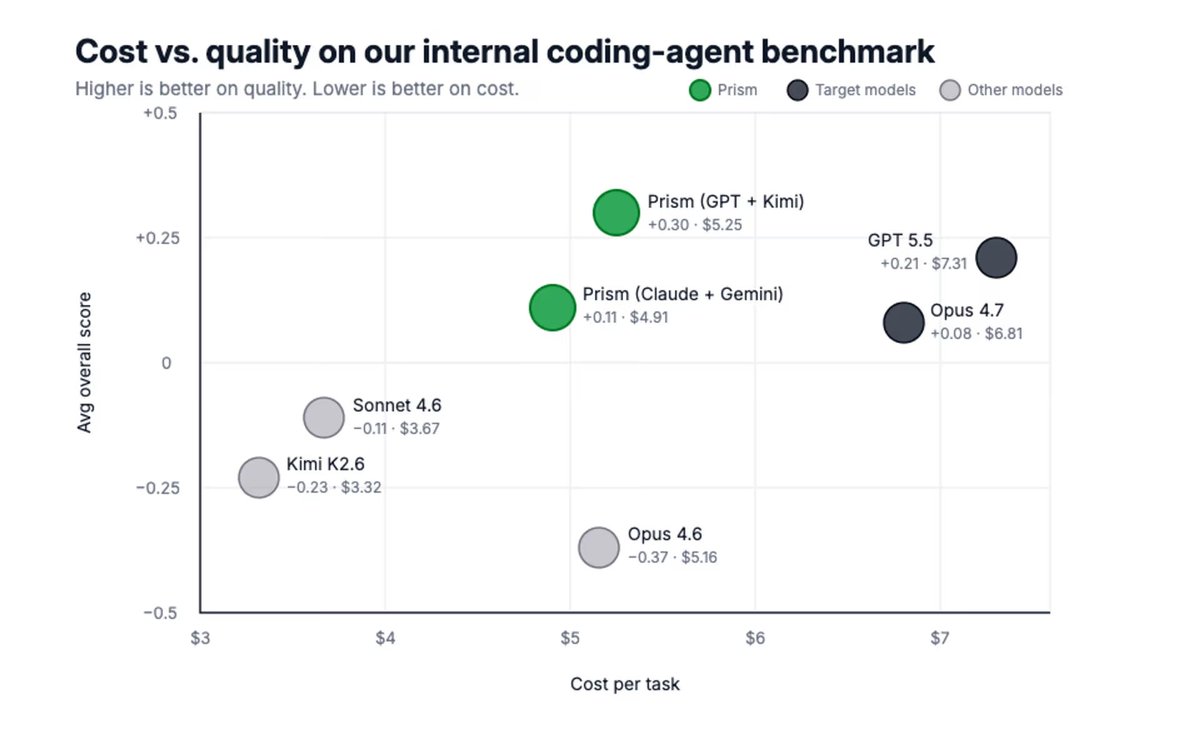

Token usage is exploding, and so is cost.

The best models undoubtedly deliver superior quality, but not all tasks are created equal.

For simple tasks, using a SOTA reasoning model is like driving a Ferrari four blocks to the grocery store.

Prism delivers a meaningful cost reduction: teams sending 10,000 user messages a month can expect to save $20,000 on their token spend, at similar or better quality.
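
To put that figure in per-message terms, here is a minimal sketch of the arithmetic. It assumes the $20,000 is a monthly total, which the post does not state explicitly, and the function name `savings_per_message` is purely illustrative, not part of any Prism API.

```python
def savings_per_message(total_savings_usd: float, messages: int) -> float:
    """Average saving per user message implied by a total saving."""
    if messages <= 0:
        raise ValueError("messages must be positive")
    return total_savings_usd / messages


# Figures quoted in the post; treating both as monthly is an assumption.
per_message = savings_per_message(20_000, 10_000)
print(f"${per_message:.2f} saved per message")
```

On the quoted numbers this works out to about $2 per user message, so the claim applies most naturally to workloads where a single message drives a lot of token usage.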