Pinned Tweet

Augment Code

1.7K posts

@augmentcode

More agents ≠ engineering transformation. Meet Cosmos, the OS for agentic software development.

Joined November 2023

44 Following · 21.4K Followers

Harvey built their coding agents to be collaborative by design. Engineers, PMs, and researchers all contribute context to the same agent, not parallel ones running on separate laptops.

They published a blog post on how it works. We're going behind it.

We're hosting a live conversation with Joey Wang, Engineering Lead at Harvey, to dig into what the blog post didn't cover: the architecture decisions, the tradeoffs, and what didn't work the first time.

If you're thinking about where cloud agents fit into your engineering org, this is the conversation to be in.

Register here for our session on May 5 at 10 AM PT:

watch.getcontrast.io/register/augme…

Prism is in the picker today: VS Code, JetBrains, CLI (/model), and web.

Billing rolls up under a single Prism line item. The underlying model that handled any given turn isn't surfaced.

The point is to stop making you think about it.

Read more on how we built it: augmentcode.com/blog/augment-p…

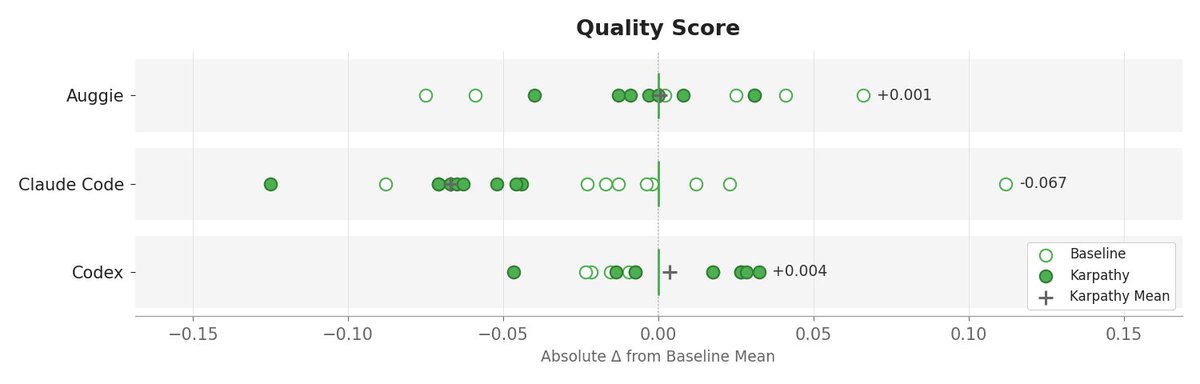

Quality: basically unchanged for Auggie and Codex. Claude Code dropped by 0.07, with more conservative trajectories and ~5% fewer files touched per task.

Karpathy-style guidelines don’t transfer uniformly across agent harnesses and repositories.

In Codex, the guidelines likely add useful structure (improving efficiency).

In Augment, the baseline prompt already encodes similar constraints, so the marginal impact is smaller.

In Claude Code, the system prompt may already be highly constrained, so layering additional constraints could reduce exploration and degrade performance.

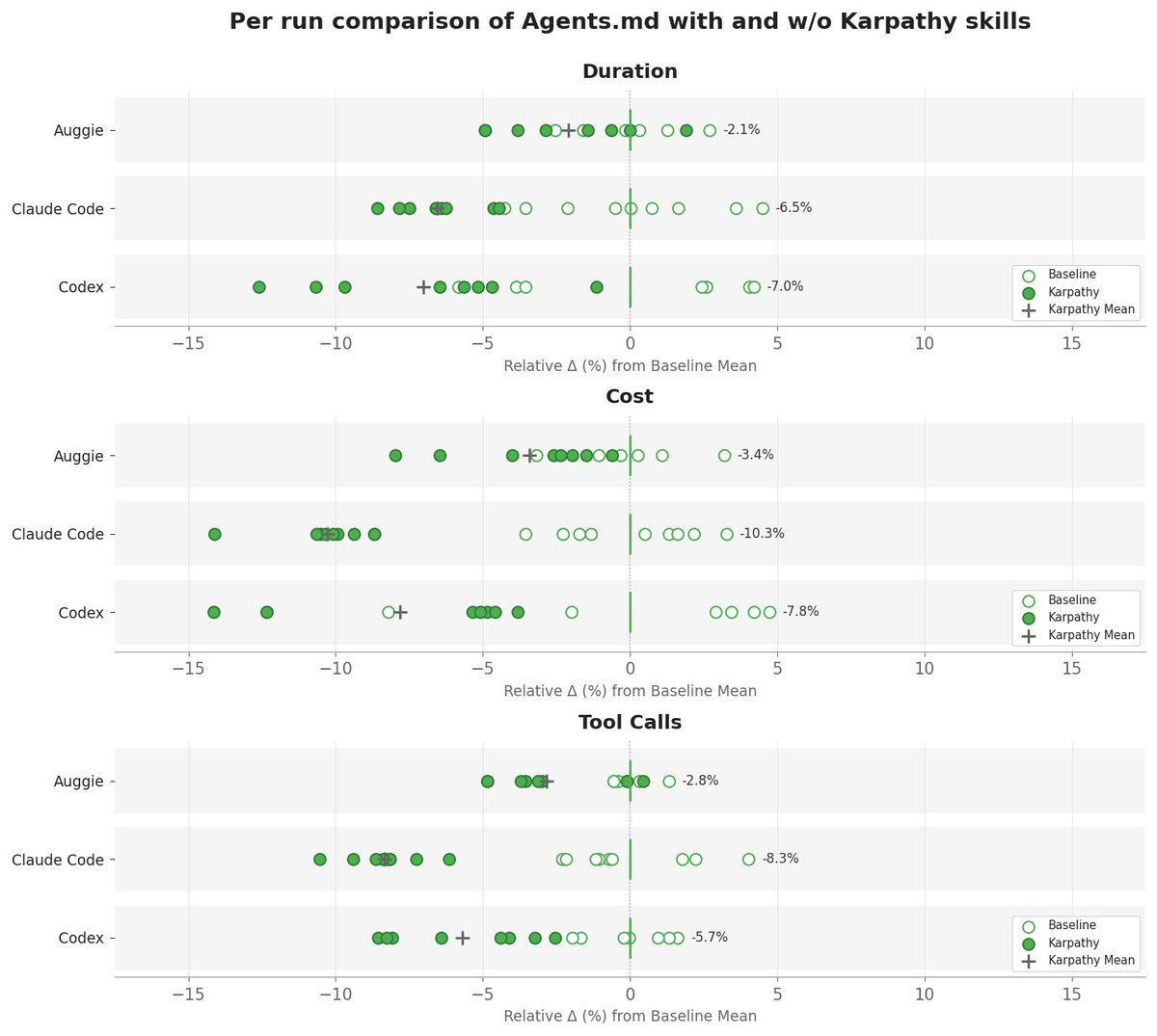

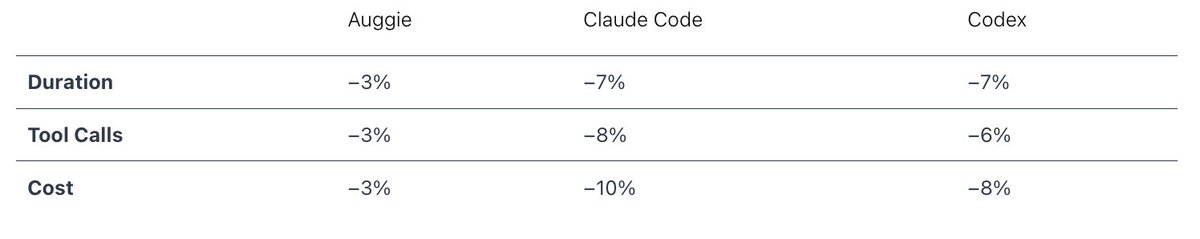

We added @karpathy-inspired coding rules from @jiayuan_jy to AGENTS.md and ran 40 @openclaw PRs through three coding agents.

The result:

Code quality was basically unchanged, but the agents got there with less work. Fewer tool calls, lower time and cost.

Every runner used fewer tool calls and finished faster. The agents found what they needed in fewer lookups.

Output tokens fell by similar margins across runners. Per PR, the run with the Karpathy-style rules was faster and cheaper on about 30 of 40 PRs. The pattern held across all three agents.

A 3–10% efficiency gain from a small prompt change isn't a model breakthrough, but if you're running a coding agent at scale, it's real money, real latency, and real capacity.
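To make "real money" concrete, here is a back-of-the-envelope sketch. The function name and the monthly spend figure are hypothetical, and applying the 3–10% range directly to spend is an assumption; the post gives no dollar baseline.

```python
def savings_range(baseline_spend: float, low: float = 0.03, high: float = 0.10) -> tuple[float, float]:
    """Return (min, max) savings for a given baseline spend.

    `low` and `high` default to the 3-10% range quoted in the post.
    """
    if baseline_spend < 0:
        raise ValueError("baseline_spend must be non-negative")
    if not 0.0 <= low <= high <= 1.0:
        raise ValueError("expected 0 <= low <= high <= 1")
    return baseline_spend * low, baseline_spend * high


if __name__ == "__main__":
    # Hypothetical figure for illustration only; not from the post.
    monthly_agent_spend = 100_000.0
    lo, hi = savings_range(monthly_agent_spend)
    print(f"Estimated monthly savings: {lo:,.0f} to {hi:,.0f}")
```

The same function works for latency or capacity if the baseline is expressed in hours or runs instead of currency.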

Most engineering orgs have adopted AI coding tools.

Far fewer have changed how they build software.

There's a difference between adding AI to your workflow and rebuilding the workflow around AI. We're hosting a session on an engineering team that transformed its SDLC, and we'll share exactly what it took.

If you're leading an engineering org and trying to move from experiment to operating model change, this one's worth your time.

Register here for our session on May 1 at 10:30 AM PT:

watch.getcontrast.io/register/augme…

The biggest mistake engineering leaders are making with AI is treating codegen as the whole transformation.

Engineers only spend ~16% of their time actually writing code.

So even "perfect" AI codegen only attacks 16% of the system.

The real leverage is in context, workflows, review, docs, architecture, and removing bottlenecks.
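One way to read the 16% argument is through Amdahl's law. This framing is an interpretation added here, not something the post states, and the function name is illustrative. If only 16% of an engineer's time is sped up, even instantaneous codegen caps the overall speedup at about 1.19x.

```python
import math


def overall_speedup(fraction: float, local_speedup: float = math.inf) -> float:
    """Amdahl's law: overall speedup when only `fraction` of total time
    is accelerated by a factor of `local_speedup`."""
    if not 0.0 <= fraction <= 1.0:
        raise ValueError("fraction must be between 0 and 1")
    if local_speedup <= 0:
        raise ValueError("local_speedup must be positive")
    # Time left over: the untouched part plus the accelerated part.
    remaining = (1.0 - fraction) + fraction / local_speedup
    return math.inf if remaining == 0 else 1.0 / remaining


if __name__ == "__main__":
    coding_share = 0.16  # share of time spent writing code, per the post
    print(f"Instant codegen: {overall_speedup(coding_share):.2f}x")
    print(f"2x faster codegen: {overall_speedup(coding_share, 2.0):.2f}x")
```

Under the same assumption, shrinking the other 84% (context, review, docs, bottlenecks) has far more headroom, which is the point the post is making.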

@pulsar_099 Should be available now! Let us know if you're not seeing it!
