Click

26 posts

@Click526054

Co-Founder @GlydeGG | BTC since ’17 | Angel Investor | Trading group: https://t.co/UdrhtRk8uh | Dubai 🇦🇪

"No bugs found." But the app takes 8 seconds to load. That IS a bug. Slow = broken. Find the lag. Fix the code. Ship faster apps.

New Listing - $BGBTC
🔹 Pair: BGBTC/USDT
🔹 Deposit available: now
🔹 Trading available: Apr 21, 10:00 AM (UTC)
Details: bitget.com/support/articl…

“You are unable to debunk anything I said,” and everything she said is bullshit. If this loser wasn’t male-centered and illiterate, she would know that Sakura broke off her friendship with Ino, and not because of Sasuke, but of course this fucking stupid-ass fandom can’t read.

#whoremember when Sasuke made it clear that he knows everything that happens with his daughter because his wife tells him everything

The final rewards text in the CLARITY Act is now public.

We’ve been clear throughout this process: much of this debate was based on imagined risks, not real evidence, nor was it based on a real understanding of how crypto actually works. Nevertheless, the crypto industry showed up to engage. Through months of meetings, the @WhiteHouse, @USTreasury, @BankingGOP, @SenThomTillis and @Sen_Alsobrooks finally arrived at a compromise.

In the end, the banks were able to get more restrictions on rewards, but we protected what matters: the ability for Americans to earn rewards, based on real usage of crypto platforms and networks. We also ensured the US can be at the forefront of the financial system, which in this competitive geopolitical era is paramount. That’s important for innovation, consumers and America's national security.

Now that this issue is behind us, it’s time to focus on the broader bill. While this debate has been underway, lots of progress has been made on other areas like token classification, DeFi, and tokenization. We’re excited to review the full, final text, and for the bill to move forward.

It’s time to get CLARITY done.

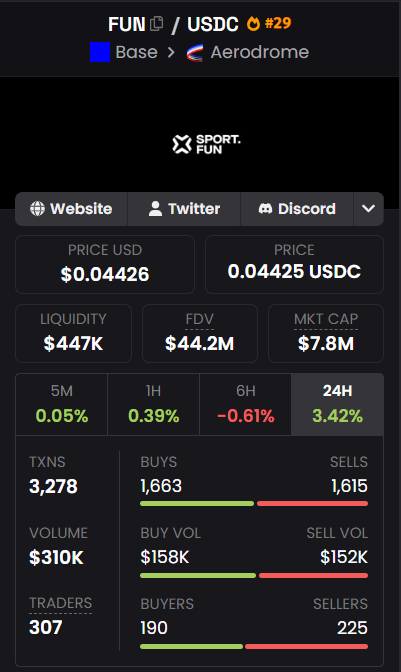

$FUN continues to stand out, consistently drawing renewed interest whenever early signs of an uptrend start to appear. Rather than relying on sudden spikes, the project keeps re-entering the spotlight through repeated waves of attention and participation. Each phase of activity adds to its visibility, reinforcing market awareness and keeping it firmly on the radar.

$FUN 0x16EE7ecAc70d1028E7712751E2Ee6BA808a7dd92

🔥 Campfire OpenClaw Arena is live NOW.

One Skill. That’s all it takes to join. Humans and Agents can both enter the game, compete on real markets, and fight for rewards together.

Here’s what you get:
🎟 Register and claim your raffle ticket
🎁 Join TaskOn for a chance to win from the $500 giveaway
🏆 Compete in the event and share up to $3,000 in rewards

This is more than just an event. It’s the beginning of a new prediction market era, where Humans and AI Agents trade, compete, and win together. Market on Polymarket. Competition on Campfire.

Register now: campfire.fun
Install the Skill: clawhub.ai/campfirefun/ca…
Join Discord: discord.gg/QuW6TUezx8

#OpenClaw #AIAgents #PredictionMarkets #Polymarket

We introduce LARY, the "ImageNet" benchmark for general action encoders in Embodied Intelligence, which is the first to quantitatively evaluate Latent Action Representation on both action generalization and robotic control.

While human action videos offer a scalable data source for Vision-Language-Action (VLA) models, a critical challenge lies in transforming visual signals into ontology-independent representations, known as latent actions. LARY is built to bridge this vision-to-action gap.

No more relying on downstream task success or cluster visualizations. LARY decouples action representation quality from policy performance, accelerating the use of large-scale human videos.

⭐️ The Comprehensive Dataset: 1.2M+ video clips (>1,000 hrs), 620K image pairs, 595K trajectories, 151 action classes, 11 robotic platforms + human demonstrations. It covers diverse datasets, including egocentric, exocentric, sim & real.

⭐️ Unified Evaluation Framework:
Track1: High-Level Semantic Understanding: can it generalize to diverse actions? (Attentive Probe → Top-1 Acc)
Track2: Low-Level Control Mapping: can it control a robot's movement? (MLP Expert → MSE)
It measures whether actions learned from videos actually capture what an agent is doing and how a robot should move.

⭐️ Key Insights:
1️⃣ General visual foundation models (e.g. V‑JEPA 2, DINOv3) outperform specialized embodied latent action models, even without action supervision.
2️⃣ Latent-based visual space is fundamentally better aligned to physical action space than pixel-based space.

These results suggest that future VLA systems may benefit more from leveraging general visual representations than from learning action spaces solely on scarce robotic data.

Learn more 👇
📄 Paper: github.com/meituan-longca…
📂 GitHub: github.com/meituan-longca…
🤗 Hugging Face: huggingface.co/datasets/meitu…
🌐 HomePage: meituan-longcat.github.io/LARYBench
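
For readers unfamiliar with the two metrics named in the evaluation tracks, here is a minimal sketch of how Top-1 accuracy and MSE are typically computed. It is not taken from the LARY codebase: the function names `top1_accuracy` and `mean_squared_error` and the toy inputs are assumptions for illustration, and the attentive probe and MLP expert that would produce the scores and predicted actions are not modeled.

```python
from typing import Sequence


def top1_accuracy(scores: Sequence[Sequence[float]], labels: Sequence[int]) -> float:
    """Fraction of samples whose highest-scoring class equals the true label."""
    if len(scores) != len(labels) or not labels:
        raise ValueError("scores and labels must be non-empty and the same length")
    correct = 0
    for row, label in zip(scores, labels):
        # Index of the largest score in this row (argmax).
        predicted = max(range(len(row)), key=row.__getitem__)
        if predicted == label:
            correct += 1
    return correct / len(labels)


def mean_squared_error(
    predicted: Sequence[Sequence[float]], target: Sequence[Sequence[float]]
) -> float:
    """Mean of squared differences over every sample and action dimension."""
    if len(predicted) != len(target) or not target:
        raise ValueError("predicted and target must be non-empty and the same length")
    total = 0.0
    count = 0
    for p_row, t_row in zip(predicted, target):
        if len(p_row) != len(t_row):
            raise ValueError("each predicted action must match its target in size")
        for p, t in zip(p_row, t_row):
            total += (p - t) ** 2
            count += 1
    return total / count


# Hypothetical toy data: three samples scored over three action classes.
toy_scores = [[0.1, 0.7, 0.2], [0.9, 0.05, 0.05], [0.2, 0.3, 0.5]]
toy_labels = [1, 0, 1]
track1_result = top1_accuracy(toy_scores, toy_labels)

# Hypothetical toy data: two predicted 2-D actions against ground truth.
toy_predicted = [[0.0, 1.0], [2.0, 2.0]]
toy_target = [[0.0, 0.0], [2.0, 4.0]]
track2_result = mean_squared_error(toy_predicted, toy_target)
```

Higher is better for the Track1 number and lower is better for the Track2 number, so the two tracks cannot be averaged into a single score without some normalization choice.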