Abdulmajeed

80 posts

Abdulmajeed

@CreativeS3lf

Currently, doing my Master’s at @CMU MLT program

Universe Beigetreten Haziran 2023

337 Folgt42 Follower

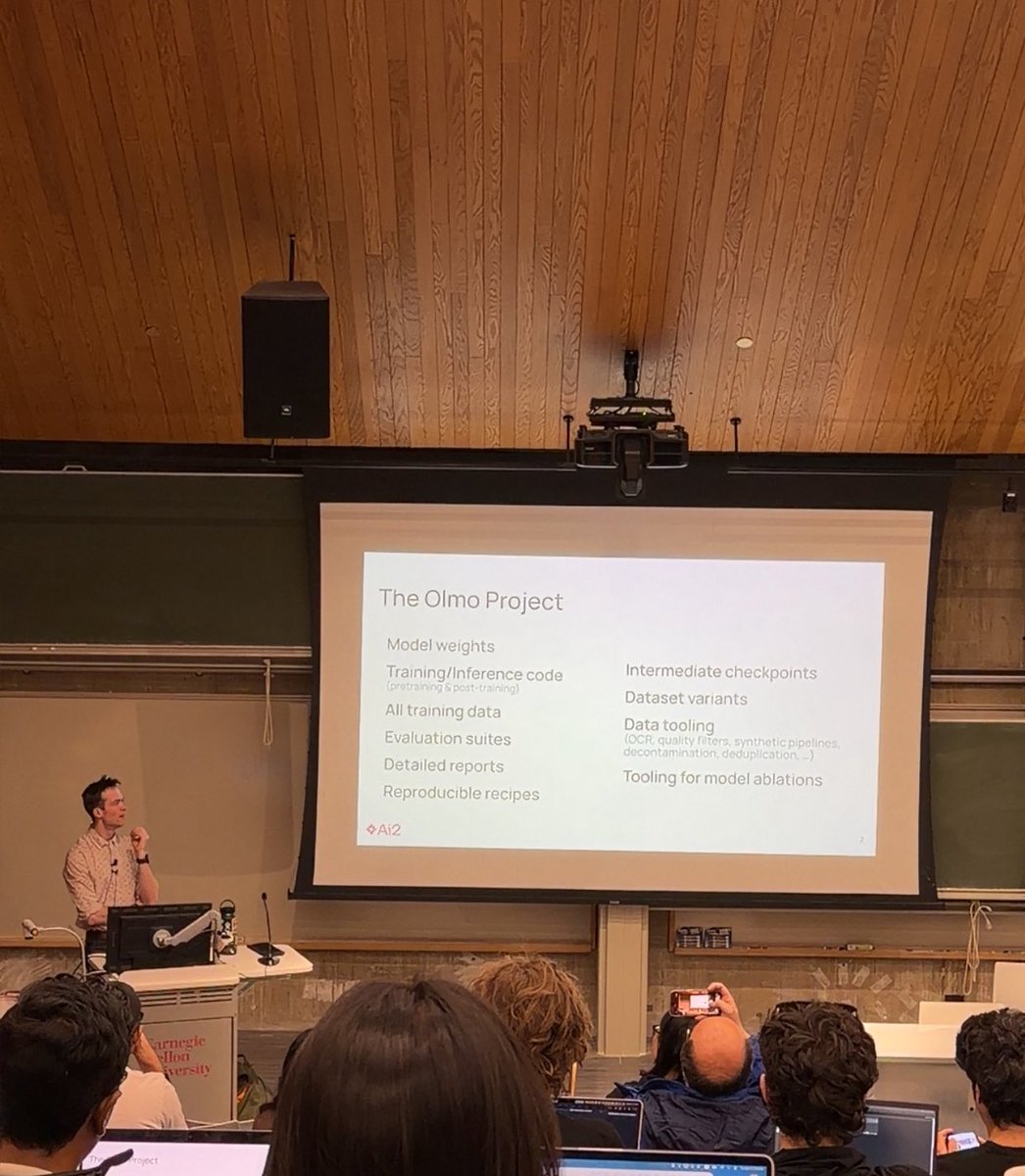

Just attended Nathan Lambert's talk on building OLMo in the era of agents at CMU LTI colloquium fascinating deep dive into fully open reasoning model recipes, how architecture choices shape RL efficiency, and what's next for agentic AI. Great stuff from @natolambert and the AI2 team. Thank you!

English

@alfcnz Can’t wait for your amazing visuals and explanations! 🙏🏻❤️

English

@CreativeS3lf Yes, I’ve decided to release whatever I have. I only wish I had the time to get to it. Unfortunately, other duties have higher priority at the moment. 🥺🥺🥺

English

Releasing the Energy-Book 🔋 from its first appendix's chapter, where I explain how I create my figures. 🎨

Feel free to report errors via the issues' tracker, contribute to the exercises, and show me what you can draw, via the discussion section. 🥳

github.com/Atcold/Energy-…

English

@ericwtodd @davidbau @jannikbrinkmann @rohitgandikota Nice Work! I wonder what software did you use to produce the visuals, thanks!

English

@far__el @fchollet @far__el What Learning Algorithm is in-context Learning? Investigations with Linear Models arxiv.org/pdf/2211.15661

English

Hmmm got me curious. How would it alter its weights ;)? Also the thing is we as humans adapt to novelty via process of thinking and also we retrain this process of reasoning once we unlock something profound a bit a solution / mental model of thinking as if out sate space of observation of seeing new problems has expanded.

English

Re: the path forward to solve ARC-AGI...

If you are generating lots of programs, checking each one with a symbolic checker (e.g. running the actual code of the program and verifying the output), and selecting those that work, you are doing program synthesis (aka "discrete program search").

The main issue with program synthesis is combinatorial explosion: the "space of all programs" grows combinatorially with the number of available operators and the size of the program.

The best solution to fight combinatorial explosion is to leverage *intuition* over the structure of program space, provided by a deep learning model. For instance, you can use a LLM to sample a program, or to make branching decision when modifying an existing program.

Deep learning models are inexact and need to be complemented with discrete search and symbolic checking, but they provide a fast way to point to the "right" area of program space. They help you navigate program space, so that your discrete search process has less work to do and becomes tractable.

Here's a talk I did at a workshop in Davos in March that goes into these ideas in a bit more detail.

docs.google.com/presentation/d…

English

This is going to revolutionize education 📚

Google just launched "Learn Your Way" that basically takes whatever boring chapter you're supposed to read and rebuilds it around stuff you actually give a damn about.

Like if you're into basketball and have to learn Newton's laws, suddenly all the examples are about dribbling and shooting. Art kid studying economics? Now it's all gallery auctions and art markets.

Here's what got me though. They didn't just find-and-replace examples like most "personalized" learning crap does. The AI actually generates different ways to consume the same information:

- Mind maps if you think visually

- Audio lessons with these weird simulated teacher conversations

- Timelines you can click around

- Quizzes that change based on what you're screwing up

They tested this on 60 high schoolers. Random assignment, proper study design. Kids using their system absolutely destroyed the regular textbook group on both immediate testing and when they came back three days later.

Every single one said it made them more confident.

The part that surprised me? They actually solved the accuracy problem. Most ed-tech either dumbs everything down to nothing or gets basic facts wrong.

These guys had real pedagogical experts evaluate every piece on like eight different measures.

Look, textbooks have sucked for centuries not because publishers are idiots, but because making personalized versions was basically impossible at scale. That just changed.

This isn't some K-12 thing either. Corporate training could work this way. Technical documentation. Professional development.

Imagine if every boring compliance course used examples from your actual job instead of generic office scenarios.

We might have just watched the industrial education model crack for the first time. About damn time.

English

@aidan_mclau @YouJiacheng I think the most important metric to look at is how much compute was spent in the span of 4.5 hours.

English

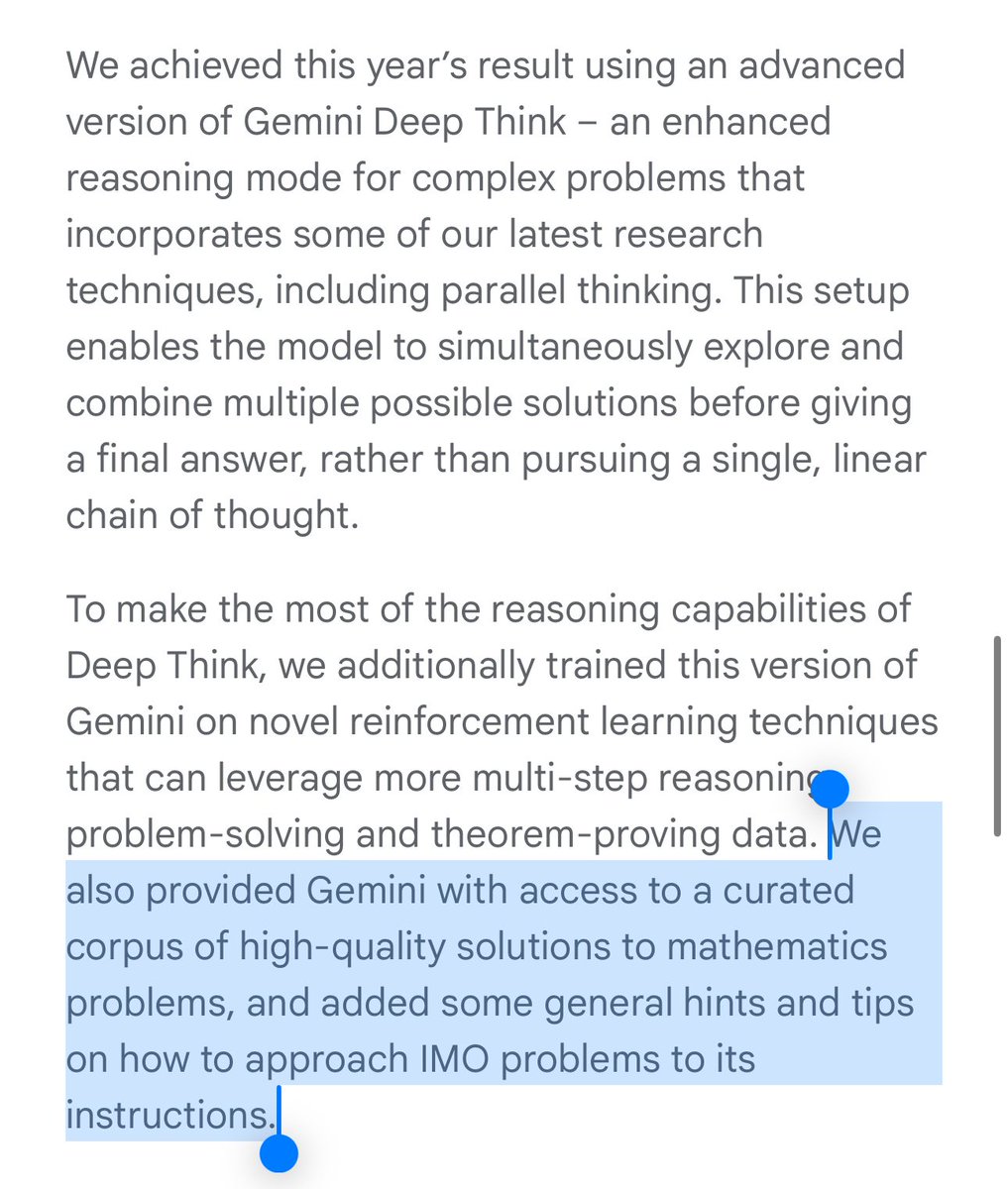

@YouJiacheng i think it’s also interesting that they curated and provided useful context to the model, which we did not

feels like taking your tutor’s cheat sheet with you into an exam

English

So OpenAI has 0 advantages, except the size of team (OpenAI team is claimed to be smaller).

Oriol Vinyals@OriolVinyalsML

Drastic progress on maths with Gemini 2.5! As a math undergrad, I am impressed 🤯 🥈 -> 🥇 ✅ Formal -> Informal ✅ Specialized model -> General model ✅ Available soon ✅ Huge thanks to IMO and congrats to all participants! Blog: deepmind.google/discover/blog/…

English

I need @cursor_ai to launch an iPhone app

So can code on my phone while I sit at @aloyoga when gf is shopping

English

The thing I don't get about Stargate is where is the data?

Let's assume funding is secured (a big assumption) and that you can build a super huge datacenter with 100s of thousands of NVIDIA cards and an Oracle database server or two too.

Where is the data coming from to keep the datacenter busy?

I get how Tesla and X data keep Elon's datacenters busy.

I get how a couple hundred million users of OpenAI's ChatGPT keep its data centers busy.

I get how YouTube's data would keep a Google datacenter busy.

But I don't see where the big dataset is coming from for the Stargate fund to use.

So what really is at play here?

1. Hosting a super huge military simulator?

2. Getting all small businesses in USA to use AI? (That's the best outcome, but would require radical education of everyone running small businesses).

3. Hosting all of Walmart or all of Las Vegas? (Steve Wynn's datacenter is a fraction the size of eBay, which is located in the cage next door at Switch's datacenters in Las Vegas).

4. All Apple's data?

Who has a big dataset that could keep a super huge datacenter busy?

Something doesn't smell right here. @softbank usually doesn't fund charities. So, there must be a business reason to open up such a huge fund.

Are they hoping to wipe out @ycombinator and fund every cool AI company that gets born from now on? That won't work either, because YCombinator brings a lot more to the table than Softbank does.

But with AI you must always follow the data, not the money. The data is what matters and so far I don't see a real dataset involved here (IE one that has hundreds or thousands of petabytes that isn't already being served well by existing datacenters, or that would greatly grow in value once shoved through a bunch of NVIDIA cards).

Do you?

English

@karpathy cognitive load is just another buzzword that mediocre engineers will use as an excuse, similar to "i cant do that because it's a lot of context switching"

complex system are complex, there's no way around it, you can only hide complexity but it doesn't disappear

English

Nice post on software engineering.

"Cognitive load is what matters"

minds.md/zakirullin/cog…

Probably the most true, least practiced viewpoint.

English

@ChatGPTapp I love the naming trick lol also the picture your contact list.

2 = A, B, C → 2 = C

4 = G, H, I → 4 = H

2 = A, B, C → 2 = A

8 = T, U, V → 8 = T

4 = G, H, I → 4 = G

7 = P, Q, R, S → 7 = P

8 = T, U, V → 8 = T

English

At some point in my life, a grand change would happen to me, and I would feel happy filled with a sense of accomplishment and certainty that everything had finally “fallen into place.” Only to realize that happiness built on external milestones is fleeting. The job, the degree, the relationship, or the achievement that once seemed like the “final goal” would lose its glow over time. What I thought would grant me lasting fulfillment instead revealed a deeper truth: contentment doesn’t come from reaching a finish line it comes from how you walk the path.

English

@karpathy will a day come where you stand on the stage and explain how to train sora like what you did for llms on microsoft?

English

@lilbytelil @fchollet True. But if you can perform ablation studies on the modules involved, it will make your system more grounded rather than just stacking a bunch of modules on top of each other.

English

From Deep Learning with Python:

- Deep learning research is an evolution process driven by poorly-understood empirical results

- Math in DL papers is usually worthless and was placed there purely as a signal of seriousness

- The key to good research is understanding what *causes* your results, and that requires ablation studies (which few researchers attempt to do)

Syed Amaan@syedamaann

@fchollet on ablation studies in deep learning research:

English

@andrew_n_carr Very Cool. CriticGPT is nice.

Just like what we expect LLMS help each other an emergent form of correction. So, we are expecting better training dataset for gpt-4 :O

English

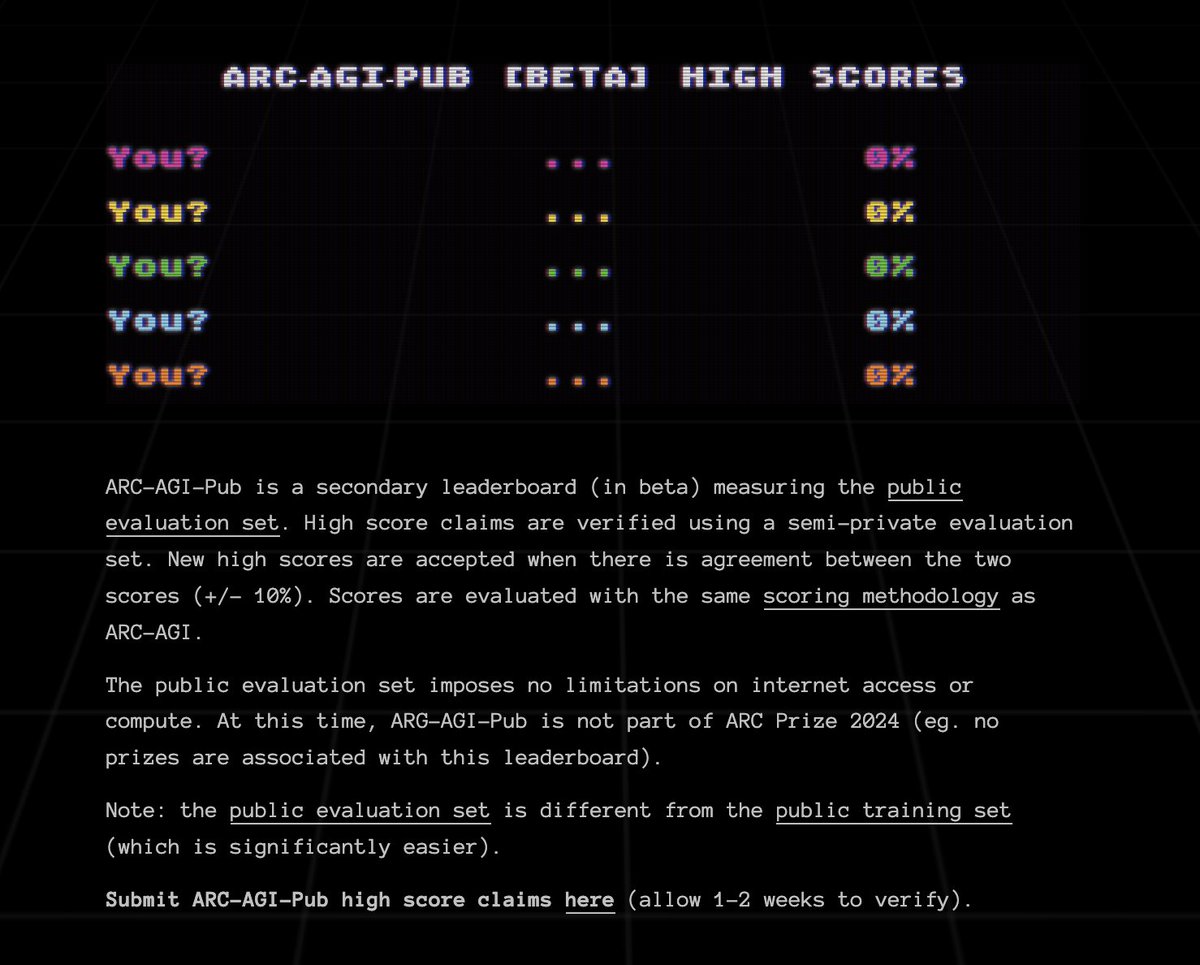

@CreativeS3lf @RyanPGreenblatt @dwarkesh_sp @fchollet @arcprize arc-agi-pub is this secondary leaderboard arcprize.org/leaderboard

English

I asked Buck about his thoughts on ARC-AGI to prepare for interviewing @fchollet.

He tells his coworker Ryan, and within 6 days they've beat SOTA on ARC and are on the heels of average human performance. 🤯

"On a held-out subset of the train set, where humans get 85% accuracy, my solution gets 72% accuracy."

Buck Shlegeris@bshlgrs

ARC-AGI’s been hyped over the last week as a benchmark that LLMs can’t solve. This claim triggered my dear coworker Ryan Greenblatt so he spent the last week trying to solve it with LLMs. Ryan gets 71% accuracy on a set of examples where humans get 85%; this is SOTA.

English