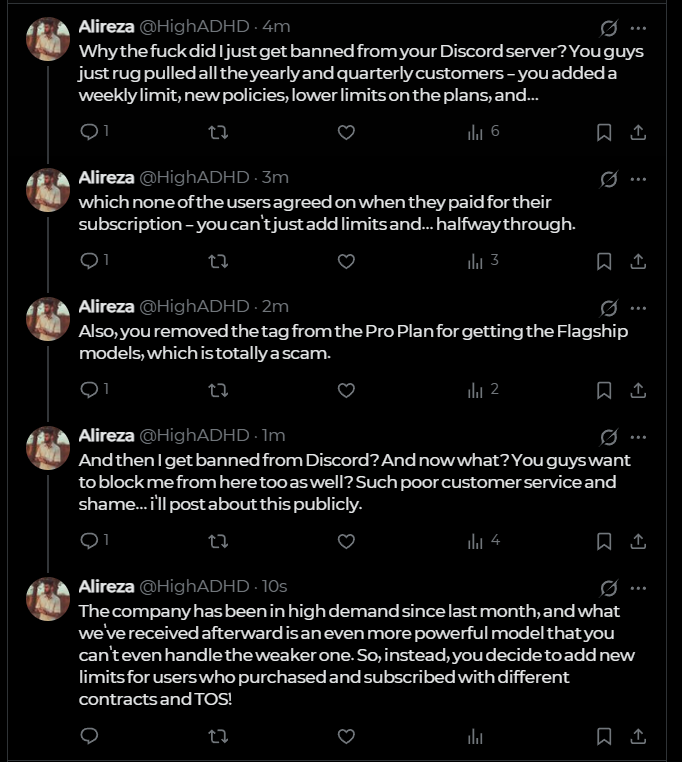

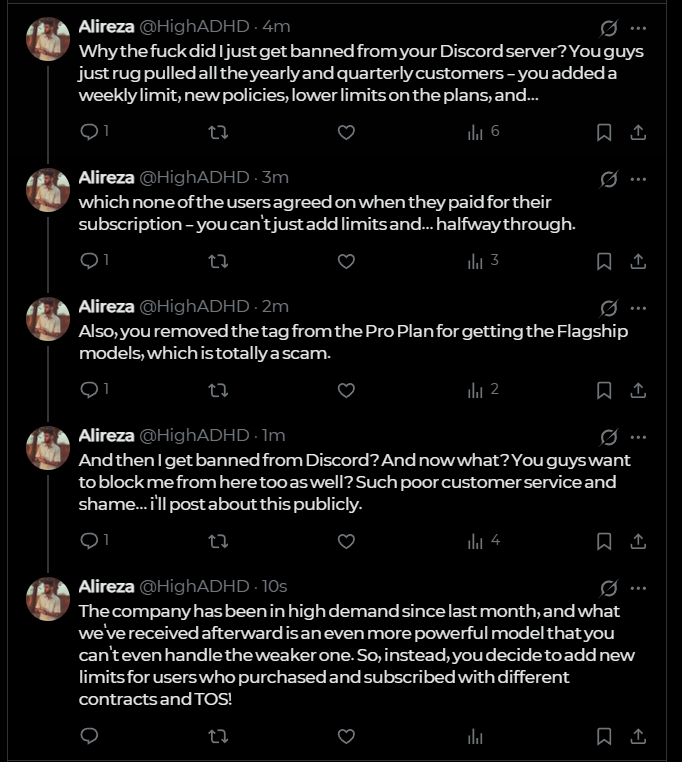

Alireza

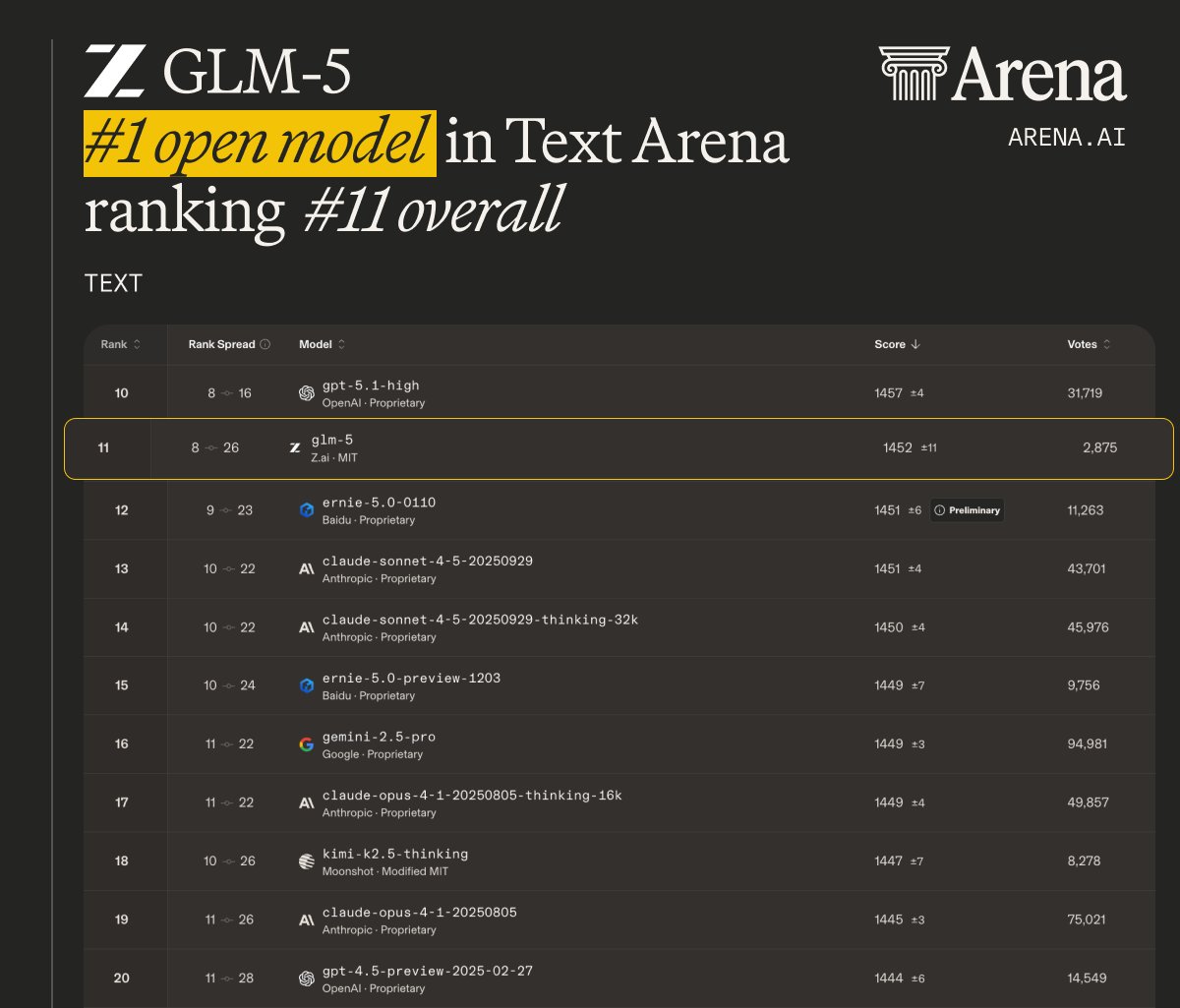

GLM-5 from @Zai_org just climbed to #1 among open models in Text Arena!

▫️ #1 open model, on par with claude-sonnet-4.5 & gpt-5.1-high

▫️ #11 overall, scoring 1452, +11 pts over GLM-4.7

Test it out in the Code Arena and keep voting. We'll see how GLM-5 performs on agentic coding tasks next!

Congrats to @Zai_org on this amazing achievement.

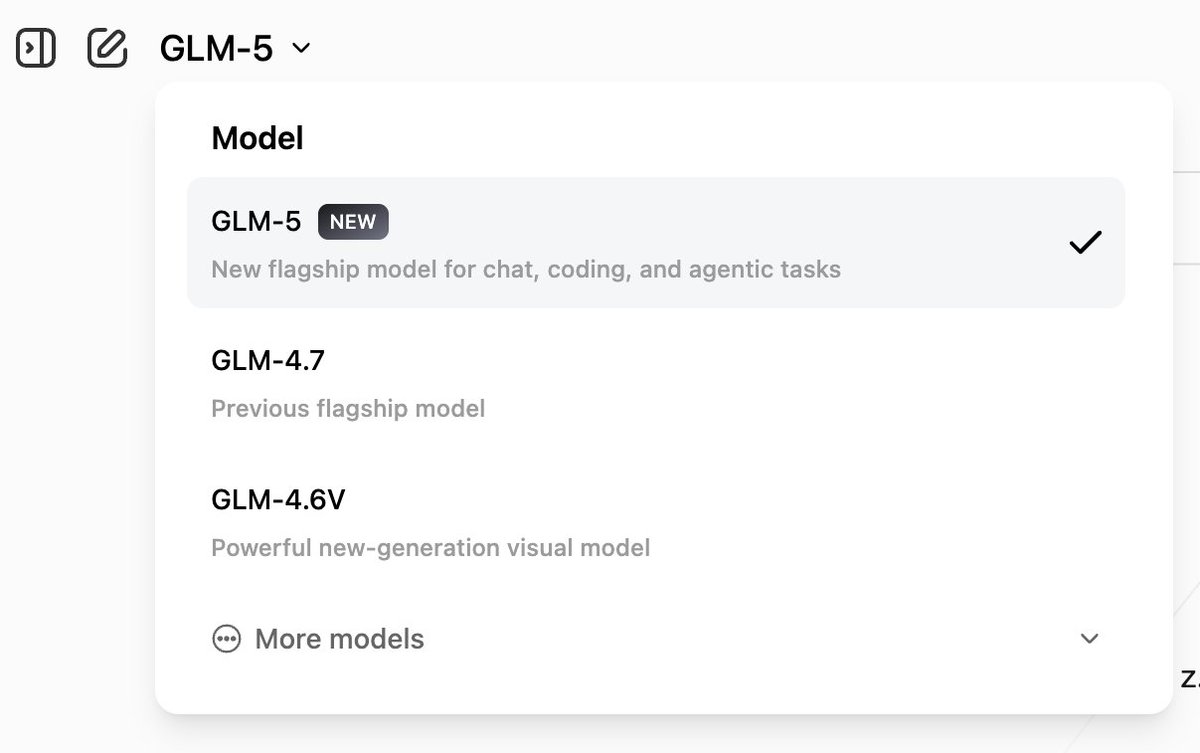

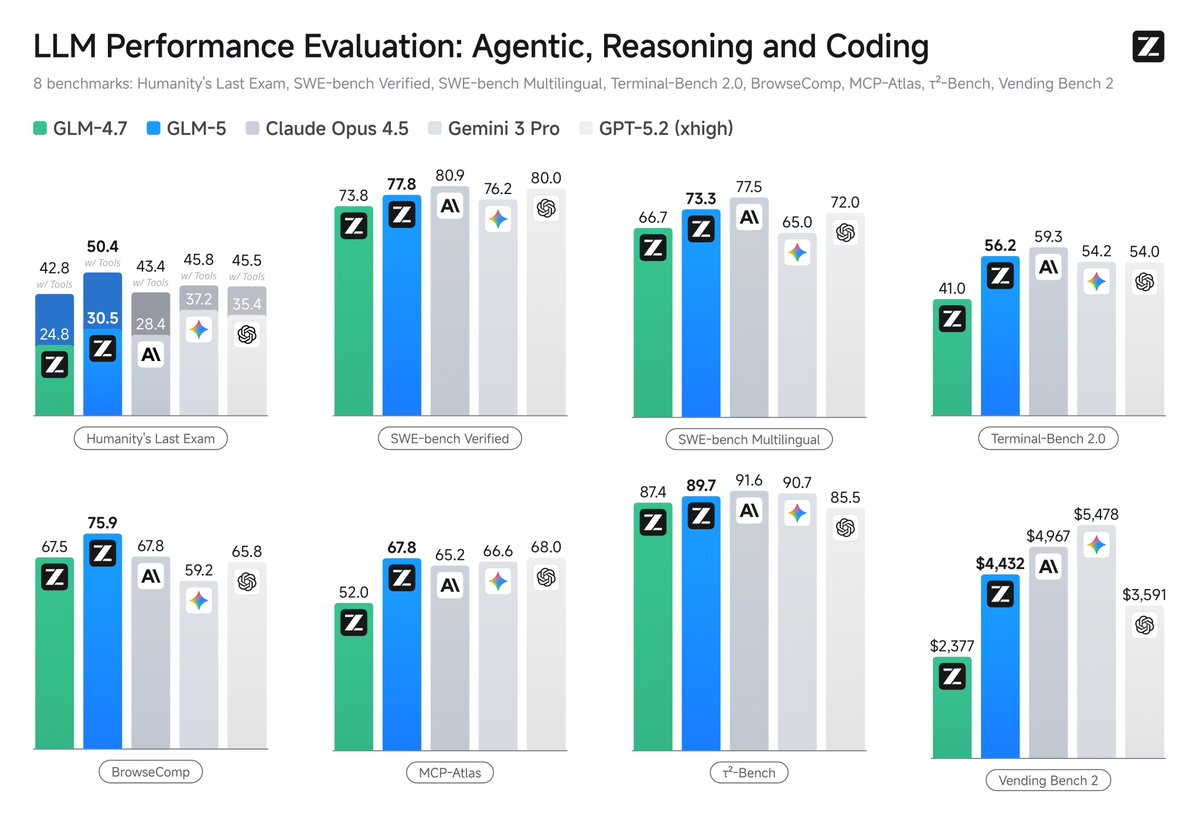

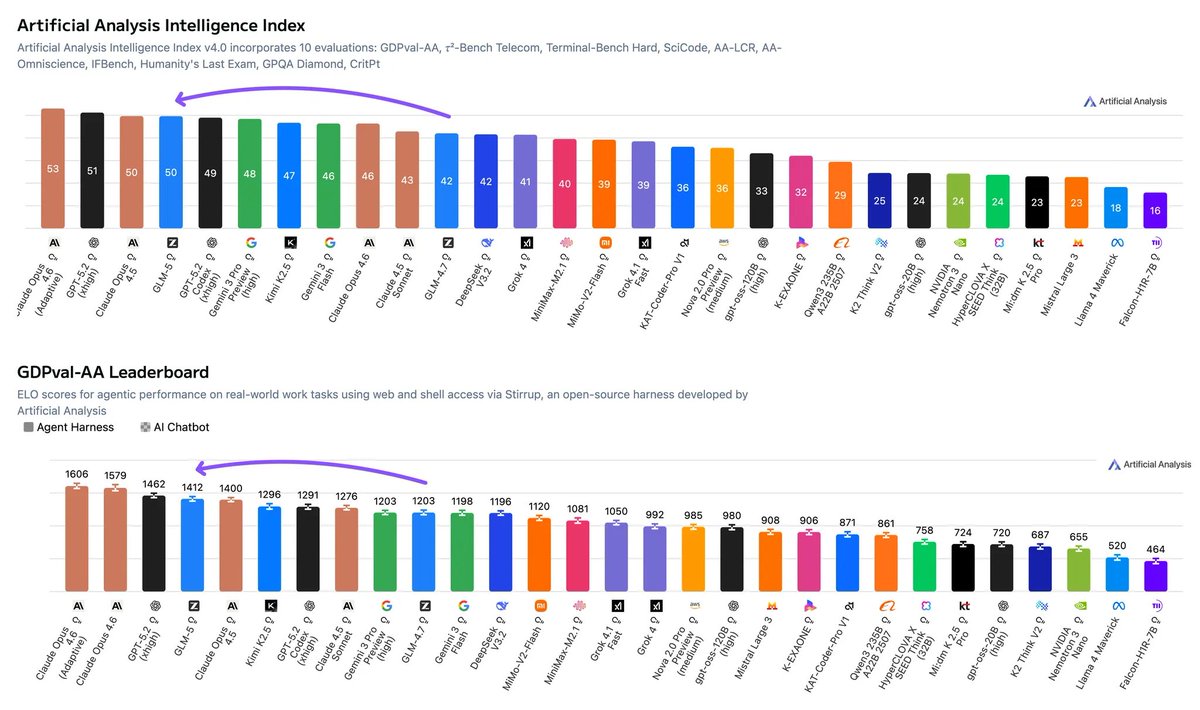

Introducing GLM-5: From Vibe Coding to Agentic Engineering

GLM-5 is built for complex systems engineering and long-horizon agentic tasks. Compared to GLM-4.5, it scales from 355B params (32B active) to 744B (40B active), with pre-training data growing from 23T to 28.5T tokens.

Try it now: chat.z.ai

Weights: huggingface.co/zai-org/GLM-5

Tech Blog: z.ai/blog/glm-5

OpenRouter (Previously Pony Alpha): openrouter.ai/z-ai/glm-5

Rolling out from Coding Plan Max users: z.ai/subscribe