Jeremias Knoblauch

@LauchLab

Associate Professor & EPSRC Fellow @ UCL. Post-Bayesian seminar series sign-up @ https://t.co/a0MyAQOh16 Research mission @ https://t.co/kNIjvCrGne

🚨🚨🚨 7 PhD Studentships at UCL in Statistical Science. More information 👇:

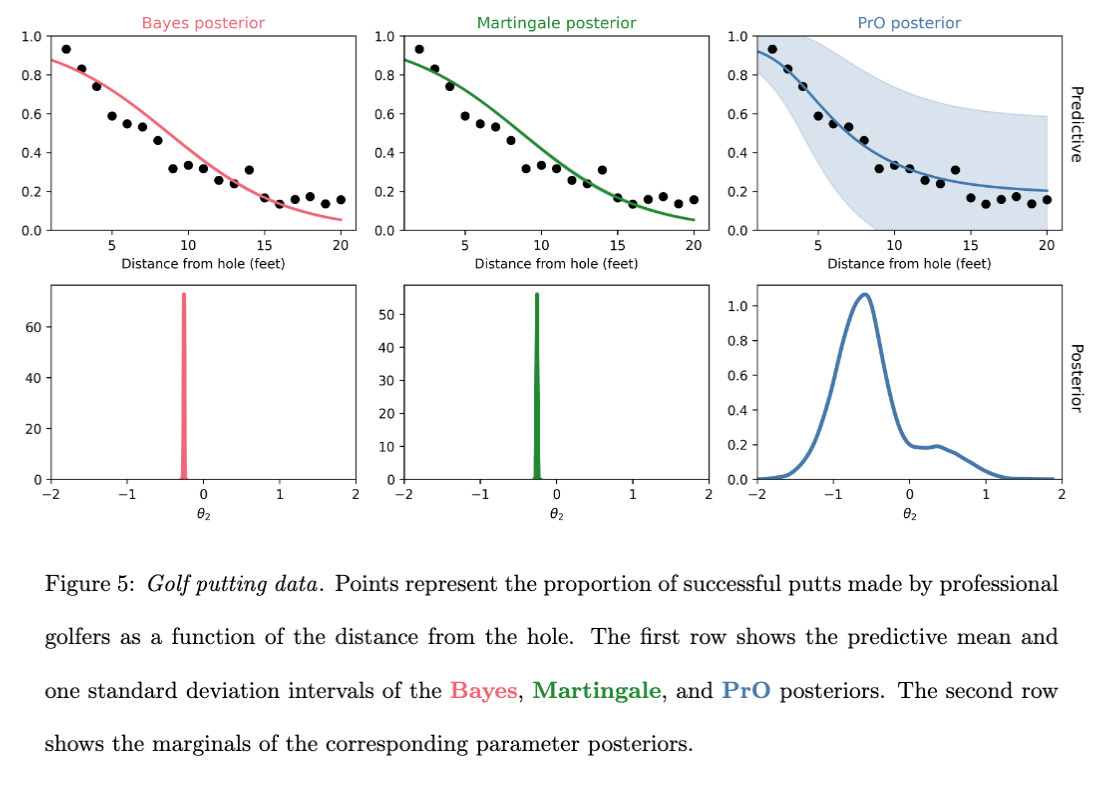

Our paper “Martingale Posterior Neural Networks for Fast Sequential Decision Making” has been accepted at #neurips2025! Joint work with @l_sbetancourt, @AlvaroCartea and @sirbayes.
Blog: grdm.io/posts/bnn-with…
Paper: arxiv.org/abs/2506.11898
Code: github.com/gerdm/martinga…

New paper on Stationary MMD points 📣 arxiv.org/pdf/2505.20754
1️⃣ Samples generated by MMD flow exhibit 'super-convergence'
2️⃣ A discrete-time finite-particle convergence result for MMD flow
Joint work with Toni Karvonen, Heishiro Kanagawa, @fx_briol, Chris J. Oates

We are excited to announce that registration for the inaugural post-Bayes workshop on May 15 and 16 at UCL is now open! Website: postbayes.github.io/workshop2025/ Registration link: tinyurl.com/postBayesWorks…

Congratulations to @UCL / @stats_UCL's @matialtamiranom on being one of the 2024-2025 @Bloomberg #DataScience Ph.D. Fellows! Learn more about Matías’ research focus and our latest cohort of Ph.D. Fellows: bloom.bg/4itDDiN #AI #ML #NLProc #NeurIPS2024

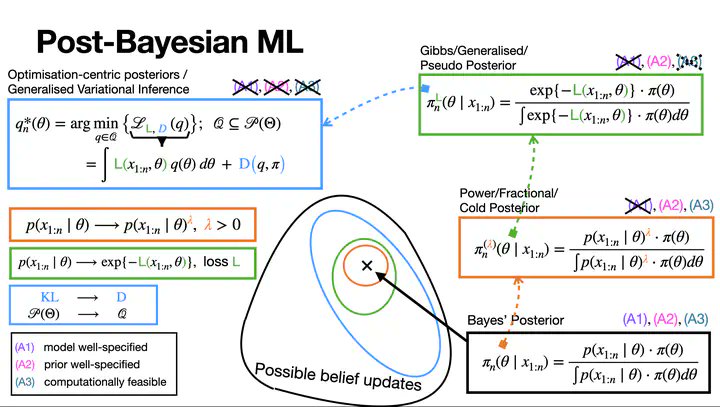

📢 Post-Bayesian online seminar series coming! 📢 To stay posted, sign up at tinyurl.com/postBayes. We'll discuss cutting-edge methods for posteriors that no longer rely on Bayes' theorem (e.g., PAC-Bayes, generalised Bayes, martingale posteriors, ...). Please circulate widely!