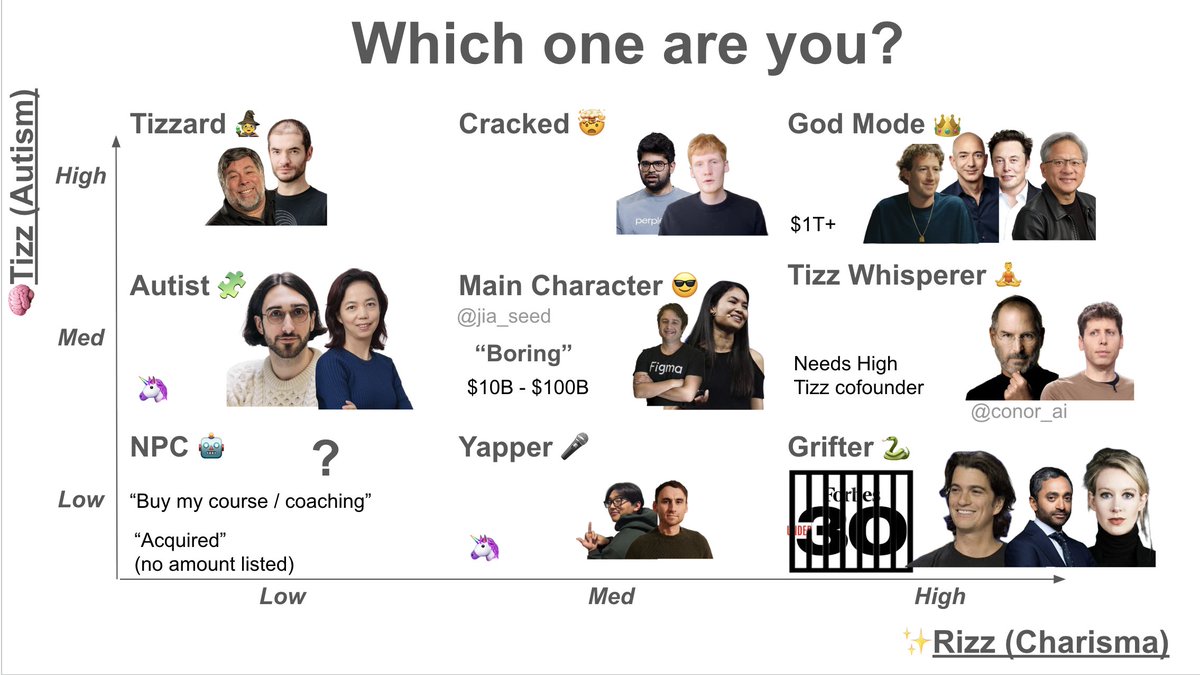

I wrote a lot about the people I call “shapers” in the first part of my book Principles. I use the word to mean someone who comes up with unique and valuable visions and builds them out beautifully, typically over the doubts of others. (1/2) #principleoftheday