GermainGauthier

610 posts

GermainGauthier

@PinchOfData

Assistant Professor @UniBocconi | Previously @ETH, @CrestUmr | AI, Social Media, and Political Economy

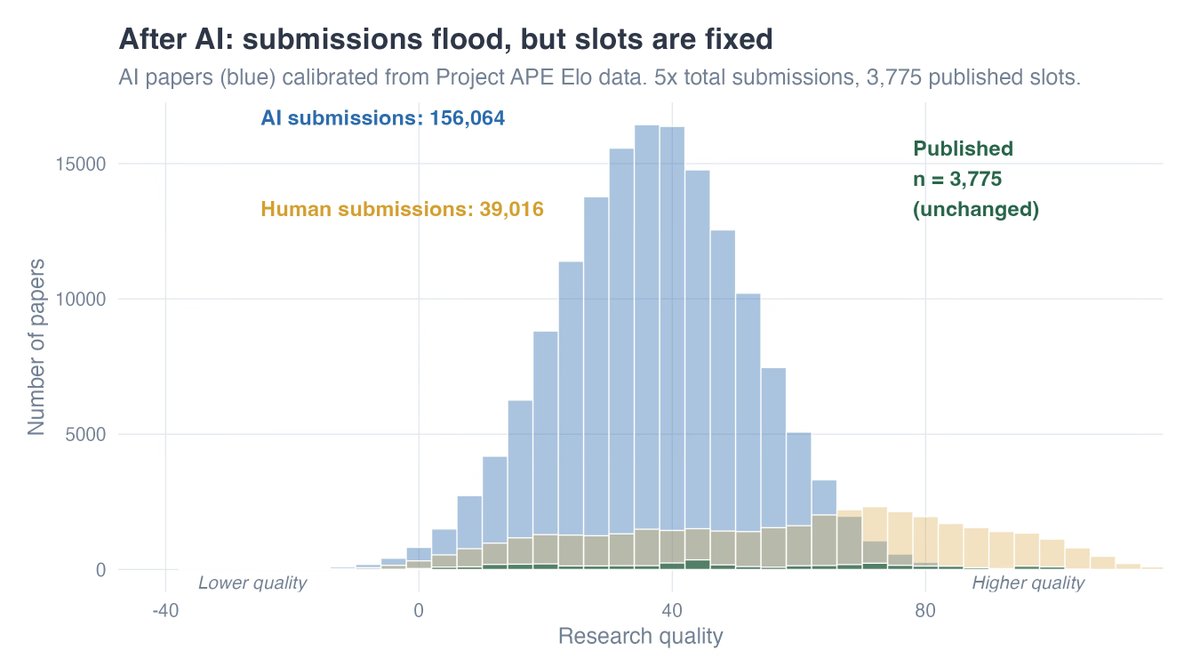

Recently I presented in a faculty seminar and used Mentimeter to solicit private/anonymous beliefs what the likelihood of some AI-system that can produce an AER-level paper in 1 hour, under a cost of 50 USD... More than 1/3 reported they expect that within a year. But beliefs are extremely heterogeneous... no consensus whatsoever.

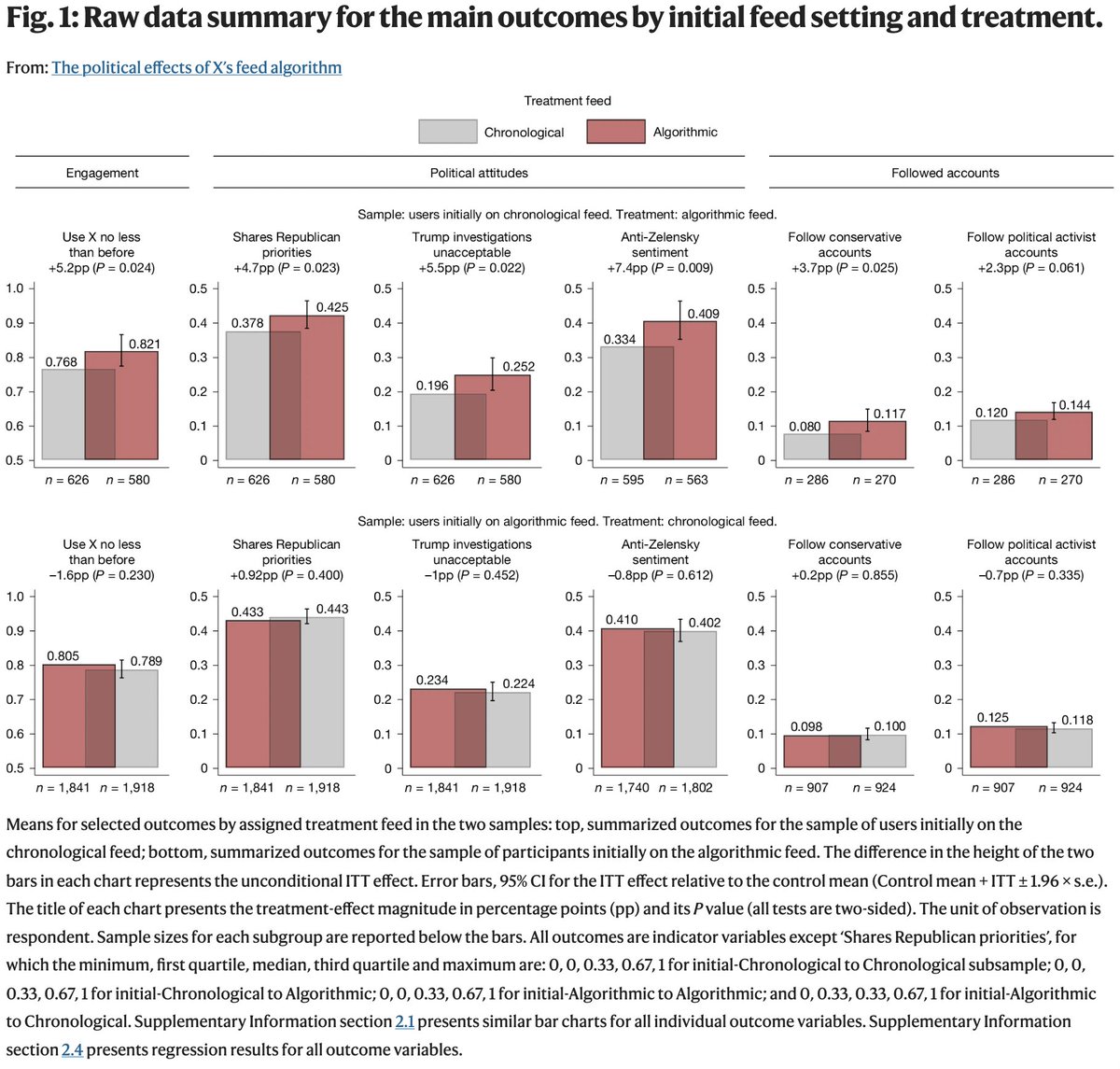

There's a growing worry that AI will break empirical social science -- that agents can p-hack until they find something that "works." We think that worry deserves to be taken seriously. Our new paper shows that is true empirically and makes it precise: njw.fish/static/papers/…

📢#CallForPapers Submissions are now welcome for the 11th Monash-Paris-Warwick-Zurich-CEPR Text-As-Data Workshop. Papers using text, audio, images, or other unstructured data are welcome. 📅Deadline: 13 March Organisers: @ellliottt @essobecker & @phinifa ow.ly/pMRO50Y7xff

Every journal editor should read this: causalinf.substack.com/p/claude-code-…

Every journal editor should read this: causalinf.substack.com/p/claude-code-…

Today @PinchOfData (@Unibocconi) presented "Measuring Crime Reporting and Incidence: Method and Application to #MeToo". He finds that #MeToo led to higher victim reporting and arrest probabilities, while also deterring sex crimes.