RunAnywhere (YC W26)

209 posts

RunAnywhere (YC W26)

@RunAnywhereAI

RunAnywhere: The default way of running on-device AI at scale. Backed by @ycombinator @yoheinakajima

At @RunAnywhereAI we just extended MetalRT with 👀 support: beating @Apple at their own game once AGAIN and delivering the FASTEST VLM decode engine on the market for Apple Silicon right now. - 279 tok/s vision decode - 1.22× faster than mlx-vlm We crushed mlx-vlm and llama.cpp across every configuration tested on Qwen3-VL-2B-Instruct 4-bit quantized across multiple image resolutions on a single M4 Max. Vision decode just hit warp speed! Video analysis coming soon :) #ycombinator #runanywhere #metalrt #applesilicon #vlm #ondeviceai

At @RunAnywhereAI we just extended MetalRT with 👀 support: beating @Apple at their own game once AGAIN and delivering the FASTEST VLM decode engine on the market for Apple Silicon right now. - 279 tok/s vision decode - 1.22× faster than mlx-vlm We crushed mlx-vlm and llama.cpp across every configuration tested on Qwen3-VL-2B-Instruct 4-bit quantized across multiple image resolutions on a single M4 Max. Vision decode just hit warp speed! Video analysis coming soon :) #ycombinator #runanywhere #metalrt #applesilicon #vlm #ondeviceai

In just 48 hours at @RunAnywhereAI we built MetalRT: beating @Apple at their own game and delivering the FASTEST LLM inference engine on the market for Apple Silicon right now. - 570 tok/s decode @liquidai LFM 2.5-1.2B 4-bit - 658 tok/s decode @Alibaba_Qwen Qwen3-0.6B, 4-bit - 6.6 ms time-to-first-token - 1.19× faster than Apple's own MLX (identical model files) - 1.67× faster than llama.cpp on average We crushed Apple MLX, llama.cpp, uzu(by TryMirai), and Ollama across four different 4-bit models, including the on-device optimized LFM2.5-1.2B on a single M4 Max. Excited for this one! #ycombinator #runanywhere #ondeviceai #applesilicon #mlx

just shipped Personalities on RCLI: your local voice AI can now be professional, sarcastic, cynical, or a full-blown nerd who references Star Wars in every answer siri could never have a personality crisis running entirely on your GPU all of it still powered by our fastest inference engine: MetalRT by @RunAnywhereAI , check it out! #runanywhere #ondeviceai #sirikiller #metalRT

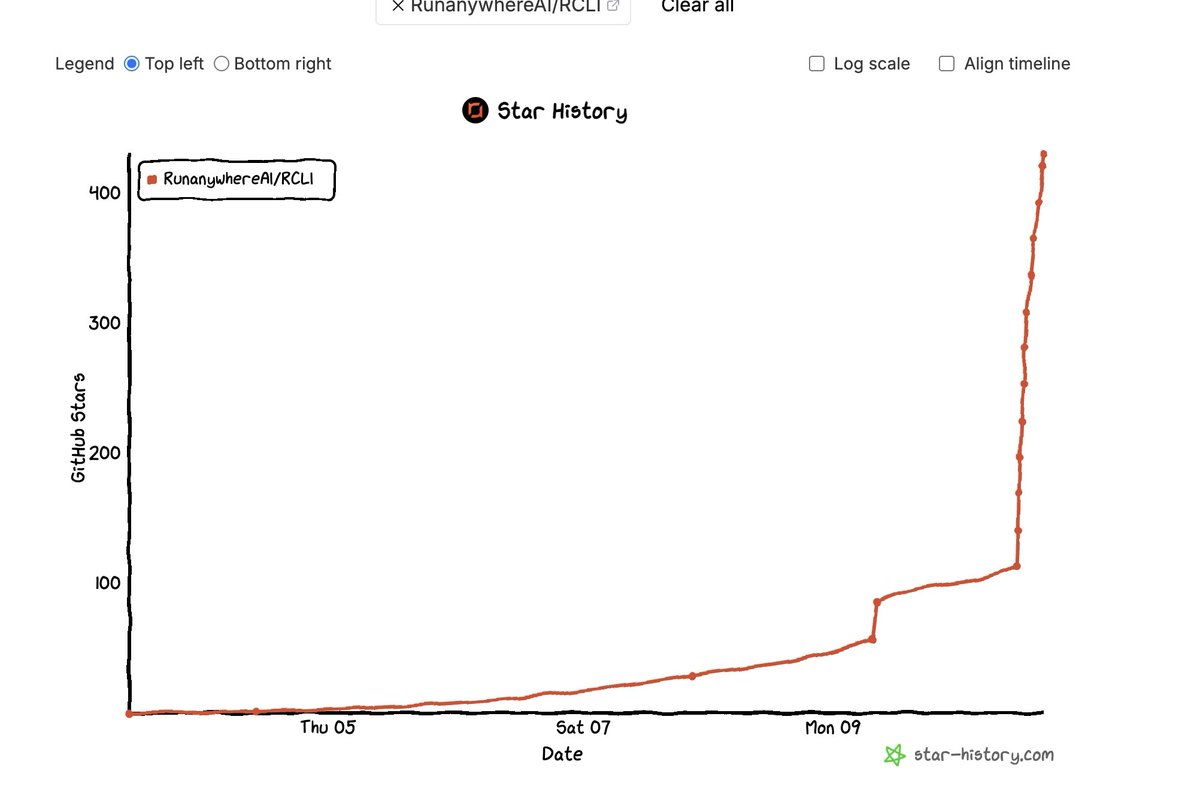

Launching RCLI + MetalRT: the FASTEST on-device voice AI for macOS, crushing Apple MLX, MLX Whisper, MLX Audio, llama.cpp, sherpa-onnx, Ollama, uzu & every inference engine out there, built by @RunAnywhereAI - 658 tok/s LLM decode (1.19x > MLX) - 714x real-time STT (4.6x > MLX Whisper) - 8.8x RTF TTS (2.8x > MLX Audio) - 63ms voice-to-audio latency Hybrid RAG: <4ms retrieval (HNSW+BM25+RRF), sub-200ms w/ embedding cache 36 macOS actions: open apps, web search, control Spotify - all local, offline, open-source - accepting PRs for more custom actions. No API keys. Stay tuned, more updates incoming, about to hit WARP SPEED!! #ycombinator #runanywhere #applesilicon #metalRT

The top .1% of users are playing with local LLMS This will 10x every 12 months.... Until Apple, Dell and MSFT have one on your local device BY DEFAULT in 2028 This takes the local hardware spec race from irrelevant for 99% of users to critical. Going to be insane.

We built the future of voice AI on your Mac. RCLI is here @RunAnywhereAI! Our optimized end-to-end voice + RAG pipeline: talk → instant control + doc answers, ~131ms latency, - all LOCAL - all OPEN SOURCE - all FREE. 43 actions, no cloud, your data forever private. Siri: “Let me think about that…” RCLI: 131 ms voice-to-action. Done. Next. Experience it—install & command your machine: curl -fsSL raw.githubusercontent.com/RunanywhereAI/… | bash Next level incoming: MetalRT support (fastest Apple Silicon inference 658 tok/s decode, blazing ASR and TTS). Your Mac's about to hit warp speed! #OnDevice #MetalRT #YCW26 #NoMoreWaiting

In just 48 hours at @RunAnywhereAI we built MetalRT: beating @Apple at their own game and delivering the FASTEST LLM inference engine on the market for Apple Silicon right now. - 570 tok/s decode @liquidai LFM 2.5-1.2B 4-bit - 658 tok/s decode @Alibaba_Qwen Qwen3-0.6B, 4-bit - 6.6 ms time-to-first-token - 1.19× faster than Apple's own MLX (identical model files) - 1.67× faster than llama.cpp on average We crushed Apple MLX, llama.cpp, uzu(by TryMirai), and Ollama across four different 4-bit models, including the on-device optimized LFM2.5-1.2B on a single M4 Max. Excited for this one! #ycombinator #runanywhere #ondeviceai #applesilicon #mlx

We built the future of voice AI on your Mac. RCLI is here @RunAnywhereAI! Our optimized end-to-end voice + RAG pipeline: talk → instant control + doc answers, ~131ms latency, - all LOCAL - all OPEN SOURCE - all FREE. 43 actions, no cloud, your data forever private. Siri: “Let me think about that…” RCLI: 131 ms voice-to-action. Done. Next. Experience it—install & command your machine: curl -fsSL raw.githubusercontent.com/RunanywhereAI/… | bash Next level incoming: MetalRT support (fastest Apple Silicon inference 658 tok/s decode, blazing ASR and TTS). Your Mac's about to hit warp speed! #OnDevice #MetalRT #YCW26 #NoMoreWaiting

Esta startup es una seria amenaza para Siri ☠️ Se llama @RunAnywhereAI y acaba de soltar RCLI: un asistente de voz 100% local que ya le gana en velocidad y privacidad. 131 ms end-to-end (voz → respuesta hablada) ⭐️Controla 43 acciones nativas de macOS (Spotify, ventanas, FaceTime, recordatorios…). ⭐️RAG instantáneo en tus PDFs y documentos. ⭐️Todo offline, sin nube, sin API keys. Eso no es todo. Lo que se viene... mamita. El founder acaba de revelar MetalRT (su nuevo motor TTS hecho con Metal y lo que ves en el vídeo) que logra 291 ms para 5 palabras y 8.4x más rápido que el tiempo real. Cuando salga esa actualización… Siri va a llorar. Por mientras, REPOOO 👇

Qwen just dropped a 2B multimodal base model with 1M token context on Hugging Face Native 262k context, extensible to 1M tokens for long documents

“The first time we realized agents were possible was watching @yoheinakajima build with Claude and Replit side by side.” @Replit CEO @amasad on the origins of coding agents: “He would prompt Claude Code, paste it into Replit, run it, get an error, copy the error back to Claude, and repeat.” “And watching that process, it became obvious.” “We could just automate the whole loop.” “That’s where agents start.”