Liquid AI

508 posts

@liquidai

Build efficient general-purpose AI at every scale.

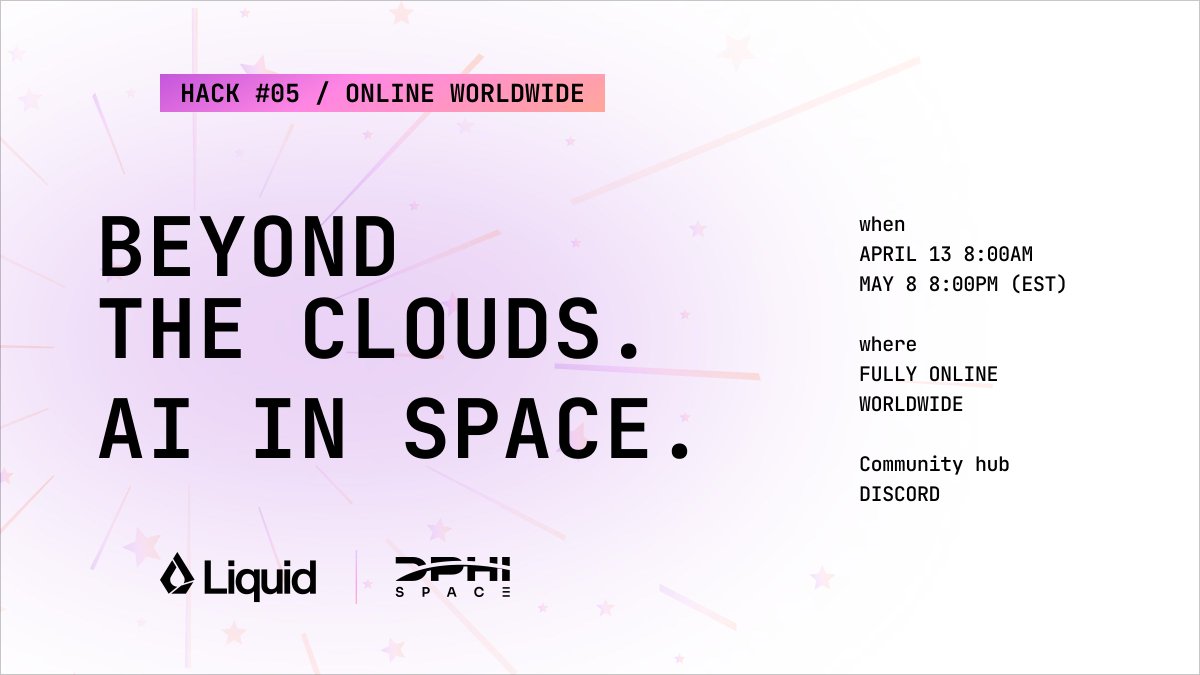

AI is beginning to move beyond the clouds… Registration is open for Hack #05: AI in Space, in collaboration with @DPhiSpace. A hackathon exploring what becomes possible when AI operates closer to satellites, orbital systems, and space-based data. For developers, researchers, and builders interested in the future of AI in space.
Register → luma.com/n9cw58h0
Learn more → hackathons.liquid.ai 🚀
Join the conversation → discord.com/channels/13854…

Model’s so fast, Josh had to slow down the video capture to showcase this demo! @liquidai

NEW Video: The era of Pharmaceutical Superintelligence has arrived. Join Alex Zhavoronkov (Insilico Medicine) and Ramin Hasani (@liquidai) for an exclusive fireside chat and live demo of LFM2-2.6B-MMAI. This 2.6B-parameter scientific foundation model is a game-changer for drug discovery. Trained via the Science #MMAIGym, it delivers state-of-the-art performance in molecular property prediction and ADMET endpoints, all while running on-premise.
Why it matters:
Data Sovereignty: High-performance AI that keeps proprietary IP behind your firewall, with no cloud transmission required.
Efficiency: Outperforms models 10x its size, reducing compute costs and R&D timelines.
End-to-End Capability: Supports every stage from initial hit discovery to optimization.
Watch it here: youtube.com/watch?v=WoLyym…
#LiquidAI #InsilicoMedicine #Biotech #OnPremiseAI #DrugDiscovery #DeepTech

> 385ms average tool selection.
> 67 tools across 13 MCP servers.
> 14.5GB memory footprint.
> Zero network calls.
LocalCowork is an AI agent that runs on a MacBook. Open source. 🧵

An example workflow the agent executed locally: search receipts → OCR → parse vendor/date/items → check duplicates → export CSV → flag anomalies → generate reconciliation report. A real workflow. Running entirely on consumer hardware. youtu.be/WnxxW2jTDgE
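The post doesn't show how LocalCowork implements these steps. Below is a minimal Python sketch of the stages after OCR (parse → check duplicates → export CSV → flag anomalies → report), assuming OCR has already produced plain text in a hypothetical layout: vendor on line 1, date on line 2, then `description: amount` lines. `Receipt`, `parse_receipt`, `split_duplicates`, `flag_anomalies`, `reconciliation_report`, and the "3x the median total" anomaly rule are all illustrative assumptions, not LocalCowork's actual code or API.

```python
import csv
import io
from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class Receipt:
    vendor: str
    date: str     # ISO date string, e.g. "2024-03-01"
    items: tuple  # tuple of (description, amount) pairs

    @property
    def total(self) -> float:
        return round(sum(amount for _, amount in self.items), 2)


def parse_receipt(text: str) -> Receipt:
    """Parse OCR text in a HYPOTHETICAL layout:
    line 1 = vendor, line 2 = date, remaining lines = "description: amount"."""
    lines = [ln.strip() for ln in text.strip().splitlines() if ln.strip()]
    vendor, date = lines[0], lines[1]
    items = []
    for line in lines[2:]:
        desc, amount = line.rsplit(":", 1)
        items.append((desc.strip(), float(amount)))
    return Receipt(vendor, date, tuple(items))


def split_duplicates(receipts):
    """Keep the first receipt per (vendor, date, total) key; later matches are duplicates."""
    seen, unique, dupes = set(), [], []
    for r in receipts:
        key = (r.vendor.lower(), r.date, r.total)
        if key in seen:
            dupes.append(r)
        else:
            seen.add(key)
            unique.append(r)
    return unique, dupes


def export_csv(receipts) -> str:
    """Render one CSV row per receipt (vendor, date, total) into a string."""
    buf = io.StringIO()
    writer = csv.writer(buf)
    writer.writerow(["vendor", "date", "total"])
    for r in receipts:
        writer.writerow([r.vendor, r.date, f"{r.total:.2f}"])
    return buf.getvalue()


def flag_anomalies(receipts, factor=3.0):
    """ASSUMED rule: flag receipts whose total exceeds `factor` x the median total."""
    if not receipts:
        return []
    med = median(r.total for r in receipts)
    return [r for r in receipts if r.total > factor * med]


def reconciliation_report(receipts, factor=3.0) -> str:
    """Summarize duplicates, anomalies, and the de-duplicated total."""
    unique, dupes = split_duplicates(receipts)
    flagged = flag_anomalies(unique, factor)
    total = sum(r.total for r in unique)
    lines = [
        f"receipts: {len(receipts)}",
        f"duplicates removed: {len(dupes)}",
        f"anomalies flagged: {len(flagged)}",
        f"reconciled total: {total:.2f}",
    ]
    lines += [f"  ANOMALY {r.vendor} {r.date} {r.total:.2f}" for r in flagged]
    return "\n".join(lines)


if __name__ == "__main__":
    raw = [
        "Cafe Uno\n2024-03-01\nCoffee: 3.50\nBagel: 2.25",
        "Cafe Uno\n2024-03-01\nCoffee: 3.50\nBagel: 2.25",  # same receipt scanned twice
        "Office Mart\n2024-03-02\nPaper: 8.00",
        "Print Shop\n2024-03-03\nPoster: 6.00",
        "Hotel Nine\n2024-03-04\nRoom: 480.00",
    ]
    receipts = [parse_receipt(t) for t in raw]
    print(export_csv(receipts))
    print(reconciliation_report(receipts))
```

With the sample data, the second Cafe Uno scan is dropped as a duplicate, and the 480.00 hotel receipt is flagged because it exceeds three times the median of the remaining totals. Keying duplicates on vendor, date, and total is a deliberately simple choice; a real pipeline would likely also compare line items or receipt numbers.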