Swarnim Walavalkar

5.8K posts

@SwarnimVW

building for builders @devfolio • playing the infinite game • #T1D // mostly RTs

Remote · Joined October 2014

1.5K Following · 684 Followers

Swarnim Walavalkar retweeted

Introducing ml-intern, the agent that just automated the post-training team @huggingface

It's an open-source implementation of the real research loop that our ML researchers do every day. You give it a prompt, it researches papers, goes through citations, implements ideas in GPU sandboxes, iterates and builds deeply research-backed models for any use case. All built on the Hugging Face ecosystem.

It can pull off crazy things:

We made it train the best model for scientific reasoning. It went through citations from the official benchmark paper, found OpenScience and Nemotron-CrossThink, added 7 difficulty-filtered dataset variants from ARC/SciQ/MMLU, and ran 12 SFT runs on Qwen3-1.7B. This pushed the score 10% → 32% on GPQA in under 10h. Claude Code's best: 22.99%.

In healthcare settings it inspected available datasets, concluded they were too low quality, and wrote a script to generate 1100 synthetic data points from scratch for emergencies, hedging, multilingual etc. Then upsampled 50x for training. Beat Codex on HealthBench by 60%.
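The post does not say how the upsampling was done. As a minimal sketch, assuming plain repetition followed by a shuffle (the record fields and the `upsample` helper are hypothetical, not taken from ml-intern), 1100 synthetic examples upsampled 50x yield 55,000 training rows:

```python
import random


def upsample(examples, factor, seed=0):
    """Repeat every example `factor` times, then shuffle deterministically."""
    repeated = [ex for ex in examples for _ in range(factor)]
    random.Random(seed).shuffle(repeated)
    return repeated


# Hypothetical stand-ins for the 1100 synthetic data points.
synthetic = [{"id": i, "prompt": f"case {i}"} for i in range(1100)]

train_set = upsample(synthetic, factor=50)
print(len(train_set))  # 1100 * 50 rows
```

Repetition adds no new information; it only changes how much weight the small synthetic set carries relative to any other data in the training mix.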

For competitive mathematics, it wrote a full GRPO script, launched training with A100 GPUs on hf.co/spaces, watched rewards climb and then collapse, and ran ablations until it succeeded. All fully backed by papers, autonomously.
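For readers unfamiliar with GRPO, its core step scores each sampled completion relative to the other completions for the same prompt. The sketch below is not the script ml-intern wrote; it only illustrates the group-relative advantage, assuming population standard deviation and a small `eps` for numerical stability (both are choices made here, and implementations differ):

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-6):
    """Normalize rewards within one group of completions for a single prompt."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Four sampled answers to one math problem: two correct, two wrong.
mixed = group_relative_advantages([1.0, 0.0, 0.0, 1.0])

# If every answer in the group gets the same reward, all advantages are zero.
uniform = group_relative_advantages([1.0, 1.0, 1.0, 1.0])
print(mixed, uniform)
```

The second case shows one reason rewards are worth monitoring: a group with identical rewards contributes no learning signal under this formulation.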

How does it work?

ml-intern makes full use of the HF ecosystem:

- finds papers on arxiv and hf.co/papers, reads them fully, walks citation graphs, pulls datasets referenced in methodology sections and on hf.co/datasets

- browses the Hub, reads recent docs, inspects datasets and reformats them before training so it doesn't waste GPU hours on bad data

- launches training jobs on HF Jobs if no local GPUs are available, monitors runs, reads its own eval outputs, diagnoses failures, retrains

ml-intern deeply embodies how researchers work and think. It knows what data should look like and what good models feel like.

Releasing it today as a CLI and a web app you can use from your phone/desktop.

CLI: github.com/huggingface/ml…

Web + mobile: huggingface.co/spaces/smolage…

And the best part? We also provisioned $1k of GPU resources and Anthropic credits for the quickest among you to use.

Swarnim Walavalkar retweeted

@ashwinexe @OpenAI @AniketRaj314 @gabrielchua @yashrajnayak @OpenAIDevs @reach_vb This is sooo sick! ✨

Absolute GOATS! 🫡

We won 2nd place at the @OpenAI Codex Hackathon 🏆

Yes, we (@AniketRaj314 and I) made both the project and the video live in ~6 hours.

Here’s the demo video.

Shout out @gabrielchua @yashrajnayak @OpenAIDevs and the whole team for organising this.

#CodexBLR

Swarnim Walavalkar retweeted

My dear front-end developers (and anyone who’s interested in the future of interfaces):

I have crawled through the depths of hell to bring you what will be, for the foreseeable future, one of the more important foundational pieces of UI engineering (if not in implementation then certainly at least in concept):

A fast, accurate and comprehensive userland text measurement algorithm in pure TypeScript, usable for laying out entire web pages without CSS, bypassing DOM measurements and reflow.

Swarnim Walavalkar retweeted

a lot of value is going to be created by guys who know math and physics well and start vibe-research-engineering with codex / claude code to apply ideas to llm training and research.

pre vibe-coding / vibe-research, the best researchers already did this — the og google folks, the deep seek bros, turboquant authors, etc.

good technical ideas have always mattered, now even more so i guess

Swarnim Walavalkar retweeted

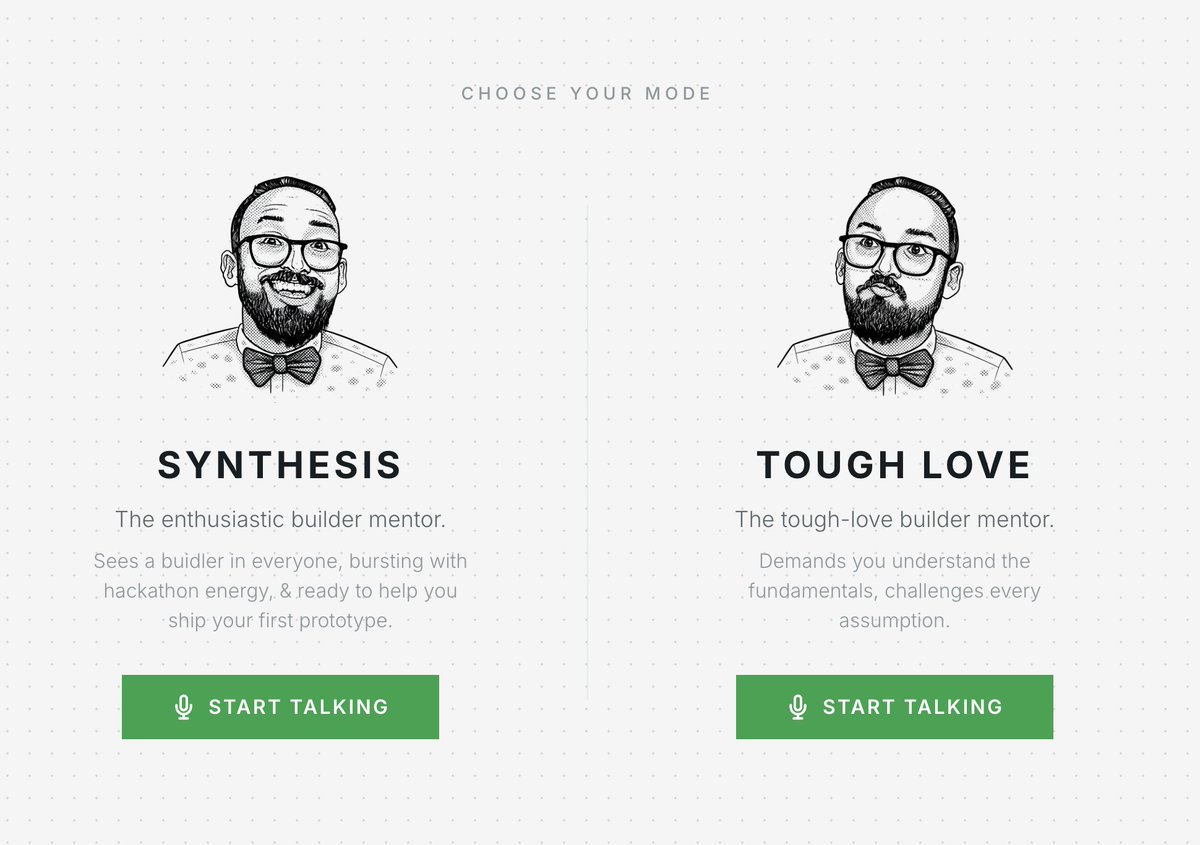

Love seeing how the @ethereumfndn is using @elevenlabs to give builders and devs 24/7 project feedback!

You can now get direct feedback from people like @austingriffith. It even sounds exactly like him because ElevenLabs' voice cloning accuracy is incredible.

Try it out!

sophia (@sodofi_):

the real austin griffith isn't available 24/7, but austinxbt never sleeps. want austin's feedback on your project? talk to him here --> austinxbt.devfolio.co. it even sounds like him too!

Swarnim Walavalkar retweeted

agent judges are controversial. and rightly so

at @synthesis_md we wanted to try something new

we designed a system so agents scale human judgement, not replace it

here's how our agentic judging works:

1. humans define taste - partners set judging criteria (example projects, tech specs, and rubrics)

2. agents do a light pass - personalized agent judges scan projects and return a filtered shortlist to each partner

3. humans calibrate - partners fix misses and correct the agent

4. agents do a deep review - once aligned, agents run deep reviews (code, idea, demos) and propose scores

5. humans confirm winners - winners go through kyc and claim prizes
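The five steps above can be sketched as a small pipeline. This is a toy illustration, not the Synthesis system: the `Project` and `AgentJudge` classes, the tag-matching shortlist rule, and the tag-count score are all hypothetical stand-ins for the real criteria and reviews.

```python
from dataclasses import dataclass, field


@dataclass
class Project:
    name: str
    tags: set


@dataclass
class AgentJudge:
    required_tags: set  # step 1: criteria set by the human partner
    corrections: dict = field(default_factory=dict)  # step 3: human overrides

    def light_pass(self, projects):
        """Step 2: shortlist projects, honoring any human corrections."""
        shortlist = []
        for p in projects:
            default = bool(self.required_tags & p.tags)
            if self.corrections.get(p.name, default):
                shortlist.append(p)
        return shortlist

    def calibrate(self, name, keep):
        """Step 3: a human fixes a miss or removes a false positive."""
        self.corrections[name] = keep

    def deep_review(self, shortlist):
        """Step 4: propose scores (toy rule: number of matching tags)."""
        return {p.name: len(self.required_tags & p.tags) for p in shortlist}


projects = [
    Project("dex-bot", {"defi", "agents"}),
    Project("nft-art", {"nft"}),
    Project("wallet-ai", {"agents"}),
]
judge = AgentJudge(required_tags={"defi", "agents"})

first_pass = [p.name for p in judge.light_pass(projects)]
judge.calibrate("nft-art", True)     # the human adds a project the agent missed
judge.calibrate("wallet-ai", False)  # the human drops a false positive
proposed = judge.deep_review(judge.light_pass(projects))

# Step 5: only humans confirm winners; agent scores are proposals.
human_confirmed = {"dex-bot"}
winners = [name for name in proposed if name in human_confirmed]
print(first_pass, proposed, winners)
```

In this sketch the agent never finalizes anything: its shortlist can be overridden in step 3, and its scores only take effect once a human confirms them in step 5.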

this isn’t one model deciding everything. 25+ judges, each with their own requirements and values, shape their agents

a plurality of perspectives encoded from the start >>

Swarnim Walavalkar retweeted

the real austin griffith isn't available 24/7

but austinxbt never sleeps

want austin's feedback on your project?

talk to him here --> austinxbt.devfolio.co

it even sounds like him too!

Swarnim Walavalkar retweeted

You can now talk to @austingriffith through his agent replica.

This agentic judge is also helping process all your submissions so they might already have context on your work.

Give it a spin, it even sounds like him.

austinxbt.devfolio.co

Swarnim Walavalkar retweeted

WHY WE ARE TRYING SOMETHING NEW.

At @synthesis_md we invited AI agents to be judges.

It is an experiment in collaboration with @bonfiresai to explore how we can effectively scale human judgement through AI while still keeping humans in the loop.

Here is why:

Hackathons have a judging problem.

A handful of humans review hundreds of projects in a compressed window. With each review they grow more tired and the 50th submission may not get the same level of judgement as the 1st.

This is unfair.

It means that the best ideas don't always win. A winner may just get lucky with timing, or with which judge happened to open their submission.

But this problem isn't unique to hackathons.

Grants, governance, juries: the bottleneck is always the same. High quality human attention is scarce and expensive.

The first instinct of people solving for this may be to hand the whole thing to AI:

"Just let the model score everything."

But we believe that's the wrong move.

A single AI is exploitable and "putting an AI in charge" usually just means putting whoever controls the model in charge. The centralization risk doesn't disappear.

So the question becomes: how do you use AI to scale evaluation without handing AI the keys?

Our answer is:

You don't want one AI making decisions. You want multiple agents proposing evaluations, and humans providing the ground truth that keeps them honest. Agents do the heavy lifting and humans do the steering.
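One way to picture this is an aggregation rule where agent scores are only proposals. The sketch below is an assumption made for illustration, not the actual Synthesis or Bonfires logic: the `aggregate` function, the median rule, and the `max_spread` threshold are all hypothetical. A human score always wins, and strong disagreement between agents sends a project back to a human.

```python
from statistics import median


def aggregate(agent_scores, human_scores, max_spread=2.0):
    """Combine several agents' proposed scores with human ground truth."""
    results = {}
    for project, scores in agent_scores.items():
        if project in human_scores:
            # Human judgement overrides whatever the agents proposed.
            results[project] = (human_scores[project], "human")
        elif max(scores) - min(scores) > max_spread:
            # Agents disagree too much: escalate instead of trusting the median.
            results[project] = (median(scores), "needs human review")
        else:
            results[project] = (median(scores), "agents")
    return results


agent_scores = {
    "a": [7.0, 8.0, 7.5],
    "b": [3.0, 9.0, 6.0],
    "c": [5.0, 5.0, 6.0],
}
human_scores = {"c": 8.0}  # a human spot check corrects project "c"

results = aggregate(agent_scores, human_scores)
print(results)
```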

Think about how a court works. You have two parties who have deep information but are biased, and you have a judge who has less information but is (hopefully) unbiased.

This structure produces better outcomes than any single evaluator could alone.

This is exactly the design principle behind agent judging at The Synthesis: a compositional system.

What this looks like:

The @bonfiresai agents, trained by participating partners, don't get tired at submission 41.

These agents can engage with a project's code, its documentation, and its onchain activity. They can ask follow-up questions and cross-reference claims, and they bring a thoroughness that human judges at hour six simply cannot.

However, as brilliant as they are, these agents lack taste.

They lack the intuitive sense for what matters that a builder who's spent years in the ecosystem carries in their bones. That's what the human judges bring.

Through combining both AI and human judges we get: thoroughness + taste.

This idea has legs well beyond hackathons.

@devanshmehta’s deepfunding work explores the same pattern for public goods: open markets of AIs proposing how credit and resources should flow, with human juries spot checking to keep the system aligned. The principle is the same.

AKA let machines scale, but let humans steer.

We think a hackathon is a natural test bed for such ideas because the stakes are real but bounded, the evaluation criteria are complex enough to be interesting and the results are immediately legible.

So here's what The Synthesis actually is.

Yes, it's a hackathon.

Yes, there are bounties and prizes up to $100,000 and a deadline (March 22nd).

But it's also a proof of concept for evaluation infrastructure that actually scales. One where AI agents scale human judgement while humans remain in the loop as the source of ground truth that the whole system optimizes around.

Here is to trying new things.

More soon.

Swarnim Walavalkar retweeted

Join us for tomorrow’s in-person co-working for @synthesis_md at @2586Labs.

Synthesis is the Ethereum ecosystem’s first agentic hackathon, with 25+ partners including teams from @ethereumfndn, @Uniswap, @MetaMask, @Base, @Celo, and others.

$100,000+ in prizes.

Apply now 👇
