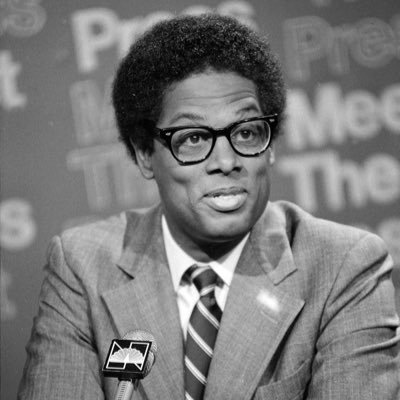

Valentin

@VLemort

🏴‍☠️ – building mcpresso (turn your API into a compliant MCP server ready to deploy) and granular software (sandboxes for creating safe and powerful AI agents)

The fundamental coding agent abstraction is the CLI. It’s not a UI or form factor preference, it’s rooted in the fact that agents need access to the OS layer. Coding agents are, at their core, computer-use agents. They run programs, create new ones, install missing ones, scan the filesystem, read logs. More than text editors, they’re *automating your computer* at a low level. It’s more accurate to think of Claude Code as “AI for your operating system” than a continuation of the copilot autocompletion → IDE AI assistance paradigm. Another consequence is that it works for any computer. Your desktop computer, but crucially, also the ones running in the cloud. 𝚗𝚟𝚒𝚖 nerds like yours truly always liked that it’s an editor that runs on your Mac but also any machine you 𝚜𝚜𝚑 into. Imagine if you could launch a million Claude Codes concurrently to tackle a bug, find cybersecurity threats, process your issue backlog, build features based on user feedback, run QA… That’s why we’re building vercel.com/sandbox as the infinite compute layer for agents.

My entire feed is just people talking about Sandboxes. Seems my prediction that this will be the primitive of 2026 is off to a good start.

you’re like 6 prompts away from infinitely customizable personal agi. anthropic gave you a world class agentic harness for free. use it!!!

The bitter lesson of building LLM apps: models are getting smarter faster than you can hack around their current limitations.