Thomas Chaton retweeted

How can we use small LLMs to shift more AI workloads onto our laptops and phones?

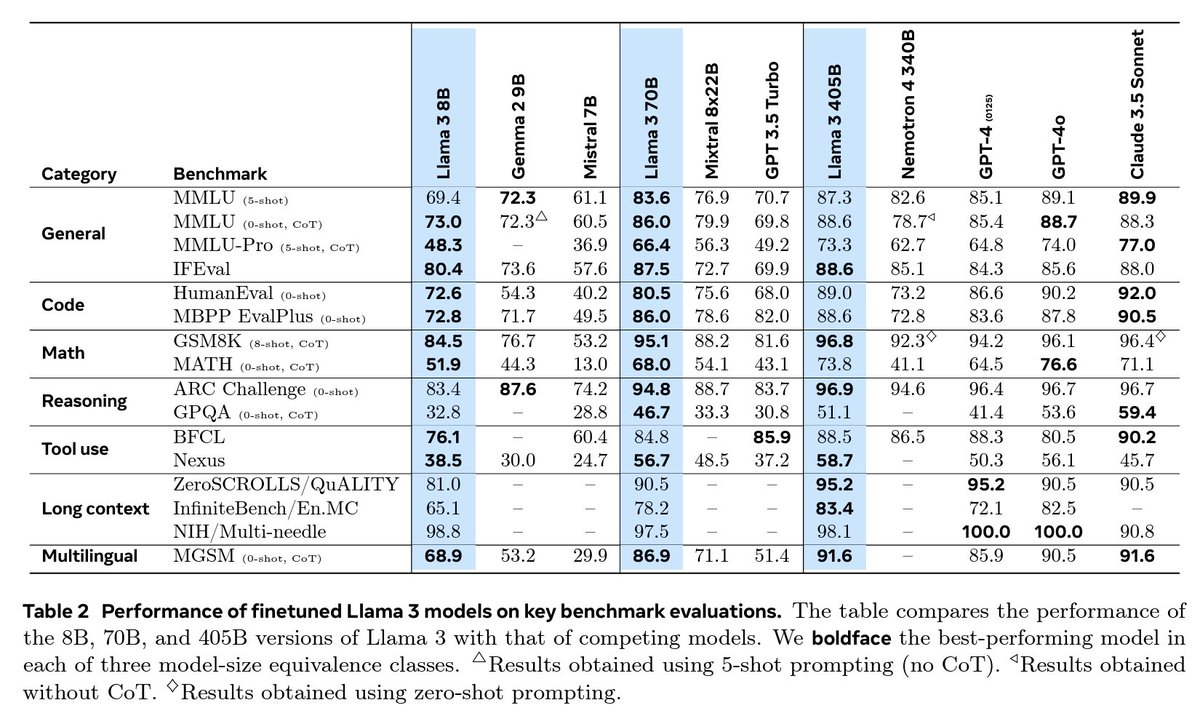

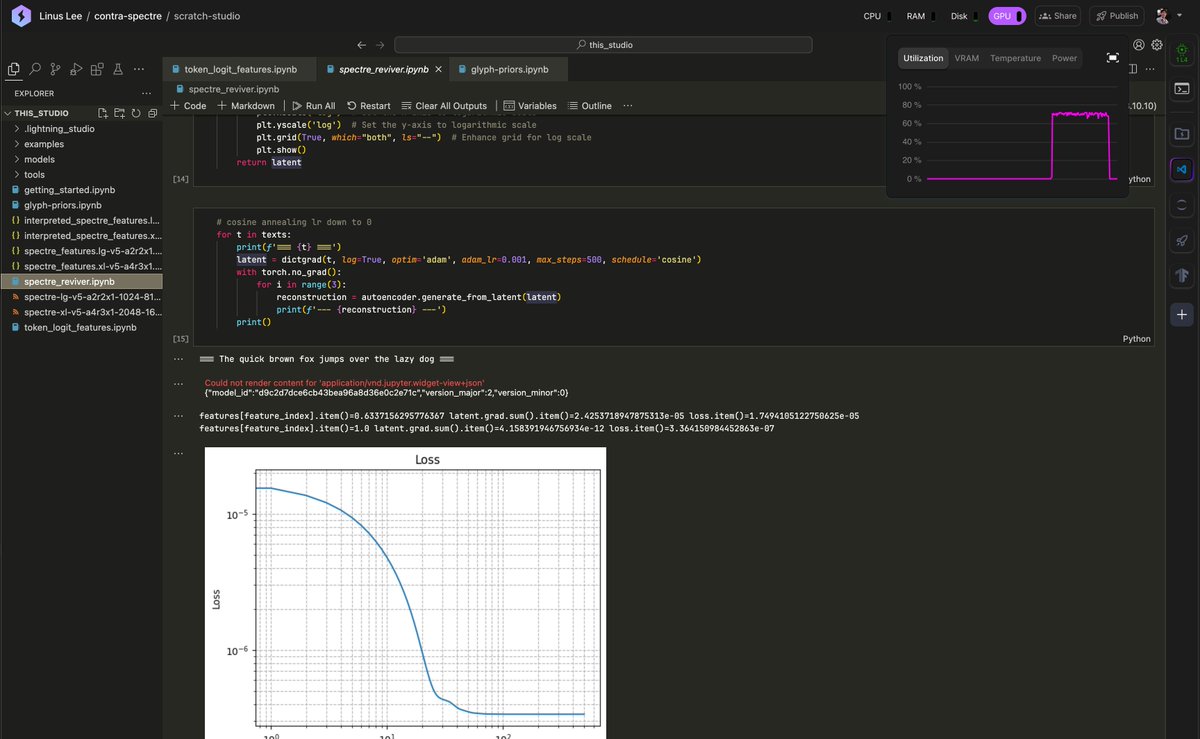

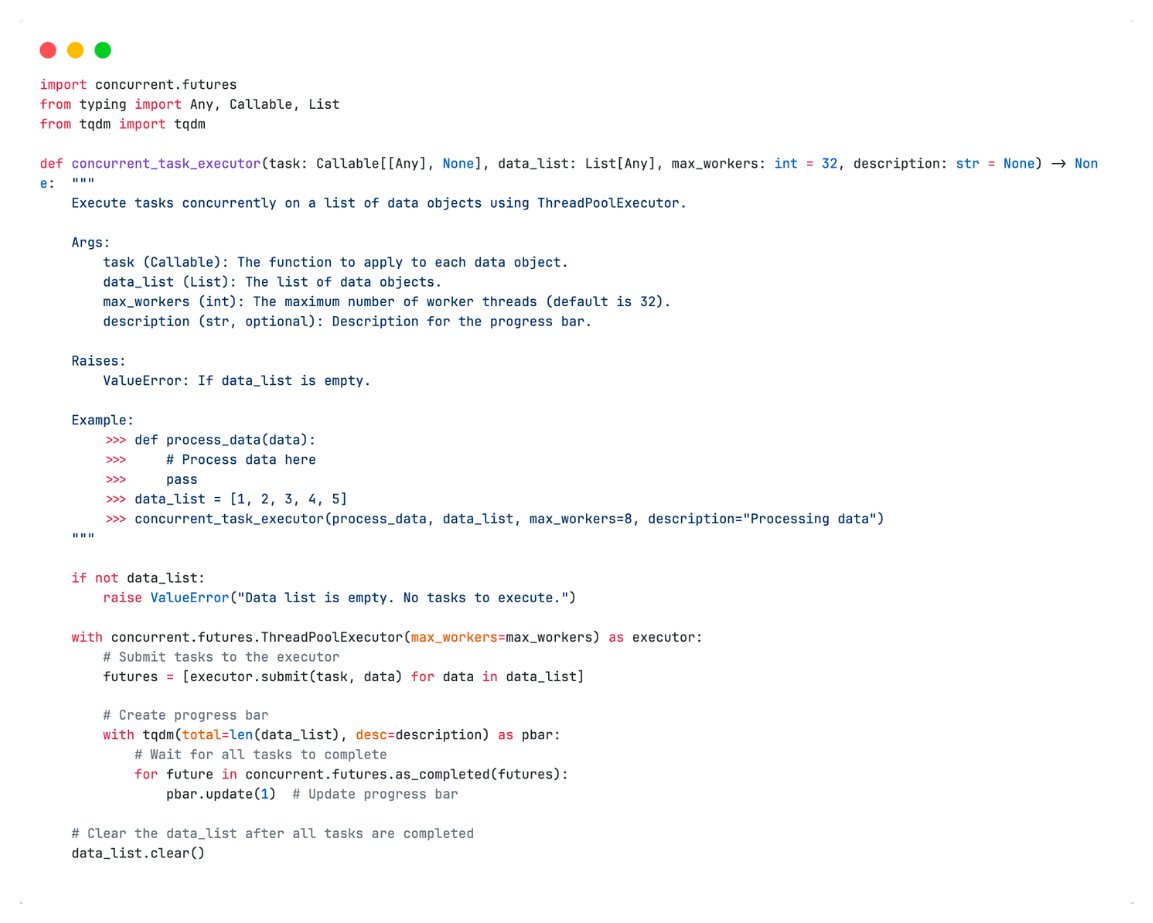

In our paper and open-source code, we pair on-device LLMs (@ollama) with frontier LLMs in the cloud (@openai, @together) to solve token-intensive workloads on your 💻 at 17.5% of the cloud cost while maintaining 97.9% of the accuracy.

See Gru and the Minions in action below, 🔉on please (h/t @cartesia)!
