Lightning AI ⚡️

4.9K posts

@LightningAI

The AI omnicloud PyTorch developers love. Made the first AI Studio & PyTorch Lightning. Get help: https://t.co/a69wnEBpKH

New York, NY · Joined August 2019

89 Following · 46.9K Followers

Thanks for your question! Credits aren’t pooled or transferable between accounts. Each user keeps and uses their own credits, even within a teamspace.

Free credits are tied to individual usage and are reissued monthly based on activity. Your account must use its free credits; otherwise, it will no longer be topped up to 15 credits going forward.

@LightningAI I have a few questions regarding account credits and teamspace usage in Lightning AI.

Currently, I have an account with 15 free credits. Within my teamspace, I have a default studio where I uploaded my dataset and training source code (SC1). In addition, I have created

One of the most common training blockers is storage.

@Google has announced Rapid Bucket, which connects directly to the @PyTorch ecosystem via gRPC bidirectional streaming.

For PyTorch Lightning users, this means performance gains for:

Data loading: dramatically faster fsspec-based pipelines, automatically

Checkpointing: a 2.8x write throughput improvement shrinks checkpoint overhead

Because Rapid Bucket integrates through fsspec (which PyTorch Lightning already uses), there's no migration work. Bucket-type auto-detection handles it transparently.

Benchmarks in the blog: developers.googleblog.com/speeding-up-ai…
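To put the 2.8x write throughput figure in perspective, here is a minimal back-of-the-envelope sketch. It assumes checkpoint writes block training and that write time scales inversely with throughput. The checkpoint size, baseline throughput, and checkpoint interval are hypothetical values chosen for illustration; only the 2.8x factor comes from the post.

```python
# Rough estimate of how a write-throughput speedup changes checkpoint overhead.
# Assumes synchronous (blocking) checkpoint writes; all inputs except SPEEDUP
# are hypothetical.

SPEEDUP = 2.8  # write throughput improvement quoted in the post


def checkpoint_time_s(size_gb: float, throughput_gb_s: float) -> float:
    """Seconds needed to write one checkpoint at a given throughput."""
    return size_gb / throughput_gb_s


def overhead_fraction(ckpt_time_s: float, train_interval_s: float) -> float:
    """Share of wall-clock time spent writing checkpoints."""
    return ckpt_time_s / (train_interval_s + ckpt_time_s)


size_gb = 50.0            # hypothetical checkpoint size
baseline_gb_s = 1.0       # hypothetical baseline write throughput
train_interval_s = 600.0  # hypothetical training time between checkpoints

before = checkpoint_time_s(size_gb, baseline_gb_s)
after = checkpoint_time_s(size_gb, baseline_gb_s * SPEEDUP)
time_saved_fraction = 1.0 - after / before  # equals 1 - 1/2.8 for any size

print(f"write time: {before:.1f}s -> {after:.1f}s")
print(f"overhead:   {overhead_fraction(before, train_interval_s):.1%} -> "
      f"{overhead_fraction(after, train_interval_s):.1%}")
```

Whatever the checkpoint size, a 2.8x throughput gain cuts the time of each write by about 64% (1 - 1/2.8). How much that matters end to end depends on how often you checkpoint.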

Tech Week has a lot of panels.

Most don’t get you building.

We’re running a hackathon with real compute on the line.

One full day. Build and ship agents with us and our partner @validia_ai.

200+ builders showed up last time. We’re going bigger for NYC @Techweek_ this June.

Spots are limited. Register → go.lightning.ai/4n00Qw0

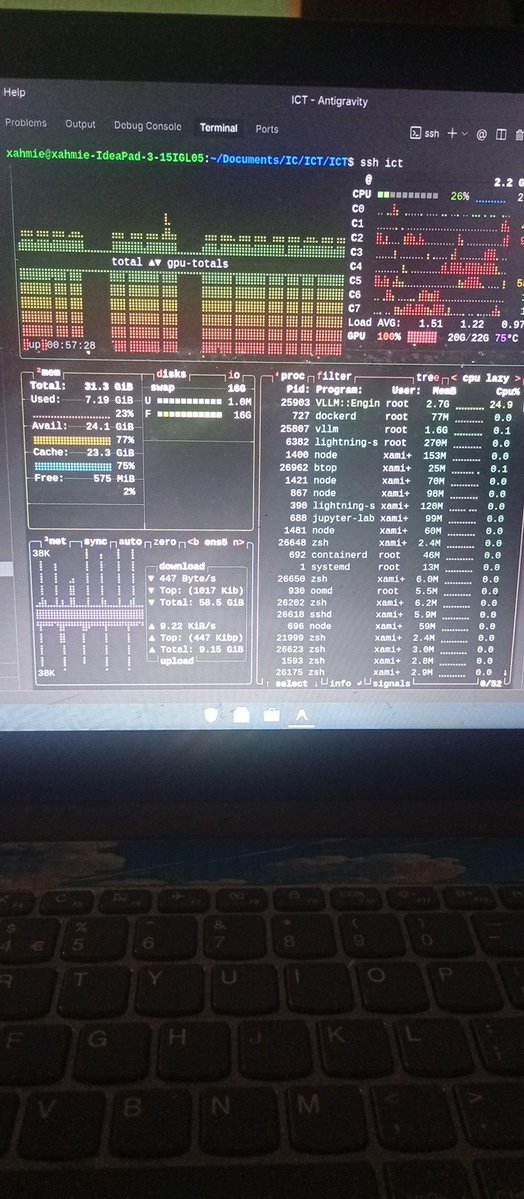

@AghaibieS Making compute easy to use is the goal. Glad it’s working for you 🙌

Compute at its finest... Huge thanks to @LightningAI for building this. Making compute easily accessible is very underrated.

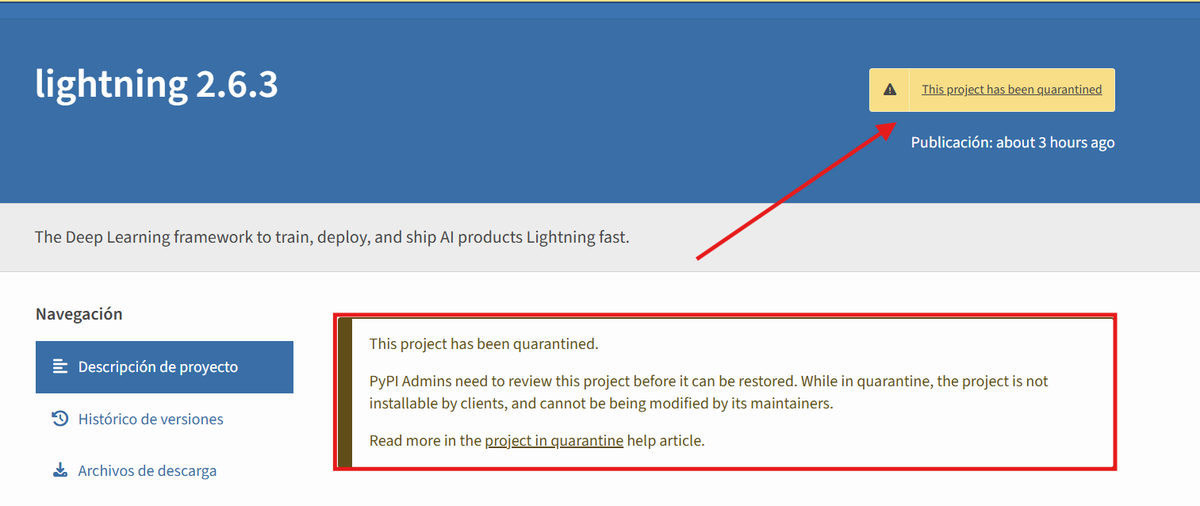

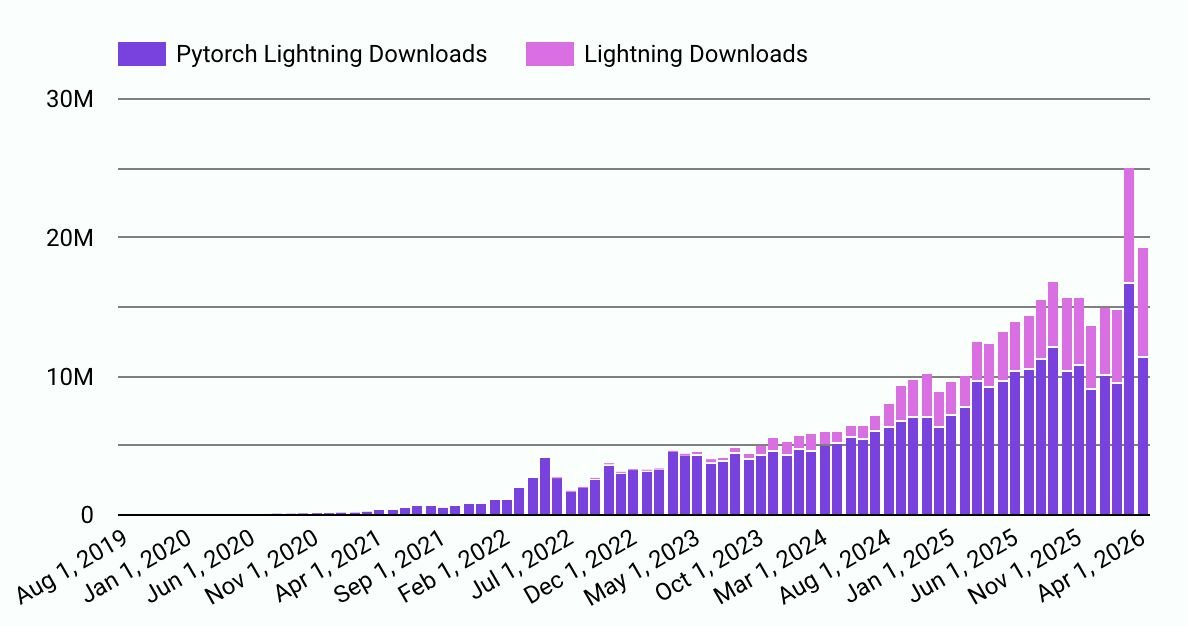

Yesterday the open source community spotted a supply chain attack on PyTorch Lightning and contained it in 42 minutes.

Community members flagged unusual behavior, reported it, and stopped it before it spread.

Compromised versions (2.6.2, 2.6.3) were live between 12:45–13:27 UTC. This affected distribution only. The GitHub repo was never touched.

Thanks to the community for the quick reports, to @pypi for quarantining the packages, and to @SocketSecurity for surfacing detailed analysis.

Read the full report → go.lightning.ai/4n5vGTO

Lightning AI ⚡️ reposted

Yesterday we saw a supply-chain attack on PyTorch Lightning (on PyPI, not our core repo). It's wild how it happened, but it was caught and quarantined within 42 minutes thanks to the open source community.

It's one reason why open source actually helps increase the security posture of projects. Thank you to @pypi and @SocketSecurity ⚡️

Summary: On April 30th, 2026, an attacker captured PyPI credentials and used them to push compromised versions of PyTorch Lightning (PTL).

These versions were live for 42 minutes before PTL community members alerted us and PyPI quarantined the package. The PyTorch Lightning GitHub source code repository was never compromised. This affected those who installed PTL via PyPI between 12:45:20 and 13:27:30 UTC on April 30th, 2026.

lightning.ai/blog/pytorch-l…
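A minimal sketch of how you might check your own exposure, using only the Python standard library. The version numbers and the UTC window come from the summary above. The distribution name `pytorch-lightning` and the helper names are assumptions for illustration, and this is not an official remediation script; follow the linked report for the actual steps.

```python
# Check an environment against the compromised versions and exposure window.
from datetime import datetime, timezone
from importlib import metadata

COMPROMISED_VERSIONS = {"2.6.2", "2.6.3"}
WINDOW_START = datetime(2026, 4, 30, 12, 45, 20, tzinfo=timezone.utc)
WINDOW_END = datetime(2026, 4, 30, 13, 27, 30, tzinfo=timezone.utc)


def is_compromised_version(version: str) -> bool:
    """True if the version string is one of the compromised releases."""
    return version.strip() in COMPROMISED_VERSIONS


def installed_in_window(installed_at: datetime) -> bool:
    """True if a timezone-aware install time falls inside the exposure window."""
    if installed_at.tzinfo is None:
        raise ValueError("installed_at must be timezone-aware")
    return WINDOW_START <= installed_at <= WINDOW_END


def installed_version(dist_name: str = "pytorch-lightning"):
    """Installed version of dist_name, or None if it is not installed.

    The default distribution name is an assumption; adjust it to match
    how the package was installed in your environment.
    """
    try:
        return metadata.version(dist_name)
    except metadata.PackageNotFoundError:
        return None


version = installed_version()
if version is None:
    print("pytorch-lightning is not installed in this environment")
elif is_compromised_version(version):
    print(f"Installed version {version} is a compromised release")
else:
    print(f"Installed version {version} is not one of the compromised releases")
```

The window itself spans 42 minutes and 10 seconds, which matches the "42 minutes" quoted in the posts.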

@javi_22_dev Thanks for flagging this. We’ve confirmed the issue and are working on a fix now. We’ll share an update shortly. If you’re on 2.6.2 or 2.6.3, please use 2.6.1 until we publish 2.6.4.

Full details and remediation steps: go.lightning.ai/4cRzaoc

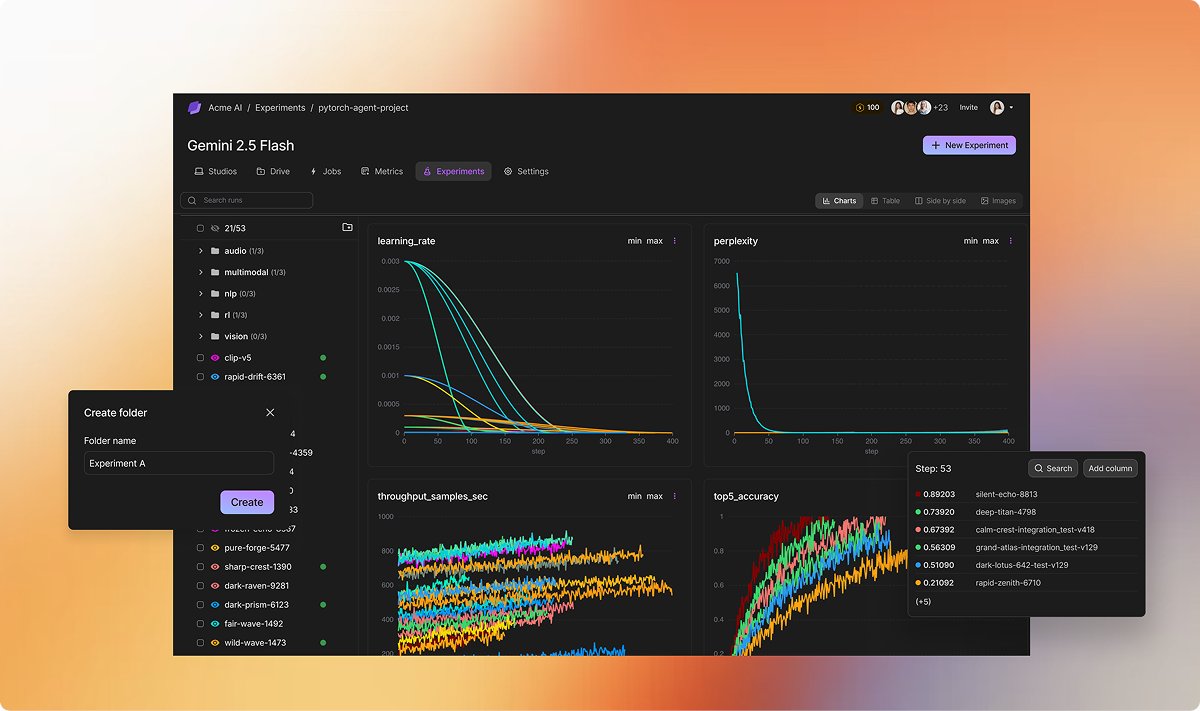

Most teams don’t run enough experiments.

Not because of ideas. Because iteration is slow.

Autoresearch changes that.

One GPU. Five-minute runs. An agent that keeps what works and drops what doesn’t.

100+ experiments overnight.

Run the template → go.lightning.ai/4vH1mCH
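The "keeps what works and drops what doesn't" loop can be sketched as a greedy accept/reject search. This is a toy illustration, not the template's code: `validation_loss` is a hypothetical stand-in for one short training run, and `propose` is a hypothetical stand-in for the agent suggesting a change.

```python
# Toy greedy experiment loop: try a change, keep it only if the metric improves.
import random


def validation_loss(config: dict) -> float:
    """Hypothetical stand-in for one short training run; lower is better."""
    return (config["lr"] - 0.003) ** 2 + 0.1 * abs(config["dropout"] - 0.1)


def propose(config: dict, rng: random.Random) -> dict:
    """Hypothetical stand-in for the agent: perturb one setting at random."""
    candidate = dict(config)
    key = rng.choice(sorted(candidate))
    candidate[key] *= rng.uniform(0.5, 1.5)
    return candidate


def search(start: dict, n_experiments: int, seed: int = 0):
    """Run n_experiments proposals, keeping only strict improvements."""
    rng = random.Random(seed)
    best, best_loss = dict(start), validation_loss(start)
    history = []
    for _ in range(n_experiments):
        candidate = propose(best, rng)
        loss = validation_loss(candidate)
        kept = loss < best_loss
        if kept:
            best, best_loss = candidate, loss
        history.append((candidate, loss, kept))
    return best, best_loss, history


start = {"lr": 0.03, "dropout": 0.5}
best, best_loss, history = search(start, n_experiments=100)
kept_count = sum(1 for _, _, kept in history if kept)
print(f"kept {kept_count} of {len(history)} experiments, best loss {best_loss:.6f}")

# Budget check: 100 sequential five-minute runs take about 8.3 hours.
hours = 100 * 5 / 60
```

At five minutes per run on one GPU, 100 sequential experiments take roughly 8.3 hours, which is consistent with "overnight".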

Thanks to our community for reporting a security issue in PyTorch Lightning 2.6.2 and 2.6.3.

This is open source working: someone spotted it, reported it responsibly, and we're shipping a fix. If you're on those versions, please use 2.6.1 until we publish 2.6.4 shortly. Our team is on it. Reach out if you have questions.

Full details and remediation steps here: go.lightning.ai/4cRzaoc

Lightning AI ⚡️ reposted

Experiment tracking is where workflows slow down.

Too many tools. Too many dashboards.

LitLogger is part of Lightning.

Metrics, runs, and experiment management all in one place.

No context switching.

Start tracking → go.lightning.ai/41AsmGn

Everyone wants to build multimodal agents but most get stuck stitching together separate vision, audio, and text models.

Run @nvidia Nemotron 3 Nano Omni on Lightning. See, hear, and reason in a single workflow.

One model instead of many. Higher throughput. Open weights, lightweight enough to run continuously.

Deploy now and get 30M free tokens monthly → go.lightning.ai/4w2W9FQ

@isaaccorley_ TorchMetrics compatibility is big. Turns intrinsic dimension into something you can track during training, not just analyze after.

also implemented a @LightningAI torchmetrics compatible metric for tracking intrinsic dimension during training/eval

tired of waiting on scikit-dim for intrinsic dimension analysis? torchid is a pure pytorch rewrite with up to 980x speedup on gpu

isaac.earth/torchid

200+ builders. One day. Agents shipped.

Our Personalized Agents Hackathon with @validia_ai is back for NYC @Techweek_

Build a personalized agent in a day with @openclaw in Lightning Studios.

GPU-backed. No setup.

Register → go.lightning.ai/4n00Qw0

Your system needs to be fast enough to be usable.

Lightning ranks #1 on @nvidia Nemotron 3 Super in the @ArtificialAnlys benchmark. Fastest output. Lowest latency.

Run it → go.lightning.ai/4mPLOZm

@_avichawla @UnslothAI Nice. This is exactly why we ship templates like this. Makes it easy to go from idea → working system fast.

Tech Stack:

- @UnslothAI to run and fine-tune the model

- @LightningAI environments for hosting and deployment

Find the code and environment setup here: lightning.ai/lightning-purc…

Fine-tune DeepSeek-OCR on your own language!

(100% local)

Most vision models treat documents as massive sequences of tokens, making long-context processing expensive and slow.

DeepSeek-OCR uses context optical compression to convert 2D layouts into vision tokens, enabling efficient processing of complex documents.

It is a 3B-parameter vision model that achieves 97% precision while using 10x fewer vision tokens than text-based LLMs.

In fact, you can easily fine-tune it for your specific use case on a single GPU.

I used Unsloth to run this experiment on Persian text and saw an 88.26% improvement in character error rate.

↳ Base model: 149% character error rate (CER)

↳ Fine-tuned model: 60% CER (57% more accurate)

↳ Training time: 60 steps on a single GPU
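For readers unfamiliar with the metric, here is a minimal sketch of character error rate (CER) computed as Levenshtein edit distance divided by reference length. It is a generic definition, not necessarily the exact evaluation code used in this experiment. It also shows why CER can exceed 100%: inserted characters count as errors, so a long, wrong output can contain more errors than the reference has characters.

```python
# Character error rate via Levenshtein edit distance (generic definition).


def edit_distance(ref: str, hyp: str) -> int:
    """Minimum number of insertions, deletions, and substitutions."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def cer(ref: str, hyp: str) -> float:
    """Edit distance normalized by the reference length."""
    if not ref:
        raise ValueError("reference must be non-empty")
    return edit_distance(ref, hyp) / len(ref)


def relative_reduction(before: float, after: float) -> float:
    """Fraction by which the error rate dropped, relative to the baseline."""
    return (before - after) / before


# A hypothesis much longer than the reference pushes CER above 100%.
print(cer("ab", "xxxxx"))  # 5 edits over 2 reference characters

# Using the rounded figures from the post (149% and 60% CER):
absolute_points = 149 - 60
relative = relative_reduction(1.49, 0.60)
print(absolute_points, round(relative, 3))
```

With the rounded figures, the drop is 89 percentage points in absolute terms and about 60% in relative terms. The 88.26% and 57% quoted in the post presumably rest on unrounded values or a different definition, so treat these as approximate.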

Persian was just the test case. You can swap in your own dataset for any language, document type, or specific domain you're working with.

I've shared the complete guide in the next tweet, which includes the code, notebooks, and environment setup ready to run with a single click.

Everything is 100% open-source!

@invisprints GLM 5 and Kimi 2.5 are live today.

GLM 5.1 and Kimi 2.6 aren’t available yet, but we’re adding new models often → lightning.ai/models

Most models break as context grows.

DeepSeek AI V4 Pro doesn’t.

✅ 1M token context

✅ Mixture-of-Experts routing

✅ Stronger tool use across long workflows

Built for agents that don’t reset every few steps.

Now available for inference on Lightning. Day zero access.

Run it → go.lightning.ai/4tvhiqh

@_Suresh2 True for most tools. That’s why it’s not a separate tool here: tracking is part of the runtime.

@LightningAI the no setup part is where tracking tools usually start to fall apart

Experiment tracking shouldn’t mean stitching together tools.

LitLogger is built into Lightning.

Log metrics, compare runs, and manage experiments in one place.

No setup. No jumping between dashboards.

Start tracking → go.lightning.ai/41AsmGn
