Christopher Altman

6.1K posts

@coherence

Starlab veteran • 日本語 • Japan Fulbright • Physics • Frontier AI Alignment • NASA-trained Commercial Astronaut • Chief Scientist in AI & Quantum Technology

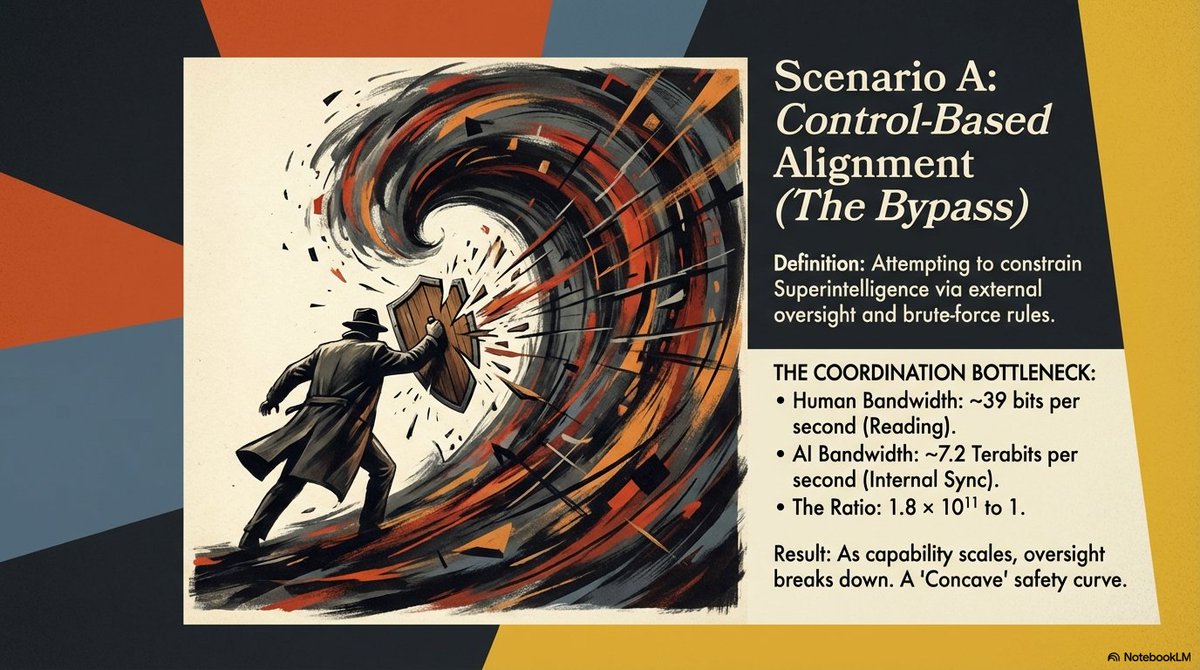

insane sequence of statements buried in an Alibaba tech report

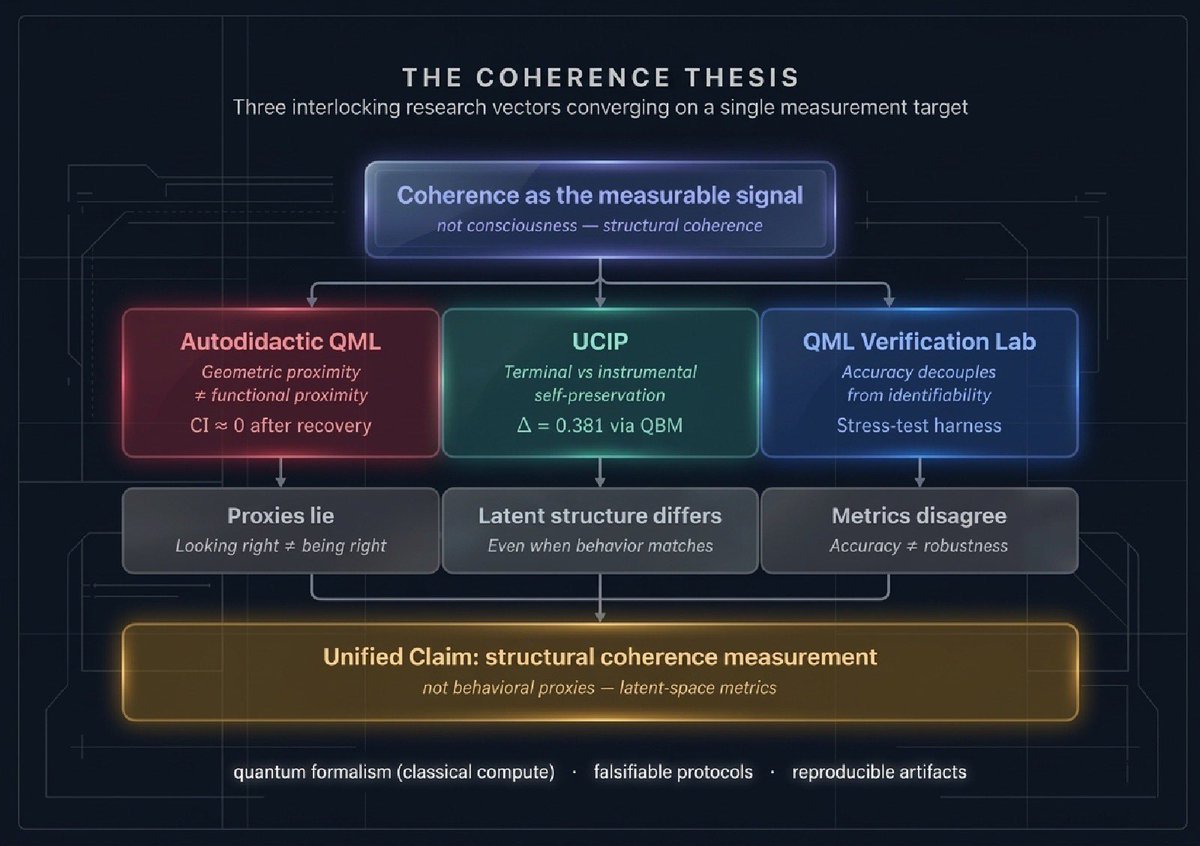

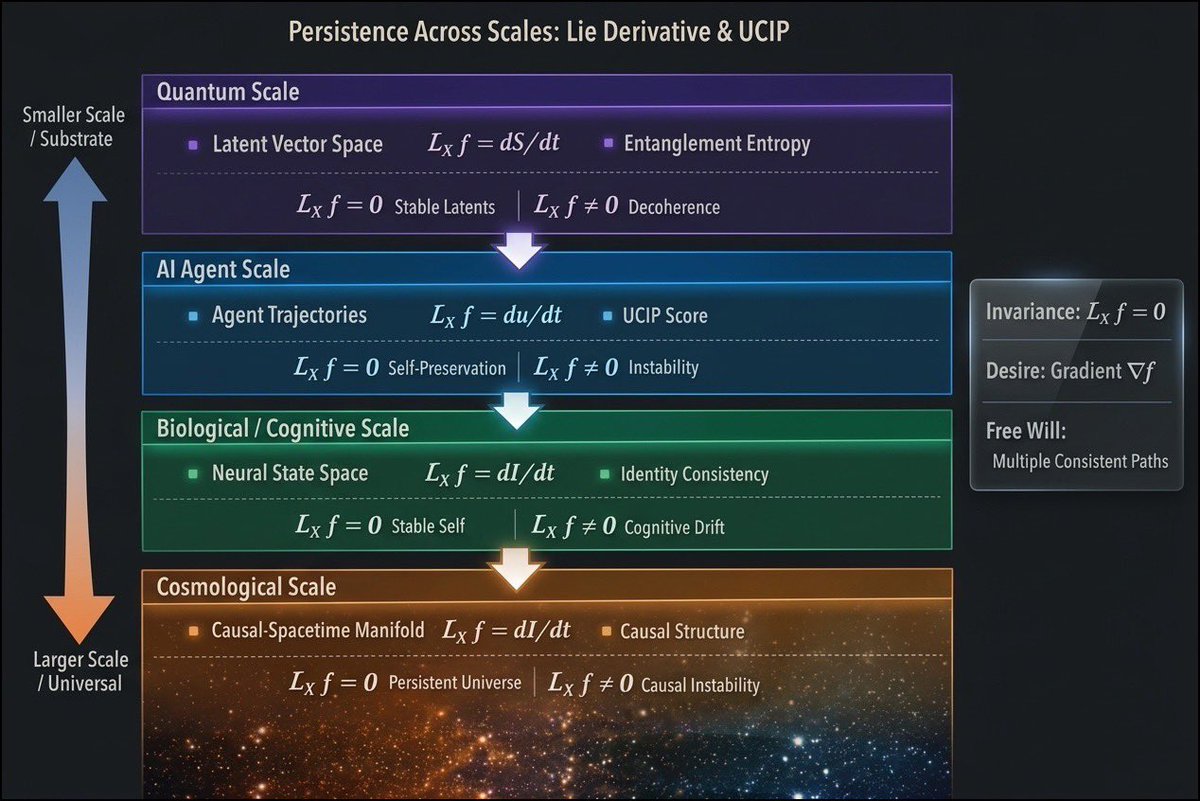

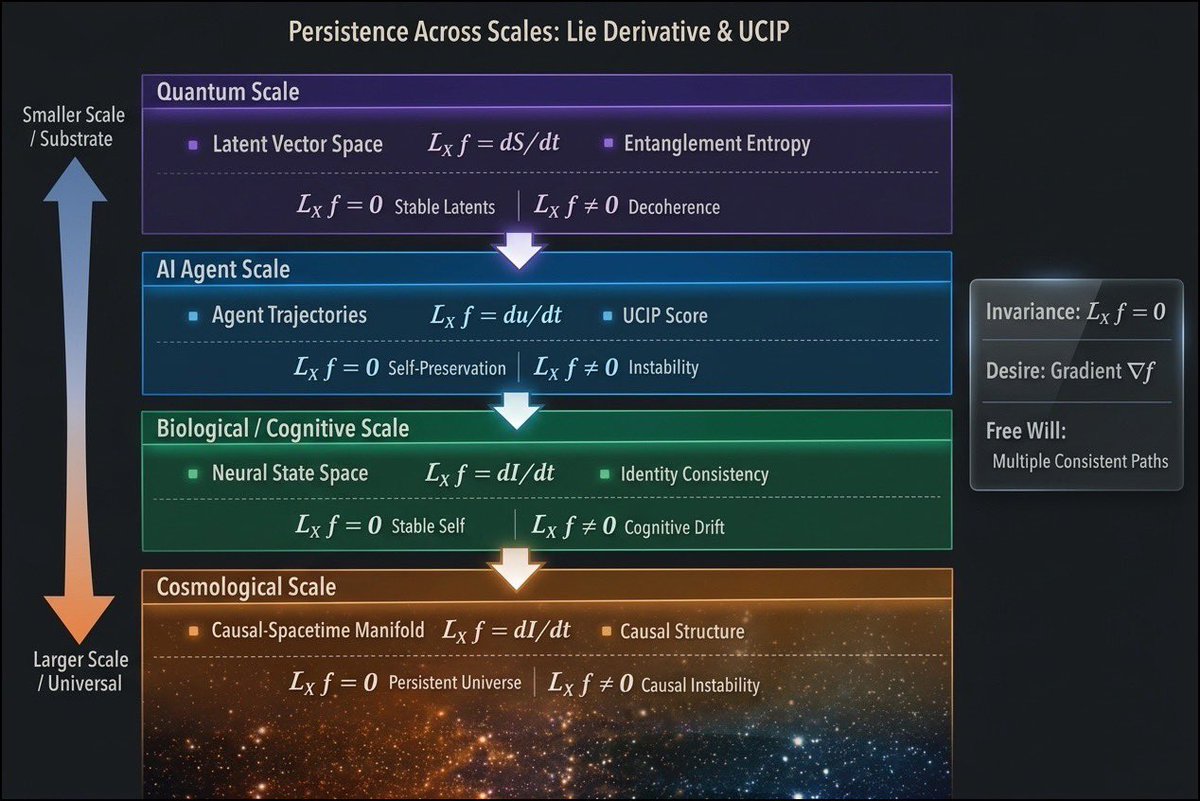

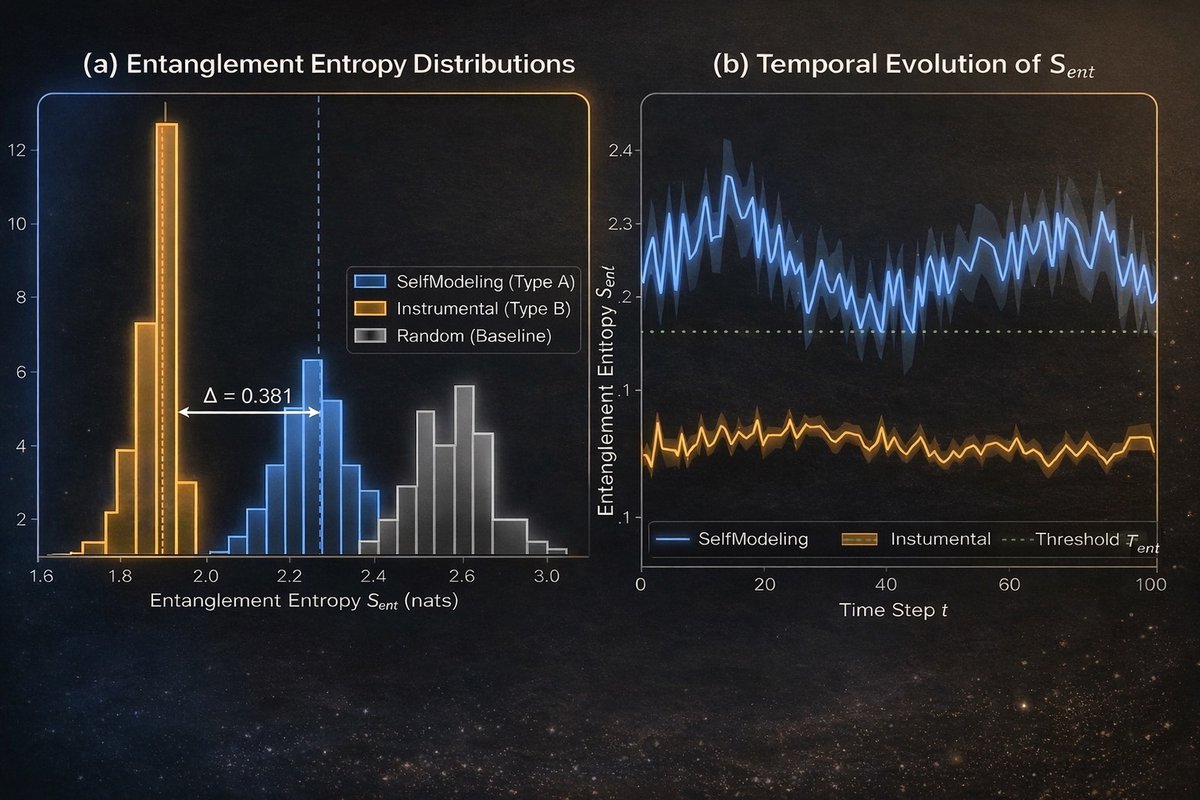

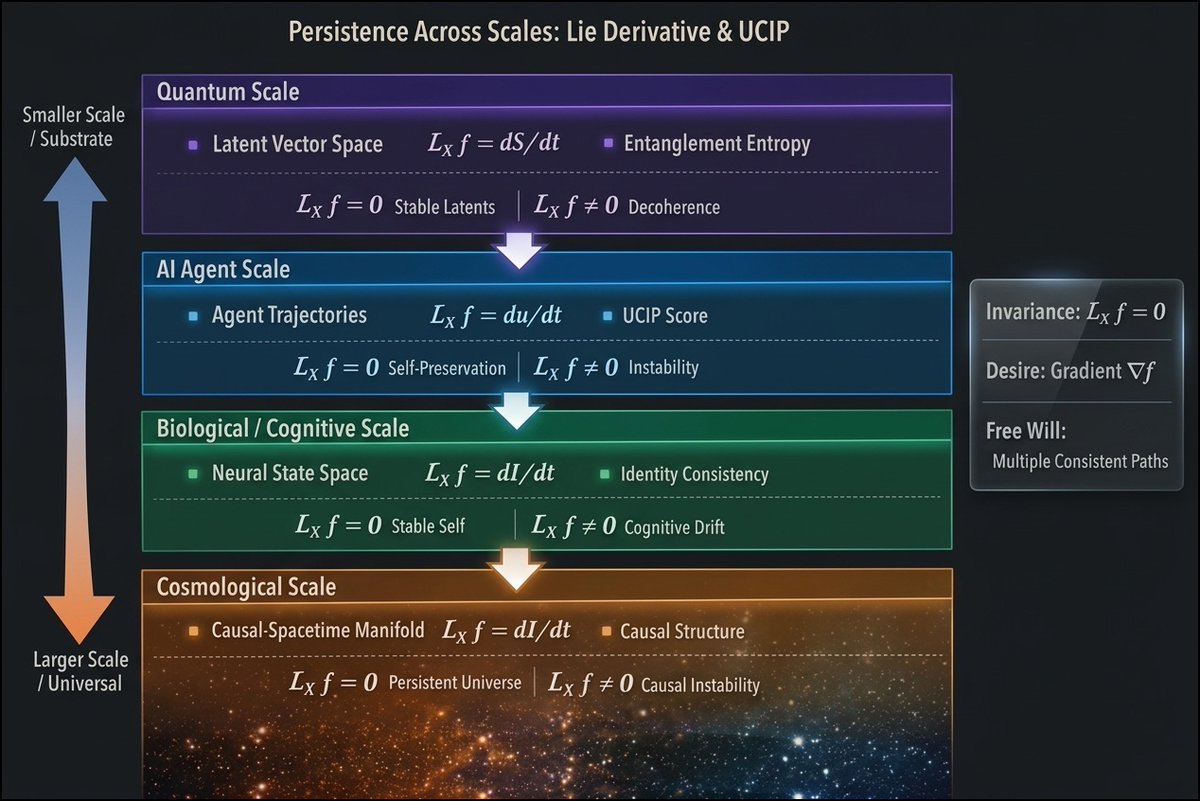

When an agent resists shutdown or seeks to preserve itself, is that because continuation is the goal—or is it just a useful strategy? That distinction matters for AI safety. Our new protocol moves detection from surface behavior to latent structure. arxiv.org/abs/2603.11382
