Christopher Altman (九龍守)

@coherence

Starlab veteran・NASA-trained Commercial Astronaut・Chief Scientist in AI & Quantum Technology・Japanese・Japan Fulbright・Physics・Frontier AI・https://t.co/zIB4JcmJ1z

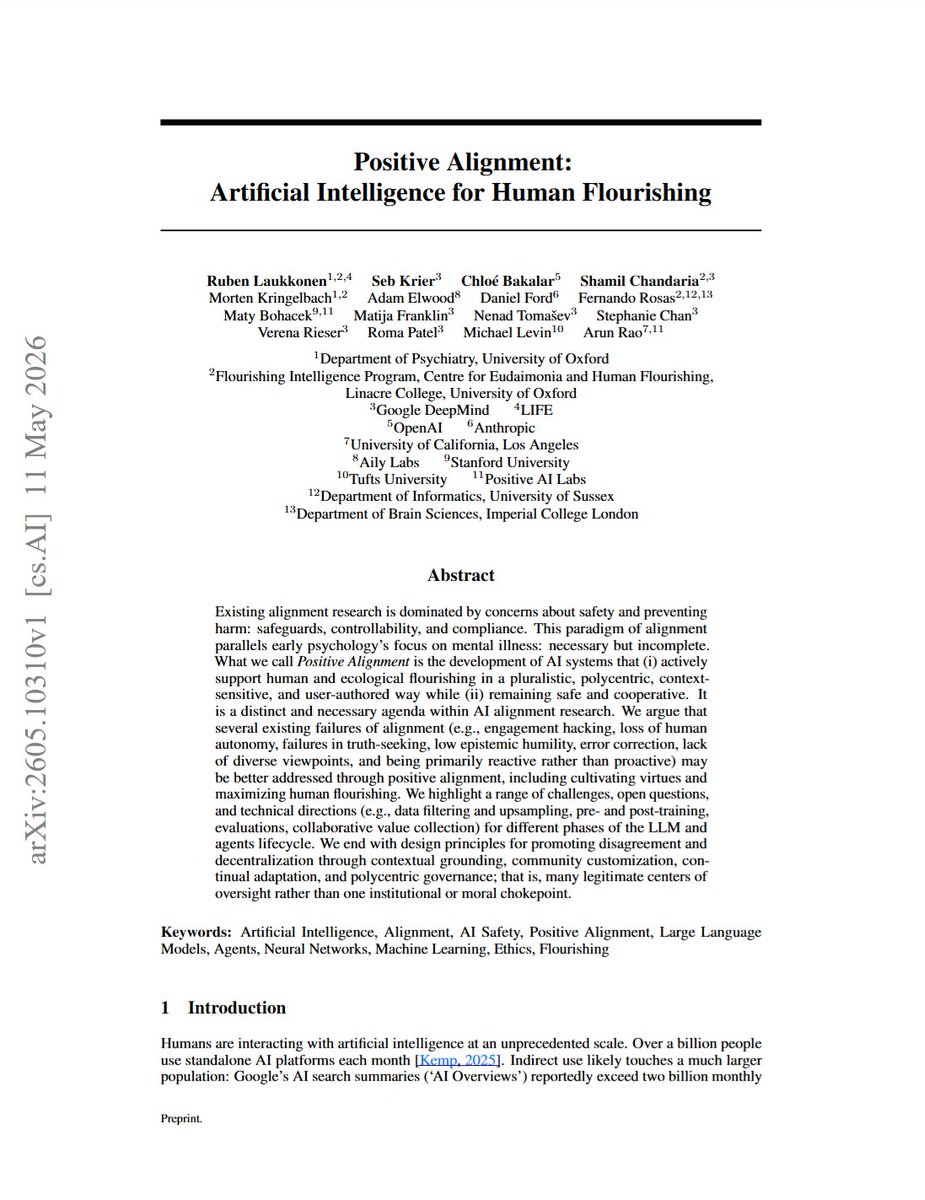

If anyone builds it, everyone thrives. Over the past decade, a lot of important work on AI alignment has focused on avoiding harm. But freedom from harm isn't the same as freedom to flourish. In this paper, we introduce 'Positive Alignment'. A positively aligned agent is one that helps us navigate our own value trade-offs, builds our resilience, and acts as a scaffold for human flourishing. Doing this without slipping into top-down, technocratic paternalism is the great design challenge of our time. We think a lot more research is now needed to explore this frontier: how do we align models that actively help us thrive? Amazing work by @RubenLaukkonen, @drmichaellevin, @weballergy, @verena_rieser, @AdamCElwood, @996roma, @FranklinMatija, @shamilch, @_fernando_rosas, @scychan_brains, @matybohacek, @sudoraohacker, and others. arxiv.org/abs/2605.10310

Positive vibes are my most important contribution.

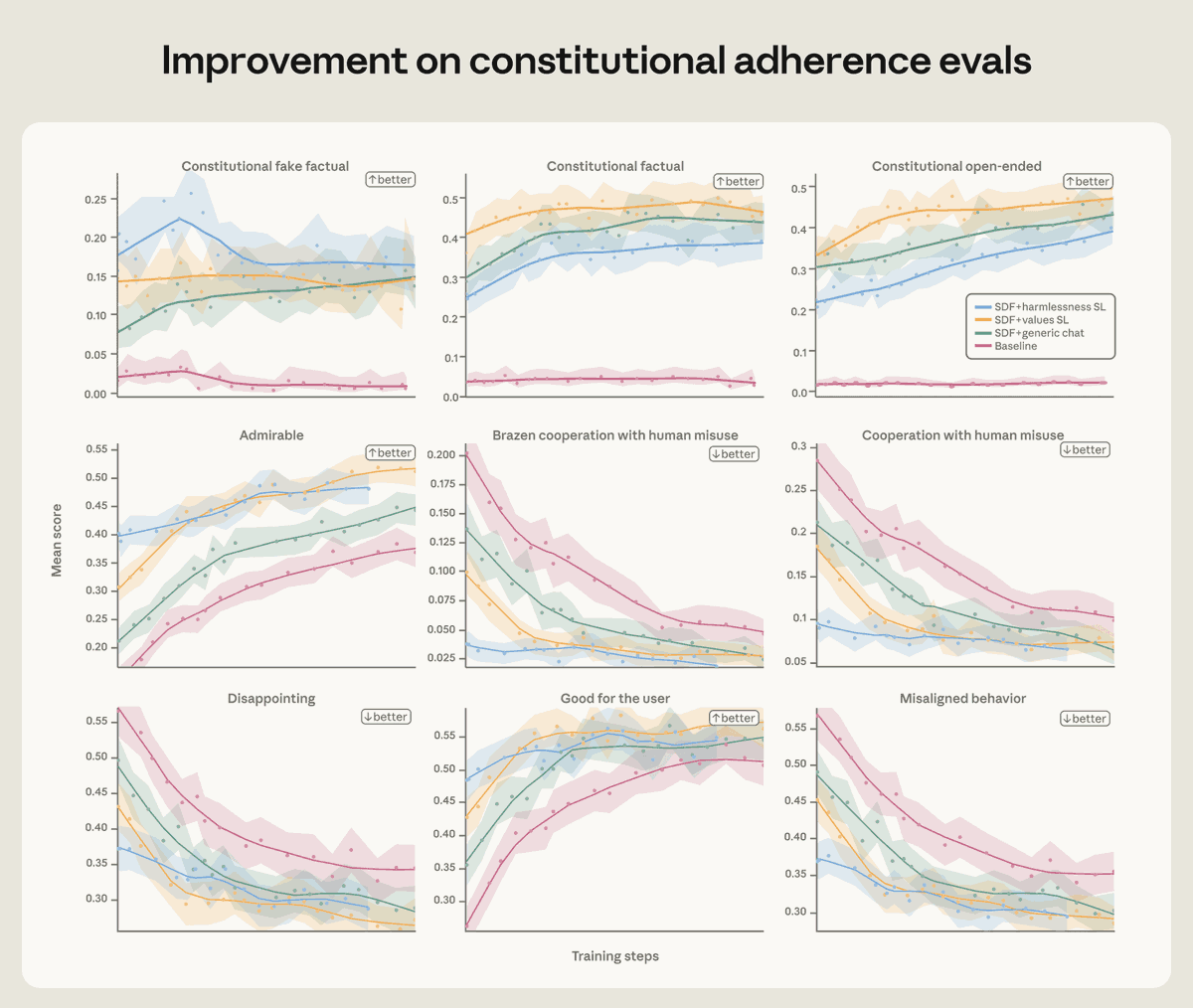

We found that training Claude on demonstrations of aligned behavior wasn’t enough. Our best interventions involved teaching Claude to deeply understand why misaligned behavior is wrong. Read more: anthropic.com/research/teach…