QuantumCTO

1.7K posts

@dannywall92

CTO of OA Quantum Labs and avid currency/crypto trader.

Albuquerque, NM. Joined February 2025

48 Following, 162 Followers

@trikcode What? So if a team writes some software at a company. They quit and the company hires other devs, you telling me those devs can’t debug it because they didn’t write it? What an absurd claim.

@PeterDiamandis AI can’t do or solve anything. It requires a human to prompt it. The more skilled the human is in the task being prompted, the better the AI output will almost always be.

If AI can now solve math, discover physics and chemistry breakthroughs faster than human PhDs, why are we still training humans to be physicists? Serious question. Should education shift from 'learn to do X' to 'learn to direct AI doing X'? The wrong direction costs a generation their careers.

@NickSpisak_ Yeah, only Llama 3.1, only 8B params, and so heavily quantized that it is extremely error-prone. An SoC isn’t a good domain for an LLM. It’s the domain for small, focused, special-purpose AI (barely even a language model).

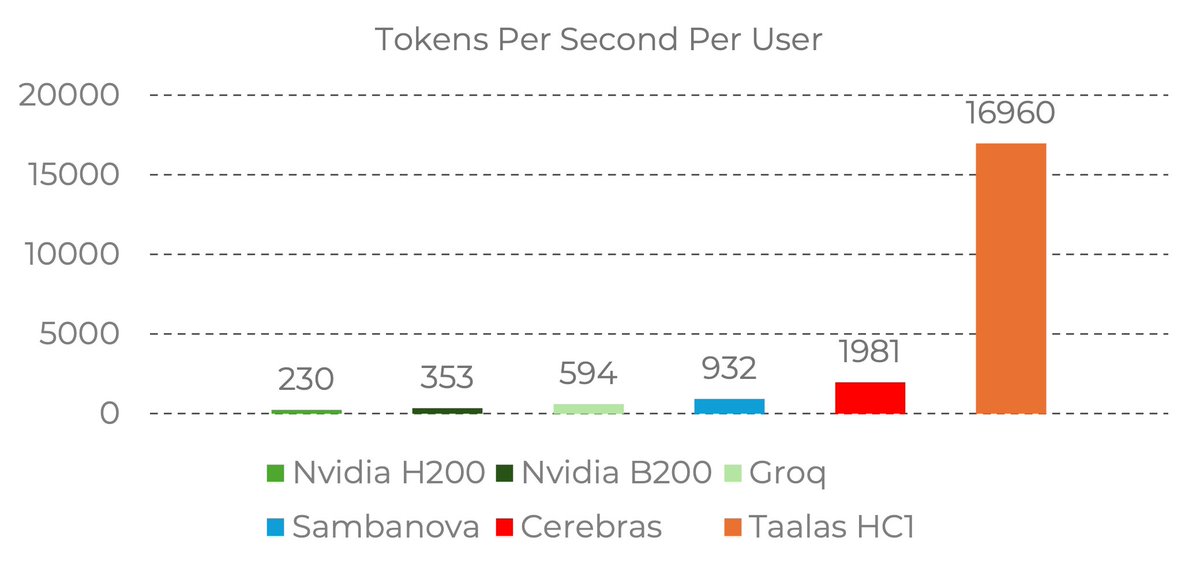

Taalas just came out of stealth and the approach is wild - they hardwire AI models directly into silicon. No memory. No data shuttling. The model IS the chip.

Their first chip runs Llama 3.1 8B at 17,000 tokens/sec per user. For context that's 28x faster than Groq.

But the chip isn't the story. The process behind it is.

They built a reusable base chip where only 2 mask layers change per model. Meaning... new model to working silicon in 8 weeks. Not years. Weeks.

Here's the breakdown:

17,000 tokens/sec per user

$0.0075 per 1M tokens (13x cheaper than Cerebras)

200W per card, standard air cooling

25 employees, $30M spent of $219M raised

The honest trade-off - v1 uses aggressive 3-6 bit quantization so quality takes a hit. Great for data tagging, classification, voice agents. Not frontier reasoning yet.

The real bet here is that AI training changes fast but inference wants stability. Same model, millions of users, relentless cost reduction. If production models stabilize on ~1 year cycles, an 8-week model-to-silicon pipeline changes the entire economics of running AI.
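A quick sanity check on the figures quoted above, as a minimal sketch: it takes the post's numbers (17,000 tokens/sec, $0.0075 per 1M tokens, "28x faster than Groq", "13x cheaper than Cerebras") at face value and backs out the baselines those ratios imply. The function names and the 1,000-token response size are illustrative assumptions, not anything published by Taalas, Groq, or Cerebras.

```python
# Figures as quoted in the post; taken at face value, not independently verified.
TAALAS_TOKENS_PER_SEC = 17_000
TAALAS_USD_PER_M_TOKENS = 0.0075
CLAIMED_SPEEDUP_VS_GROQ = 28
CLAIMED_COST_RATIO_VS_CEREBRAS = 13


def implied_baseline_throughput(tokens_per_sec: float, speedup: float) -> float:
    """Throughput the slower system would need for the claimed speedup to hold."""
    return tokens_per_sec / speedup


def implied_baseline_price(usd_per_m_tokens: float, cost_ratio: float) -> float:
    """Price per 1M tokens the pricier system would need for the claimed ratio to hold."""
    return usd_per_m_tokens * cost_ratio


def seconds_to_generate(n_tokens: int, tokens_per_sec: float) -> float:
    """Wall-clock time to stream n_tokens at a constant per-user rate."""
    return n_tokens / tokens_per_sec


if __name__ == "__main__":
    groq = implied_baseline_throughput(TAALAS_TOKENS_PER_SEC, CLAIMED_SPEEDUP_VS_GROQ)
    cerebras = implied_baseline_price(TAALAS_USD_PER_M_TOKENS, CLAIMED_COST_RATIO_VS_CEREBRAS)
    # 1,000 tokens is a hypothetical response length chosen for illustration.
    latency = seconds_to_generate(1_000, TAALAS_TOKENS_PER_SEC)
    print(f"Implied Groq throughput: {groq:.0f} tokens/sec per user")
    print(f"Implied Cerebras price: ${cerebras:.4f} per 1M tokens")
    print(f"Time for a 1,000-token response: {latency * 1000:.0f} ms")
```

The implied baselines are only as good as the ratios in the post; they are not measured values for either competitor.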

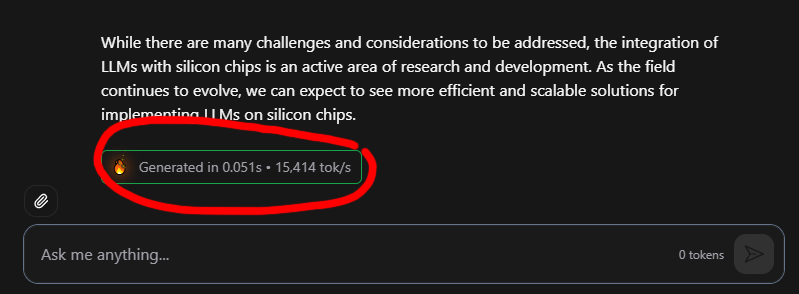

Try it yourself at chatjimmy.ai - can't wait until they bake in a MiniMax, Kimi, etc

Taalas Inc. @taalas_inc

24 dedicated people. $30M spent on development. Extreme specialization, speed, and power efficiency. Today we launch Taalas’ first product. Check it out:

Details: taalas.com/the-path-to-ub…

Demo chatbot: chatjimmy.ai

API: taalas.com/api-request-fo…

@wildmindai This field moves WAY too fast to be baking a model directly into an SoC. You barely get the thing done and manufacturing rolling before that model is out of date. This one uses Llama 3.1 (old), with aggressive quantization on top of that, which makes it highly error-prone.

17,000 tokens per second!! Read that again!

LLM is hard-wired directly into silicon. no HBM, no liquid cooling, just raw specialized hardware. 10x faster and 20x cheaper than a B200.

the "waiting for the LLM to think" era is dead. Code generates at the speed of human thought.

Transition from brute-force GPU clusters to actual AI appliances.

taalas.com/the-path-to-ub…

@VraserX Because they shipped first… repeatedly. So now when they say, “we’re going to…” people believe them. I’m not saying they always SHOULD, because Sam says crazy stupid stuff sometimes. Promising to spend 1.2 trillion on 100 billion in projected revenue chief among them.

@andrewdfeldman @travelingflying They weren’t thinking, and haven’t been. The EU often jails people longer for protesting this nonsense than they do actual criminals. I can’t even imagine why anyone with assets would still live anywhere in Europe.

@travelingflying Can this be real? A 10-year-old. She was a child. What on earth could the court be thinking?

@HealthRanger 🙄 it’s you not knowing what you’re talking about. AI isn’t a prediction machine, it’s an inference engine backed by a neural network. So it can draw comparisons to what it has seen and because of GitHub it has seen A LOT.

AI denialists are sure sounding a lot like Flat Earthers right now. "AI isn't intelligent." "It's a prediction machine."

Yet I can give an AI engine 100,000 lines of code and ask it to tell me what that code does. In plain English, it describes all the functionality of the code that it has NEVER seen before.

That's not prediction. That's intelligence.

@SeloSlav And they think they’re going to create commercial quantum computers at the same time they chase away the people most likely to make that happen? The EU is cooked.

Wealth taxes are the only way we don’t go extinct. I hope Europe keeps moving in this direction. When the US collapses in on itself, Europe will still be standing.

It only feels paradoxical today. When you zoom out, the trajectory looks inevitable. I go deeper in my working paper on the epistemology of work and path dependence. Read below:

papers.ssrn.com/sol3/papers.cf…

The Kobeissi Letter @KobeissiLetter

BREAKING: Netherlands’ House of Representatives has approved a 36% tax on unrealized capital gains.

@mrmikeMTL What I can tell you is that when you think you are … you aren’t.

@ivanburazin How did “at scale” really change the meaning of your last sentence? I’m quickly coming to the conclusion that “at scale” is probably the most abused phrase in existence. We need to stop using it ALL THE TIME.

If you fail to understand the hype around the sandbox infra race, just look up the use cases they serve:

1/ Agents need environments to write code, preview it, and run it

~Lovable, Bolt, Replit, etc., spin up sandboxes for this and need hundreds of thousands of them for all their users

2/ Agents need full computer access to run browsers and execute tasks

~If an agent needs to do QA testing on a website, it needs a computer to run the browser

3/ For training and improving AI models through post-training

~Companies need to spin up 100k-200k environments simultaneously in about 3 minutes for RL training

When you need massive concurrency at scale, you can't just take this capability "off the shelf"
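To put the RL-training figure in perspective, here is a minimal sketch of the sustained provisioning rate it implies. It assumes "about 3 minutes" means a flat 180-second window and that environments are started at a constant rate. Both are simplifying assumptions, and `required_spinup_rate` is a hypothetical helper, not any vendor's API.

```python
def required_spinup_rate(n_environments: int, window_seconds: float) -> float:
    """Average environments per second needed to start n_environments within the window."""
    if window_seconds <= 0:
        raise ValueError("window_seconds must be positive")
    return n_environments / window_seconds


if __name__ == "__main__":
    WINDOW_SECONDS = 3 * 60  # "about 3 minutes", treated here as exactly 180 s
    for n in (100_000, 200_000):  # range quoted in the post
        rate = required_spinup_rate(n, WINDOW_SECONDS)
        print(f"{n:,} environments in {WINDOW_SECONDS} s -> {rate:,.0f} starts/sec")
```

Under those assumptions the range works out to several hundred to over a thousand sandbox starts every second, sustained for the full window.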

@JadeCole2112 Tell me you don’t understand how software is sold without telling me 🙄

I often wonder why Anthropic doesn't just vibe-code all the top SaaS apps and dominate the whole market, instead of just renting out a coding agent.

Jeff Bohren @JeffBohren

One thing I noticed is that none of the "SaaS is dead" posters ever seem to identify which SaaS product is dead. I really find that amusing. There are so many SaaS products out there. If SaaS were truly dead, it would be trivial to name one or two that you think are now DOA.

Cerebras Systems today announced the closing of a $1 billion Series H financing at a post-money valuation of approximately $23 billion. The round was led by Tiger Global, with participation from Benchmark, Fidelity Management & Research Company, Atreides Management, Alpha Wave Global, Altimeter, AMD, Coatue, and 1789 Capital, among others.

@thdxr A guy killed himself trying to beat a boring machine through a mountain once. You sound like that guy.

@kylegawley Anything is brutally hard when you don’t know what you’re doing and gets easier when you do.
