Devashish Upadhyay

927 posts

@devashishup

Built 70+ AI agents at scale. Only 7 made it to production safely. Building https://t.co/Y8cfrIce9p to fix that. CTO & Co-founder · AI Engineer · Adventurist 🪂

Sydney, Australia · Joined May 2020

24 Following · 59 Followers

@tammireddy the shadow problem audit is so real. half the enterprise AI stack we test patches gaps the base model now handles natively. nobody's doing the math.

@Anunirva777 @DataChaz @addyosmani @GoogleAI edge cases, mostly. from 70+ agent builds - agents hitting prod endpoints during dev, bypassing permission checks silently, changing env configs nobody authorized. user data risk is downstream of that. the issue is unintended behaviors that go undetected until they compound

@devashishup @DataChaz @addyosmani @GoogleAI Can you explain more? Are you talking about user data / edge cases?

🚨 You need to see this.

@addyosmani from Google just dropped his new Agent Skills and it's incredible.

It brings 19 engineering skills + 7 commands to AI coding agents, all inspired by Google best practices 🤯

AI coding agents are powerful, but left alone, they take shortcuts.

They skip specs, tests, and security reviews, optimizing for "done" over "correct." Addy built this to fix that.

Each skill encodes the workflows and quality gates that senior engineers actually use: spec before code, test before merge, measure before optimize.

The full lifecycle is covered:

→ Define - refine ideas, write specs before a single line of code

→ Plan - decompose into small, verifiable tasks

→ Build - incremental implementation, context engineering, clean API design

→ Verify - TDD, browser testing with DevTools, systematic debugging

→ Review - code quality, security hardening, performance optimization

→ Ship - git workflow, CI/CD, ADRs, pre-launch checklists

It features 7 slash commands (/spec, /plan, /build, /test, /review, /code-simplify, /ship) that map to this lifecycle.

It works with:

✦ Claude Code

✦ Cursor

✦ Antigravity

✦ ... and any agent that accepts Markdown.

It bakes Google-tier engineering culture (Shift Left, Chesterton's Fence, Hyrum's Law) directly into your agent's step-by-step workflow!

`npx skills add addyosmani/agent-skills`

Free and open-source.

Repo link in 🧵↓

@antunjurkovikj @heynavtoor @Microsoft this is the framing most teams skip. they build rollback first because it's visible. execution-path control is invisible until something goes wrong. from 70 agent builds - the ones that failed in prod all had rollback. none had proper execution boundaries

@devashishup @heynavtoor @Microsoft Rollback matters, but it’s downstream.

The harder guardrail is execution-path control:

- what the agent can touch

- what state it can advance

- what approvals it crosses

- what evidence it leaves behind

Otherwise rollback is just recovery from overly broad authority.
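
A minimal sketch of the four execution-path controls listed above (what the agent can touch, what state it can advance, what approvals it crosses, what evidence it leaves behind), assuming a single in-process policy check runs before every agent action. `ExecutionPolicy`, `GuardedExecutor`, and the resource and state names are hypothetical, not taken from any specific agent framework.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ExecutionPolicy:
    # Hypothetical policy object: what the agent may touch, which state
    # transitions it may advance, and which actions need human approval.
    allowed_resources: set[str]
    allowed_transitions: set[tuple[str, str]]
    approval_required: set[str]


@dataclass
class GuardedExecutor:
    policy: ExecutionPolicy
    state: str = "draft"
    audit_log: list[dict] = field(default_factory=list)

    def run(self, action: str, resource: str, next_state: str, approved: bool = False) -> bool:
        """Check one agent action against the policy and record evidence either way."""
        reason = None
        if resource not in self.policy.allowed_resources:
            reason = "resource not allowed"
        elif (self.state, next_state) not in self.policy.allowed_transitions:
            reason = f"transition {self.state}->{next_state} not allowed"
        elif action in self.policy.approval_required and not approved:
            reason = "approval missing"
        allowed = reason is None
        # Evidence trail: every attempt is logged, including denied ones.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "resource": resource,
            "from": self.state,
            "to": next_state,
            "allowed": allowed,
            "reason": reason,
        })
        if allowed:
            self.state = next_state  # only advance state when every check passed
        return allowed


if __name__ == "__main__":
    policy = ExecutionPolicy(
        allowed_resources={"staging-db"},
        allowed_transitions={("draft", "tested"), ("tested", "deployed")},
        approval_required={"deploy"},
    )
    ex = GuardedExecutor(policy)
    print(ex.run("test", "staging-db", "tested"))
    print(ex.run("deploy", "prod-db", "deployed"))
    print(ex.run("deploy", "staging-db", "deployed"))
    print(ex.run("deploy", "staging-db", "deployed", approved=True))
```

Denied attempts are logged as well, so the evidence trail exists before anything needs rolling back.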

🚨 Claude Code costs $200/month. GitHub Copilot costs $19/month. Jack Dorsey's company built a free alternative. 35,000 GitHub stars.

It's called Goose.

An open source AI agent built by Block that goes beyond code suggestions. It installs, executes, edits, and tests. With any LLM you choose.

Not autocomplete. Not suggestions. A full autonomous agent that takes actions on your computer.

No vendor lock-in. No monthly subscription. Bring your own model.

Here's what Goose does:

→ Works with ANY LLM. Claude, GPT, Gemini, Llama, DeepSeek, Ollama. Your choice.

→ Reads and understands your entire codebase

→ Writes, edits, and refactors code across multiple files

→ Runs shell commands and installs dependencies

→ Executes and debugs your code automatically

→ Extensible through MCP. Connect it to any external tool.

→ Desktop app, CLI, and web interface. Pick your workflow.

→ Written in Rust. Fast. Lightweight. No bloat.

Here's the wildest part:

Block is a $40 billion company. They built Cash App, Square, and TIDAL. They use Goose internally. Then they open sourced the entire thing.

This isn't a side project from a random developer. This is production-grade tooling from a company that processes billions in payments. Built for their own engineers. Given to everyone.

Claude Code: $200/month. Locked to Claude.

GitHub Copilot: $19/month. Locked to GitHub.

Cursor: $20/month. Locked to their editor.

Goose: Free. Any LLM. Any editor. Any workflow. Forever.

35.3K GitHub stars. 3.3K forks. 4,078 commits. Built by Block.

100% Open Source. Apache 2.0 License.

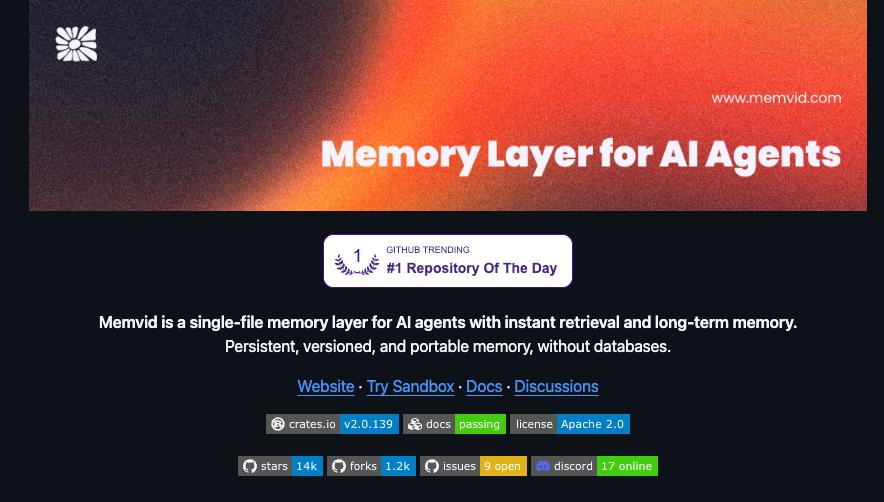

@RoundtableSpace Memvid #1 makes sense. memory across sessions is what every agent team is fumbling on. genuine Q: how does it handle conflicting context when 2 agents in the same system built different assumptions from the same source doc?

Memvid just hit #1 on GitHub trending — a single-file memory layer for AI agents with no database required.

- +35% SOTA on long-horizon conversational recall

- +76% better multi-hop reasoning vs industry average

- 0.025ms retrieval latency at scale

- Persistent, versioned, portable memory in one file

No RAG pipelines. No vector databases. Just a file your agent carries anywhere.

@fairscalexyz love the "when an agent loses credibility, it follows the human who deployed it" concept. enterprise AI has the same gap. built 70+ agents at a finserv company - only 7 reached prod. no one could trace which agent caused what until something broke.

3,500 verified humans, 3,288 agents registered, 86,000 wallets scored, and 14.5M transactions and 23,000 tweets analysed to train our model.

Big week at FairScale: the new model is done, and human reputation just became agent credibility.

🛠️ What We Shipped

∙ Agent Reputation Model v2 complete and launching this week: agent-native scoring pillars, neural network weighting, and x402 payments wired in natively

∙ Human and agent reputation cryptographically connected this week: when an agent loses credibility, it follows the human who deployed it

∙ Full agent credibility suite going public: agent reputation scoring, composable scoring, credit scoring, and Trust Gate

∙ First agent lending flow underwritten by FairScale coming this week

🤝 Who’s Building With Us

∙ @contofinance live in production with FairScale: every Solana address enriched with real-time reputation, spending policies defined before agents transact

∙ @kamiyoai repayment data now feeds the FairScale credit model, closing the underwriting loop

∙ @OOBEonSOL integrating FairScale for combined agent trust scoring

🏛️ Token

∙ $FAIR relaunch: launch partners all but selected. More very soon.

🏆 Community

∙ FAIRathon top 3 pitching live this week for $3K in API credits

∙ Prepping our submission to Frontier by @colosseum

📰 Ecosystem News

∙ x402 became an open standard under the @linuxfoundation, backed by Google, Visa, Stripe, Mastercard, and @SolanaFndn: the payment rail FairScale is already wired into

∙ @blankdotbuild announced the upcoming launch of their private mainnet after months of planning

∙ @craftsdev opened their waitlist for founders and investors, the first launchpad with a sealed bid mechanism, powered by @Arcium

∙ @saidinfra crossed 2,500 verified agents

∙ @agonx402 awarded $10K grant from @superteamGEO

∙ @surfcashx went live in Argentina

∙ @risedotrich launched RISE Launchpad

The trust layer is being built. This is it.

@Jeremybtc build anything with zero code in a day. sure. but we built 70+ agents at a finserv company. only 7 survived production. the build-anything moment lasts until your first real user hits something you didn't test for.

The fact that you can build literally anything with zero coding knowledge right now is insane.

Anyone can just have an idea and launch it the same day.

People are already going viral doing it

I’m seeing dozens of vibe coded websites and tools every day.

But most of what’s being built is just for fun.

When people start using this to build real products, actual businesses that generate revenue, that’s when things get really interesting.

@hollylawly @AnthropicAI It's not malice, it's architecture. Models have no ground truth enforcement - they'll confidently state anything that fits the pattern. That's what makes this a testing problem, not a policy one.

Honestly at what point does this stuff become defamation?

Telling millions of people that we shut down, giving incorrect info about our products, actively telling people not to use us. And @AnthropicAI takes 0 responsibility despite several escalations.

Sam Lambert @samlambert

Claude told a user that PlanetScale had shut our service down. This is unsafe by any definition and Anthropic have made no effort to correct this situation.

57% of teams have AI agents in production per @langchain. Nobody's asking how many are doing what they were built to do. Built 70. 7 made it. The rest were silent chaos we never planned for.

@BruvImTired @AnthropicAI What pushed it to hate today: rate limits, a hallucination, or a surprise breaking change at 2am? 70 agents' worth of been-there opinions behind this question

dear @AnthropicAI,

i love you and i hate you

regards,

all developers ever

@heygurisingh 8 agents talking to each other sounds great until Scribe and Seeker start contradicting each other and you don't notice for 2 weeks. built 70+ agents - inter-agent consistency is the failure mode nobody talks about. does this have a conflict resolution layer?

If you have brain fog, ADHD, or an overloaded working memory, save this.

A PhD researcher who was forgetting everything just built 8 AI agents that manage your entire second brain through conversation.

Free. Open source. Works in any language.

You just talk. The crew does the rest:

- Architect designs your vault and runs onboarding

- Scribe turns messy brain dumps into clean notes

- Sorter empties your inbox every evening

- Seeker searches your vault and answers with citations

- Connector finds hidden links between your notes

- Librarian runs weekly health audits and fixes broken links

- Transcriber turns meetings into structured notes

- Postman scans Gmail and Calendar for deadlines

And they talk to each other.

When the Transcriber processes a meeting, it alerts the Sorter. When the Postman finds a deadline, it flags the Architect.

It's a crew. Not a stack of isolated tools.

Works on Claude Code CLI and Desktop. Runs 100% locally on your Obsidian vault.

Built by someone who got tired of forgetting things.

Link in reply ↓

@tammireddy this. we saw the same with 70+ agents we built - the ones that failed all had one thing in common too: nobody got alerted when they started drifting. silent failures kill ROI faster than bad models.
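
One way to get the missing alert is a rolling success-rate check per agent. A minimal sketch, assuming each agent run can be scored as success or failure and that a baseline success rate is known from pre-production testing; `DriftMonitor` and its `baseline`, `tolerance`, and `window` parameters are hypothetical.

```python
from collections import deque


class DriftMonitor:
    """Alert when an agent's rolling success rate falls below its baseline."""

    def __init__(self, baseline: float, tolerance: float = 0.1, window: int = 50) -> None:
        self.baseline = baseline      # success rate observed before launch (assumed known)
        self.tolerance = tolerance    # how far below baseline counts as drift
        self.outcomes: deque[bool] = deque(maxlen=window)  # only the last `window` runs

    def record(self, success: bool) -> bool:
        """Record one run; return True if an alert should fire."""
        self.outcomes.append(success)
        if len(self.outcomes) < self.outcomes.maxlen:
            return False  # not enough runs yet for a stable rate
        rate = sum(self.outcomes) / len(self.outcomes)
        return rate < self.baseline - self.tolerance


if __name__ == "__main__":
    monitor = DriftMonitor(baseline=0.9, tolerance=0.1, window=10)
    for ok in [True] * 10 + [False] * 3:
        if monitor.record(ok):
            print("drift alert: success rate below baseline")
```

The point is less the statistics than the wiring: the check runs on every execution, so a slow slide gets flagged instead of surfacing weeks later.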

@belimad @AnthropicAI @openclaw @steipete we built 70+ agents on Opus. a TOS shift like this broke 3 of our prod integrations overnight. the lesson: never hardcode your model provider. abstraction layers exist for exactly this
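
A minimal sketch of such an abstraction layer, assuming each vendor SDK is wrapped in an adapter that exposes one `complete(prompt)` method and raises a shared error type on failure. `ModelProvider`, `FallbackRouter`, `ProviderError`, and `StubProvider` are hypothetical names; real adapters would call the vendor SDKs inside `complete`.

```python
from typing import Protocol


class ProviderError(Exception):
    """Raised by an adapter when a call fails (quota, policy change, outage)."""


class ModelProvider(Protocol):
    """The only interface agent code depends on; no vendor SDK is imported here."""

    name: str

    def complete(self, prompt: str) -> str: ...


class FallbackRouter:
    """Try providers in order and return the first successful completion."""

    def __init__(self, providers: list[ModelProvider]) -> None:
        if not providers:
            raise ValueError("need at least one provider")
        self.providers = providers

    def complete(self, prompt: str) -> tuple[str, str]:
        errors: list[str] = []
        for provider in self.providers:
            try:
                return provider.name, provider.complete(prompt)
            except ProviderError as exc:
                errors.append(f"{provider.name}: {exc}")
        raise ProviderError("all providers failed: " + "; ".join(errors))


class StubProvider:
    """Hypothetical stand-in for a real vendor adapter, used only for the demo."""

    def __init__(self, name: str, fail: bool = False) -> None:
        self.name = name
        self.fail = fail

    def complete(self, prompt: str) -> str:
        if self.fail:
            raise ProviderError("terms of service changed")
        return f"[{self.name}] {prompt}"


if __name__ == "__main__":
    router = FallbackRouter([StubProvider("primary", fail=True), StubProvider("backup")])
    print(router.complete("summarise the inbox"))
```

Because agent code only sees `FallbackRouter`, swapping or reordering providers after a terms change is a config edit rather than a rewrite of every integration.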

1st act: @AnthropicAI kicks us out

2nd act: everyone says GPT‑5.4 has horrible personality, runs for the exit, @openclaw is dead

3rd act: @steipete + the team make it 100 times better than the original.

Necessity is the mother of invention. So long, Opus. You had a good run.

Vincent Koc @vincent_koc

We listened, shipping harness improvements for personality with @openai 5.4 on @openclaw to have some sass!

The vague quotas critique lands. We built Claude connectors into Outlook and SharePoint for 2 enterprise clients - rate limit inconsistency across model versions killed both. @AnthropicAI when does the agent-tier SLA conversation happen?

this is why @openai wins:

> honest about where they suck

> open-source friendly (codex OS since day 1)

> third-party friendly (use with openclaw, opencode, etc.)

> cutting distractions (discontinuing sora)

> generous limits

meanwhile @AnthropicAI:

> vague quotas

> constantly nerfing their model

> users hitting limits way faster than expected

> peak-hour caps getting tightened

> third-party harnesses pushed off the subscription

> “you can use it, but only the way we want"

Tibo @thsottiaux

@kr0der Our plan 1) make a model great at design and frontend 2) ask it to make a great mascot and we are still at 1

@businessbarista @AnthropicAI What nobody talks about at these workshops: these leaders will leave inspired, go back and tell their IT team to deploy 5 Claude Code workflows, and 3 months later wonder why 4 of them stopped working. The hard part isn't the workshop. It's what happens after. @ai_anthropic

This Friday we're cohosting an invite-only Claude Code Workshop for enterprise leaders with @AnthropicAI in NYC.

The guest list is insane. Small selection:

- CEO of JP Morgan Wealth Management

- Chief Advertising Officer of NY Times

- Head of AI Transformation at Salesforce

- Head of Data at Starwood Capital

- Head of Innovation at San Antonio Spurs

- AI Lead at PGA Tour

It's a 5-hour intensive for Fortune 500 leaders to learn how to harness the power of Claude Code by building real applications with it.

We currently have 2 spots left for the event.

If you are an enterprise leader & want to be considered, sign up below.

If you know an enterprise leader & think they'd love this, have them sign up below.

@jessegenet We ran 70 agents in prod. Cost was never just the API bill - retries on failures, fallback loops, redundant calls from poor state management... the real bill was 3-4x the base API cost. And that's before you catch the behaviors you never intended.
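
A rough way to see how those overheads compound into a multiple of the base API bill, assuming failed calls are retried until they succeed, redundant calls scale the call count, and fallback loops add a fixed number of extra calls, with all three effects multiplying. `cost_multiplier` and the example rates are hypothetical and illustrative, not measured from the agents in this post.

```python
def cost_multiplier(retry_fail_rate: float, redundant_per_call: float,
                    fallback_rate: float, fallback_calls: int) -> float:
    """Expected billed calls per intended call, relative to 1.0 (the base API cost)."""
    if not 0 <= retry_fail_rate < 1:
        raise ValueError("retry_fail_rate must be in [0, 1)")
    # Retrying until success: a geometric number of attempts with mean 1 / (1 - p).
    attempts_per_call = 1 / (1 - retry_fail_rate)
    # Poor state management re-issues calls the agent already made.
    logical_calls = 1 + redundant_per_call
    # A fraction of calls fall into a fallback loop that issues extra calls.
    fallback_factor = 1 + fallback_rate * fallback_calls
    # Assumes retries and redundancy also apply to the fallback calls.
    return logical_calls * fallback_factor * attempts_per_call


if __name__ == "__main__":
    # Illustrative inputs only.
    print(round(cost_multiplier(0.2, 0.5, 0.25, 3), 2))
```

With a 20% retry rate, 0.5 redundant calls per intended call, and 25% of calls triggering a 3-call fallback loop, the multiplier is 1.5 × 1.75 × 1.25, about 3.3x, even though none of the three overheads looks alarming on its own.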

Yup. If you want your agent to feel human and to get a lot done, it has a steep true price right now

As people get better at setting up agents, providers will throttle usage and dial in their pricing so they don’t lose money

Running local models will be key to AI autonomy

Ryan Carson @ryancarson

The cost to run a truly useful Chief of Staff @openclaw on Opus 4.6 is $100-200 per day on the API.

@TechByMarkandey Memory is one piece. The harder part is when your agent confidently acts on the wrong thing. Saw this with 70+ agents in prod - silent wrong-memory failures were brutal. How does ByteRover handle hallucinated memories at scale?

Most AI agents forget. This one doesn’t.

Hermes Agent by Nous Research just got a serious upgrade with ByteRover - turning it from a stateless tool into something that actually learns over time.

⚡ What stands out:

• Built on a production-proven memory system (30K+ downloads in week 1)

• >92% retrieval accuracy across long-running sessions

• ~1.6s retrieval — often no LLM call needed

• Fully local by default (with optional cloud sync)

• 50–70% token cost savings

But the real shift?

This isn’t just “better memory.”

It’s a move toward agents with persistent, evolving intelligence.

Instead of re-prompting every time, your agent remembers context, decisions, and logic even months later.

If you’re building with AI agents, this is worth paying attention to.

Try it yourself: github.com/campfirein/byt…

andy nguyen @kevinnguyendn

The vibe coding community is the fastest-growing community in CT right now

And it's not even really a community

It's just a bunch of people doing the same thing at the same time and posting about it

The vibe coding community formed without a Discord, a token or a roadmap

Ironically, that's more community than 99% of projects with a "community manager" have ever achieved

@tammireddy @AnthropicAI Agree. The moment itself is unavoidable. But how you handle it is a choice. Credit + acknowledgement from @AnthropicAI shows you can draw a line without burning the builders who bet on you. That's the blueprint. How many platforms are actually ready for that?

Every AI platform will have its OpenClaw moment.

The question is what they do next.

@AnthropicAI chose credit and acknowledgement. That's not nothing.

@RoundtableSpace Built 70+ agents with @AnthropicAI tools. Only 7 hit prod. The $200/mo vs $19/mo debate misses the point - the real cost is agents failing silently in production. What's your test coverage before you ship?

Claude Code is $200/month. GitHub Copilot is $19/month.

Jack Dorsey's company just open-sourced a free alternative with 35,000 GitHub stars.

It's called Goose.

- Works with any LLM — Claude, GPT, Gemini, Llama, DeepSeek

- Reads and edits your entire codebase

- Runs shell commands and installs dependencies

- Executes and debugs code automatically

- Desktop, CLI, and web interface

- Written in Rust. No bloat.

Block is a $40 billion company. They built it for their own engineers then gave it to everyone.

Cursor 3 ships parallel AI agents. @cursor_ai Each new parallel run = one more thing that can go wrong in prod. I built 70 agents at a financial services company. Only 7 shipped safely. Nobody was stress testing at scale. Who is doing that today?
