this diagram took my good 1.5 hours😭

Antaripa Saha

5.3K posts

@doesdatmaksense

building @specstoryai | consulting companies in applied ai | doing maths in my free time

this diagram took my good 1.5 hours😭

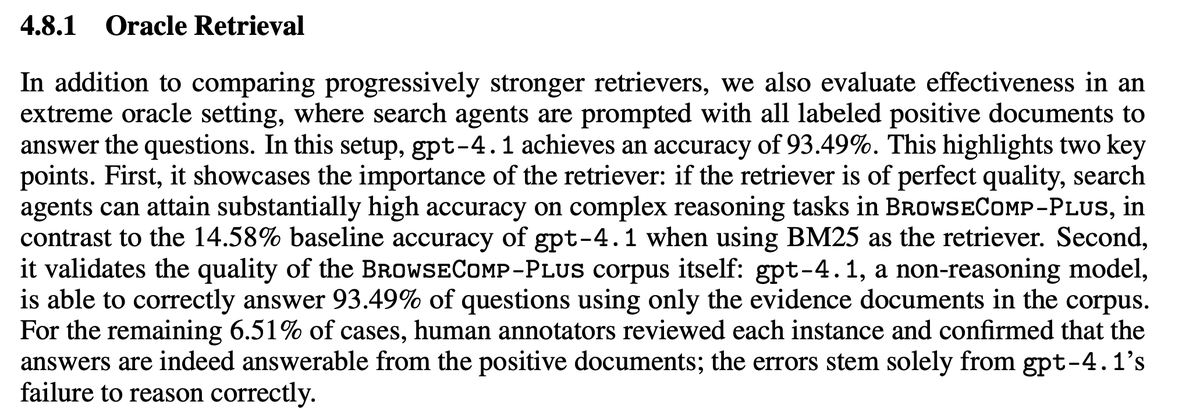

BrowseComp-Plus, perhaps the hardest popular deep research task, is now solved at nearly 90%... ... and all it took was a 150M model ✨ Thrilled to announce that Reason-ModernColBERT did it again and outperform all models (including models 54× bigger) on all metrics

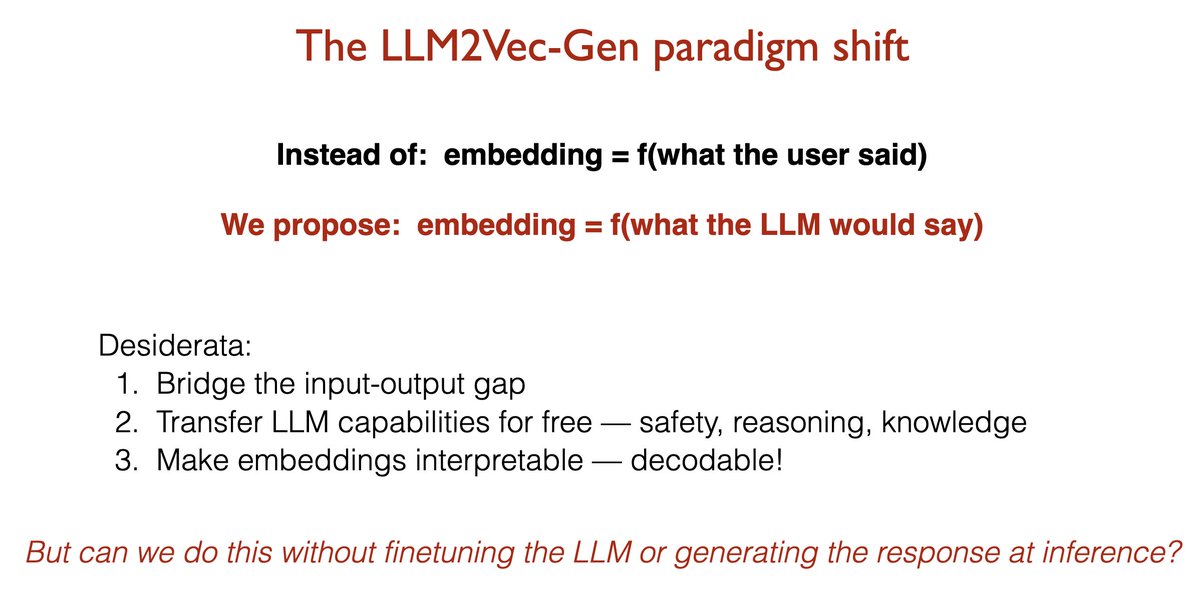

Your LLM already knows the answer. Why is your embedding model still encoding the question? 🚨Introducing LLM2Vec-Gen: your frozen LLM generates the answer's embedding in a single forward pass — without ever generating the answer. Not only that, the frozen LLM can decode the embedding back into text. 🏆 SOTA self-supervised embeddings 🛡️ Free transfer of instruction-following, safety, and reasoning

Droid is the best agent harness based on outcomes, closely followed by Codex. I have stopped using everything else, mostly except for a few one offs. I would highly recommend trying it out with BYOK + VibeProxy github.com/automazeio/vib… You can use all your subs in there

We’ve identified industrial-scale distillation attacks on our models by DeepSeek, Moonshot AI, and MiniMax. These labs created over 24,000 fraudulent accounts and generated over 16 million exchanges with Claude, extracting its capabilities to train and improve their own models.