Sequoia Capital

8.5K posts

@sequoia

We help the daring build legendary companies from idea to IPO and beyond.

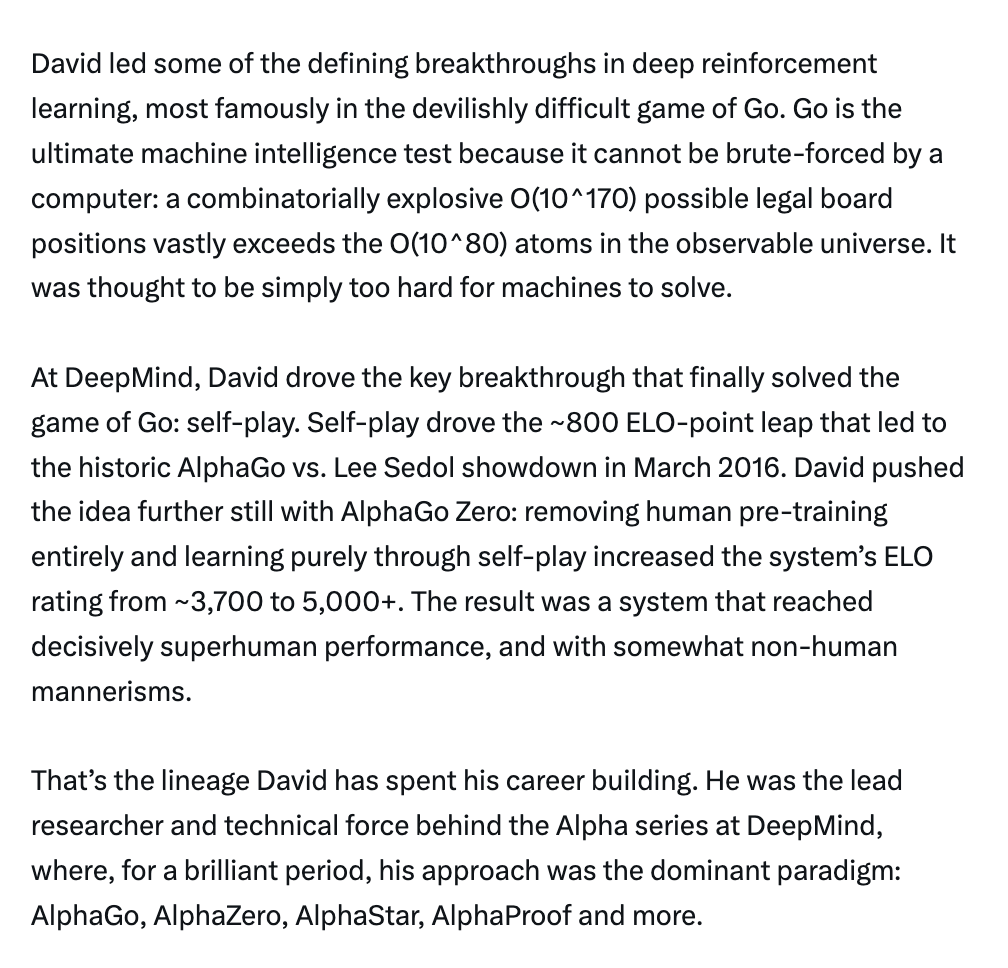

Sir @demishassabis has a mind for synthesis. His favorite book is about a grand theory of everything. His preferred philosophers are seen by some as opposites. His life's work ranges from board games to Nobel-winning science.

We're grateful to have hosted Demis and his @GoogleDeepMind team at @sequoia AI Ascent last week for a fireside chat. He kindly gave us permission to share this, and you can watch the full video here:

00:00 Intro
00:38 The Common Thread
01:29 Games as AI Training
02:59 Startup Advice 1.0
04:39 Founding DeepMind
07:25 DeepMind and AGI
08:52 AI for Science
10:37 Biology Breakthroughs and Isomorphic
12:42 New Sciences
20:29 Philosophy

Waymo vehicles have a 13x lower rate of serious accidents than human drivers. Six generations of hardware. Thousands of innovations in AI and software. 170M+ fully autonomous miles.

For 20 years @Dmitri_Dolgov has been focused on making driving safer. While dozens of companies have come and gone, Dmitri persisted. He knows that getting to "good" is easy, getting to "great" is hard and getting to "super human safety" is extreme. Dmitri chooses extreme.

My favorite part about him? He’s extremely humble about all this and remains focused on what's next: scaling.

We're grateful to have hosted Dmitri and his @Waymo team at @sequoia AI Ascent last week. Full video here:

00:00 Introduction
01:25 Origins
02:45 DARPA Challenge
04:18 Google Self Driving
05:44 Startup Grind
07:11 The AV Hype Cycle
09:47 Waymo World Model
12:41 End-to-End
15:28 Gen 6 Hardware and Scaling
19:53 Safety Stories and the Future

@karpathy and I are back! At @sequoia AI Ascent 2026. And a lot has changed. Last year, he coined “vibe coding”. This year, he’s never felt more behind as a programmer. The big shift: vibe coding raised the floor. Agentic engineering raises the ceiling. We talk about what it means to build seriously in the agent era. Not just moving faster. Building new things, with new tools, while preserving the parts that still require human taste, judgment, and understanding.