Cunxiao Du

55 posts

This is similar to what OpenAI did in PRM800k (2023). I think this is the right way to collect data. github.com/openai/prm800k…

🚀 We propose Reptile, a Terminal Agent 🤖 that lets you interact with an LLM agent directly in your terminal. The agent can execute any command or custom CLI tool to accomplish tasks, and users can define their own tools and commands for the agent to use.
✨ What Makes Reptile Special? Compared with other CLI agents (e.g., Claude Code and Mini SWE-Agent), Reptile stands out for the following reasons:
⚡️ Human-in-the-Loop Learning: Users can inspect every step and provide prompt feedback, i.e., give feedback under the USER role or edit the LLM generation under the ASSISTANT role. These interactions will be used for SFT and RL training of the model.
💻 Terminal-only beyond Bash-only: Simple, stateful execution, which is more efficient than bash-only (you don’t need to specify the environment in every command). It doesn’t require the complicated MCP protocol, just a naive bash tool under the REPL protocol.
GitHub: github.com/terminal-agent…
Homepage: terminal-agent.github.io/blog/workflow/
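
To make the "stateful vs. bash-only" point concrete, here is a minimal sketch of the difference. It is not Reptile's implementation: `StatefulShell`, `run_stateless`, and the `REPTILE_DEMO_VAR` variable are hypothetical names made up for illustration, and the sketch assumes `bash` is available on the PATH. It keeps one long-lived shell process so that environment changes persist between commands, and contrasts that with spawning a fresh shell per command.

```python
import subprocess
import uuid


class StatefulShell:
    """Hypothetical helper: one long-lived bash process shared by all commands."""

    def __init__(self):
        self.proc = subprocess.Popen(
            ["bash"],
            stdin=subprocess.PIPE,
            stdout=subprocess.PIPE,
            stderr=subprocess.STDOUT,
            text=True,
            bufsize=1,
        )

    def run(self, command: str) -> str:
        # A unique marker tells us where this command's output ends.
        marker = f"__DONE_{uuid.uuid4().hex}__"
        self.proc.stdin.write(f"{command}\necho {marker}\n")
        self.proc.stdin.flush()
        chunks = []
        for line in self.proc.stdout:
            if marker in line:
                # Keep any output that shared a line with the marker.
                chunks.append(line.split(marker)[0])
                break
            chunks.append(line)
        return "".join(chunks)

    def close(self):
        self.proc.stdin.close()
        self.proc.wait()


def run_stateless(command: str) -> str:
    """Bash-only style: every command starts in a brand-new shell."""
    result = subprocess.run(["bash", "-c", command], capture_output=True, text=True)
    return result.stdout


if __name__ == "__main__":
    shell = StatefulShell()
    shell.run("export REPTILE_DEMO_VAR=hello")
    print("stateful :", shell.run("echo ${REPTILE_DEMO_VAR:-unset}").strip())
    shell.close()

    run_stateless("export REPTILE_DEMO_VAR=hello")
    print("stateless:", run_stateless("echo ${REPTILE_DEMO_VAR:-unset}").strip())
```

In the stateless variant, the `export` is lost as soon as its shell exits, so every later command would have to set up the environment again. The persistent session avoids that repetition.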

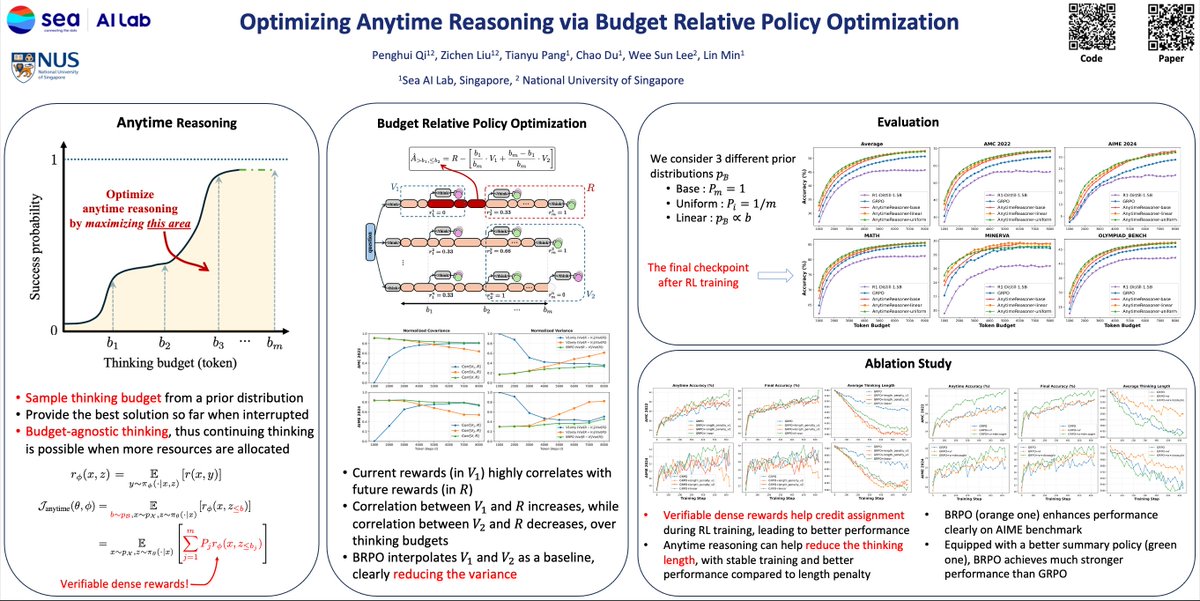

👀Optimizing Anytime Reasoning via Budget Relative Policy Optimization👀 🚀Our BRPO leverages verifiable dense rewards, significantly outperforming GRPO in both final and anytime reasoning performance.🚀 📰Paper: arxiv.org/abs/2505.13438 🛠️Code: github.com/sail-sg/Anytim…

I wrote a blog post: "The Parallel Decoding Trilemma". Parallel decoding research has tried to optimize the speed-quality tradeoff, but I believe quality should be decomposed into fluency and diversity.

@ducx_du @sedielem I'm obviously being naive here, but I'm thinking something like an outer loop "big picture/outlining" diffusion-like approach, with an inner loop "detail oriented" autoregressive model. Just basing this on how I read (skim + focus) and also write (outline + details).

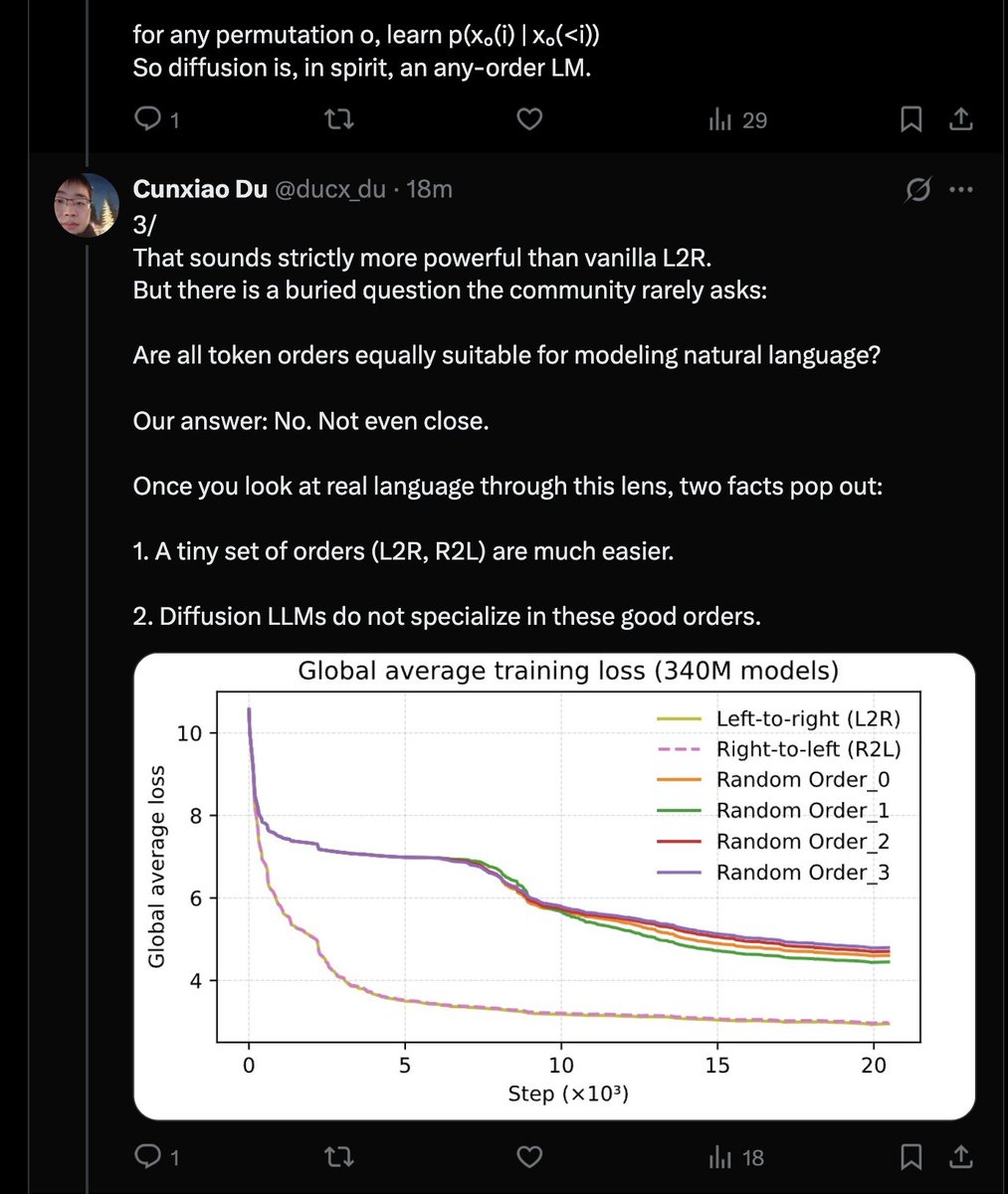

Diffusion LLMs (DLLMs) can do “any-order” generation, which is in principle more flexible than left-to-right (L2R) LLMs. Our main finding is uncomfortable: ➡️ In real language, this flexibility backfires: DLLMs become worse probabilistic models than L2R / R2L AR LMs. This thread is about why “any order” turns into a curse. (Work with Xinyu Yang @Xinyu2ML, Min Lin @mavenlin, Chao Du @duchao0726 and the team.) Blog Link: notion.so/Understanding-…

🚨Announcing our #ICLR2025 Oral! 🔥Diffusion LMs are on the rise for parallel text generation! But unlike autoregressive LMs, they struggle with quality, fixed-length constraints & lack of KV caching. 🚀Introducing Block Diffusion—combining autoregressive and diffusion models for the best of both worlds! 👇1/7
