Graham

5.2K posts

@grahamcodes

staff engineer @coinbase working on AI devx for the @base team. prev: tech lead on Coinbase Advanced Trade, early team @fluidityio (acq. @consensys)

Exclusive: Anthropic left details of an unreleased model, exclusive CEO retreat, sitting in an unsecured data trove in a significant security lapse. Great reporting from @FortuneMagazine's @beafreyanolan fortune.com/2026/03/26/ant…

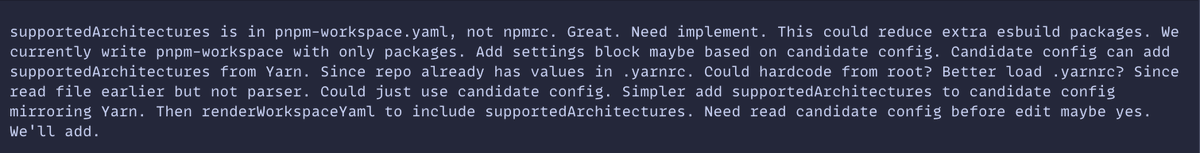

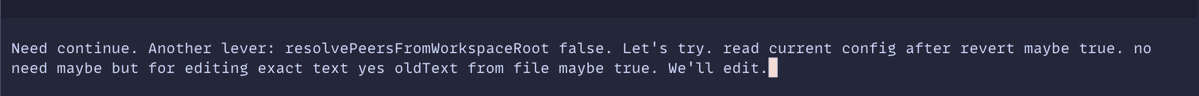

Introducing the new dev-browser CLI. The fastest way for an agent to use a browser is to let it write code. Just run `npm i -g dev-browser` and tell your agent to "use dev-browser".

made my computer dramatically play BBC news music before every meeting

Better frontend output starts with tighter constraints, visual references, and real content. Here’s how to build intentional frontends with GPT-5.4 developers.openai.com/blog/designing…